The world of artificial intelligence is a whirlwind of conflicting narratives: a booming gold rush, a precarious bubble, a job-stealing menace, and yet a technology still fumbling with basic tasks. Cutting through this cacophony is the 2026 AI Index from Stanford University’s Institute for Human-Centered Artificial Intelligence, an annual report card on the field. Despite widespread predictions that development would hit a wall, the report finds that top AI models are still improving, and at an accelerating pace. Adoption is outpacing that of both the personal computer and the internet. AI companies are achieving revenues that dwarf those of previous technological booms, but this rapid ascent is fueled by investments in data centers and cutting-edge chips that run into the hundreds of billions of dollars. Meanwhile, the systems designed to measure AI’s progress, govern its development, and assess its impact on the job market are visibly struggling to keep up. AI is sprinting, and the rest of the world is scrambling to keep pace.

This breakneck speed, however, comes with a significant environmental and logistical cost. The global network of AI data centers now consumes a staggering 29.6 gigawatts of power, a demand comparable to the peak electricity needs of the entire state of New York. The water footprint of running a single advanced model like OpenAI’s GPT-4o is projected to exceed the annual drinking water requirements of 12 million people. Compounding these concerns is the alarming fragility of the global chip supply chain. While the United States hosts the majority of the world’s AI data centers, the manufacturing of nearly all leading AI chips is concentrated in the hands of a single Taiwanese company, TSMC, highlighting a critical dependency. The data unequivocally points to a technology evolving at a speed that outstrips our current capacity for management and foresight. Here’s a deeper dive into the pivotal findings from this year’s comprehensive report.

The US and China are nearly tied in the AI race, with geopolitical implications intensifying.

In a highly competitive arena with significant geopolitical ramifications, the United States and China are now virtually neck and neck in AI model performance, according to Arena, a platform that crowdsources comparisons of large language model outputs. OpenAI held a commanding lead with ChatGPT in early 2023, but that advantage diminished as Google and Anthropic introduced their own advanced models in 2024. In February 2025, R1, a model developed by the Chinese lab DeepSeek, briefly matched the performance of the leading US model, ChatGPT. As of March 2026, Anthropic holds the top position, closely followed by xAI, Google, and OpenAI. Models from Chinese developers, including DeepSeek and Alibaba, are only marginally behind. With the gap between the leading models now razor-thin, the competition is shifting to cost-effectiveness, reliability, and demonstrable real-world utility.

The AI Index report also highlights distinct advantages held by each country. The US has more capable AI models, greater access to capital, and an estimated 5,427 data centers, more than ten times as many as any other nation. China, by contrast, leads in the volume of AI research publications, patents, and advancements in robotics. As the rivalry escalates, leading companies like OpenAI, Anthropic, and Google are increasingly withholding details about their training code, parameter counts, and dataset sizes. This lack of transparency, as noted by Yolanda Gil, a computer scientist at the University of Southern California and a co-author of the report, poses a significant challenge for independent researchers seeking to understand and improve the safety of AI models.

AI models are advancing at an astonishing rate, surpassing human experts in many domains.

Contrary to forecasts of a development plateau, AI models continue their relentless improvement. By several metrics, they now match or even exceed human expert performance on tests designed to assess PhD-level understanding in science, mathematics, and language. The SWE-bench Verified benchmark, which evaluates AI models in software engineering, saw top scores dramatically increase from approximately 60% in 2024 to nearly 100% in 2025. In 2025, an AI system demonstrated the capability to autonomously generate a weather forecast.

“I am stunned that this technology continues to improve, and it’s just not plateauing in any way,” stated Gil. However, AI still faces considerable limitations in other areas. Because they are trained on vast datasets of text and images rather than through direct interaction with the physical world, AI models exhibit what is termed “jagged intelligence”: strong performance in some areas alongside marked weakness in others. Robotics remains in its nascent stages, with success rates in household tasks averaging only 12%. Self-driving car technology is more mature, with Waymo vehicles operating in five US cities and Baidu’s Apollo Go providing rides in China. AI’s integration into professional fields like law and finance is still developing, with no single model yet dominating these sectors.

The methods used to test AI are fundamentally flawed and struggling to keep pace.

The impressive reports of AI progress should be viewed with a degree of skepticism. The benchmarks designed to track AI advancement are increasingly inadequate as models rapidly surpass their established limits, according to the Stanford report. Some benchmarks are poorly constructed; for instance, a widely used test for mathematical abilities exhibits a 42% error rate. Others are susceptible to manipulation, as models can be trained on benchmark test data, allowing them to achieve high scores without genuine cognitive improvement.

Furthermore, because AI is rarely deployed in the same manner it is tested, strong benchmark performance does not always translate to effective real-world application. For complex, interactive AI systems like agents and robots, dedicated benchmarks are still largely absent. Adding to these challenges, AI companies are becoming less transparent about their training methodologies, and independent evaluations sometimes reveal discrepancies compared to company-reported results. “A lot of companies are not releasing how their models do in certain benchmarks, particularly the responsible-AI benchmarks,” noted Gil. “The absence of how your model is doing on a benchmark maybe says something.”

AI is beginning to significantly impact the job market.

Just three years after becoming widely accessible, AI is used by more than half the global population, a rate of adoption that surpasses that of both the personal computer and the internet. An estimated 88% of organizations use AI in their operations, and four out of five university students use AI tools.

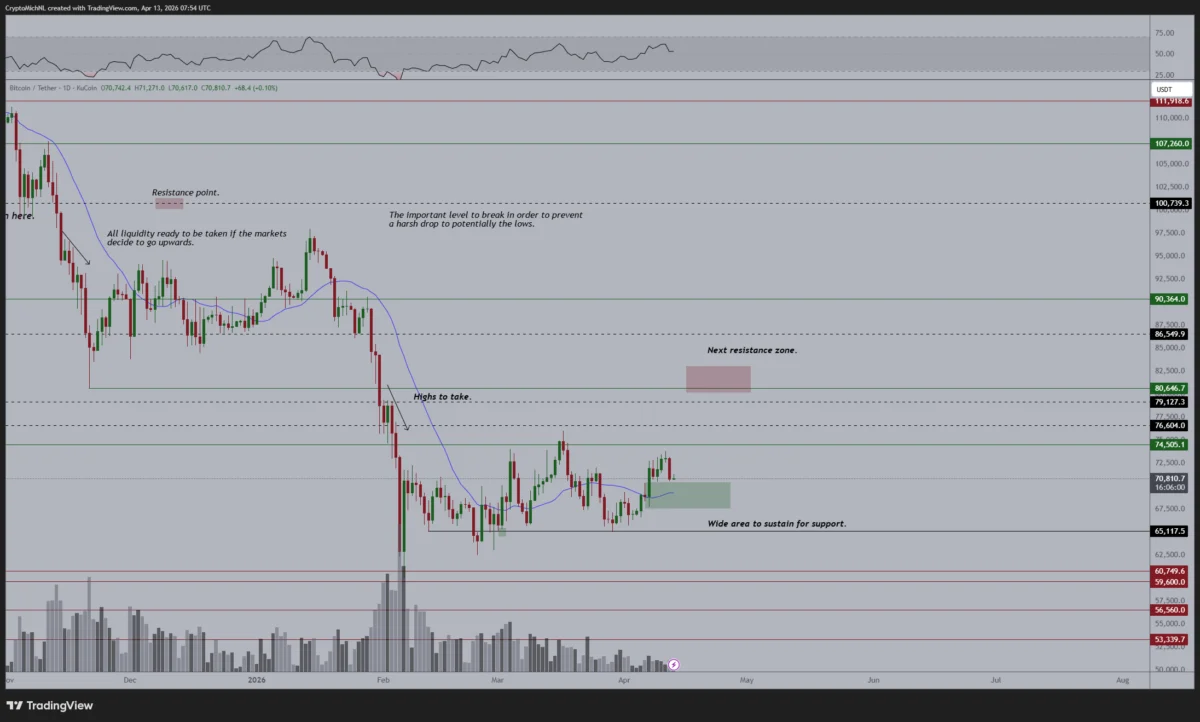

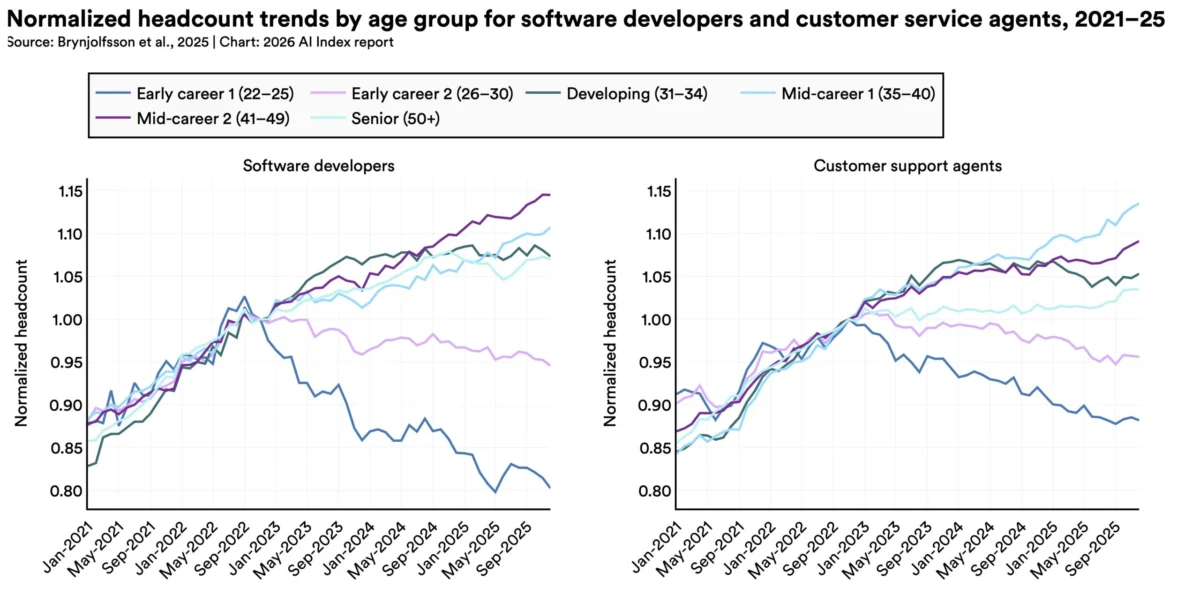

AI deployment is still in its early stages, and its precise impact on employment is difficult to quantify, but preliminary studies suggest that AI is starting to affect younger workers in specific professions. A 2025 study by Stanford economists indicated a nearly 20% decline in employment for software developers aged 22 to 25 since 2022. Macroeconomic factors may also contribute to this trend, but AI appears to be a significant factor.

Employers anticipate further job cuts. A 2025 McKinsey & Company survey revealed that one-third of organizations expect AI to lead to workforce reductions in the upcoming year, particularly in service, supply chain operations, and software engineering. Research cited by the AI Index shows that AI is boosting productivity by 14% in customer service and 26% in software development. However, these productivity gains are not observed in tasks requiring higher levels of judgment. Overall, it remains too early to gauge the broader economic ramifications of AI.

Public sentiment towards AI is complex, marked by both optimism and anxiety.

Around the world, people are both hopeful and uneasy about AI. An Ipsos survey cited in the AI Index found that 59% of people believe AI will yield more benefits than drawbacks, while 52% admit to feeling nervous about it.

A notable divergence exists between expert and public perceptions of AI’s future, according to a Pew survey. The most significant disparity lies in the future of work: while 73% of experts anticipate a positive impact on how people perform their jobs, only 23% of the American public shares this view. Experts are also more optimistic than the general public regarding AI’s influence on education and healthcare. However, both groups concur that AI is likely to negatively affect elections and personal relationships.

Of the countries surveyed, Americans express the least confidence in their government’s ability to regulate AI effectively, according to another Ipsos survey. More Americans are concerned that federal AI regulation will be insufficient than that it will be overly stringent.

Governments are struggling to establish effective AI regulation amidst rapid technological advancement.

Governments worldwide are grappling with the challenge of regulating AI, though some incremental progress was made last year. The first prohibitions under the European Union’s AI Act took effect, banning the use of AI for predictive policing and emotion recognition. Japan, South Korea, and Italy also enacted national AI legislation. In contrast, the US federal government adopted a deregulatory stance, with President Trump issuing an executive order aimed at limiting states’ authority to regulate AI.

Despite this federal action, US state legislatures passed a record 150 AI-related bills. California enacted landmark legislation, including SB 53, which mandates safety disclosures and whistleblower protections for AI model developers. New York passed the RAISE Act, requiring AI companies to publicly disclose safety protocols and report critical safety incidents.

However, for all the legislative activity, Gil emphasizes that regulation is lagging behind the technology due to a fundamental lack of understanding of how AI operates. “Governments are cautious to regulate AI because… we don’t understand many things very well,” she stated. “We don’t have a good handle on those systems.”