The 2026 AI Index report, released today, presents a wealth of striking statistics that can either validate your gut feelings or challenge them entirely. One prominent takeaway is the sheer scale of the United States’ commitment to AI development: the country hosts 5,427 data centers, more than ten times as many as any other nation. That build-out underscores a global race for AI supremacy, with significant economic and geopolitical implications. The report also exposes a critical vulnerability in the AI industry’s hardware supply chain. It notes that "a single company, TSMC, fabricates almost every leading AI chip, making the global AI hardware supply chain dependent on one foundry in Taiwan," a frankly wild finding that points to a potential choke point for the entire sector.

However, the overarching narrative of the 2026 AI Index is one of profound inconsistency in the current state of artificial intelligence. As my colleague Michelle Kim summarized in her accompanying piece about the report, "If you’re following AI news, you’re probably getting whiplash. AI is a gold rush. AI is a bubble. AI is taking your job. AI can’t even read a clock." The Stanford report illustrates this starkly: Google DeepMind’s top reasoning model, Gemini Deep Think, earned a gold medal at the International Mathematical Olympiad, yet it fails to read analog clocks roughly half the time. This dichotomy, in which AI performs at the highest level on complex logical tasks while struggling with seemingly elementary ones, is a defining characteristic of its present evolution.

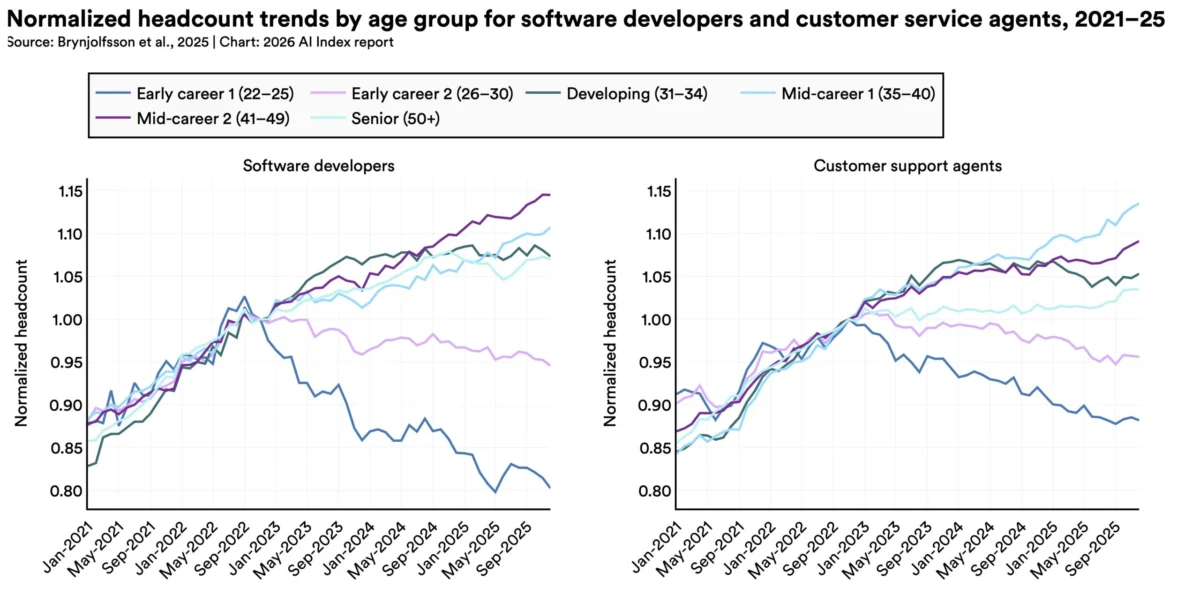

While Michelle’s article captures the report’s key highlights, I keep returning to one question: why is it so hard to pin down the actual state of AI today? The widest gap appears to lie between AI experts and the general public. As the authors of the AI Index put it, "AI experts and the general public view the technology’s trajectory very differently." The split is clearest on employment: 73% of U.S. experts take a positive view of AI’s effect on jobs, compared with just 23% of the general public, a gap of 50 percentage points. Similarly large divides appear in attitudes toward AI’s impact on the economy and healthcare.

This enormous gap raises a critical question: what do these experts, predominantly U.S.-based researchers who participated in AI conferences in 2023 and 2024, understand about AI that the broader public does not? My hypothesis is that experts and non-experts hold contrasting views largely because they have had very different experiences with the technology. A software developer recently posted on X, "The degree to which you are awed by AI is perfectly correlated with how much you use AI to code." While this statement might carry a hint of jest, it points to a significant underlying truth.

The most advanced AI models from leading research institutions are now exceptionally adept at generating code. Technical tasks like coding have definitive right or wrong outcomes, which makes it comparatively easy to train AI models to perform them well. Furthermore, the demonstrable profitability of AI-powered coding tools has given model developers an incentive to pour substantial resources into improving them. Consequently, people who use these tools for coding or other technical work are experiencing AI at its most refined and capable.

Conversely, outside these specialized use cases, AI performance is more varied and less uniformly impressive. Large language models (LLMs) still make what have become known as "dumb mistakes." The term "jagged frontier" describes this pattern: AI models excel in certain domains while lagging significantly in others.

The influential AI researcher Andrej Karpathy echoed this sentiment, noting on X, "Judging by my [timeline] there is a growing gap in understanding of AI capability." He further elaborated that "power users" – defined as those who employ LLMs for coding, mathematics, or research – not only stay abreast of the latest model releases but are often willing to invest substantial sums, such as $200 per month, for access to the most sophisticated versions. Karpathy emphasized the "staggering" nature of the improvements witnessed in these specific domains over the past year.

Because of this rapid pace of advancement, a user paying for a premium coding service like Claude Code is effectively interacting with a vastly different and more capable technology than someone who, six months earlier, used a free version of Claude for a less demanding task like wedding planning. As a result, these two groups are, in essence, talking past each other, their perceptions shaped by entirely different encounters with AI.

Where does this leave us? I believe we are living with two coexisting realities. On one hand, AI is more sophisticated and capable than many people realize. On the other hand, it continues to struggle with many tasks that matter to a large share of the population, and this limitation may persist. Anyone making predictions or significant investments regarding the future of AI, whether optimistic or pessimistic, must account for this duality. The future of AI is not a single, uniform trajectory but a mix of remarkable advances and persistent shortcomings, experienced differently by different groups of users. Understanding this "jagged frontier" is essential to making sense of today’s divided opinions about artificial intelligence.