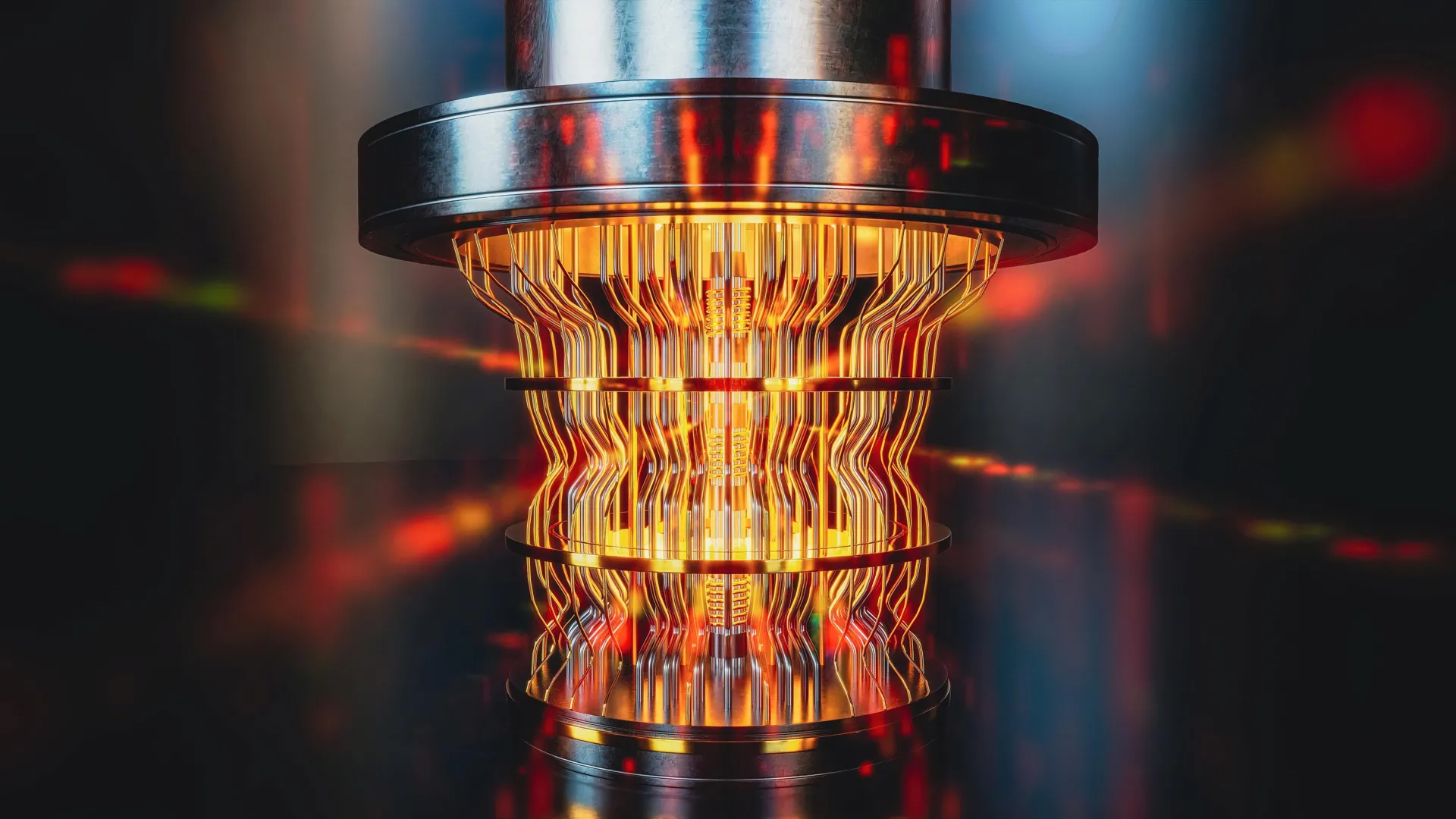

The global race to build the first reliable, large-scale commercial quantum computer has brought a pressing question into focus: as these devices begin to produce answers to problems considered intractable for classical machines, how can anyone verify that those answers are correct? A recent study led by researchers at Swinburne University sets out to address exactly this problem.

Verifying quantum computations is difficult because of the nature of the problems these machines are designed to solve. As lead author Alexander Dellios, a Postdoctoral Research Fellow from Swinburne’s Centre for Quantum Science and Technology Theory, explains, "There exists a range of problems that even the world’s fastest supercomputer cannot solve, unless one is willing to wait millions, or even billions, of years for an answer." Timescales like these make direct comparison against a classical computation impractical as a means of validation. Therefore, Dellios says, "in order to validate quantum computers, methods are needed to compare theory and result without waiting years for a supercomputer to perform the same task."

The Swinburne team has developed new techniques to confirm the accuracy of a particular class of quantum devices known as Gaussian Boson Samplers (GBS). GBS machines use photons, the fundamental particles of light, to perform probability calculations so complex that even the most powerful classical supercomputers would need millennia to complete them. The new methods offer a far faster alternative. "In just a few minutes on a laptop," Dellios says, "the methods developed allow us to determine whether a GBS experiment is outputting the correct answer and what errors, if any, are present."

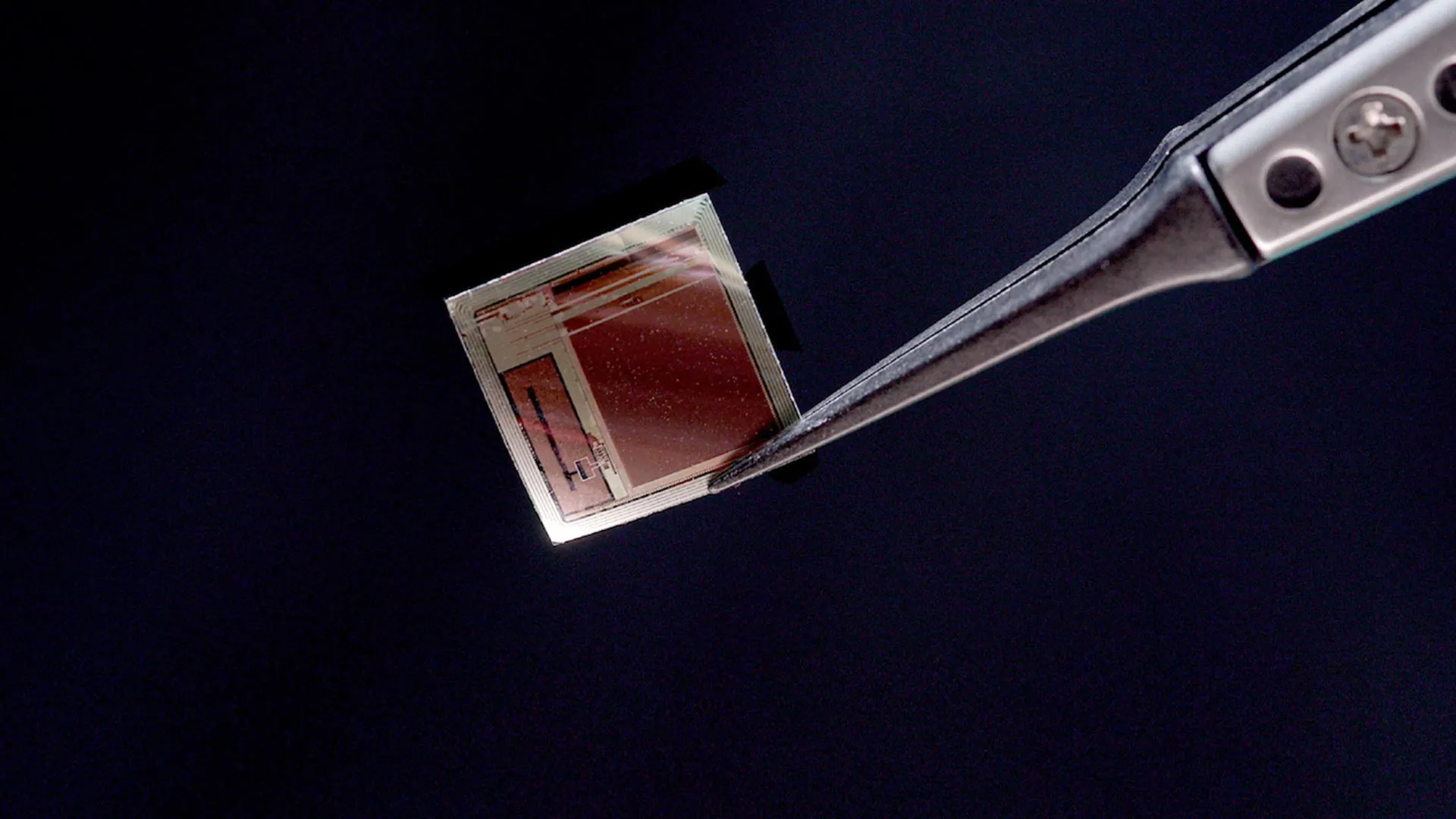

To test their approach, the researchers applied it to a recently published GBS experiment that would take 9,000 years to reproduce using the most advanced supercomputers currently available. The analysis showed that the probability distribution generated by the experiment did not match the intended target. It also uncovered "extra noise" in the experiment that had not previously been accounted for. Noise is a common problem in quantum systems and degrades the quality and reliability of their computations.
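To make the idea of "comparing theory and result" concrete, here is a minimal sketch of a generic Pearson chi-square comparison between measured outcome counts and a target probability distribution. This is not the method used in the Swinburne study, which the article does not describe in detail; it is a textbook statistical check on a toy four-outcome problem. The distributions, the noise model (the target mixed with a uniform distribution), and the function names `chi_square_statistic` and `sample_counts` are all assumptions chosen for illustration.

```python
import random


def chi_square_statistic(observed_counts, target_probs):
    """Pearson chi-square statistic between observed counts and a target distribution."""
    total = sum(observed_counts)
    stat = 0.0
    for count, p in zip(observed_counts, target_probs):
        expected = total * p  # count expected if the device sampled the target exactly
        stat += (count - expected) ** 2 / expected
    return stat


def sample_counts(probs, shots, rng):
    """Draw `shots` outcomes from `probs` and return a histogram of counts."""
    outcomes = rng.choices(range(len(probs)), weights=probs, k=shots)
    counts = [0] * len(probs)
    for outcome in outcomes:
        counts[outcome] += 1
    return counts


# Hypothetical target distribution over four outcome bins (illustrative numbers only).
target = [0.4, 0.3, 0.2, 0.1]

# Hypothetical noisy device: the target mixed with a uniform distribution.
noise = 0.2
noisy = [(1 - noise) * p + noise / len(target) for p in target]

rng = random.Random(0)  # fixed seed so the run is repeatable
shots = 10_000
ideal_counts = sample_counts(target, shots, rng)
noisy_counts = sample_counts(noisy, shots, rng)

# For a device that matches the target, chi2 / dof should be of order 1.
dof = len(target) - 1
print("ideal device chi2/dof:", chi_square_statistic(ideal_counts, target) / dof)
print("noisy device chi2/dof:", chi_square_statistic(noisy_counts, target) / dof)
```

In this toy setting, a statistic far above the number of degrees of freedom signals that the sampled distribution departs from the target. A real GBS experiment has far too many possible outcomes to tabulate this way, which is why scalable validation methods are needed in the first place.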

The discovery of this discrepancy and the identification of previously uncharacterized noise raise further questions that the researchers are now eager to explore. The next crucial step in their investigation involves determining whether the unexpected probability distribution observed is itself a computationally difficult phenomenon to reproduce, or if the identified errors were significant enough to cause the device to lose its fundamental "quantumness." The concept of "quantumness" refers to the unique properties of quantum mechanics, such as superposition and entanglement, that give quantum computers their extraordinary power. If errors are causing a loss of quantumness, it indicates a fundamental flaw in the quantum operation itself.

This research could influence the development of large-scale, error-free quantum computers suitable for widespread commercial use, a goal to which Dellios and his team are committed. He describes the scale of the task: "Developing large-scale, error-free quantum computers is a herculean task that, if achieved, will revolutionize fields such as drug development, AI, cyber security, and allow us to deepen our understanding of the physical universe."

Dellios further emphasizes the critical role of robust validation in realizing this ambitious vision. "A vital component of this task is scalable methods of validating quantum computers," he asserts, "which increase our understanding of what errors are affecting these systems and how to correct for them, ensuring they retain their ‘quantumness’." This focus on understanding and correcting errors is paramount. Quantum computers are inherently sensitive to their environment, and even minor disturbances can lead to computational errors. Without reliable methods to detect and quantify these errors, it becomes impossible to build trust in their results, hindering their progress from laboratory curiosities to indispensable tools.

The development of techniques that can swiftly and accurately assess the output of quantum devices like GBS is not merely an academic exercise; it is a foundational requirement for progress. It allows researchers to iterate and improve their quantum hardware and algorithms with greater speed and confidence. Imagine a scenario where a quantum computer performs a complex simulation for drug discovery, and the results are presented. Without a way to verify the accuracy of these results, the entire endeavor, no matter how promising, would be built on shaky ground. The Swinburne University study provides precisely this missing piece of the puzzle, offering a tangible method for ensuring the integrity of quantum computations.

The "extra noise" identified in the GBS experiment is an example of the subtle issues that can affect quantum systems. In general, such noise can arise from unwanted interactions with the environment, imperfections in the quantum hardware, or limitations in the control mechanisms used to manipulate the quantum states, whether those are carried by quantum bits (qubits) or, as in a GBS, by photons. Once the noise has been detected and characterized, scientists can work to mitigate it by improving the physical isolation of the quantum system, refining the control pulses, or developing more sophisticated error correction codes.

The ability to determine if an observed deviation from the expected result is due to a computationally hard problem or a loss of quantumness is a significant distinction. If it’s the former, it could indicate that the quantum computer is indeed performing a task that is difficult for classical computers, and the challenge lies in understanding how to interpret the output. If it’s the latter, it points to a more fundamental problem with the quantum device’s ability to harness quantum phenomena. This nuanced understanding is essential for guiding future research and development efforts.

Ultimately, this research is a step toward realizing the potential of quantum computing. By providing a scalable and efficient means of verifying quantum computations, it helps build the trust and reliability that commercialization of the technology will depend on. As Dellios and his team continue their work, they are laying the groundwork for quantum computers that are not only powerful but also dependable. The ability to confidently say, "Yes, this quantum computer is correct," is a quiet revolution in itself, and one that will be needed before these machines can be trusted with humanity’s most pressing challenges.