The current enterprise AI discourse often fixates on the race between foundation models, comparing benchmarks and marginal capability gains of behemoths like GPT and Gemini. However, the true, enduring competitive advantage lies not in the models themselves, but in the underlying infrastructure that governs how intelligence is applied, refined, and scaled. This is the concept of treating AI not as an on-demand utility, but as a foundational operating layer – a sophisticated combination of workflow software, data capture mechanisms, iterative feedback loops, and robust governance that seamlessly integrates AI into the fabric of real-world operations and compounds value with every use.

Model providers like OpenAI and Anthropic offer highly capable general-purpose intelligence via APIs, but the service they deliver is largely stateless and only loosely tethered to the daily workflows where critical decisions are made. Their capabilities are impressive and increasingly interchangeable; the crucial distinction for enterprises is whether this intelligence resets with every prompt or accumulates and learns over time.

Incumbent organizations possess a unique advantage: the ability to embed AI as an integral operating layer. This involves instrumenting existing workflows with AI capabilities, establishing feedback loops that capture human decision-making, and implementing governance structures that transform individual tasks into repeatable, auditable policies. Within this framework, every exception, correction, and approval becomes a learning opportunity, allowing AI to continuously improve as the platform absorbs the organization’s collective work. The organizations poised to lead the enterprise AI era will be those that can embed intelligence directly into their operational platforms and instrument those platforms to generate actionable signals from ongoing work.

The prevailing narrative often champions nimble startups, envisioning them out-innovating established players by building AI-native solutions from the ground up. This narrative holds true if AI is primarily viewed as a model-centric challenge. However, for many enterprise domains, AI is fundamentally a systems problem. It involves complex integrations, permission management, rigorous evaluation, and seamless change management. In such scenarios, the advantage accrues to those who already occupy high-volume, high-stakes workflows and can leverage that position to drive continuous learning and automation.

The Inversion: AI Executes, Humans Adjudicate

Traditional service organizations are built on a straightforward architecture: human experts leverage software to perform specialized tasks. Operators log into systems, navigate intricate workflows, make critical decisions, and process numerous cases. Technology serves as the medium, but human judgment remains the ultimate product.

An AI-native platform fundamentally inverts this paradigm. It ingests a problem, applies accumulated domain knowledge, and autonomously executes tasks with high confidence. When situations demand judgment beyond its current capabilities, it intelligently routes targeted sub-tasks to human experts.
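
The routing step described above can be sketched in a few lines. This is a minimal illustration, not a description of any particular platform: the `SubTask` type, the task names, and the 0.9 threshold are all hypothetical, and a real system would calibrate confidence per task and risk level.

```python
from dataclasses import dataclass

# Hypothetical cutoff; in practice it would be tuned per task and risk level.
CONFIDENCE_THRESHOLD = 0.9


@dataclass
class SubTask:
    case_id: str
    name: str
    confidence: float  # the system's own estimate, in [0, 1]


def route(subtasks, threshold=CONFIDENCE_THRESHOLD):
    """Split sub-tasks into those executed automatically and those sent to experts."""
    auto, human = [], []
    for task in subtasks:
        (auto if task.confidence >= threshold else human).append(task)
    return auto, human


tasks = [
    SubTask("case-1", "verify_eligibility", 0.97),
    SubTask("case-1", "select_appeal_reason", 0.62),
]
auto, human = route(tasks)
print([t.name for t in auto])   # ['verify_eligibility']
print([t.name for t in human])  # ['select_appeal_reason']
```

Only the low-confidence sub-task reaches a person, so expert attention goes to the part of the case that actually needs judgment rather than to the whole case.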

This inversion of human-AI interaction is more than a user interface redesign; it requires a rich foundation of raw material. That foundation exists only when the platform is built upon years of accumulated domain expertise, comprehensive behavioral data, and deep operational knowledge.

The Three Compounding Assets Incumbents Already Possess

While AI-native startups benefit from a clean architectural slate and agility, they often struggle to manufacture the essential raw materials that make domain-specific AI defensible at scale. These critical assets include:

- Domain Expertise: Deep, nuanced understanding of a specific industry or business function, often tacit and difficult to codify.

- Behavioral Data: A rich history of how users interact with systems, make decisions, and navigate complex processes.

- Operational Knowledge: Insights into the real-world execution of tasks, including common exceptions, edge cases, and best practices.

Services companies, by their very nature, already possess all three of these critical components. However, these ingredients are not moats on their own. They become a significant competitive advantage only when a company can systematically convert its complex, often messy, operational realities into AI-ready signals and institutional knowledge. This refined knowledge is then fed back into the workflow, creating a virtuous cycle of continuous system improvement.

Codifying Expertise into Reusable Signals

In most services organizations, expertise is tacit and perishable. The most accomplished operators possess an intuitive understanding – heuristics developed over years, finely tuned edge-case instincts, and pattern recognition abilities that operate below the threshold of conscious thought.

At Ensemble, the strategic approach to tackling this challenge is through knowledge distillation. This involves the systematic conversion of expert judgment and operational decisions into machine-readable training signals.

Consider the example of healthcare revenue cycle management. Systems can be initially seeded with explicit domain knowledge. Through structured daily interactions with human operators, these systems can then progressively deepen their understanding. In Ensemble’s implementation, the system actively identifies knowledge gaps, formulates targeted questions for operators, and cross-references answers from multiple experts. This process captures not only consensus views but also the nuances of edge cases. The synthesized output forms a dynamic knowledge base that mirrors the situational reasoning behind expert-level performance.
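
One way to picture the cross-referencing step is a small aggregation that keeps both the majority answer and the dissenting ones. The sketch below is an assumption-laden illustration, not Ensemble's implementation: the `synthesize` function, the 0.6 agreement cutoff, and the sample answers are all hypothetical.

```python
from collections import Counter


def synthesize(answers, min_agreement=0.6):
    """Combine answers to one targeted question.

    answers maps a (hypothetical) expert id to that expert's answer.
    Returns the consensus answer, or None if agreement is too low,
    together with every answer that differs from the consensus.
    """
    if not answers:
        raise ValueError("at least one expert answer is required")
    counts = Counter(answers.values())
    top_answer, top_votes = counts.most_common(1)[0]
    agreement = top_votes / len(answers)
    consensus = top_answer if agreement >= min_agreement else None
    # Dissenting answers are kept, since they often point at edge cases.
    dissent = {e: a for e, a in answers.items() if a != consensus}
    return {"consensus": consensus, "agreement": agreement, "dissent": dissent}


result = synthesize({
    "expert_a": "resubmit_with_correction",
    "expert_b": "resubmit_with_correction",
    "expert_c": "file_appeal",
})
print(result["consensus"])  # resubmit_with_correction
print(result["dissent"])    # {'expert_c': 'file_appeal'}
```

Keeping the minority answer instead of discarding it is the point of the design: the disagreement is a candidate edge case to follow up with another targeted question.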

Turning Decisions into a Learning Flywheel

Once a system achieves a sufficient level of reliability to be trusted, the next critical question becomes: how does it improve without waiting for infrequent, annual model upgrades? Every decision made by a skilled operator generates more than just a completed task. It produces a potential labeled example – a pairing of context with an expert action, and sometimes an observed outcome. When aggregated across thousands of operators and millions of decisions, this continuous stream of data can power supervised learning, rigorous evaluation, and targeted forms of reinforcement learning. This process effectively teaches systems to emulate expert behavior in real-world conditions.

For instance, an organization processing 50,000 cases weekly, capturing just three high-quality decision points per case, generates 150,000 labeled examples every week. This occurs without the need for a separate, resource-intensive data collection program.
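
The figures above are simple multiplication, and the "labeled example" itself is a small record. The sketch below shows both; the `LabeledExample` field names and the sample values are hypothetical, chosen only to mirror the context, expert action, and observed outcome described in the text.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class LabeledExample:
    context: dict                  # what the operator saw when deciding
    expert_action: str             # what the operator did
    outcome: Optional[str] = None  # observed result, when one is available


def weekly_examples(cases_per_week: int, decision_points_per_case: int) -> int:
    """Labeled examples produced per week as a by-product of normal work."""
    return cases_per_week * decision_points_per_case


example = LabeledExample(
    context={"case_id": "case-1", "status": "denied"},
    expert_action="file_appeal",
)
print(weekly_examples(50_000, 3))  # 150000
```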

A more sophisticated human-in-the-loop design places experts directly within the decision-making process. This allows systems to learn not only the correct answers but also the intricate ways in which ambiguity is resolved. Practically, humans intervene at critical branching points, selecting from AI-generated options, correcting underlying assumptions, and guiding the workflow. Each intervention serves as a high-value training signal. When the platform encounters an edge case or a deviation from the expected process, it can prompt for a brief, structured rationale, capturing key decision factors without demanding lengthy free-form reasoning logs.
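
A brief, structured rationale can be modeled as a fixed set of decision factors plus a short optional note. The following is a minimal sketch under stated assumptions: the `DecisionFactor` categories, the 200-character cap, and the `Intervention` fields are hypothetical and would be defined by domain experts in practice.

```python
from dataclasses import dataclass
from enum import Enum

MAX_NOTE_CHARS = 200  # assumed cap that keeps rationales brief


class DecisionFactor(Enum):
    # Hypothetical categories; a real list would come from domain experts.
    MISSING_DOCUMENTATION = "missing_documentation"
    INCORRECT_ASSUMPTION = "incorrect_assumption"
    PROCESS_DEVIATION = "process_deviation"
    OTHER = "other"


@dataclass
class Intervention:
    case_id: str
    ai_options: list        # options the system proposed, best first
    chosen: str             # what the expert selected or entered
    factor: DecisionFactor  # structured reason for the choice
    note: str = ""          # optional short free-text detail

    def __post_init__(self):
        if len(self.note) > MAX_NOTE_CHARS:
            raise ValueError("rationale note exceeds the brief-note limit")

    @property
    def is_override(self) -> bool:
        """True when the expert did not accept the system's top option."""
        return not self.ai_options or self.chosen != self.ai_options[0]


record = Intervention(
    case_id="case-42",
    ai_options=["resubmit", "appeal"],
    chosen="appeal",
    factor=DecisionFactor.INCORRECT_ASSUMPTION,
    note="Original submission used an outdated policy version.",
)
print(record.is_override)  # True
```

Because the factor is an enumerated value rather than free text, overrides can be counted and grouped directly, while the length cap keeps the prompt from turning into a reasoning log.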

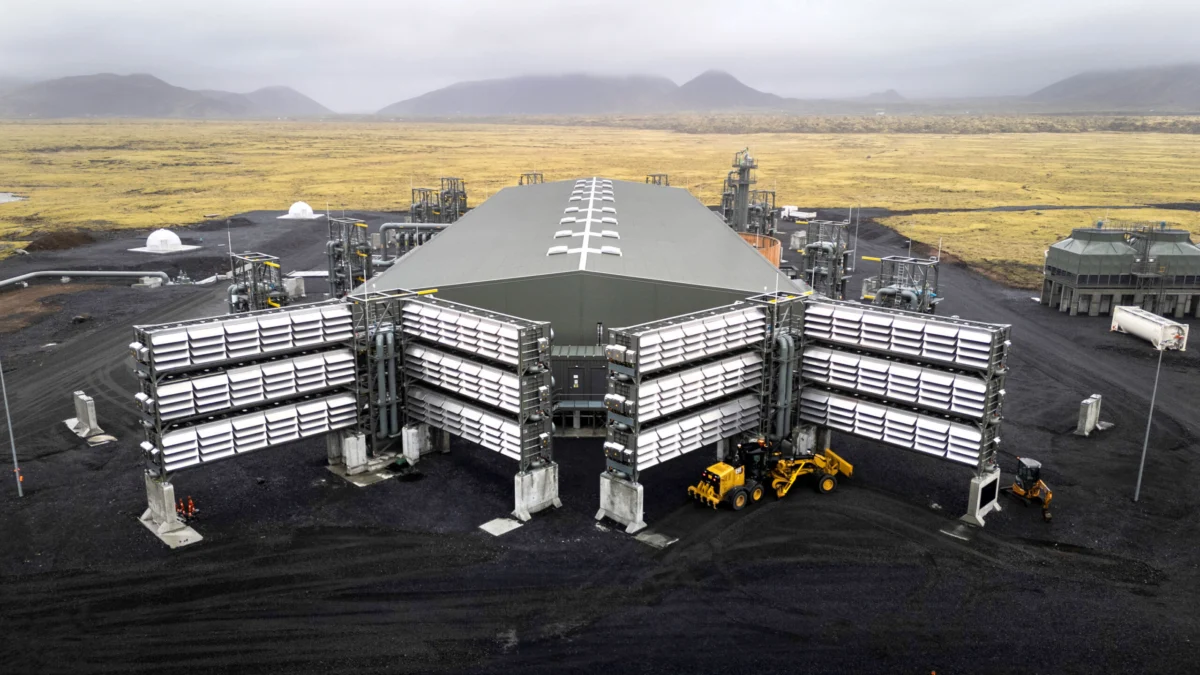

Building Toward Expertise Amplification

The ultimate objective is to permanently embed the collective expertise of thousands of domain experts – their knowledge, decisions, and reasoning processes – into an AI platform. This platform, in turn, amplifies the capabilities of every operator. When executed effectively, this strategy yields a quality of execution that neither humans nor AI can achieve in isolation: enhanced consistency, improved throughput, and measurable operational gains. Operators can then redirect their focus to more consequential work, supported by an AI that has already completed the analytical groundwork across thousands of analogous prior cases.

The broader implication for enterprise leaders is clear and actionable. Competitive advantages in AI will no longer be solely determined by access to generalized, off-the-shelf models. True differentiation will emerge from an organization’s capacity to capture, refine, and compound its intrinsic knowledge, its data, its decision-making processes, and its operational judgment. This must be coupled with the development of robust controls essential for high-stakes environments. As AI transitions from a phase of experimentation to becoming integral infrastructure, the most sustainable edge will likely belong to those companies that possess a deep understanding of their operations, can effectively instrument them, and can transform that understanding into systems that demonstrably improve with continuous use.