This edition of "The Download" covers two stories that pair scientific revelation with existential unease: a reassessment of human origins that challenges long-held beliefs about Neanderthal ancestry, and a stark examination of how illusory human control has become in AI-driven warfare. It also highlights a paradox in U.S. government policy, where a company blacklisted over the perceived dangers of its AI is simultaneously sought after for its advanced models.

The Shifting Sands of Human Evolution: Rethinking the Neanderthal Connection

For decades, one narrative has shaped our understanding of human evolution: modern humans, Homo sapiens, carry a genetic legacy from their ancient cousins, the Neanderthals. The theory rests on the discovery of Neanderthal DNA in the genomes of many contemporary populations, and it has been hailed as a significant breakthrough that points to interbreeding and shared heritage. In 2024, however, a pair of French geneticists cast doubt on the foundation of this widely accepted theory. Their research proposes an alternative explanation for the genetic similarities observed between Homo sapiens and Neanderthals. Instead of direct interbreeding, they suggest that these patterns are better explained by population structure: the tendency for genes to cluster and remain concentrated within smaller, geographically isolated groups. This view challenges the romanticized notion of ancient intermingling and points instead to the complex dynamics of early human populations. If it holds, it could reshape our understanding of how human genetic diversity evolved and of the distinct evolutionary paths taken by our ancient relatives. The debate is explored further in the upcoming issue of MIT Technology Review’s print magazine, which is dedicated to nature. Subscribers will gain early access to the piece when it lands on Wednesday, April 22nd.

The Deceptive Comfort of "Humans in the Loop" in AI Warfare

As artificial intelligence spreads across the battlefield, the concept of "humans in the loop" has emerged as a central tenet for maintaining accountability, context, and security in military operations. Yet, as Uri Maoz argues in an op-ed, this notion, while reassuring, functions primarily as a comforting illusion. The integration of AI into warfare, exemplified by ongoing legal battles involving Anthropic and the Pentagon and by AI’s growing role in geopolitical conflicts, raises urgent questions about the true extent of human control. The danger, Maoz contends, is not that autonomous AI systems will operate unchecked. Instead, the peril lies in the opacity of these machines. Human overseers, despite their presence in the decision-making process, often lack a fundamental understanding of the AI’s internal workings and reasoning, its "thinking." This disconnect turns human oversight into a superficial layer, unable to grasp or mitigate the complex decisions made by AI systems. The article calls for new safeguards and a deeper scientific understanding of the risks of AI in warfare, urging a move beyond the comforting but ultimately insufficient paradigm of "humans in the loop."

The White House’s Paradoxical Approach to AI: Blacklisting and Embracing Anthropic

In a striking policy contradiction, the White House finds itself in a peculiar position regarding the AI company Anthropic. Despite having blacklisted the company, a move that suggests a cautious, even adversarial stance, the U.S. government is actively pursuing access to Anthropic’s latest advanced AI model, Mythos. The situation underscores the conflicting pressures shaping AI policy. Security concerns and potential dangers, highlighted by Anthropic itself in deeming Mythos too risky for public release, have led to restrictions, yet the appeal of cutting-edge AI capabilities for national security purposes appears to outweigh these reservations. The picture is further complicated by a Pentagon culture war against Anthropic, which has reportedly backfired, and by concerns from finance ministers about the security implications of such powerful AI. Meanwhile, Anthropic has released a less risky model, Claude Opus 4.7, indicating a continuous development cycle and a spectrum of AI capabilities. This tension between regulation, risk assessment, and the pursuit of technological dominance characterizes the U.S. government’s engagement with the rapidly evolving AI landscape.

A Spectrum of AI Developments and Concerns:

Beyond the lead stories, a range of significant developments in the AI sector warrants attention:

- OpenAI’s Internal Conflicts and Scientific Ambitions: Sam Altman’s dual role as CEO of OpenAI and investor in various ventures has sparked conflict-of-interest concerns, with accusations that his personal investments could sway crucial decisions, particularly as OpenAI navigates its potential IPO. The company is also facing a jury trial over allegations of abandoning its founding mission, while simultaneously making a significant push into scientific research, aiming to leverage AI for groundbreaking discoveries.

- SpaceX’s Starlink and Pentagon Reliance: A recent Starlink outage during crucial Navy drone tests exposed the Pentagon’s increasing dependence on SpaceX’s satellite internet service. This reliance, coupled with the DoD’s broader efforts to integrate innovations from automotive giants like Ford and GM, highlights the growing intersection of private technology and national defense.

- Data Center Bottlenecks Threaten AI Expansion: The rapid growth of AI is facing a significant hurdle in the form of data center delays, with a substantial portion of this year’s projects at risk of falling behind schedule. This bottleneck is partly driven by public opposition to data center construction in local communities, underscoring the societal challenges accompanying technological advancement.

- Alibaba’s "Happy Oyster" and the Quest for World Models: Chinese tech giant Alibaba has entered the race to develop "world models" with its release of "Happy Oyster." This latest endeavor aims to enhance AI’s comprehension of the physical world, a critical step towards more sophisticated AI capabilities, though the challenge of understanding cause and effect remains a significant hurdle.

- Google’s Gemini and Personalized AI Imagery: Google’s Gemini is now capable of generating AI images tailored to users’ personal data, drawing insights from their Google services. While this feature promises to reduce the need for detailed prompts, it also raises questions about data privacy and the extent to which AI should integrate with personal information.

- OpenAI Enhances Coding Capabilities: OpenAI is bolstering its agentic coding and development system with a Codex update, directly challenging Claude Code. However, skepticism persists regarding the ultimate impact and reliability of AI in software development.

- Europe’s Age Verification App and Smartglasses in Theaters: Europe has launched a free online age verification app for companies, a move towards regulating online content. Meanwhile, smartglasses are offering a glimmer of hope for Korean theaters, enabling AI-powered translations that are opening up shows to a global audience.

- Global Voice Actors Fight Hollywood’s AI Push: Voice actors worldwide are raising alarms about Hollywood’s increasing reliance on AI, fearing that their voices are being used to train models that will ultimately replace them, leading to job displacement and the devaluation of their craft.

Quote of the Day:

"There’s this dark period between now and some time in the future where the advantage is very much offensive AI." – Rob Joyce, former director of cybersecurity at the National Security Agency, speaking to Bloomberg about the escalating hacking threats posed by AI.

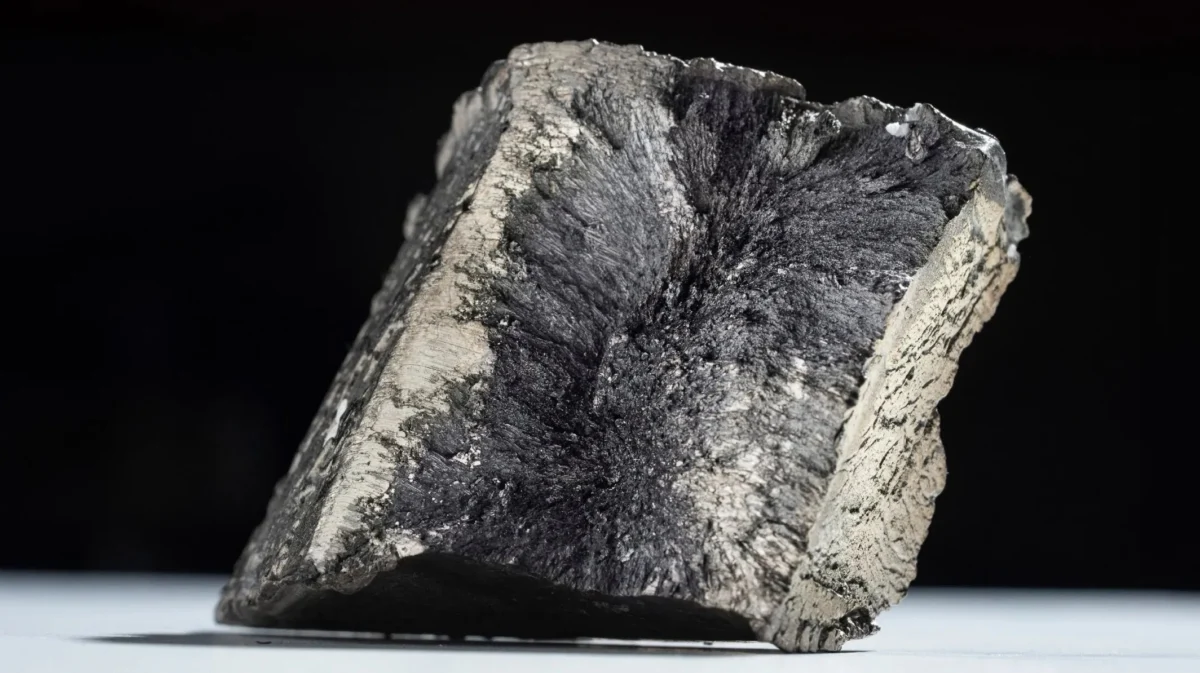

One More Thing: The Critical Race for Rare Earth Elements

The global transition towards cleaner energy and emissions reduction hinges significantly on access to rare earth elements, critical minerals essential for technologies like electric vehicles and renewable energy infrastructure. However, the current market is heavily dominated by China, raising concerns for nations like the United States about supply chain security. In response to this geopolitical challenge, scientists and companies are actively exploring unconventional sources, including urban mining and novel extraction techniques, to secure these vital resources and ensure a sustainable future. This quest for critical minerals is a race against time, with profound implications for global energy independence and technological advancement.