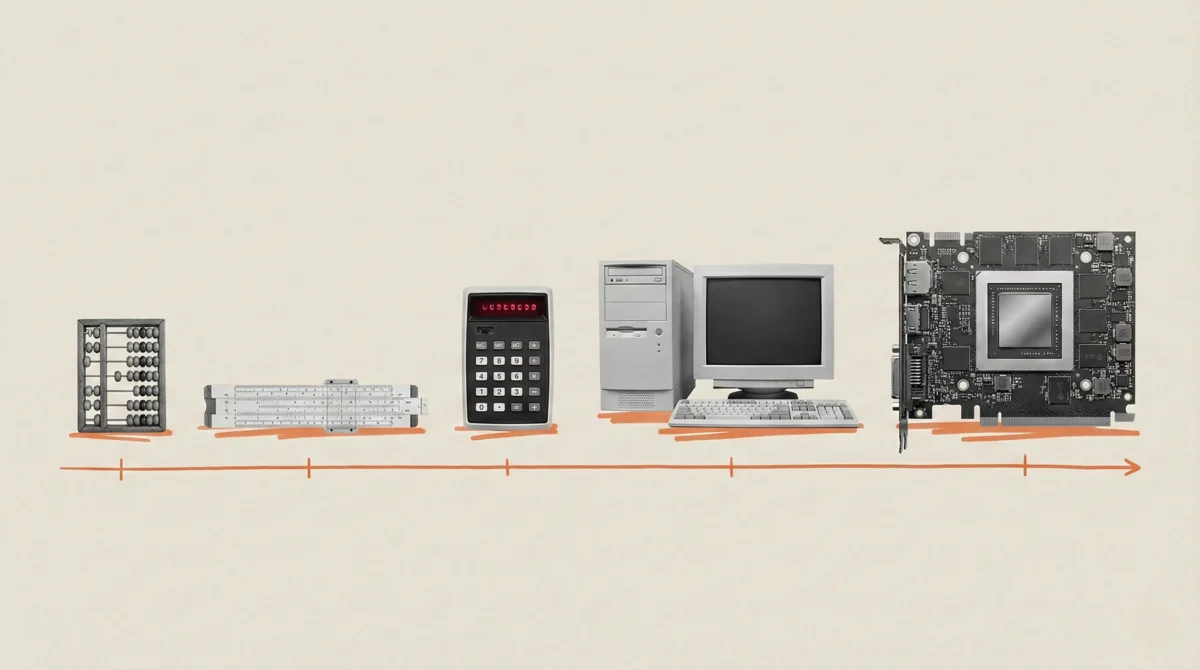

Our evolutionary wiring is calibrated for a linear existence. We grasp that walking for an hour covers a certain distance, and we intuitively understand that doubling the time doubles the distance. This intuition, honed on the savannah, serves us well in physical reality, but it proves catastrophically inadequate when confronting the exponential nature of Artificial Intelligence. Since Mustafa Suleyman began his work in AI in 2010, the amount of compute used to train cutting-edge AI models has escalated by an almost incomprehensible factor of more than ten billion: from approximately 10¹⁵ floating-point operations (flops), the fundamental unit of computation, for early AI systems to over 10²⁵ flops for today’s most advanced models. This singular fact underpins and drives every subsequent advancement in the field.

Despite this relentless progress, a chorus of skeptics continues to forecast inevitable roadblocks. Their arguments usually center on the perceived slowdown of Moore’s Law, data scarcity, or the limits imposed by energy consumption. These predictions have consistently failed to materialize against the backdrop of an epic, generational surge in computational power. When one examines the confluence of forces propelling the AI revolution, the underlying exponential trend becomes strikingly apparent and remarkably predictable. To grasp why, it is essential to look at the rapidly evolving realities beneath the surface of the headlines.

Imagine AI training as a vast room filled with people working on calculators. For a long time, adding computational power meant simply adding more people with calculators to that room. Much of their time, however, was spent idle, fingers drumming on desks while they waited for the next set of numbers. Every pause was wasted potential. The current AI revolution goes beyond providing more and better calculators. It is fundamentally about ensuring that these calculators are never idle and that they operate in perfect, synchronized concert.

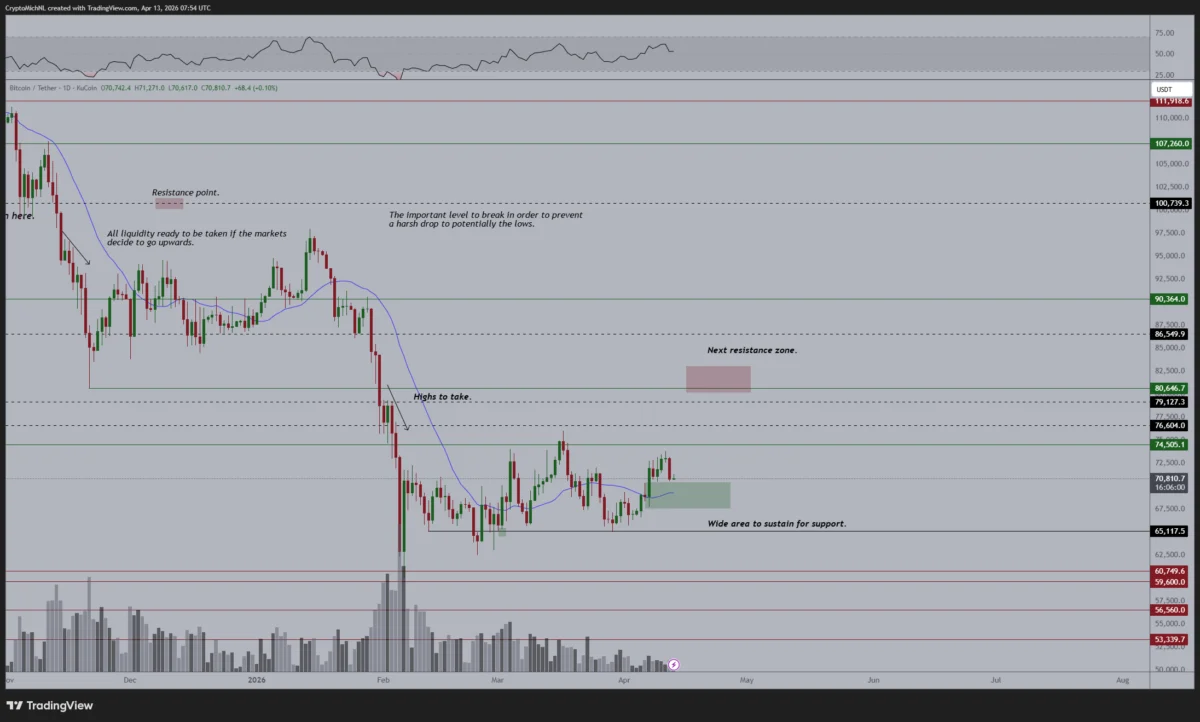

This shift is enabled by the convergence of three technological advances. First, the basic computational units, the "calculators," have themselves become dramatically faster. Nvidia’s chips, for instance, have delivered a more than sevenfold performance increase in just six years, from 312 teraflops in 2020 to 2,250 teraflops today. Microsoft’s own Maia 200 chip, launched in January, offers a 30% improvement in performance per dollar over other hardware in the company’s fleet. Second, the speed at which data can be fed to these processors has been transformed by High Bandwidth Memory (HBM). This technology stacks chips vertically, much like miniature skyscrapers, and the latest generation, HBM3, triples the data bandwidth of its predecessor. Processors are therefore kept supplied with data instead of sitting idle waiting for it. Third, the room full of people has become a sophisticated, interconnected infrastructure. Technologies such as NVLink and InfiniBand can now link hundreds of thousands of GPUs into warehouse-scale supercomputers that function as unified cognitive entities. This level of interconnection was unachievable just a few years ago.
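As a rough check on the first of these gains, the two chip figures quoted above imply a compound annual rate. The snippet below is a minimal sketch that assumes performance grew smoothly over the six years; the variable names are illustrative and not taken from any vendor specification.

```python
# Annualized chip performance growth implied by the figures quoted above.
# Assumption: growth compounds smoothly over the whole period.
start_tflops = 312.0   # 2020 figure from the text
end_tflops = 2250.0    # "today" figure from the text
years = 6              # period stated in the text

total_gain = end_tflops / start_tflops      # overall improvement factor
annual_gain = total_gain ** (1 / years)     # equivalent per-year factor

print(f"total gain:  {total_gain:.2f}x")
print(f"annual gain: {annual_gain:.2f}x per year")
```

Under that smoothing assumption, the sevenfold gain corresponds to a little under 40% improvement per year.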

Together, these gains dramatically amplify overall compute power. Consider the training of a language model: in 2020, the task required 167 minutes on eight GPUs. Today, on equivalent modern hardware, it takes under four minutes. To provide perspective, Moore’s Law would have predicted only about a fivefold improvement over the same period. Instead, we have witnessed a roughly fiftyfold increase. The journey runs from 2012, when AlexNet, the seminal image recognition model that ignited the modern deep learning boom, was trained on just two GPUs, to today’s largest clusters of over 100,000 GPUs, each of which far surpasses the capabilities of its predecessors.
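The arithmetic behind the training-time comparison can be sketched as follows. Because the text only says "under four minutes," four minutes is treated here as an upper bound, so the computed speedup is a lower bound. The variable names are illustrative.

```python
# Speedup implied by the training times quoted above.
minutes_2020 = 167.0
minutes_today_upper = 4.0   # "under four minutes" in the text, so an upper bound

# Lower bound on the speedup, since the real run time is below four minutes.
min_speedup = minutes_2020 / minutes_today_upper

# Run time that would correspond to an exact fiftyfold speedup.
minutes_for_50x = minutes_2020 / 50

# How far a fiftyfold gain exceeds the fivefold Moore's Law figure in the text.
excess_over_moore = 50 / 5

print(f"speedup of at least {min_speedup:.2f}x")
print(f"fiftyfold speedup means about {minutes_for_50x:.2f} minutes")
print(f"{excess_over_moore:.0f}x beyond the Moore's Law figure")
```

A fiftyfold speedup corresponds to a run of about 3.3 minutes, which is consistent with the "under four minutes" figure.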

Furthermore, a parallel revolution is unfolding in software. Research from Epoch AI indicates that the compute required to reach a given performance level is now halving approximately every eight months. That is far faster than the traditional 18-to-24-month doubling period associated with Moore’s Law. The cost of deploying some recent AI models has fallen by a factor of as much as 900 on an annualized basis, making AI significantly more affordable to implement.
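To compare the eight-month halving with Moore’s Law on the same footing, both can be converted to a per-year factor. This is a minimal sketch that assumes clean exponential behavior; `yearly_factor` is a hypothetical helper written for this illustration.

```python
def yearly_factor(period_months: float) -> float:
    """Improvement factor per year for a quantity that doubles
    (or whose cost halves) once every `period_months` months."""
    return 2 ** (12 / period_months)

software_gain = yearly_factor(8)    # compute requirement halves every 8 months
moore_fast = yearly_factor(18)      # Moore's Law, 18-month doubling
moore_slow = yearly_factor(24)      # Moore's Law, 24-month doubling

print(f"software efficiency: {software_gain:.2f}x per year")
print(f"Moore's Law:         {moore_slow:.2f}x to {moore_fast:.2f}x per year")
```

On these assumptions, algorithmic efficiency improves by about 2.8x per year, against roughly 1.4x to 1.6x per year for Moore’s Law.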

The projections for the near future are equally astonishing. Leading AI research labs are currently expanding their capacity at a rate of nearly 400% annually. Since 2020, the compute used to train frontier AI models has grown by 500% every year. Global AI-relevant compute is anticipated to reach the equivalent of 100 million H100 processors by 2027, a tenfold increase within a three-year span. Taken together, these factors point to a potential thousandfold increase in effective compute by the close of 2028. It is entirely plausible that by 2030 we could be bringing an additional 200 gigawatts of compute online each year, a figure comparable to the peak electricity demand of the United Kingdom, France, Germany, and Italy combined.
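The tenfold-in-three-years projection can be unpacked with a few lines of arithmetic. The sketch below assumes steady compounding and uses illustrative variable names. It also shows how long that rate alone would take to reach a thousandfold gain, which suggests why the thousandfold figure depends on all the factors being combined.

```python
import math

target_h100_equivalents = 100_000_000   # projected 2027 figure from the text
growth_factor = 10                      # tenfold increase stated in the text
span_years = 3                          # three-year span stated in the text

# Installed base implied at the start of the three-year span.
implied_baseline = target_h100_equivalents / growth_factor

# Equivalent per-year growth factor, assuming steady compounding.
annual_factor = growth_factor ** (1 / span_years)

# Years needed to reach 1000x if only this growth rate applied.
years_to_1000x_at_this_rate = math.log(1000) / math.log(annual_factor)

print(f"implied baseline: {implied_baseline:,.0f} H100 equivalents")
print(f"annual growth:    {annual_factor:.2f}x per year")
print(f"years to 1000x:   {years_to_1000x_at_this_rate:.1f}")
```

Growth in the global chip count alone, at about 2.15x per year, would take roughly nine years to deliver a thousandfold increase, so a thousandfold gain in effective compute by 2028 has to come from hardware, chip speed, and software efficiency multiplying together.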

What are the tangible outcomes of this surge in computational power? Suleyman envisions a transition from rudimentary chatbots to sophisticated, near-human-level agents: semiautonomous systems capable of undertaking complex, multi-day coding projects, managing week- and month-long initiatives, making calls, negotiating contracts, and orchestrating intricate logistics. The era of simple question-answering assistants will recede, replaced by teams of AI workers capable of deliberation, collaboration, and execution. We are in the early stages of this transition, and its implications extend far beyond the technology sector. Every industry that relies on cognitive work stands to be fundamentally reshaped.

The most apparent constraint on this exponential growth is energy. A single AI rack, comparable in size to a refrigerator, can draw 120 kilowatts, roughly the power usage of 100 homes. However, this escalating demand is now meeting two other powerful exponential trends: the cost of solar energy has fallen nearly 100-fold over the past 50 years, and battery prices have dropped 97% over three decades. Together they offer a viable pathway to scaling AI development on clean, sustainable energy.
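The energy figures above can be put on a per-home and per-year basis. This is a minimal sketch that assumes prices fell at a constant annual rate, which is a simplification; `annual_decline` is a hypothetical helper written for this illustration.

```python
rack_kw = 120.0   # power draw of one AI rack, from the text
homes = 100       # number of homes it is compared to, from the text

# Average draw per home implied by the comparison.
per_home_kw = rack_kw / homes

def annual_decline(remaining_fraction: float, years: int) -> float:
    """Constant yearly price decline that leaves `remaining_fraction`
    of the starting price after `years` years."""
    return 1 - remaining_fraction ** (1 / years)

solar_decline = annual_decline(1 / 100, 50)      # 100-fold drop over 50 years
battery_decline = annual_decline(1 - 0.97, 30)   # 97% drop over 30 years

print(f"implied draw per home: {per_home_kw:.1f} kW")
print(f"solar cost decline:    {solar_decline:.1%} per year")
print(f"battery price decline: {battery_decline:.1%} per year")
```

On these assumptions, solar costs fell by roughly 9% per year and battery prices by roughly 11% per year, sustained over decades.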

The necessary capital is being deployed, and the engineering challenges are being met with innovative solutions. The $100 billion clusters, the 10-gigawatt power demands, and the warehouse-scale supercomputers are no longer the stuff of science fiction. Ground is being broken on these projects across the United States and around the world. Consequently, we are progressing toward genuine cognitive abundance. At Microsoft AI, this is precisely the future that its superintelligence lab is planning for and building.

Skeptics, still operating within the confines of a linear mindset, will undoubtedly continue to forecast diminishing returns and anticipate plateaus. They will continue to be surprised by the relentless pace of innovation. The explosion in computational power is, unequivocally, the defining technological narrative of our era. And, crucially, it is only just beginning.