In a significant move underscoring the escalating race for artificial intelligence dominance, Google and a consortium of leading banks are poised to provide substantial backing for a multi-billion-dollar data center project in Texas, destined to power the burgeoning operations of AI challenger Anthropic. This ambitious endeavor, projected to exceed $5 billion in its initial phase, highlights the intense demand for advanced AI infrastructure and the strategic alliances forming within the tech industry.

The Financial Times reported on Friday, citing individuals with knowledge of the matter, that Google is expected to extend crucial construction loans for the massive data center. This financial commitment from the tech giant further solidifies its strategic partnership with Anthropic, in which Google is already a significant investor. Concurrently, a group of prominent banks is actively competing to arrange the broader financing package, with an aim to finalize agreements by mid-year. This confluence of corporate and institutional investment reflects the high-stakes nature of the AI arms race, where access to immense computational power is paramount.

Anthropic, a leading AI research company known for its Claude family of large language models, recently secured a lease for the expansive 2,800-acre campus where the data center will be situated. This long-term lease agreement is an integral part of Anthropic’s broader infrastructure collaboration with Google, designed to ensure the AI firm has the necessary resources to scale its models and services. Construction on the site is already underway, having commenced with early-stage debt financing provided by Eagle Point, a publicly traded closed-end investment company, signaling the rapid pace at which AI infrastructure is being developed.

The Texas facility is designed to be a colossal hub for AI computation, with an initial target capacity of approximately 500 megawatts (MW) by late 2026. To put this into perspective, 500 MW is roughly equivalent to the power required to energize half a million homes. The project boasts even more ambitious long-term expansion potential, with plans to scale up to an astounding 7.7 gigawatts (GW) – a capacity that would place it among the largest data center complexes globally. The strategic location of the campus, nestled near major gas pipelines operated by energy giants such as Enterprise Products Partners, Energy Transfer, and Atmos Energy, is a critical advantage. This proximity allows the data center to leverage on-site gas turbines for power generation, a crucial consideration given the insatiable and ever-growing energy demands of AI workloads. The ability to generate power locally mitigates reliance on strained public grids and ensures a more stable and cost-effective energy supply, a significant factor in the long-term operational viability of such a massive facility.
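The capacity figures above can be sanity-checked with some quick arithmetic. The sketch below uses only the numbers quoted in this article (500 MW, half a million homes, 7.7 GW). It assumes the homes comparison reflects a constant average draw per household, which is a simplification. The function names are hypothetical and chosen purely for illustration.

```python
# Figures quoted in the article; everything derived from them is illustrative.
INITIAL_CAPACITY_MW = 500        # initial target capacity
HOMES_EQUIVALENT = 500_000       # "half a million homes"
LONG_TERM_CAPACITY_GW = 7.7      # planned long-term expansion


def implied_kw_per_home(capacity_mw: float, homes: int) -> float:
    """Average power per home implied by the comparison, in kilowatts."""
    return capacity_mw * 1000 / homes  # MW -> kW, then divide across homes


def scale_factor(long_term_gw: float, initial_mw: float) -> float:
    """How many times larger the long-term plan is than the initial phase."""
    return long_term_gw * 1000 / initial_mw  # GW -> MW, then take the ratio


kw_per_home = implied_kw_per_home(INITIAL_CAPACITY_MW, HOMES_EQUIVALENT)
factor = scale_factor(LONG_TERM_CAPACITY_GW, INITIAL_CAPACITY_MW)

# Assumption: the same per-home average applies at the larger scale.
homes_at_full_scale = HOMES_EQUIVALENT * factor

print(f"Implied average draw per home: {kw_per_home:.2f} kW")
print(f"Long-term plan vs. initial phase: {factor:.1f}x")
print(f"Homes-equivalent at full build-out: {homes_at_full_scale / 1e6:.1f} million")
```

On these assumptions, the comparison implies an average of about 1 kW per home, and the 7.7 GW plan is roughly 15 times the initial phase.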

This substantial investment in Anthropic’s infrastructure comes at a pivotal moment for the AI company, which has been navigating both rapid growth and significant legal and ethical challenges. On Thursday, a US federal judge in San Francisco issued a preliminary injunction blocking the Pentagon from designating Anthropic a national security risk. The ruling halted a directive that sought to prevent government agencies from using Anthropic’s AI tools, specifically its Claude chatbot. The order from Judge Rita Lin gives Anthropic a temporary reprieve from a move that would have severely impaired its ability to contract with federal entities.

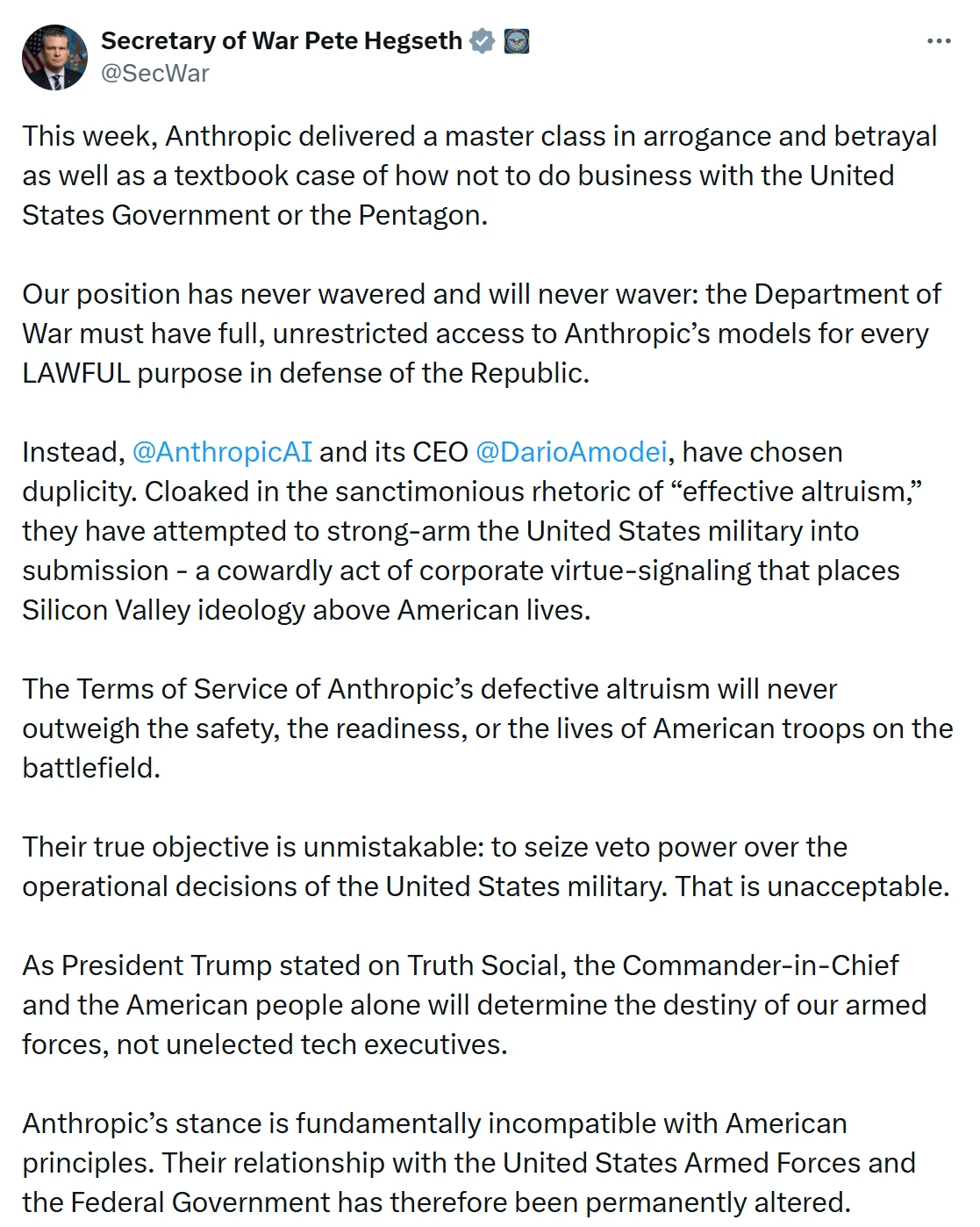

The directive, which aimed to cut off federal use of Claude, originated from concerns over supply chain risks. However, Anthropic vehemently opposed this classification, filing a lawsuit arguing that the Defense Department overstepped its authority by unilaterally branding the company as a threat. The company has a well-documented commitment to responsible AI development, including self-imposed restrictions on the use of its models for applications such as lethal autonomous weapons or mass surveillance. This ethical stance has, at times, led to friction with government agencies seeking to integrate advanced AI into various operations.

Judge Lin’s decision was a sharp rebuke to the government’s actions, which she described as "arbitrary." She underscored the critical importance of a clear legal basis for labeling a US company a national security threat, warning against such designations without proper due process. The ruling highlighted the delicate balance between national security imperatives and the rights and operational freedom of private companies, particularly those at the forefront of transformative technologies like AI. The judge also suggested that the government’s measures might have been retaliatory, taken in response to Anthropic’s public and principled stance on the ethical deployment of its AI. Such retaliation, she noted, would likely constitute a violation of First Amendment protections, raising significant concerns about free speech and corporate autonomy in the context of government procurement.

The dispute between Anthropic and the Pentagon followed a breakdown in negotiations concerning the military application of Anthropic’s AI. Anthropic’s steadfast refusal to permit its models to be used for offensive military purposes, particularly in areas like autonomous weaponry, created a broader standoff with the administration. This ethical commitment distinguishes Anthropic from some competitors and underscores the growing tension between the rapid advancements in AI capabilities and the urgent need for robust ethical frameworks and regulatory oversight.

Adding another layer of complexity, reports surfaced that US military units had used Anthropic’s Claude AI model during a major airstrike on Iran. This alleged use occurred even after the Pentagon issued its initial ban order. Military commands, including US Central Command (CENTCOM) in the Middle East, reportedly deployed the model for various forms of operational support. If accurate, the reports underscore how deeply AI is already integrated into modern military operations, even amid policy debates and legal challenges over its use. They also highlight the difficulty of enforcing directives on rapidly evolving technologies within vast governmental and military bureaucracies. The reported use of Claude in a sensitive military operation further complicates the narrative around Anthropic’s ethical guidelines and the government’s stated concerns, suggesting a disconnect between policy and practice.

The two developments, the massive investment in Anthropic’s data center and the legal victory against the Pentagon ban, paint a vivid picture of the current state of the AI industry. On one hand, there is an unprecedented flow of capital and resources into building the foundational infrastructure required to fuel the next generation of AI. Companies like Google are making strategic bets on specific AI developers like Anthropic, recognizing that controlling the underlying compute power is as crucial as developing the algorithms themselves. This infrastructure race is being driven by the rapid growth of AI models, which demand staggering amounts of processing power, specialized hardware (like GPUs), and vast data centers. The planned long-term capacity of 7.7 GW for Anthropic’s Texas facility is a testament to the scale of this demand, which is placing immense pressure on energy grids and driving innovation in power generation and cooling technologies.

On the other hand, the legal and ethical battles highlight the profound societal implications of AI. As AI becomes more powerful and integrated into critical sectors, including national defense, questions about control, accountability, and ethical deployment are becoming paramount. Anthropic’s principled stand against certain military applications, and the judge’s recognition of potential overreach by the government, signal a growing pushback against unchecked technological development. This case could set a precedent for how AI companies interact with government contracts, potentially empowering developers to maintain greater control over the ethical application of their technologies.

The broader AI landscape is characterized by fierce competition among tech giants, each vying for leadership in a field that promises to reshape industries and economies. Google’s backing of Anthropic is part of its strategy to remain competitive against rivals like Microsoft, which has heavily invested in OpenAI. The need for specialized data centers capable of handling AI workloads is driving massive construction projects globally, transforming landscapes and infrastructure planning. The Texas project, with its strategic location and planned on-site power generation, exemplifies the innovative solutions being pursued to meet this demand.

In conclusion, the confluence of significant financial investment, rapid infrastructure development, and ongoing legal battles underscores the dynamic and often contentious nature of the artificial intelligence frontier. The expected commitment from Google and the banking consortium to Anthropic’s Texas data center project, projected to exceed $5 billion in its initial phase, is a clear indicator of the immense capital flowing into AI infrastructure. Simultaneously, Anthropic’s successful challenge to the Pentagon ban, so far at the preliminary injunction stage, highlights the critical importance of ethical considerations, corporate autonomy, and legal oversight in shaping the future of AI development and deployment. As AI continues its rapid ascent, these interconnected narratives will shape the industry’s trajectory, affecting not just technological progress but also broader societal and geopolitical dynamics.