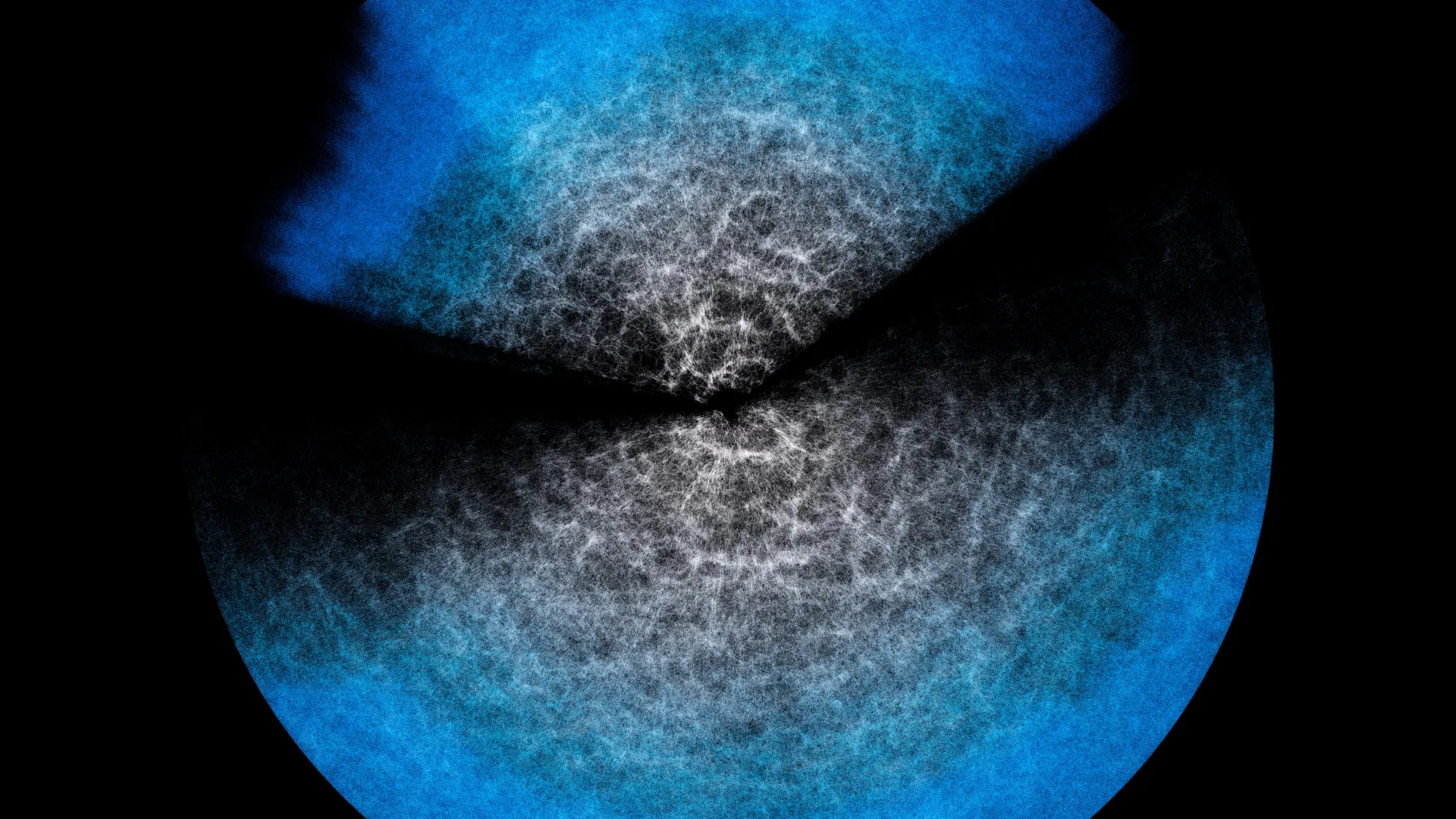

The scale of the Universe is hard to grasp. A galaxy is a swirling collection of billions of stars, gas, and dust bound together by gravity, yet on cosmic scales even the largest galaxies are tiny dots. These dots are not scattered randomly: they gather into clusters, which in turn aggregate into superclusters, forming an intricate cosmic web. This three-dimensional skeleton of the Universe consists of dense filaments of matter separated by immense, nearly empty voids. To study a structure of this scale and complexity, scientists combine theoretical models with astronomical data.

Understanding and visualizing this structure is a formidable challenge. The approach is to combine the physical laws governing the Universe with observational data gathered by astronomical instruments. From this combination, researchers build theoretical models such as the Effective Field Theory of Large-Scale Structure (EFTofLSS). When "fed" with real observations, these models describe the cosmic web statistically, which allows researchers to estimate the key parameters that define its structure and evolution.

However, models like EFTofLSS are computationally intensive, demanding significant time and processing power. As astronomical datasets grow rapidly, driven by increasingly sensitive telescopes and ambitious sky surveys, more efficient analysis methods are needed. This need for speed without sacrificing precision has led to the development of "emulators," which "imitate" the behavior of these complex models but run dramatically faster.

Any "shortcut" raises the question of whether accuracy is being compromised. To address this, an international team of researchers from institutions including the Italian National Institute for Astrophysics (INAF), the University of Parma in Italy, and the University of Waterloo in Canada tested an emulator they designed, named Effort.jl. Their findings, published in the Journal of Cosmology and Astroparticle Physics (JCAP), show that Effort.jl achieves essentially the same accuracy as the model it emulates, and in some instances even captures finer details. It is also far faster: Effort.jl runs these analyses in a matter of minutes on a standard laptop, whereas the original model typically requires a supercomputer.

Marco Bonici, a researcher at the University of Waterloo and the lead author of the study, illustrates the concept with an analogy. "Imagine wanting to study the contents of a glass of water at the level of its microscopic components, the individual atoms, or even smaller," he explains. "In theory, you can. But if we wanted to describe in detail what happens when the water moves, the explosive growth of the required calculations makes it practically impossible." He continues, "However, you can encode certain properties at the microscopic level and see their effect at the macroscopic level, namely the movement of the fluid in the glass. This is what an effective field theory does, that is, a model like EFTofLSS, where the water in my example is the Universe on very large scales and the microscopic components are small-scale physical processes." The analogy shows how EFTofLSS captures the net effect of complex, small-scale physics in order to model the large-scale structure of the Universe.

In essence, the theoretical model statistically explains the structure that gives rise to the observed data. Astronomical observations are fed into the code, which then computes a "prediction" of the cosmic web. However, this process is time-consuming and requires substantial computing resources. Given the volume of data already available, and the even larger volumes expected from ongoing and upcoming surveys such as DESI (Dark Energy Spectroscopic Instrument), which has already released its initial data, and the Euclid space telescope, repeating this exhaustive computation for every analysis is not practical.

"This is why we now turn to emulators like ours, which can drastically cut time and resources," Bonici elaborates. An emulator mimics the computations of the original model. At its core is a neural network trained to associate specific input parameters with the model’s pre-computed predictions. Once trained on a diverse set of the model’s outputs, the network can generalize: it can accurately predict the model’s response for combinations of parameters it never encountered during training. The emulator does not "understand" the underlying physics in the way the original model does. Instead, it becomes very good at anticipating the theoretical model’s responses.

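The idea of training once on pre-computed outputs and then answering new queries cheaply can be illustrated with a toy sketch. Everything in it is an assumption made for illustration: `expensive_model` is a hypothetical stand-in for a slow theory code (it is not EFTofLSS), and simple linear interpolation over a grid of parameter values replaces the neural network described above. It does not use the Effort.jl API.

```python
import math
from bisect import bisect_right


def expensive_model(theta: float) -> float:
    """Hypothetical stand-in for a slow theory code (NOT EFTofLSS)."""
    return math.exp(-theta) * math.cos(3.0 * theta)


class GridEmulator:
    """Toy emulator: stores pre-computed model outputs and interpolates.

    Linear interpolation is used here in place of a neural network.
    """

    def __init__(self, model, lo: float, hi: float, n_train: int):
        step = (hi - lo) / (n_train - 1)
        self.thetas = [lo + i * step for i in range(n_train)]
        # The slow part: run the full model once per training point.
        self.values = [model(t) for t in self.thetas]

    def predict(self, theta: float) -> float:
        # Find the training interval containing theta (clamped to the grid).
        i = bisect_right(self.thetas, theta) - 1
        i = max(0, min(i, len(self.thetas) - 2))
        t0, t1 = self.thetas[i], self.thetas[i + 1]
        w = (theta - t0) / (t1 - t0)
        return (1.0 - w) * self.values[i] + w * self.values[i + 1]


# "Training": 201 evaluations of the full model on [0, 2].
emulator = GridEmulator(expensive_model, 0.0, 2.0, 201)

# Query parameter values that were never part of the training set.
unseen = [0.005 + 0.01 * k for k in range(200)]
worst = max(abs(emulator.predict(t) - expensive_model(t)) for t in unseen)
print(f"worst absolute error on unseen parameters: {worst:.2e}")
```
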
The originality of Effort.jl lies in how it further optimizes the training phase. It builds in existing knowledge about how predictions change when parameters are slightly altered, so the neural network does not have to "re-learn" these relationships from scratch. Effort.jl also uses gradients, which quantify "how much and in which direction" predictions shift when a parameter is tweaked. This additional information significantly reduces the number of examples the emulator needs to learn from, cutting computational demands and allowing it to run on less powerful machines.
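
Why gradient information can reduce the number of examples needed is easiest to see in a toy setting. The sketch below is not Effort.jl's method: it assumes a hypothetical one-parameter `model` with a known derivative, and compares two interpolating surrogates built from the same five samples. One uses only the sampled values (linear interpolation); the other also uses the gradient at each sample (cubic Hermite interpolation).

```python
import math


def model(theta: float) -> float:
    """Hypothetical stand-in for a theory prediction."""
    return math.sin(theta)


def model_gradient(theta: float) -> float:
    """Derivative of the stand-in model with respect to theta."""
    return math.cos(theta)


def find_interval(nodes, theta):
    """Index i such that nodes[i] <= theta <= nodes[i + 1] (clamped)."""
    for i in range(len(nodes) - 2):
        if theta < nodes[i + 1]:
            return i
    return len(nodes) - 2


def linear_predict(nodes, values, theta):
    """Surrogate that uses sampled values only."""
    i = find_interval(nodes, theta)
    s = (theta - nodes[i]) / (nodes[i + 1] - nodes[i])
    return (1.0 - s) * values[i] + s * values[i + 1]


def hermite_predict(nodes, values, grads, theta):
    """Surrogate that uses sampled values AND gradients (cubic Hermite)."""
    i = find_interval(nodes, theta)
    h = nodes[i + 1] - nodes[i]
    s = (theta - nodes[i]) / h
    h00 = 2 * s**3 - 3 * s**2 + 1
    h10 = s**3 - 2 * s**2 + s
    h01 = -2 * s**3 + 3 * s**2
    h11 = s**3 - s**2
    return (h00 * values[i] + h10 * h * grads[i]
            + h01 * values[i + 1] + h11 * h * grads[i + 1])


# The same five training samples for both surrogates.
nodes = [math.pi * k / 4 for k in range(5)]
values = [model(t) for t in nodes]
grads = [model_gradient(t) for t in nodes]

test_points = [math.pi * k / 1000 for k in range(1001)]
linear_error = max(abs(linear_predict(nodes, values, t) - model(t))
                   for t in test_points)
hermite_error = max(abs(hermite_predict(nodes, values, grads, t) - model(t))
                    for t in test_points)
print(f"values only:        max error {linear_error:.2e}")
print(f"values + gradients: max error {hermite_error:.2e}")
```

With the same number of model evaluations, the surrogate that also sees gradients is far more accurate in this toy case, which is the intuition behind needing fewer training examples.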

For a tool like Effort.jl to be valuable, it requires rigorous validation. If an emulator does not "understand" the underlying physics, how can we be certain that its "shortcut" consistently yields correct answers, that is, the same answers the original, more complex model would produce? The newly published study addresses this question directly. It shows that Effort.jl’s results, when tested on both simulated data and real astronomical observations, agree closely with those obtained from the full EFTofLSS model.
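
In its simplest form, this kind of check compares the emulator with the full model on parameter values held out from training and reports the worst disagreement. The sketch below illustrates that generic idea only; it is not the validation procedure of the study. `full_model`, `emulator`, and the tolerance are hypothetical: the "model" is an exponential and the "emulator" is its fourth-order Taylor polynomial.

```python
import math
import random


def full_model(theta: float) -> float:
    """Hypothetical stand-in for the slow, trusted model."""
    return math.exp(theta)


def emulator(theta: float) -> float:
    """Hypothetical fast approximation (4th-order Taylor polynomial)."""
    return sum(theta**k / math.factorial(k) for k in range(5))


def max_relative_error(model, surrogate, thetas) -> float:
    """Worst-case relative disagreement over a set of parameter values."""
    return max(abs(surrogate(t) - model(t)) / abs(model(t)) for t in thetas)


# Held-out parameter values, drawn with a fixed seed for reproducibility.
rng = random.Random(0)
held_out = [rng.uniform(0.0, 0.5) for _ in range(1000)]

tolerance = 1e-3  # illustrative accuracy requirement
worst = max_relative_error(full_model, emulator, held_out)
print(f"worst relative error: {worst:.2e} (tolerance {tolerance:.0e})")
print("emulator accepted" if worst < tolerance else "emulator rejected")
```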

"And in some cases," Bonici concludes, "where with the model you have to trim part of the analysis to speed things up, with Effort.jl we were able to include those missing pieces as well." In certain scenarios, then, the emulator not only matches the model’s accuracy but also allows a more complete analysis. This makes Effort.jl a valuable tool for analyzing the forthcoming data releases from experiments such as DESI and Euclid, which are expected to greatly expand our knowledge of the Universe’s large-scale structure. The study, "Effort.jl: a fast and differentiable emulator for the Effective Field Theory of the Large Scale Structure of the Universe," by Marco Bonici, Guido D’Amico, Julien Bel, and Carmelita Carbone, is available in the Journal of Cosmology and Astroparticle Physics (JCAP).