Researchers at the Niels Bohr Institute have made a significant advance in quantum computing: they can now detect changes in how quickly qubits lose their quantum information far faster than before. By combining commercially available control hardware with new adaptive measurement techniques, the team can follow rapid shifts in qubit behavior that earlier methods were too slow to resolve. The advance promises to improve both our understanding and our control of these fundamental building blocks of quantum computers, paving the way for more stable and powerful quantum machines.

Qubits, the cornerstone of quantum computing, could one day outperform today’s most advanced supercomputers. However, they are extremely sensitive to their surroundings. The materials used to build qubits often contain microscopic defects whose precise nature is still under investigation. These imperfections are highly dynamic and can shift their positions hundreds of times every second. As they move, they change how quickly the qubit loses energy, and with that energy the quantum information it stores. This rate of energy loss, known as the qubit’s relaxation rate, is a critical parameter for its performance.
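
For readers who want the standard definition behind "relaxation rate": in the textbook single-exponential model (a simplification that ignores readout errors and the very fluctuations this article is about), a qubit prepared in its excited state is still found there after a waiting time t with the probability below. The symbols Γ1 and T1 are the conventional notation, not taken from the article, and the numbers in the second line are purely illustrative, not measurements from this study.

$$
P_1(t) = e^{-\Gamma_1 t}, \qquad T_1 = \frac{1}{\Gamma_1}
$$

$$
T_1 = 50\,\mu\text{s} \;\Rightarrow\; \Gamma_1 = \frac{1}{50\,\mu\text{s}} = 2\times10^{4}\,\text{s}^{-1}, \qquad P_1(50\,\mu\text{s}) = e^{-1} \approx 0.37
$$

A defect that shifts and raises Γ1 shortens T1, so the qubit is less likely to still hold its excitation after any given delay.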

Historically, conventional methods for measuring qubit performance were slow: a single assessment could take up to a minute. That is far too long to capture fluctuations that happen in fractions of a second, so scientists could only determine an average energy loss rate, a figure that masked the qubit’s true, often erratic behavior. It is like asking a strong draft horse to pull a plow while obstacles keep appearing in its path faster than it can react: however strong the animal is, the constant, unpredictable disruptions make the work difficult and inefficient.

FPGA-Powered Real-Time Qubit Control: A Paradigm Shift in Measurement

The new development comes from the Niels Bohr Institute’s Center for Quantum Devices and the Novo Nordisk Foundation Quantum Computing Programme. A team led by postdoctoral researcher Dr. Fabrizio Berritta has built a real-time adaptive measurement system that tracks changes in a qubit’s relaxation rate as they happen. The work was a collaboration with scientists from the Norwegian University of Science and Technology, Leiden University, and Chalmers University.

The approach relies on a fast classical controller that updates its estimate of a qubit’s relaxation rate within milliseconds, matching the timescale of the fluctuations themselves. Previous methods took seconds or even minutes to register a change and therefore lagged far behind. Measuring and reacting in near real time is essential for understanding, and ultimately mitigating, the effect of these rapid environmental fluctuations on qubit coherence.

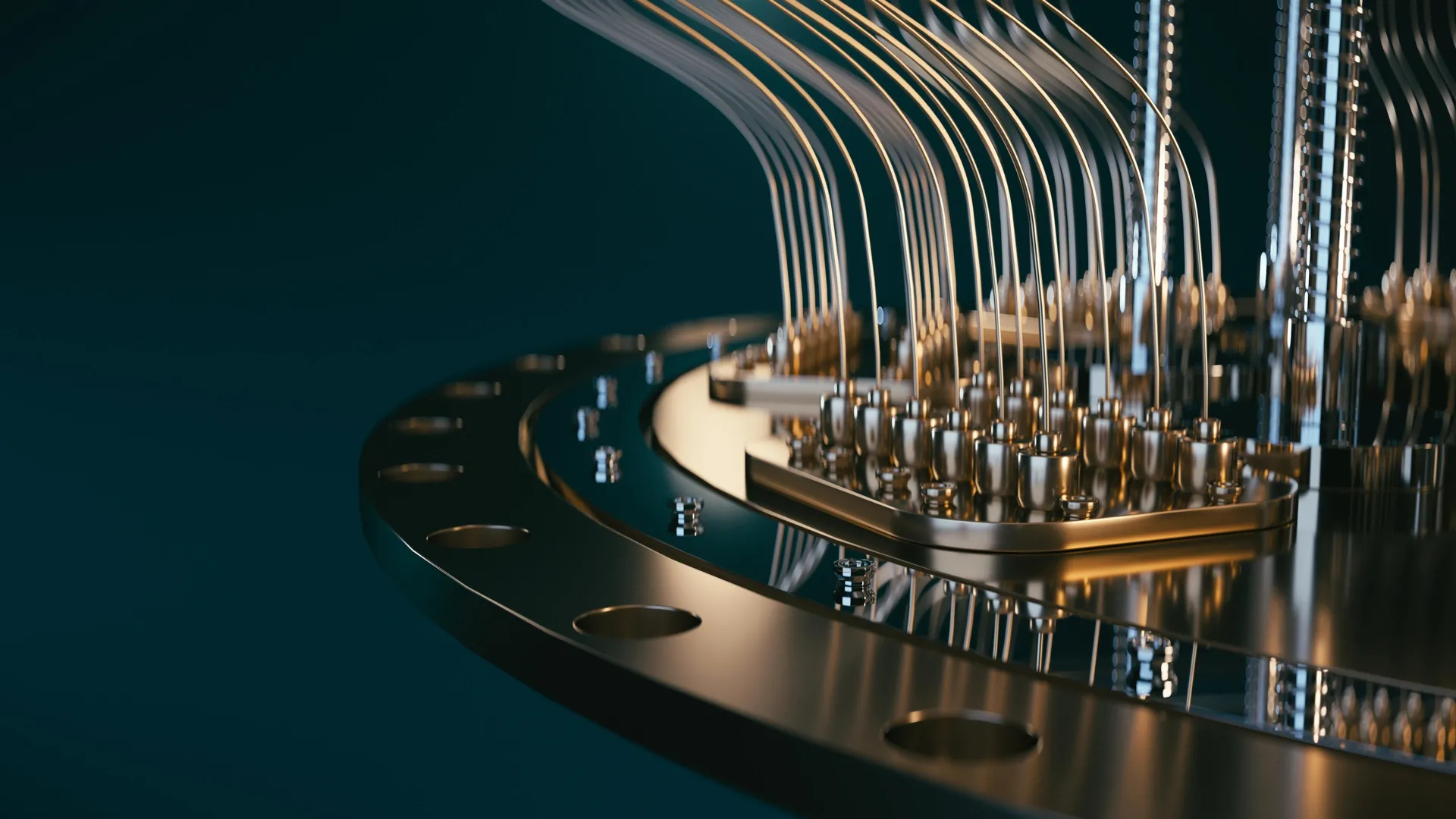

To reach this speed, the researchers used a Field Programmable Gate Array (FPGA). An FPGA is a classical chip whose digital logic can be reconfigured for a specific task, which gives it the parallelism and low, predictable latency that real-time control requires. By running the experimental procedure directly on the FPGA, the team could produce an accurate "best guess" of the qubit’s energy loss rate from only a small number of measurements. Processing the data on the controller itself avoided slow round trips to a conventional, general-purpose computer, which would have introduced unacceptable delays.
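
The idea of a "best guess" from a handful of measurements can be illustrated with a simple grid-based Bayesian estimate. The sketch below is a hypothetical Python illustration, not the team's FPGA code and not Quantum Machines' programming interface. It assumes the qubit is prepared in its excited state, left idle for a fixed delay, and read out perfectly, so each shot finds it still excited with probability exp(-Γτ). The function names, the grid range and the shot counts are all invented for this example.

```python
import math

def t1_posterior(k_excited, n_shots, tau_us, gamma_grid):
    """Grid posterior over the relaxation rate Gamma (in 1/us).

    Illustrative assumptions: flat prior over gamma_grid, qubit prepared
    in |1>, idle for tau_us, then read out perfectly, so each shot is
    still excited with probability p = exp(-Gamma * tau_us).
    """
    log_post = []
    for g in gamma_grid:
        log_p = -g * tau_us                           # log P(still excited)
        log_q = math.log(-math.expm1(-g * tau_us))    # log P(decayed), numerically stable
        # Binomial log-likelihood; the constant combinatorial factor is dropped.
        log_post.append(k_excited * log_p + (n_shots - k_excited) * log_q)
    peak = max(log_post)
    weights = [math.exp(lp - peak) for lp in log_post]  # shift by the max to avoid underflow
    total = sum(weights)
    return [w / total for w in weights]

def summarize(gamma_grid, post):
    """Return the posterior mean and standard deviation of Gamma."""
    mean = sum(g * p for g, p in zip(gamma_grid, post))
    var = sum((g - mean) ** 2 * p for g, p in zip(gamma_grid, post))
    return mean, math.sqrt(var)

# Hypothetical data: 7 of 20 shots still excited after a 25 us delay.
grid = [0.005 + i * 0.001 for i in range(196)]       # candidate Gamma values, 0.005 to 0.200 per us
post = t1_posterior(k_excited=7, n_shots=20, tau_us=25.0, gamma_grid=grid)
best = grid[max(range(len(grid)), key=lambda i: post[i])]
mean, std = summarize(grid, post)
print(f"most likely Gamma = {best:.3f}/us, mean = {mean:.3f}/us, std = {std:.3f}/us")
```

With 7 of 20 shots still excited after 25 µs, the most likely grid value is about 0.042 per µs (a T1 of roughly 24 µs), and the posterior spread shows how uncertain that guess still is after so few shots. A fixed grid and a flat prior keep the arithmetic simple and bounded, which is the kind of structure that suits fixed-size hardware; a realistic version would also fold readout errors into the likelihood.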

Programming FPGAs for such specialized tasks is demanding, but the researchers succeeded in implementing an adaptive control loop in which the controller’s internal Bayesian model is updated after every single qubit measurement. Each update refines the system’s estimate of the qubit’s current relaxation rate, so the controller continuously learns how the qubit is being affected by its noisy environment. This real-time learning is the core of the adaptive measurement strategy.
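
The loop described above can be sketched as a sequential Bayesian tracker. This is a hypothetical Python illustration under stated assumptions, not the published algorithm or the code that runs on the controller. It assumes perfect readout and a flat prior on a fixed grid of relaxation rates, and it models sudden jumps by mixing a small uniform "forgetting" weight into the posterior before each shot. The next waiting time is chosen adaptively as τ ≈ 1.594/Γ̂, the delay that maximizes the Fisher information of a single shot for the current estimate Γ̂ (the solution of 2(1 - e^(-x)) = x with x = Γτ). The class name, the forgetting weight and the simulated jump are invented for this example.

```python
import math
import random

class AdaptiveRateTracker:
    """Sequential Bayesian tracker for a fluctuating relaxation rate (sketch).

    Illustrative assumptions, not the published method:
      * perfect readout: P(excited | Gamma, tau) = exp(-Gamma * tau)
      * flat initial prior on a fixed grid of Gamma values (in 1/us)
      * sudden changes are modelled by mixing a small uniform "forgetting"
        weight into the posterior before every shot
    """

    def __init__(self, gamma_min=0.005, gamma_max=0.2, n=200, forget=0.005):
        step = (gamma_max - gamma_min) / (n - 1)
        self.grid = [gamma_min + i * step for i in range(n)]
        self.post = [1.0 / n] * n
        self.forget = forget

    def estimate(self):
        """Posterior mean of Gamma, the current 'best guess'."""
        return sum(g * p for g, p in zip(self.grid, self.post))

    def next_tau(self):
        """Adaptive delay: tau = 1.594 / Gamma maximises one shot's Fisher information."""
        return 1.594 / self.estimate()

    def update(self, tau, excited):
        """Bayesian update after a single shot taken at delay tau."""
        n = len(self.grid)
        # Forgetting step: lets probability reappear anywhere, so jumps can be followed.
        prior = [(1.0 - self.forget) * p + self.forget / n for p in self.post]
        new = []
        for g, p in zip(self.grid, prior):
            p_exc = math.exp(-g * tau)
            new.append(p * (p_exc if excited else 1.0 - p_exc))
        total = sum(new)
        self.post = [v / total for v in new]


def simulated_shot(true_gamma, tau, rng):
    """Hypothetical stand-in for one single-shot measurement on real hardware."""
    return rng.random() < math.exp(-true_gamma * tau)


rng = random.Random(7)
tracker = AdaptiveRateTracker()
history = []
for shot in range(1000):
    true_gamma = 0.02 if shot < 500 else 0.08   # simulated switch: T1 from 50 us to 12.5 us
    tau = tracker.next_tau()
    tracker.update(tau, simulated_shot(true_gamma, tau, rng))
    history.append(tracker.estimate())

print(f"before switch: {history[499]:.3f}/us, after switch: {history[-1]:.3f}/us")
```

In the simulated run, the estimate settles near the first rate and then follows the switch to the second. The forgetting weight sets the basic trade-off of any such tracker: a larger value reacts faster to jumps but gives noisier estimates, while a smaller value averages more shots but lags behind sudden changes.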

As a result, the system keeps pace with the qubit’s constantly changing environment: measurements, and the adjustments to the experimental parameters that follow them, happen on nearly the same timescale as the fluctuations themselves. This makes the system roughly one hundred times faster than any previously demonstrated method for tracking such qubit dynamics.

Beyond the technological achievement, the work has produced a scientific finding. Before these experiments, how fast fluctuations in superconducting qubits actually occur was largely unknown; the new measurements provide direct empirical evidence of that timescale. Knowing the temporal characteristics of these fluctuations is essential for developing effective error correction strategies.

Commercial Quantum Hardware Meets Advanced Control: Bridging the Gap

FPGAs themselves are not new to quantum computing research; these versatile chips have been used across science and engineering for many years. What is notable here is that the team used a commercially available FPGA-based controller, the OPX1000 from Quantum Machines. Programmed with the researchers’ new algorithms, this off-the-shelf hardware provided a powerful and accessible platform for advanced control experiments. Its programming interface uses a language that resembles Python, which many physicists already know, making such control capabilities easier for research groups worldwide to adopt.

Integrating this controller with the quantum hardware depended on close collaboration. The group at the Niels Bohr Institute, led by Associate Professor Morten Kjaergaard, worked hand in hand with researchers at Chalmers University, who designed and fabricated the quantum processing unit itself. Kjaergaard highlights the controller’s role: "The controller enables very tight integration between logic, measurements and feedforward: these components made our experiment possible." In other words, precise control over the timing and sequence of operations, combined with rapid feedback, is what made these measurements on a complex quantum system possible.

Why Real-Time Calibration Matters for Quantum Computers: The Path to Scalability

Quantum technologies promise transformative new capabilities, although practical, large-scale quantum computers are still some way off. Scientific progress often unfolds incrementally, punctuated by occasional significant leaps forward, and this result represents one such step.

By revealing these previously hidden dynamics, the findings could change how scientists test and calibrate superconducting quantum processors. Given current materials and fabrication techniques for quantum hardware, real-time monitoring and adjustment appear not just beneficial but essential for making these sensitive systems more reliable. The project also shows the value of close partnerships between academic institutions and industry, and of creative uses of readily available technology.

"Nowadays, in quantum processing units in general, the overall performance is not determined by the best qubits, but by the worst ones: those are the ones we need to focus on," explains Dr. Berritta. This is central to the challenge of scaling quantum computers, because a processor is only as good as its weakest links. He continues, "The surprise from our work is that a ‘good’ qubit can turn into a ‘bad’ one in fractions of a second, rather than minutes or hours." Such rapid changes show how dynamic qubit decoherence is, and why equally rapid interventions are needed.

The new algorithm and the fast control hardware together address this problem: "With our algorithm, the fast control hardware can pinpoint which qubit is ‘good’ or ‘bad’ basically in real time. We can also gather useful statistics on the ‘bad’ qubits in seconds instead of hours or days." Diagnosing problematic qubits this much faster is a significant practical advantage for quantum hardware development, because it allows much shorter iteration cycles when improving qubit designs and control protocols.

However, the work of fully understanding and controlling quantum systems is far from over. Dr. Berritta concludes with a note on ongoing research: "We still cannot explain a large fraction of the fluctuations we observe. Understanding and controlling the physics behind such fluctuations in qubit properties will be necessary for scaling quantum processors to a useful size." The fundamental physics governing qubit behavior is still being unraveled, and understanding and manipulating these subtle phenomena remains a central challenge on the way to fault-tolerant, scalable quantum computers. This work provides a powerful new tool for tackling exactly that challenge, offering unprecedented insight into how qubits behave from moment to moment.