Scientists at the USC Viterbi School of Engineering and the School of Advanced Computing have created artificial neurons that replicate the electrochemical behavior of real brain cells. The work, published in Nature Electronics, is a significant step forward for neuromorphic computing, the field dedicated to designing hardware that emulates the architecture and function of the human brain. The researchers say the advance could shrink chips and cut energy consumption by orders of magnitude, and could move artificial intelligence closer to artificial general intelligence (AGI).

For decades, artificial intelligence has relied on digital processors and earlier generations of neuromorphic chips that simulate brain activity through abstract mathematical models. These approaches, though powerful, remain a level removed from the biology of neural computation. The new artificial neurons instead physically reproduce the mechanisms by which real neurons operate. Just as natural brain activity is initiated and propagated by chemical signals, the artificial neurons use actual chemical interactions to trigger and drive computation. They are not symbolic representations of neural function but physical devices that work on principles similar to those of their biological counterparts.

A New Class of Brain-Like Hardware: Beyond Electrons to Ions

The research, led by Professor Joshua Yang of USC’s Department of Computer and Electrical Engineering, builds on his earlier work on artificial synapses, carried out more than a decade ago. It centers on a device called a "diffusive memristor." The team describes how these components could form the basis of a new generation of chips that complement and augment traditional silicon-based electronics. Whereas conventional silicon systems compute with the flow of electrons, Yang’s diffusive memristors rely on the motion of atoms instead. That atomic movement more closely mirrors how biological neurons transmit information, pointing toward chips that are smaller, more energy-efficient, and closer to the brain in how they process information, and potentially toward AGI.

Communication in the brain depends on both electrical and chemical signals passed between nerve cells. When an electrical impulse reaches the end of a neuron, at a junction called a synapse, it is converted into a chemical signal. That chemical messenger crosses the synaptic gap to carry information to the next neuron, where it is converted back into an electrical impulse that travels through the receiving cell. Professor Yang and his colleagues have replicated this multi-stage process in their artificial devices with a high degree of fidelity. A key advantage of the design is its size: each artificial neuron occupies the footprint of a single transistor, whereas older neuromorphic designs often required tens or even hundreds of transistors to achieve comparable, though less biologically accurate, functionality.
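The footprint comparison can be turned into a quick back-of-the-envelope calculation. The sketch below is only illustrative: the single-transistor figure comes from the article, while 10 and 100 are assumed stand-ins for "tens or even hundreds" of transistors, and the function name is hypothetical.

```python
import math

# Transistors per artificial neuron. The value 1 is from the article;
# 10 and 100 are illustrative assumptions for "tens or even hundreds".
NEW_FOOTPRINT = 1
OLDER_FOOTPRINTS = [10, 100]


def footprint_reduction(old_transistors: int, new_transistors: int = NEW_FOOTPRINT) -> float:
    """Return how many times smaller the new neuron is, in transistor footprints."""
    return old_transistors / new_transistors


for old in OLDER_FOOTPRINTS:
    factor = footprint_reduction(old)
    orders = math.log10(factor)
    print(f"{old} transistors per neuron -> {factor:.0f}x smaller ({orders:.0f} order(s) of magnitude)")
```

Under these assumptions, the per-neuron saving alone is one to two orders of magnitude, before any savings elsewhere on the chip are counted.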

Biological neurons generate electrical impulses using charged particles known as ions. The human brain relies on ions such as potassium, sodium, and calcium to produce this activity. These ions carry the electrical charge that enables the rapid, complex signaling underlying cognitive function.

Using Silver Ions to Recreate Brain Dynamics: The Power of Diffusion

In the new study, Professor Yang, who also directs the USC Center of Excellence on Neuromorphic Computing, used silver ions embedded in oxide materials. The diffusion of these silver ions generates electrical pulses that emulate the processes by which the brain carries out functions such as learning, motor control, and planning.

"Even though it’s not exactly the same ions in our artificial synapses and neurons, the physics governing the ion motion and the dynamics are very similar," Professor Yang explains.

Professor Yang also explained the choice of material: "Silver is easy to diffuse and gives us the dynamics we need to emulate the biosystem so that we can achieve the function of the neurons, with a very simple structure." The device that makes this brain-like chip architecture possible is called a "diffusive memristor" because of the ion motion and dynamic diffusion that occur when silver is used.
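For readers who want a rough intuition for how a build-up that also leaks away can produce pulses, the sketch below uses a standard leaky integrate-and-fire model. This is a textbook abstraction, not the device physics or the model reported by the USC team; the class name, the threshold, and the leak value are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class LeakyNeuron:
    """Textbook leaky integrate-and-fire neuron (illustrative; not the USC device model)."""

    threshold: float = 1.0  # state level at which the neuron fires (arbitrary units, assumed)
    leak: float = 0.9       # fraction of state kept per step; stands in for spontaneous relaxation
    state: float = 0.0      # accumulated input, loosely analogous to ion build-up

    def step(self, input_current: float) -> bool:
        """Integrate one input sample and return True if the neuron fires."""
        self.state = self.state * self.leak + input_current
        if self.state >= self.threshold:
            self.state = 0.0  # reset after the pulse
            return True
        return False


def spike_train(inputs):
    """Run a fresh neuron over a sequence of inputs and return its firing pattern."""
    neuron = LeakyNeuron()
    return [neuron.step(x) for x in inputs]


weak = spike_train([0.05] * 20)   # weak input leaks away before reaching threshold
strong = spike_train([0.4] * 20)  # strong input accumulates and produces regular pulses
print("weak input spikes:", sum(weak))
print("strong input spikes:", sum(strong))
```

The point of the toy model is only that a state which accumulates and relaxes can turn a steady input into discrete pulses, and that weak inputs fade without firing.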

Professor Yang said the team chose ion dynamics as the basis for building artificial intelligent systems "because that is what happens in the human brain, for a good reason, and since the human brain is the ‘winner in evolution, the most efficient intelligent engine.’" In this view, biological intelligence is the benchmark for efficient information processing.

"It’s more efficient," Professor Yang says.

Why Efficiency Matters in AI Hardware: The Energy Bottleneck

Professor Yang argues that the main limitation of modern computing is inefficiency, not a lack of raw processing power. "It’s not that our chips or computers are not powerful enough for whatever they are doing. It’s that they aren’t efficient enough. They use too much energy," he explains. The concern is especially pressing given the energy consumed by today’s large-scale artificial intelligence systems, which need immense power to process the vast datasets they are trained on.

Professor Yang drew a contrast with the biological brain: "Our existing computing systems were never intended to process massive amounts of data or to learn from just a few examples on their own. One way to boost both energy and learning efficiency is to build artificial systems that operate according to principles observed in the brain." The remark points to the need for a fundamentally different approach to AI hardware design.

Electrons are fast, which suits rapid computation, but Professor Yang described their limits for brain emulation: "Ions are a better medium than electrons for embodying principles of the brain. Because electrons are lightweight and volatile, computing with them enables software-based learning rather than hardware-based learning, which is fundamentally different from how the brain operates." In other words, learning in electron-based systems lives largely in software algorithms, unlike the embodied learning of biological systems.

By contrast, he says, "The brain learns by moving ions across membranes, achieving energy-efficient and adaptive learning directly in hardware, or more precisely, in what people may call ‘wetware’." "Wetware" here refers to the biological substrate of intelligence, where learning is an intrinsic property of the physical system.

To illustrate the point, Professor Yang offered an example: a young child can learn to recognize handwritten digits after seeing only a handful of examples of each numeral, whereas a conventional computer typically needs thousands to reach the same proficiency. The human brain does this while consuming only about 20 watts of power, a tiny fraction of the megawatts drawn by today’s supercomputers.
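The size of that gap is easy to work out. In the sketch below, the 20-watt figure is the one quoted in the article; the 20-megawatt supercomputer is an assumed round number chosen only to stand in for "megawatts", and the function names are hypothetical.

```python
BRAIN_POWER_W = 20.0          # figure quoted in the article
SUPERCOMPUTER_POWER_W = 20e6  # assumption: 20 MW, a round number standing in for "megawatts"


def power_ratio(machine_watts: float, brain_watts: float = BRAIN_POWER_W) -> float:
    """Return how many times more power the machine draws than the brain."""
    return machine_watts / brain_watts


def energy_kwh(power_watts: float, hours: float) -> float:
    """Convert a constant power draw over a duration into kilowatt-hours."""
    return power_watts * hours / 1000.0


print(f"power ratio: {power_ratio(SUPERCOMPUTER_POWER_W):,.0f}x")
print(f"brain, one day: {energy_kwh(BRAIN_POWER_W, 24):.2f} kWh")
print(f"supercomputer, one day: {energy_kwh(SUPERCOMPUTER_POWER_W, 24):,.0f} kWh")
```

With these assumed numbers the machine draws a million times more power than the brain; a different supercomputer figure would change the ratio but not the overall picture.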

Potential Impact and Next Steps: Towards Sustainable AI

Professor Yang and his team see the technology as a step toward replicating natural intelligence. He acknowledged a practical hurdle, however: the silver used in the current experiments is not readily compatible with conventional semiconductor manufacturing. Future research will therefore explore alternative ionic materials that can achieve similar effects while remaining compatible with standard fabrication processes.

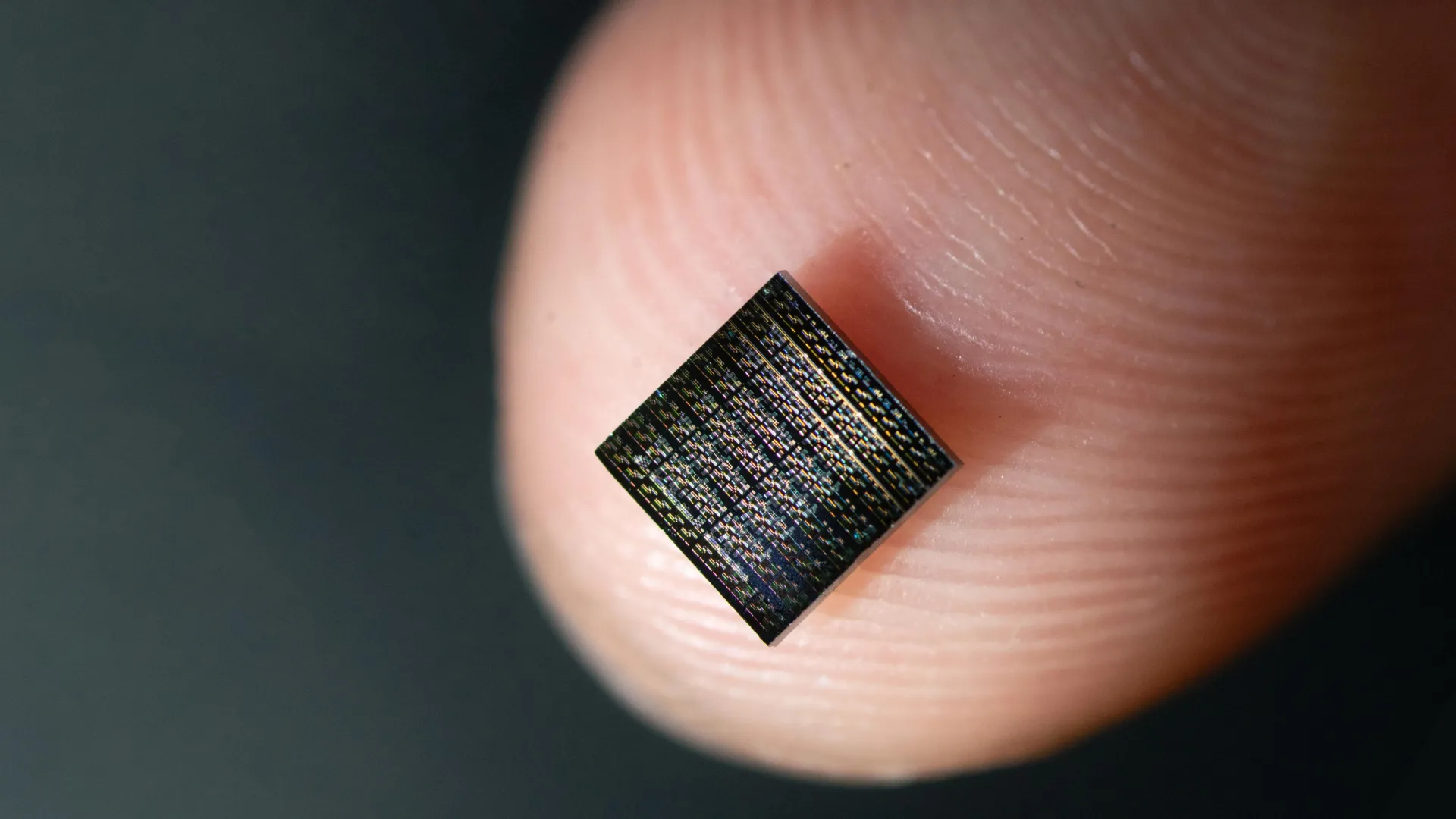

The diffusive memristors developed by the USC team are efficient in both energy and size. A typical smartphone, for instance, contains several chips that together hold billions of transistors, which switch on and off to perform calculations.

"Instead [with this innovation], we just use a footprint of one transistor for each neuron. We are designing the building blocks that eventually [lead] us to reduce the chip size by orders of magnitude, reduce the energy consumption by orders of magnitude, so it can be sustainable to perform AI in the future, with [a] similar level of intelligence without burning energy that we cannot sustain," Professor Yang said.

Having demonstrated capable and compact building blocks, both artificial synapses and neurons, the team next plans to integrate large numbers of them and test how closely the resulting systems can replicate the brain’s efficiency and capabilities. "Even more exciting," Professor Yang concluded, "is the prospect that such brain-faithful systems could help us uncover new insights into how the brain itself works." The pursuit of artificial intelligence may thus, in turn, deepen our understanding of biological intelligence.