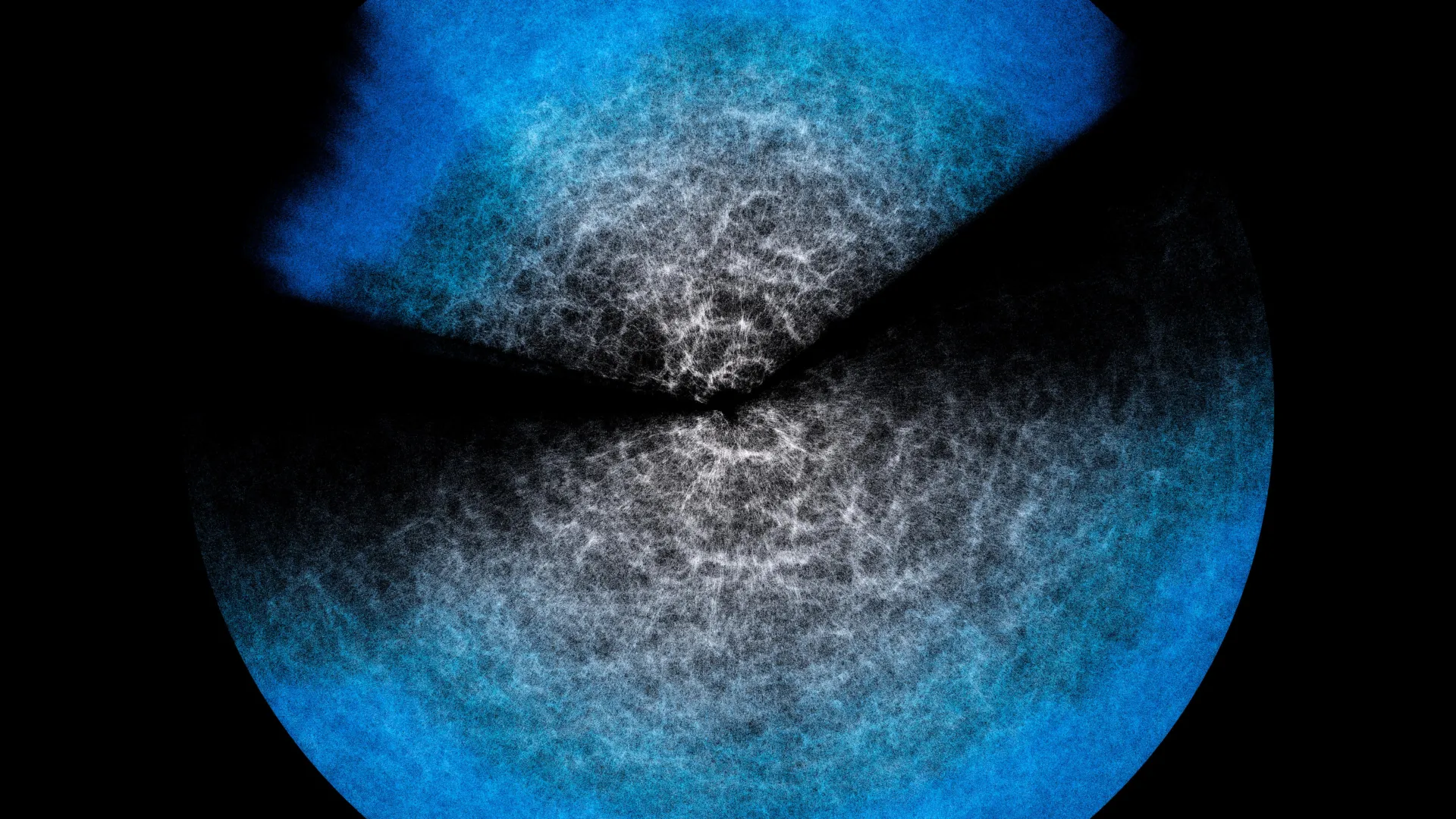

The universe is a canvas of almost unimaginable scale: galaxies, vast in their own right, shrink to mere specks when set against the cosmic expanse. These celestial islands, numbering in the billions, gather into clusters, which in turn aggregate into colossal superclusters. Together they form vast filaments interlaced with immense voids. This grand, three-dimensional skeletal structure is often referred to as the "cosmic web." Contemplating such immensity can induce a sense of vertigo, and it prompts a crucial question: how do scientists comprehend, and even "visualize," something so vast? The answer lies in combining observations with scientific modeling.

Understanding this cosmic web is an arduous yet exhilarating endeavor. Astronomers and cosmologists meticulously combine the fundamental laws of physics that govern the universe with an ever-increasing torrent of data harvested from sophisticated astronomical instruments. This confluence of empirical observation and theoretical framework allows them to construct robust theoretical models. One such powerful framework is the Effective Field Theory of Large-Scale Structure, or EFTofLSS. These models, when infused with observational data, statistically encapsulate the intricate patterns and distributions that define the cosmic web. By analyzing these statistical descriptions, scientists can then derive and estimate the key parameters that characterize its fundamental properties.

However, the very sophistication that makes models like EFTofLSS so powerful also presents a significant hurdle: they demand substantial computational resources and considerable processing time. Astronomical datasets are growing rapidly with each new survey, so analyses need methods that lighten the computational burden without compromising the precision of the scientific conclusions. This is where emulators come in. An emulator is a computational tool designed to "imitate" the outputs of a complex theoretical model while running far faster, offering a much quicker route to generating predictions and analyzing data.

Any "shortcut" in scientific computation raises the question of whether accuracy is being sacrificed. To address this concern, an international team of researchers from institutions including the Italian National Institute for Astrophysics (INAF), the University of Parma in Italy, and the University of Waterloo in Canada tested an emulator they designed, named Effort.jl, and published the results in the Journal of Cosmology and Astroparticle Physics (JCAP). They found that Effort.jl delivers essentially the same level of correctness as the theoretical model it emulates. In some cases it could even include parts of the analysis that are normally trimmed from the original model to save computing time. Crucially, Effort.jl does this in a matter of minutes on a standard laptop rather than requiring a supercomputer.

Marco Bonici, a researcher at the University of Waterloo and the lead author of the study, eloquently illustrates the underlying principle with a relatable analogy. "Imagine wanting to study the contents of a glass of water at the level of its microscopic components, the individual atoms, or even smaller," Bonici explains. "In theory, you can. But if we wanted to describe in detail what happens when the water moves, the explosive growth of the required calculations makes it practically impossible. However," he continues, "you can encode certain properties at the microscopic level and see their effect at the macroscopic level, namely the movement of the fluid in the glass. This is what an effective field theory does, that is, a model like EFTofLSS, where the water in my example is the Universe on very large scales and the microscopic components are small-scale physical processes." This analogy effectively captures how EFTofLSS simplifies complex, small-scale physics into overarching principles that govern the large-scale structure of the cosmos.

A theoretical model such as EFTofLSS acts as a statistical interpreter of the vast datasets collected by astronomical observations. Observational data is fed into the code, which then generates a "prediction" of the universe’s large-scale structure. However, as Bonici highlighted, this process is computationally intensive and time-consuming. Given the volume of astronomical data already available, and the even greater influx expected from surveys like DESI, which has already begun releasing its data, and the Euclid mission, it is simply not practical to perform these exhaustive calculations every single time an analysis is needed.

"This is why we now turn to emulators like ours, which can drastically cut time and resources," Bonici elaborates. An emulator functions by mimicking the predictive capabilities of the original model. At its core lies a sophisticated neural network, a powerful form of artificial intelligence, that learns to establish a direct association between input parameters and the model’s pre-computed predictions. The neural network is trained on a substantial set of outputs generated by the original model. Once trained, it gains the remarkable ability to generalize, meaning it can accurately predict the model’s response for novel combinations of parameters it has not explicitly encountered during training. It is crucial to understand that the emulator does not possess an intrinsic "understanding" of the underlying physics itself. Instead, it becomes exceptionally adept at recognizing and replicating the theoretical model’s responses, thereby anticipating its output for any given set of input parameters.

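The train-then-generalize idea can be sketched in a few lines of Python. This is only an illustration under loud assumptions: `slow_model` is a made-up toy function rather than EFTofLSS, and a least-squares polynomial fit stands in for the neural network, so nothing here reflects how Effort.jl is actually implemented. All function names are hypothetical.

```python
import math

def slow_model(theta):
    """Toy stand-in for an expensive theory code (NOT EFTofLSS)."""
    return math.exp(-theta) * math.sin(3.0 * theta)

def features(theta, degree=5):
    """Polynomial features 1, theta, theta^2, ..., theta^degree."""
    return [theta ** k for k in range(degree + 1)]

def solve(a, b):
    """Solve the linear system a x = b by Gaussian elimination with pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        tail = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - tail) / m[r][r]
    return x

def train_emulator(thetas, outputs, degree=5):
    """Fit a polynomial surrogate to pre-computed model outputs (normal equations)."""
    rows = [features(t, degree) for t in thetas]
    n = degree + 1
    ata = [[sum(r[i] * r[j] for r in rows) for j in range(n)] for i in range(n)]
    aty = [sum(r[i] * y for r, y in zip(rows, outputs)) for i in range(n)]
    coeffs = solve(ata, aty)
    return lambda theta: sum(c * f for c, f in zip(coeffs, features(theta, degree)))

# "Training set": parameter values and the slow model's pre-computed outputs.
train_thetas = [i / 20 for i in range(21)]
train_outputs = [slow_model(t) for t in train_thetas]
emulator = train_emulator(train_thetas, train_outputs)

# Query a parameter value that was never part of the training set.
unseen = 0.333
error = abs(emulator(unseen) - slow_model(unseen))
print(f"emulator vs. model at theta={unseen}: abs. error = {error:.2e}")
```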
The distinctive feature of Effort.jl is how it optimizes the training phase. It builds in existing knowledge about how predictions change when parameters are adjusted, so the neural network does not have to "re-learn" these relationships from scratch. Effort.jl also uses gradients, that is, calculations that quantify "how much and in which direction" predictions shift when a parameter is changed by a tiny amount. Together, these choices let the emulator learn from significantly fewer examples, and the resulting reduction in computational demand is what allows Effort.jl to run efficiently on smaller, more accessible machines.
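
To make the notion of a gradient concrete, here is a minimal Python sketch. It assumes a made-up one-parameter `prediction` function (not an EFTofLSS quantity) and compares its exact derivative with a finite-difference estimate. How Effort.jl actually computes and uses gradients is not described here, so treat every name below as hypothetical.

```python
import math

def prediction(theta):
    """Toy one-parameter prediction (illustrative only, not EFTofLSS)."""
    return math.exp(-theta) * math.sin(3.0 * theta)

def analytic_gradient(theta):
    """Exact derivative of the toy prediction with respect to theta."""
    return math.exp(-theta) * (3.0 * math.cos(3.0 * theta) - math.sin(3.0 * theta))

def finite_difference_gradient(f, theta, step=1e-6):
    """Central-difference estimate of df/dtheta."""
    return (f(theta + step) - f(theta - step)) / (2.0 * step)

theta0 = 0.4
grad = analytic_gradient(theta0)
approx = finite_difference_gradient(prediction, theta0)

# The gradient says how much, and in which direction, the prediction moves
# when the parameter is nudged by a small amount delta.
delta = 1e-3
linear_estimate = prediction(theta0) + grad * delta
print(f"gradient: {grad:.6f} (finite difference: {approx:.6f})")
```

The sign of `grad` gives the direction of the shift and its magnitude gives the size, which is why a single gradient evaluation carries more information than a single function value.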

Any tool designed for scientific analysis, especially one that offers a computational shortcut, requires rigorous validation. If the emulator does not "understand" the physics, how can we be sure that its accelerated predictions match what the original, more computationally demanding model would produce? The study in JCAP addresses this point directly. It shows that Effort.jl’s predictions, tested against both simulated cosmological data and real astronomical observations, agree closely with those of the EFTofLSS model. "And in some cases," Bonici notes, "where with the model you have to trim part of the analysis to speed things up, with Effort.jl we were able to include those missing pieces as well." In other words, because it is so much faster, Effort.jl can sometimes support a more complete analysis than is practical with the original model.
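
The basic logic of such a validation can be sketched as follows: evaluate the fast surrogate and the reference model on held-out points and check the worst-case disagreement against a tolerance. This is not the procedure used in the JCAP study. The toy `reference_model`, the lookup-table "emulator", and the tolerance are all assumptions chosen for illustration.

```python
import math

def reference_model(theta):
    """Toy stand-in for the slow, trusted model (NOT EFTofLSS)."""
    return math.exp(-theta) * math.sin(3.0 * theta)

def make_table_emulator(model, lo, hi, n_nodes):
    """Hypothetical fast surrogate: linear interpolation in a pre-computed table."""
    step = (hi - lo) / (n_nodes - 1)
    nodes = [lo + i * step for i in range(n_nodes)]
    values = [model(t) for t in nodes]

    def emulator(theta):
        # Locate the table interval containing theta, then interpolate.
        i = min(int((theta - lo) / step), n_nodes - 2)
        weight = (theta - nodes[i]) / step
        return (1.0 - weight) * values[i] + weight * values[i + 1]

    return emulator

def max_abs_error(emulator, model, points):
    """Worst-case disagreement between surrogate and model on the given points."""
    return max(abs(emulator(p) - model(p)) for p in points)

emulator = make_table_emulator(reference_model, 0.0, 1.0, 101)

# Held-out points sit halfway between table nodes, so none was used to build it.
held_out = [0.005 + 0.01 * k for k in range(100)]
worst = max_abs_error(emulator, reference_model, held_out)

tolerance = 1e-3  # assumed accuracy requirement, chosen for illustration
passed = worst < tolerance
print(f"worst-case error {worst:.2e}, within tolerance: {passed}")
```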

Effort.jl is therefore a valuable tool for cosmologists and astrophysicists as they prepare to analyze the forthcoming data releases from experiments like DESI and Euclid, which promise to significantly expand our knowledge of the universe’s large-scale structure. The study, titled "Effort.jl: a fast and differentiable emulator for the Effective Field Theory of the Large Scale Structure of the Universe," authored by Marco Bonici, Guido D’Amico, Julien Bel, and Carmelita Carbone, is published in the Journal of Cosmology and Astroparticle Physics (JCAP).