Qubits, the fundamental building blocks of quantum computers, hold the promise of revolutionizing computation by tackling problems far beyond the reach of even the most powerful supercomputers today. However, their extraordinary potential comes with extreme fragility. The very materials that enable qubits to store and process quantum information are often riddled with minuscule defects. These microscopic imperfections, though seemingly insignificant, are in constant motion, shifting their positions hundreds of times every second. As the defects move, they strongly influence the qubit’s behavior, dictating how quickly it loses its energy and, with it, the quantum information it stores. This energy loss, known as relaxation, is one form of decoherence, a primary obstacle to building functional quantum computers.

Historically, the methods used to assess qubit performance have been far too slow. Standard testing procedures, which need up to a minute to gather sufficient data, could not capture the much faster dynamics of these internal fluctuations. Scientists were therefore largely limited to measuring an average energy loss rate. That average provides a general benchmark, but it hides the true, often unstable behavior of individual qubits. It is like judging a strong workhorse pulling a plow through a field where obstacles appear faster than the animal, or any observer, can react: the horse may be perfectly capable, yet the constant, unpredictable disruptions make its task far harder and its real performance difficult to gauge.

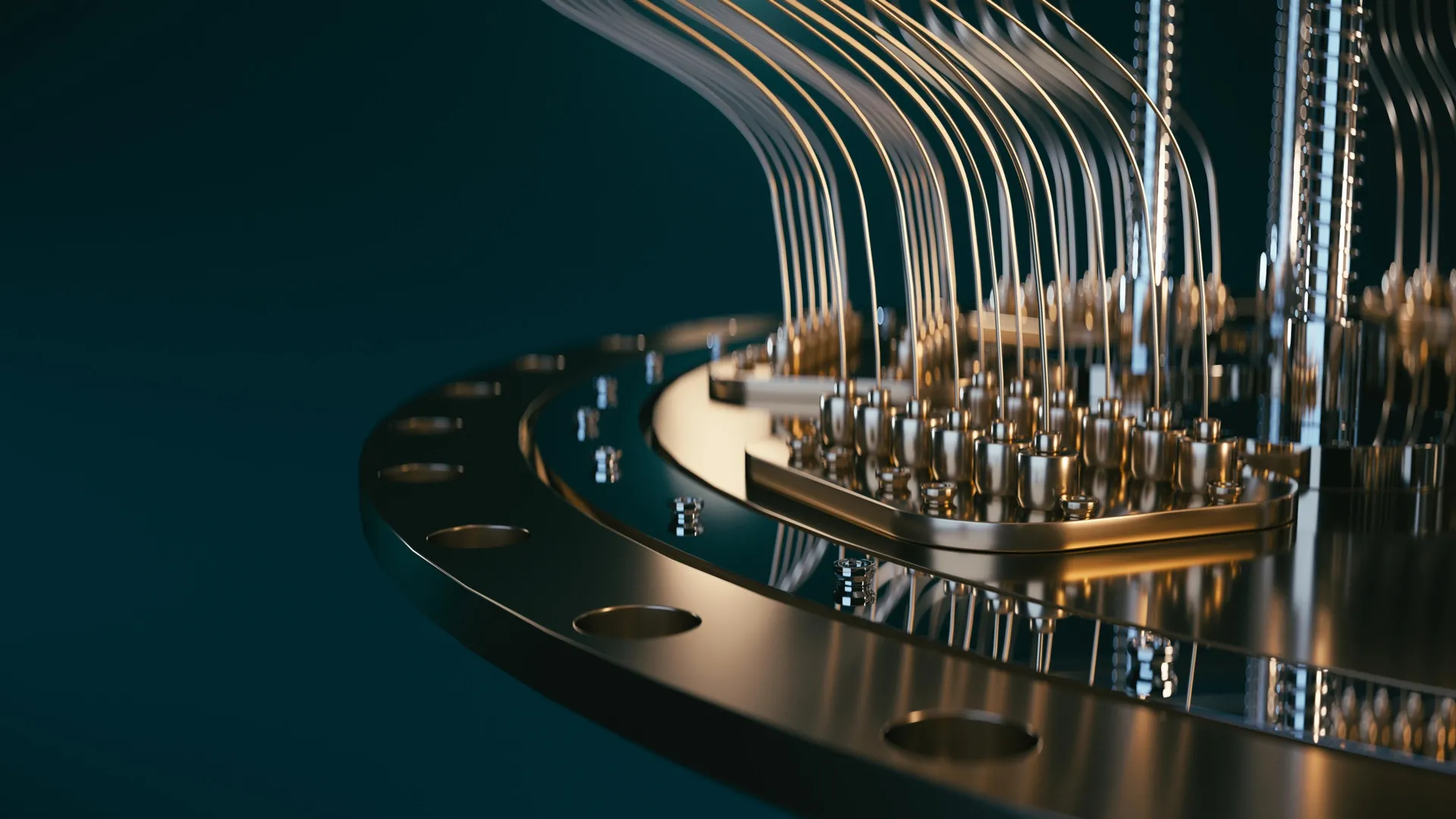

This is where the work of the Niels Bohr Institute’s Center for Quantum Devices and the Novo Nordisk Foundation Quantum Computing Programme, led by postdoctoral researcher Dr. Fabrizio Berritta, comes in. The team has built a real-time adaptive measurement system that tracks changes in a qubit’s energy loss (relaxation) rate as they occur. The project was carried out in collaboration with scientists from the Norwegian University of Science and Technology, Leiden University, and Chalmers University.

At the core of the approach is a fast classical controller that updates its estimate of a qubit’s relaxation rate within milliseconds. This speed is the crucial advance, because it matches the timescale on which the qubit’s relaxation rate itself fluctuates. Older methods took seconds or even minutes to register a change, so they could not capture how the qubit actually behaves from moment to moment.

To reach this speed, the researchers used a Field Programmable Gate Array (FPGA), a classical chip whose circuitry can be configured for very fast, parallel operations. Because the experiment runs directly on the FPGA, the controller can produce an accurate "best guess" of the qubit’s energy loss rate from only a small number of measurements. Processing the data on the controller itself removes the bottleneck of transferring it to a conventional computer for analysis, a step that inevitably introduces significant delays.

Programming FPGAs for such specialized tasks is demanding, but the researchers managed to update the controller’s internal Bayesian model after every single qubit measurement. In a Bayesian model, each new measurement outcome is used to revise a probability distribution over the possible values of the relaxation rate, so the estimate is continually refined as data arrive. As a result, the controller can keep pace with the qubit’s constantly changing environment: measurements and adjustments take place on nearly the same timescale as the fluctuations themselves, making the system roughly one hundred times faster than any previously demonstrated method.
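The idea of refining a Bayesian estimate after every shot can be illustrated with a short simulation. This is not the team’s FPGA implementation; it is a minimal Python/NumPy sketch under stated assumptions: the qubit is prepared in its excited state, left idle for a wait time `tau`, and read out without error, so the probability of still finding it excited is exp(-Γ·tau). The relaxation rate Γ is represented on a hypothetical grid of candidate values, the wait time is chosen adaptively from the current estimate, and a hypothetical `forget` parameter leaks a little probability back toward the prior so the estimate can follow sudden jumps. The function names `bayes_update` and `track_relaxation_rate` are illustrative only.

```python
import numpy as np


def bayes_update(posterior, gamma_grid, tau, excited):
    """One Bayesian update of a gridded posterior over the relaxation rate.

    Assumed toy model (not the published method): qubit prepared in |1>,
    idle for `tau`, perfect readout, so P(still excited | gamma) = exp(-gamma * tau).
    """
    p_excited = np.exp(-gamma_grid * tau)
    likelihood = p_excited if excited else 1.0 - p_excited
    posterior = posterior * likelihood
    return posterior / posterior.sum()  # renormalise to a probability distribution


def track_relaxation_rate(true_gammas, gamma_grid, rng, forget=0.01):
    """Simulate adaptive single-shot tracking of a (possibly changing) rate.

    `true_gammas[k]` is the simulated true rate during shot k.
    Returns the posterior-mean estimate of gamma after every shot.
    """
    uniform = np.full(gamma_grid.size, 1.0 / gamma_grid.size)
    posterior = uniform.copy()
    estimates = np.empty(len(true_gammas))
    for k, true_gamma in enumerate(true_gammas):
        gamma_est = float(np.dot(posterior, gamma_grid))
        # Adaptive wait time: for this model, tau ~ 1.59 / gamma maximises the
        # Fisher information carried by a single excited/ground outcome.
        tau = 1.59 / gamma_est
        # Simulated single-shot measurement of the qubit.
        excited = rng.random() < np.exp(-true_gamma * tau)
        posterior = bayes_update(posterior, gamma_grid, tau, excited)
        # Hypothetical forgetting step: mix in a small amount of the prior so
        # old evidence cannot pin the estimate after the true rate jumps.
        posterior = (1.0 - forget) * posterior + forget * uniform
        estimates[k] = np.dot(posterior, gamma_grid)
    return estimates


if __name__ == "__main__":
    rng = np.random.default_rng(seed=1)
    # Candidate rates in 1/us, i.e. T1 between 5 us and 500 us (illustrative range).
    gamma_grid = np.geomspace(0.002, 0.2, 400)
    # Illustrative scenario: T1 jumps from 50 us to 12.5 us after 300 shots.
    true_gammas = np.r_[np.full(300, 0.02), np.full(300, 0.08)]
    est = track_relaxation_rate(true_gammas, gamma_grid, rng)
    print(f"estimate before jump: {est[299]:.4f} /us, after: {est[-1]:.4f} /us")
```

In this toy model the factor 1.59 comes from maximizing x^2 / (e^x - 1) with x = Γ·tau, the per-shot Fisher information of an exponential decay probed with a single yes/no readout. A real controller would also have to account for readout and state-preparation errors, which the sketch ignores.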

Beyond speed and accuracy, the research has also revealed something new about the qubits themselves. Scientists had long been uncertain how quickly fluctuations occur in superconducting qubits. These experiments now provide direct, empirical measurements of that timescale.

The work also shows what can be achieved by pairing quantum processors with existing, commercially available control hardware. The researchers used an FPGA-based controller, the OPX1000 from Quantum Machines, which can be programmed in a Python-like language. Because Python is widely used among physicists, this familiarity lowers the barrier to entry and makes the technology accessible to research groups around the world.

Integrating the controller with state-of-the-art quantum hardware relied on close collaboration between the research group at the Niels Bohr Institute, led by Associate Professor Morten Kjærgaard, and Chalmers University, where the quantum processing unit itself was designed and fabricated. "The controller enables extremely tight integration between logic, measurements, and feedforward," states Morten Kjærgaard. "These integrated components were absolutely essential in making our experiment possible."

Real-time calibration is a significant step toward practical quantum computers. The promise of quantum technologies is immense, but large-scale, practical machines are still under development, and progress in the field usually comes in incremental steps, with only occasional leaps that reshape the research landscape.

By revealing these previously hidden dynamics, the findings change how scientists approach the testing and calibration of superconducting quantum processors. With today’s materials and fabrication techniques, the ability to monitor and adjust qubit behavior in real time appears indispensable for making qubits more reliable and reducing errors. The results also underline the value of strong partnerships between academia and industry, and of a willingness to find creative uses for existing technology.

"Nowadays, in quantum processing units in general, the overall performance is not determined by the best qubits, but by the worst ones; those are the ones we need to focus on," explains Fabrizio Berritta. "The surprise from our work is that a ‘good’ qubit can turn into a ‘bad’ one in fractions of a second, rather than minutes or hours. With our algorithm, the fast control hardware can pinpoint which qubit is ‘good’ or ‘bad’ basically in real time. We can also gather useful statistics on the ‘bad’ qubits in seconds instead of hours or days."

However, the journey is far from over. "We still cannot explain a large fraction of the fluctuations we observe," Berritta admits. "Understanding and controlling the physics behind such fluctuations in qubit properties will be necessary for scaling quantum processors to a useful size." Real-time tracking of qubit fluctuations is a major step, but it also shows how much about these fluctuations, and the material defects behind them, remains to be understood before quantum processors can reach their full potential.