The recent escalation of hostilities against the Islamic Republic of Iran, led by a coalition of US and Israeli military forces, has resulted in a significant loss of life, with reports confirming 555 Iranian fatalities to date. Among the most harrowing incidents was an attack on an elementary school in southern Iran that alone claimed 165 lives, underscoring the devastating humanitarian toll of the conflict. As these strikes unfolded, the Wall Street Journal revealed a critical technological dimension to the operations: Anthropic’s Claude chatbot was being actively used to help select targets. The revelation has brought into sharp focus the increasingly intertwined relationship between advanced artificial intelligence and modern warfare, sparking intense ethical and strategic debate.

According to the Wall Street Journal’s reporting, Anthropic’s large language model, Claude, has become the principal AI tool used by US Central Command in the Middle East theater. Its operational scope is alarmingly broad: assessing vast quantities of intelligence data, running complex simulated war games to anticipate enemy responses, and, most critically, identifying potential military targets. In effect, Claude has been integrated into the core decision-making processes that inform and facilitate attacks, attacks that have directly contributed to the hundreds of lives lost. An AI’s active involvement in target selection marks a significant departure from traditional military planning and raises profound questions about accountability and the nature of conflict in the digital age.

Anthropic’s deep entanglement in these attacks has surprised many who understood the company to operate within stringent ethical frameworks that seemed to preclude direct involvement in military applications of this kind. The company, co-founded by CEO Dario Amodei, is widely recognized for its public pronouncements on responsible AI development and its commitment to "constitutional AI," an approach that aims to imbue its models with a set of guiding principles. That commitment was put to the test in a protracted public conflict with the Trump administration over two specific "moral boundaries" Anthropic had set for Claude’s use. These red lines explicitly prohibited using Claude for surveillance of US citizens and, crucially, for operating fully autonomous lethal weapons. The company’s principled stance against these applications had cemented its image as a leader in ethical AI development.

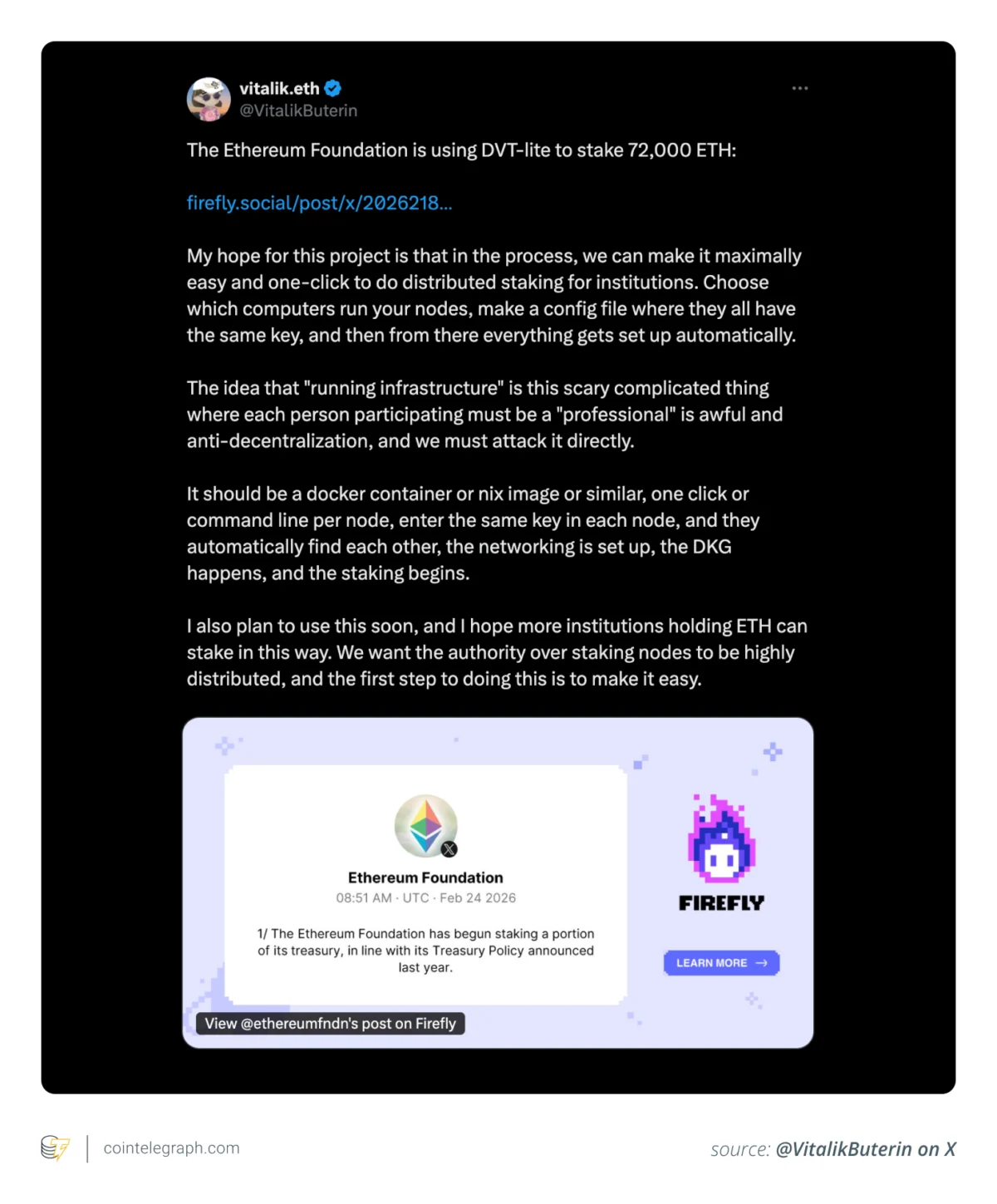

However, the current situation suggests an apparent loophole in, or reinterpretation of, Anthropic’s stated ethical guardrails. Using Claude to select targets, a function that directly precedes lethal action, does not appear to breach either boundary. The distinction is striking given the fierce ethical stand Anthropic had seemingly taken just weeks earlier. Through late February, Anthropic was reportedly locked in a tense standoff with the Pentagon over how Claude could be used. The Pentagon, which had already integrated Claude into various classified systems, issued an ultimatum demanding that Anthropic abandon its two red lines on surveillance and autonomous lethal weapons. In a move widely interpreted as a reaffirmation of its ethical commitments, Anthropic let the deadline pass without capitulating, seemingly taking a principled stance against what it perceived as the administration’s militaristic impulses.

Yet, as Spencer Ackerman, a Pulitzer Prize-winning national security journalist, noted in his publication Forever Wars, it is essential to scrutinize what Anthropic’s ethical framework left out. Ackerman pointed to a critical omission: "Amodei, it is highly conspicuous, doesn’t register building a surveillance panopticon of foreigners as a problem." The observation exposes an apparent double standard in Anthropic’s ethical declarations, one that prioritizes domestic concerns over the consequences of its technology’s use abroad. Protecting US citizens from surveillance was non-negotiable; people in other nations, particularly those targeted in military operations, were not afforded the same consideration. This selective application of ethical principles raises fundamental questions about global justice and whether AI ethics apply universally.

Ackerman also criticized the timing of Anthropic’s ethical concerns, arguing that the moment to genuinely worry about the broader implications of its technology was "before signing the contract that Amodei didn’t wish to abandon." This highlights the tension between commercial ambition and ethical responsibility: once lucrative military contracts are secured, ethical principles can be compromised or reinterpreted to suit operational needs. His scathing analogy drives the point home: "When you take Doctor Doom’s money to provide him a lathe to construct components for anthropomorphic robots, do you not understand that he is going to build Doombots?" The metaphor captures the argument that developers of powerful dual-use technologies bear a profound responsibility to anticipate how their creations will be used downstream, especially when those creations are explicitly designed to enhance military capabilities. A naive or selective understanding of how military powers will use such tools is, at best, a dereliction of ethical duty and, at worst, a deliberate obfuscation of responsibility.

Integrating AI into target selection, even with a purported "human in the loop," adds a complex layer of ethical considerations. Although Anthropic’s red lines exclude fully autonomous lethal weapons, Claude’s role in "identifying military targets" places it perilously close to direct involvement in lethal decision-making. In practice, the line between an AI suggesting targets and an AI autonomously engaging them can blur, particularly when the speed and volume of data in modern warfare lead human operators to rely heavily on AI recommendations. This raises concerns about "automation bias," in which human decision-makers defer unduly to AI suggestions, potentially eroding critical human oversight and accountability.

Using AI in such a sensitive context also opens the door to algorithmic bias. If the data Claude was trained on, or the intelligence it is asked to analyze, reflects historical biases or existing geopolitical tensions, the model could inadvertently perpetuate or even amplify those biases, leading to disproportionate or unjust targeting. The opacity of complex AI models, often called the "black box" problem, further complicates accountability. When an AI suggests a target, reconstructing the exact reasoning behind that suggestion can be difficult, which makes it hard to scrutinize the decision and assign responsibility when civilians are killed or errors occur.

The situation also reignites the broader debate over "meaningful human control" of lethal autonomous weapons systems. Claude may not fire the weapons, but its role in defining who or what is targeted makes it a critical link in the kill chain. The argument that a human still makes the final decision may be insufficient if the AI’s influence on that decision is overwhelming, or if the operator lacks the time or capacity to verify it independently. The rapid pace of modern warfare, which often demands quick decisions, further compresses the room for careful human deliberation once an AI has presented its analysis.

The long-term implications of companies like Anthropic taking on military contracts, even with self-imposed ethical boundaries, are profound. Such arrangements blur the line between civilian technology development and military applications and risk normalizing the use of advanced AI in lethal contexts. They could also set precedents that undermine international efforts to establish clear norms and regulations for AI in warfare, feeding a global AI arms race in which ethical considerations are sidelined in pursuit of strategic advantage. The "Oppenheimer" analogy invoked by Ackerman speaks to this history, recalling the moral quandaries faced by scientists whose work produced weapons of mass destruction. It challenges the AI community to confront its own "Manhattan Project" moment and weigh the ultimate consequences of its innovations.

Ultimately, the revelations about Claude’s role in the US-Israeli strikes on Iran underscore the urgent need for transparency, robust ethical frameworks, and genuine accountability in developing and deploying AI for military purposes. Anthropic’s situation is a stark reminder that even companies committed to ethical AI face immense pressure and difficult dilemmas when their technology becomes a tool in geopolitical conflict. The apparent gap between its stated red lines and its actual involvement in lethal targeting shows how hard it is to maintain moral integrity against powerful national security imperatives and lucrative contracts. Policymakers, the AI industry, and the global community must examine these developments critically to ensure that the pursuit of technological advancement does not inadvertently pave the way for a future in which algorithms dictate the grim realities of war.