Nvidia, under CEO Jensen Huang, has been the undisputed kingmaker of the AI revolution. Its Graphics Processing Units (GPUs) and proprietary CUDA software platform have become the de facto standard for training complex AI models, propelling the company to an unprecedented valuation: it is the most valuable chipmaker and, at times, one of the most valuable companies globally. This dominance has largely been fueled by insatiable demand from AI developers like OpenAI, Google, and Meta, which require immense computational power to build and refine their cutting-edge models. The initial September announcement of a "blockbuster $100 billion deal" with OpenAI was seen as a testament to Nvidia’s critical role and OpenAI’s relentless pursuit of computational scale. This massive commitment, envisioned to procure 10 gigawatts of compute (a power draw roughly equivalent to the output of ten nuclear reactors), was meant to underpin OpenAI’s ambitious plans for next-generation AI, including Stargate, its colossal data center initiative.

However, even at its inception, the deal raised eyebrows, with some financial analysts expressing "concerns of AI companies passing the same money around in circular dealmaking." This referred to the possibility that some of the capital raised by AI startups from investors might simply be funneled back into purchasing hardware from major suppliers, potentially inflating valuations and obscuring true market dynamics. Such a scenario could create an artificial sense of demand and investment within the ecosystem, raising questions about the sustainability and genuine profitability of these ventures.

Fast forward to late last week, and reports began to surface suggesting the monumental agreement was "on ice." The initial shock quickly gave way to more granular explanations. Sources close to the matter, speaking to Reuters, indicated that the Sam Altman-led OpenAI had grown "unsatisfied" with Nvidia’s latest chip offerings, particularly concerning their performance in AI inference.

To understand the gravity of this, it’s crucial to differentiate between AI training and AI inference. AI training involves feeding vast datasets to a neural network, allowing it to learn patterns and make predictions. This phase is incredibly compute-intensive and often demands the highest-end, most powerful GPUs, an area where Nvidia’s H100 and Blackwell series excel. AI inference, on the other hand, is the process of using a trained machine learning model to generate new data or make predictions in real time. For OpenAI, this means deploying models like ChatGPT, DALL-E, and others to millions of users globally, responding to queries, generating text, and creating images with minimal latency. While still demanding, inference often prioritizes efficiency, cost-effectiveness, and real-time responsiveness over raw peak performance. OpenAI’s pivot towards optimizing for inference suggests a shift in focus from purely developing foundational models to scaling their real-world applications and making them economically viable for mass consumption. If Nvidia’s chips were deemed "not up to snuff" for this critical inference phase, it points to potential shortcomings in performance per watt, cost-efficiency, or specific architectural features that OpenAI needs for its unique deployment workloads.
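The difference in workload shape can be made concrete with a toy sketch. The example below is an illustration only, not how OpenAI or Nvidia measure anything: it assumes a one-feature linear model in place of a neural network, and the function names, dataset, and hyperparameters are all hypothetical. It shows that training repeats forward and backward passes over the whole dataset many times, while serving a single request is one forward pass.

```python
# Toy illustration only: a one-feature linear model stands in for a neural network.
# All names, data, and hyperparameters here are hypothetical.

def predict(w, b, x):
    """Inference: a single forward pass through the trained model."""
    return w * x + b


def train(data, epochs=500, lr=0.1):
    """Training: repeated forward and backward passes over the whole dataset."""
    w, b = 0.0, 0.0
    forward_passes = 0
    for _ in range(epochs):
        grad_w = 0.0
        grad_b = 0.0
        for x, y in data:
            err = predict(w, b, x) - y  # forward pass
            forward_passes += 1
            grad_w += 2 * err * x       # backward pass: gradient of squared error
            grad_b += 2 * err
        # Gradient descent step on the mean squared error
        w -= lr * grad_w / len(data)
        b -= lr * grad_b / len(data)
    return w, b, forward_passes


# Four training points drawn from y = 2x + 1
data = [(x, 2.0 * x + 1.0) for x in (0.0, 1.0, 2.0, 3.0)]

w, b, training_passes = train(data)  # paid once, up front
answer = predict(w, b, 10.0)         # paid again for every user request

print(f"forward passes during training: {training_passes}")
print(f"forward passes for one request: 1 (prediction: {answer:.3f})")
```

In this sketch the training cost is fixed once the model is built, whereas the inference cost recurs with every request, which is why cost and latency per query tend to matter more than peak throughput once a model serves millions of users.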

This dissatisfaction prompted OpenAI to actively seek alternatives. The company has, in the interim, forged "major deals with competing chipmaker AMD," among others. AMD, with its Instinct MI300X accelerators, has been aggressively positioning itself as a viable alternative to Nvidia, promising competitive performance, particularly for inference workloads, often at a more attractive price point or with better availability. Diversifying its chip supply chain is a strategic imperative for OpenAI: it reduces dependency on a single vendor, fosters competition, drives down costs, and lets the company tailor hardware more precisely to its evolving needs. The strategy mirrors moves by other tech giants, such as Google with its custom Tensor Processing Units (TPUs) and Microsoft with its Maia AI accelerators, all aiming to optimize their AI infrastructure.

The situation took another turn when Bloomberg reported that instead of the original $100 billion supply deal, Nvidia was now "nearing a deal to invest $20 billion in OpenAI." This shift from a direct hardware purchase agreement to a strategic equity investment is telling. While a $20 billion investment is still substantial – and, as Huang later clarified, would be Nvidia’s largest ever – it represents a significant reduction from the initial figure and a change in the nature of the transaction. An investment allows Nvidia to maintain a financial stake and influence in OpenAI’s future, potentially securing future hardware contracts or preferential access, without committing to the full financial and logistical complexities of the original, massive supply agreement. For OpenAI, it provides critical capital for its infrastructure build-out while allowing it greater flexibility in choosing its hardware partners.

The public retraction of such a colossal deal, even if it was initially a "letter of intent" and "never a commitment" as Huang later emphasized, highlights "ongoing tensions as US software companies continue to grapple with investors getting cold feet over the AI industry’s astronomical spending plans." The promise of AI is immense, but the path to profitability for many AI companies remains long and capital-intensive. With "trillions of dollars of commitments" to scale AI infrastructure, investors are increasingly scrutinizing the return on investment and the timeline for these companies to generate significant, sustainable profits. This broader market skepticism undoubtedly influenced the re-evaluation of the Nvidia-OpenAI deal, prompting both parties to consider more financially prudent and strategically flexible arrangements.

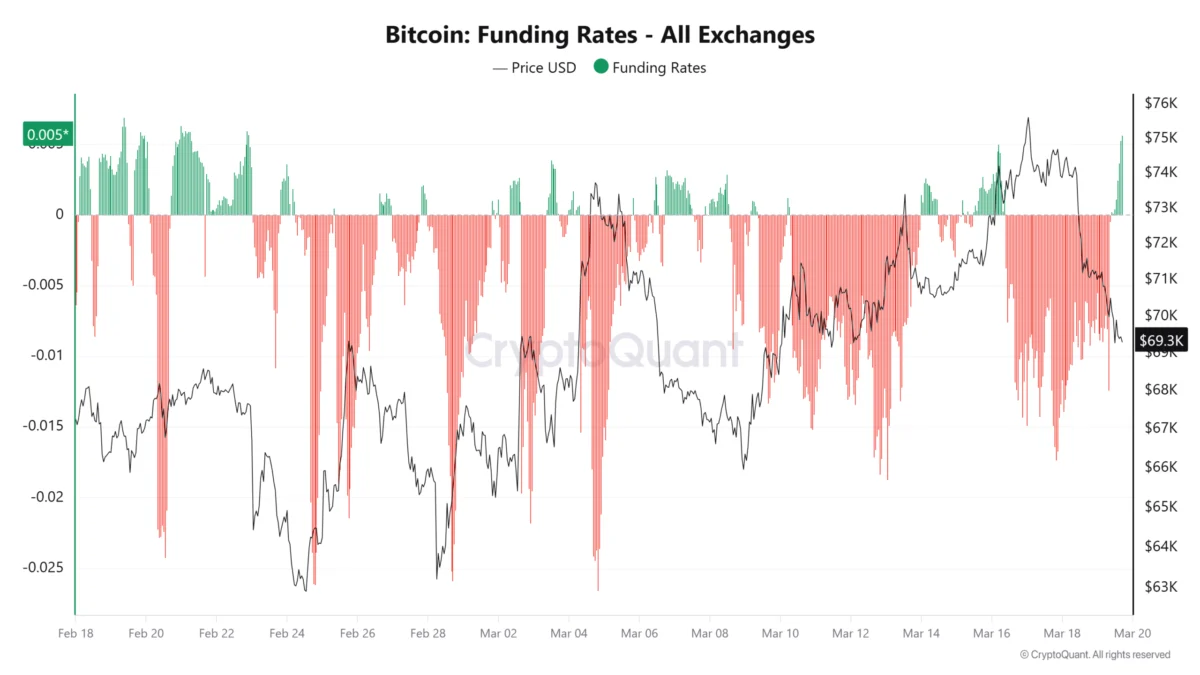

The news of the deal’s collapse had immediate repercussions. Nvidia’s stock price, despite its meteoric rise, experienced a "weeks-long plunge," dropping "almost nine percent over the last five days" and "over seven percent over the last month." While these dips are relatively minor in the context of Nvidia’s overall staggering gains, they underscore the market’s sensitivity to perceived challenges in its core AI business. Any indication of a softening in demand from a major customer like OpenAI, or questions about the superiority of its chips for all AI workloads, can trigger investor anxiety.

In the wake of the reports, both Jensen Huang and Sam Altman moved swiftly to publicly deny any significant "strain on the relationship between the two companies." Altman took to X (formerly Twitter), posting, "We love working with NVIDIA and they make the best AI chips in the world. We hope to be a gigantic customer for a very long time. I don’t get where all this insanity is coming from." Huang, for his part, told reporters over the weekend, "We will definitely participate in the next round of financing because it’s such a good investment," adding, "We are going to make a huge investment in OpenAI. Sam [Altman] is closing the round, and we will absolutely be involved. We will invest a great deal of money," emphasizing it would be the "largest investment we’ve ever made."

These public statements can be seen as both damage control and a genuine attempt to reassure stakeholders. While the initial $100 billion supply deal may have fallen through in its original form, both companies recognize the symbiotic nature of their relationship. Nvidia still needs OpenAI as a marquee customer and a driver of innovation, and OpenAI still relies heavily on Nvidia’s technology for core training tasks and potentially for future advancements. The revised $20 billion investment signifies a continued, albeit reconfigured, partnership, reflecting a more nuanced approach to their mutual strategic goals.

As Ars Technica aptly pointed out, the original $100 billion deal for "ten gigawatts of compute, something that would require the equivalent of ten nuclear reactors to sustain," was merely a "letter of intent." This crucial detail means it was never a binding contract, offering both parties an exit if circumstances or strategic priorities changed. The sheer scale of the original figure likely served as a headline-grabbing statement of intent for the future of AI, rather than a firm, immediate commitment.

The evolving landscape of AI hardware suggests a future where diversity and specialization will be key. As AI models become more sophisticated and their deployment scales, the demand for highly optimized, cost-effective, and energy-efficient chips for inference will only grow. This environment fosters competition from players like AMD and encourages AI giants to develop custom silicon tailored to their specific needs. While Nvidia will undoubtedly remain a dominant force in AI, the OpenAI situation serves as a potent reminder that even the market leader must continuously innovate and adapt to the dynamic and incredibly demanding requirements of its most ambitious customers. The future of AI infrastructure will likely be built on a mosaic of hardware solutions, rather than a single, monolithic supplier.