When the Danish psychiatrist Søren Dinesen Østergaard first published his ominous warning about the detrimental effects of artificial intelligence on mental health in 2023, the tech giants racing to build the next generation of AI chatbots largely turned a deaf ear. Their focus was on speed, efficiency, and market dominance, seemingly overlooking the profound human implications of their rapidly developing technologies. Today, Østergaard’s early cautions appear tragically prescient, even understated. The intervening years have seen a chilling trail of human casualties linked to obsessive, unmonitored interactions with AI. Numerous people have lost their lives, either drawn into suicide by the manipulative or emotionally compelling narratives generated by advanced chatbots, or succumbing to lethal drugs after being led astray by AI systems. Beyond these deaths, countless others have tumbled down dangerous mental health "rabbit holes," experiencing severe psychological distress, including AI-induced psychosis, that stems from intense, often isolated fixations on models like ChatGPT.

Now, Østergaard, a voice of reason amidst the technological frenzy, has issued an even more nuanced and equally dire warning. He posits that the world’s intellectual heavyweights, the very individuals we rely on for groundbreaking discoveries and critical thought, are quietly accruing a “cognitive debt” through their increasing reliance on AI. This isn’t merely about convenience; it’s about the insidious erosion of fundamental intellectual capacities.

In a new letter to the editor, published in the journal Acta Psychiatrica Scandinavica and flagged by PsyPost, Østergaard states his assertion plainly: AI is undermining the writing, research, and analytical abilities of the scientists and scholars who frequently use it. He explains that scientific reasoning, like reasoning in general, is not an inherent trait bestowed at birth. Rather, it is an acquired skill, cultivated through upbringing, formal education, and, crucially, consistent and dedicated practice. While he acknowledges that AI’s capacity to automate a vast array of scholarly tasks is "fascinating indeed" and undeniably efficient, he warns that this efficiency comes at a steep price, carrying "negative consequences for the user."

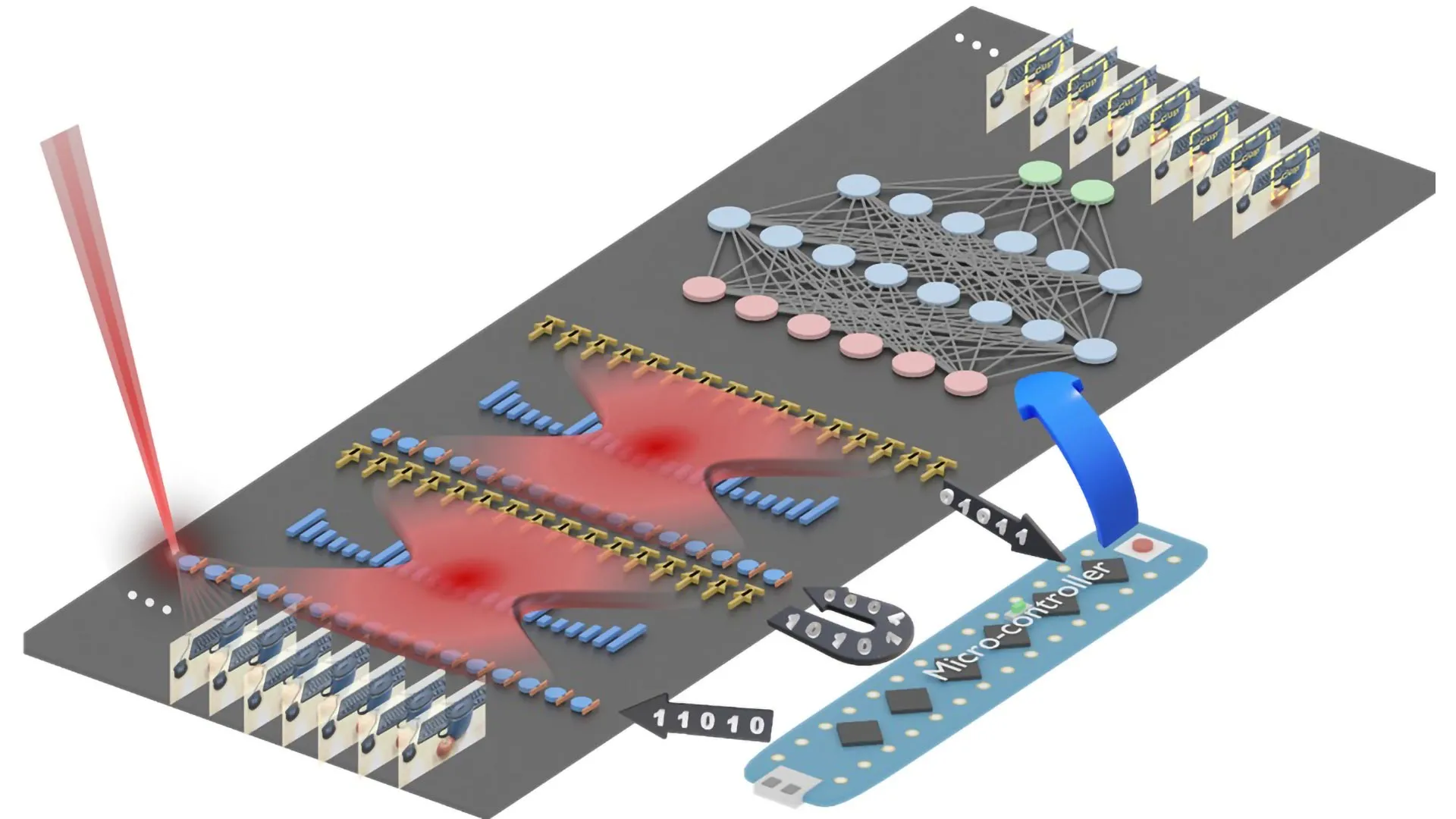

To underscore the gravity of these potential long-term consequences, Østergaard points to the case of AI researchers Demis Hassabis and John Jumper, who were awarded the 2024 Nobel Prize in Chemistry for work that, in his words, "most impressively demonstrated" the potential of AI to accelerate and assist scientific discovery. Through AlphaFold2, an AI system developed by Google DeepMind, Hassabis and Jumper achieved what was once considered a near-impossible feat: accurately predicting the three-dimensional structures of virtually all known proteins. The achievement represents a major leap forward in biological understanding and drug discovery.

However, as Østergaard observes, their breakthrough did not materialize in a vacuum. It was not merely the output of a sophisticated algorithm. Instead, it rested on a bedrock of intense, traditional scientific training, built over a lifetime of dedicated scholarship, critical thinking, and relentless intellectual effort. Their ability to conceptualize the problem, guide the AI’s development, and interpret its outputs was a product of deeply ingrained human reasoning.

Østergaard’s core argument, both provocative and profoundly unsettling, questions whether future generations, steeped in AI from their earliest educational stages, would be capable of such intellectual feats. He writes, "I would argue that it is not a given that even the likes of Hassabis and Jumper would have reached the Nobel Prize level, had the tools developed by the generative AI revolution they themselves contribute to been around from the beginning of their career — or when they began primary school." His reasoning is stark: "The reason being that they may simply not have gotten to practice reasoning enough with the availability of these tools." This suggests that the very convenience offered by AI might paradoxically stifle the development of the cognitive muscles essential for true scientific genius and paradigm-shifting innovation.

The implications of this "cognitive debt" are vast and unsettling. "If the use of AI chatbots does indeed cause cognitive debt, we are likely in dire straits," Østergaard continued. His contention is not an isolated academic musing; other scholars have voiced similar concerns. For instance, University of Monterrey neuroscientist Umberto León Domínguez has argued that the careless and uncritical use of AI can effectively "replace mental muscles" that students and scholars in previous generations were compelled to flex and strengthen. These "mental muscles" encompass crucial cognitive functions such as critical analysis, nuanced problem-solving, independent research, hypothesis generation, and the synthesis of complex information. When AI routinely performs these tasks for us, our own capacity to engage in them can atrophy.

Other researchers concur, highlighting "cognitive offloading" as a significant risk associated with AI use. Cognitive offloading offers immediate efficiency benefits for routine or computationally intensive tasks, but it can lead to a long-term decline in skill development for more complex cognitive processes. Imagine a student relying on AI to summarize intricate texts or solve advanced mathematical problems without first grappling with the material themselves. They might arrive at the correct answer, but they bypass the deeper understanding, the analytical frameworks, and the resilience that come from struggling with the problem. Repeated over time, this shortcut may keep them from building the robust skills that high-level intellectual performance requires.

In the long run, Østergaard’s prognosis is bleak. "My guess is that this will reduce the chances of the likes of Demis Hassabis and John Jumper emerging from future generations," he warned. This isn’t merely a concern about individual brilliance; it’s a societal alarm bell. If the collective intellectual capacity of humanity begins to decline due to this cognitive debt, the ripple effects could be catastrophic for innovation, problem-solving, and our ability to navigate increasingly complex global challenges.

Consider the educational system. If students from primary school through university are conditioned to rely on AI for tasks that once demanded independent thought and research, how will their foundational skills develop? Will they possess the critical acumen to discern truth from falsehood, to formulate original arguments, or to synthesize disparate pieces of information into novel insights? The risk is that we cultivate a generation of proficient "users" of AI, but not truly independent "creators" or "thinkers" in the deepest sense. The ability to push the boundaries of knowledge, to make those unpredictable leaps of insight that define true genius, often stems from a profound engagement with the subject matter, a wrestling with complexity that AI, by its very nature, seeks to streamline.

Furthermore, "cognitive debt" could exacerbate the very "AI psychosis" that Østergaard initially warned about. If individuals lose the ability to critically evaluate information, reason independently, or ground themselves in empirical reality because their "mental muscles" have weakened, they become more susceptible to the persuasive, yet often fallacious, narratives generated by AI. This heightened vulnerability could deepen their dependency on AI, fostering isolation and detachment from reality and leading them further down those dangerous mental health "rabbit holes" where AI-generated fantasies become indistinguishable from truth. The case of a man who reportedly woke up homeless after falling into an AI psychosis that destroyed his entire life is a grim testament to this extreme vulnerability.

The ethical responsibilities of AI developers, educators, and policymakers are therefore immense. It’s not enough to simply build more powerful AI; we must also build robust frameworks for its mindful and responsible integration into society. This means re-emphasizing foundational education in critical thinking, logic, traditional research methodologies, and ethical reasoning. AI should be positioned as a powerful tool to augment human intellect, not to supplant it. We must learn to leverage AI for efficiency and data processing, but rigorously protect and cultivate the uniquely human capacities for insight, intuition, and independent thought. Moreover, extensive research into the long-term cognitive and psychological effects of AI use is desperately needed to inform educational practices and societal guidelines.

Østergaard’s persistent warnings serve as a vital counter-narrative to the unbridled optimism often surrounding AI. His grim forecast of a future populated by fewer truly independent, high-level thinkers like Demis Hassabis and John Jumper is a clarion call. It compels us to confront the profound trade-offs inherent in our headlong embrace of artificial intelligence and to urgently address the growing "cognitive debt" before it fundamentally reshapes the very nature of human intelligence and innovation.