To be human is, fundamentally, to be a forecaster, and sometimes a remarkably accurate one. Our innate drive to peer into the future, whether by drawing on past experience or by reasoning from cause and effect, has been crucial to our survival – guiding us in hunting, avoiding predators, cultivating crops, forging social bonds, and generally navigating a world that rarely prioritizes our well-being. And as our methods of divination have evolved over millennia, from reading tea leaves to analyzing vast data sets, our conviction that the future is knowable, and therefore controllable, has only grown stronger.

Today we are awash in a ceaseless torrent of predictions, so pervasive that most of us scarcely notice them. Even as this sentence is being typed, algorithms on distant servers are trying to anticipate the next word from the ones that came before it. If you’re reading this online, another set of algorithms has likely already selected an advertisement tailored to your predicted interests, chosen to maximize the chance that you click on it. And for those dedicated readers holding the printed edition, congratulations: you have, for the moment at least, escaped the algorithmic gaze.

If the thought of a ubiquitous, largely invisible predictive layer, surreptitiously woven into the fabric of your life by profit-driven corporations, makes you uneasy, you are not alone.

So how did we get here? Humanity’s desire for reliable forecasts is understandable, but few, if any, of us actively consented to an omnipresent algorithmic oracle dictating every facet of our existence. Three recent books examine this prediction-saturated world, how it came to be, and what it means for us. Each proposes a different way of navigating it, but all three arrive at the same core conclusion: predictions are fundamentally instruments of power and control.

In The Means of Prediction: How AI Really Works (and Who Benefits), Oxford economist Maximilian Kasy explains that most of the predictions shaping our lives come from the statistical analysis of patterns in large, labeled datasets – a process known in artificial intelligence circles as supervised learning. Once "trained" on such datasets, these algorithms can take in new information and produce their best estimate of how likely a specific future outcome is. Will you violate your parole, repay your mortgage, earn a promotion if hired, do well on your college exams, or be at home when it is targeted by an airstrike? Increasingly, our lives are being shaped, and at times tragically cut short, by the answers machines give to these questions.
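
For readers curious about the mechanics, the supervised-learning loop described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not code from Kasy’s book: the loan records, the two features, and the helper names `train_logistic` and `predict_proba` are all hypothetical. The sketch fits a logistic regression to labeled historical examples by gradient descent, then returns its estimated probability of repayment for a new applicant.

```python
import math

# Hypothetical labeled history (toy numbers, for illustration only).
# Features: [annual income in tens of thousands, number of past late payments]
# Label: 1 = loan repaid, 0 = loan defaulted.
HISTORY = [
    ([6.0, 0.0], 1),
    ([5.0, 1.0], 1),
    ([7.5, 0.0], 1),
    ([2.0, 3.0], 0),
    ([3.0, 4.0], 0),
    ([2.5, 2.0], 0),
]


def sigmoid(z: float) -> float:
    """Logistic function, written to avoid overflow for large |z|."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def train_logistic(data, lr=0.1, epochs=2000):
    """Fit weights and a bias by batch gradient descent on the log-loss."""
    n_features = len(data[0][0])
    w = [0.0] * n_features
    b = 0.0
    for _ in range(epochs):
        grad_w = [0.0] * n_features
        grad_b = 0.0
        for x, y in data:
            # Error for this example: estimated probability minus true label.
            err = sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b) - y
            for i in range(n_features):
                grad_w[i] += err * x[i]
            grad_b += err
        # Step against the average gradient over all examples.
        w = [wi - lr * gw / len(data) for wi, gw in zip(w, grad_w)]
        b -= lr * grad_b / len(data)
    return w, b


def predict_proba(model, x) -> float:
    """Estimated probability that a new case x belongs to class 1 (repays)."""
    w, b = model
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)


model = train_logistic(HISTORY)
print(f"Estimated repayment probability: {predict_proba(model, [4.0, 1.0]):.2f}")
```

Change the historical labels and the predictions change with them: the model knows nothing beyond the past it was trained on.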

This predictive system, Kasy argues, is helping to create a world that is harsher, more uniform, and increasingly instrumentalized. In this environment, life’s possibilities narrow, long-standing prejudices are reinforced, and our cognitive faculties appear to be gradually eroding. Far from being accidental, Kasy argues, this outcome is an entirely predictable consequence of the system’s design.

Proponents of AI might call these harms "unintended consequences" or mere "optimization and alignment problems," but Kasy contends that they are signs of the system working exactly as intended. If an algorithm that curates social media feeds amplifies outrage, he argues, that is because outrage drives engagement and engagement sells ads. Likewise, algorithms that screen out job candidates deemed likely to have significant family-care responsibilities outside of work, or that preemptively reject people predicted to develop chronic health conditions or disabilities, are doing exactly what their profit-driven mandates ask of them. What is good for a company’s bottom line is not necessarily good for an individual’s job prospects or life expectancy.
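
The logic of an engagement-maximizing feed can be made concrete with a toy ranking function. The sketch below is a hypothetical illustration, not any platform’s actual code: the `Post` class, the `rank_feed` function, and every score are invented. Each post carries a model-predicted engagement score, and the feed simply sorts by it. Nothing in the objective mentions outrage; the inflammatory post rises to the top only because, in these made-up numbers, it is predicted to draw the most engagement.

```python
from dataclasses import dataclass


@dataclass
class Post:
    title: str
    # Hypothetical model output: predicted probability that a viewer engages.
    predicted_engagement: float


def rank_feed(posts: list[Post]) -> list[Post]:
    """Order posts purely by predicted engagement, highest first.

    Tone, accuracy and user well-being play no part in the ranking
    unless they happen to move this single number.
    """
    return sorted(posts, key=lambda p: p.predicted_engagement, reverse=True)


# Invented posts and scores, for illustration only.
feed = rank_feed([
    Post("Local library extends its opening hours", 0.02),
    Post("You won't believe what this politician just said", 0.11),
    Post("Explainer: how the new zoning rules work", 0.04),
])
for post in feed:
    print(post.title)
```

No line of this code needs to be "fixed" for the outcome to follow; changing what rises to the top would mean changing the objective itself.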

Kasy’s distinct contribution lies in his assertion that striving for less biased and more equitable algorithms will not resolve these fundamental issues. He argues that attempts to rebalance these scales are insufficient to counteract the inherent reliance of predictive algorithms on historical data, which is frequently tainted by racism, sexism, and a multitude of other biases. Furthermore, he contends that the pursuit of profit will invariably supersede efforts to mitigate harm. The only viable recourse, in Kasy’s view, is to establish broad democratic control over what he terms "the means of prediction" – encompassing data, computational infrastructure, technical expertise, and energy resources.

A substantial portion of The Means of Prediction is devoted to outlining how such control might be achieved, including "data trusts" – public bodies that would manage and use data on behalf of the people who contribute it – and corporate taxes designed to account for the societal harms caused by AI. Kasy’s rigorous, systematic approach to building new public-serving institutions is commendable, but it arrives at a time when public trust in such institutions is at historic lows. Nor does it resolve what might be called the "brain goo problem": the worry that life inside this predictive system is slowly eroding our cognitive faculties.

To his credit, Kasy acknowledges that his proposals will be neither quick nor easy to implement. The book ends on a stark question: do we have enough time to make these changes?

Examining Kasy’s blueprint for reclaiming control over the mechanisms of prediction prompts a further critical inquiry: how did we arrive at a point where machine-mediated prediction is virtually unavoidable? A succinct Marxist response might be "capitalism," but this explanation fails to elucidate why the same algorithms employed in climate change modeling are also determining eligibility for vital medical resources like kidney transplants or influencing decisions on car loans.

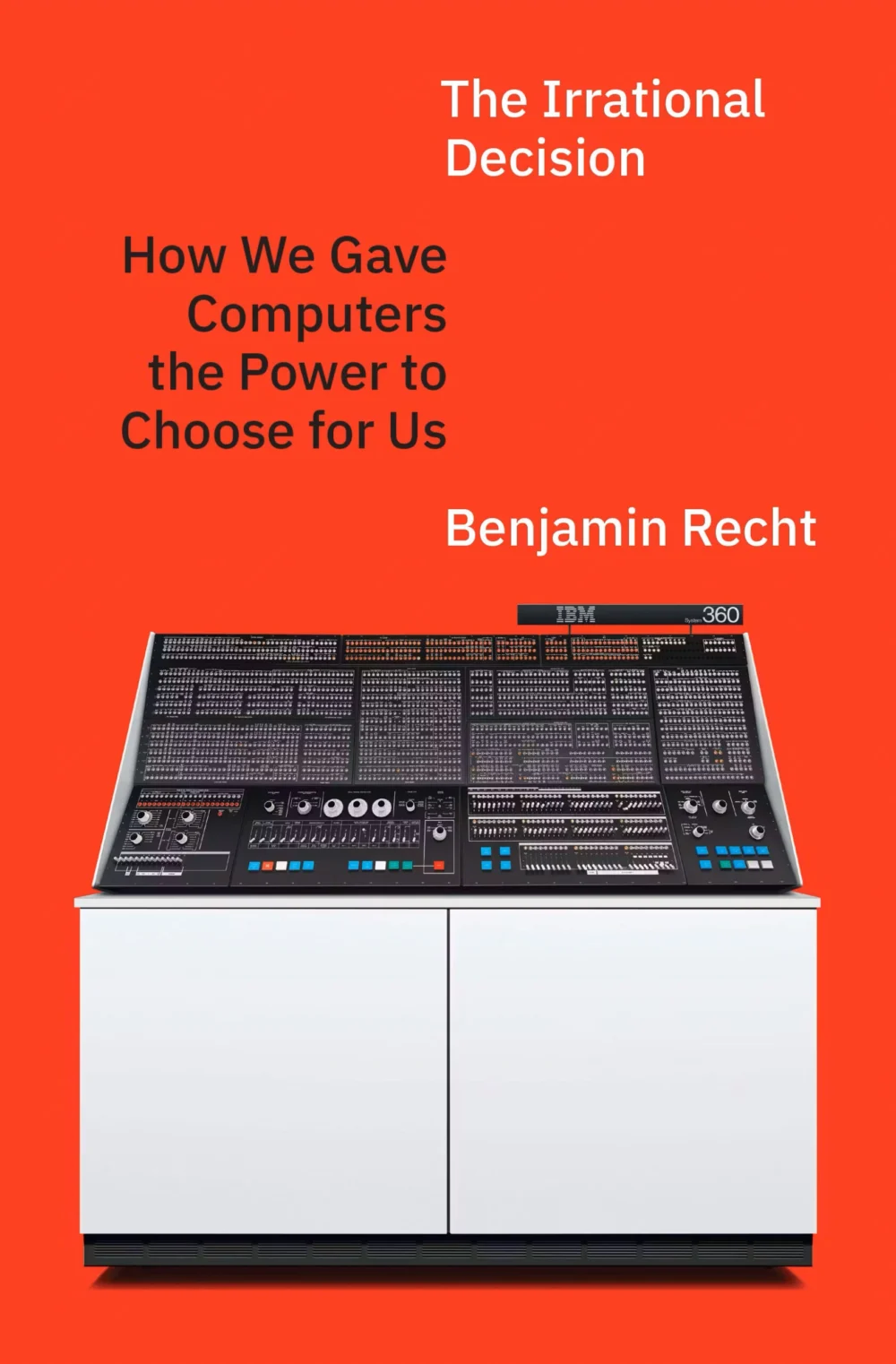

According to Benjamin Recht, author of The Irrational Decision: How We Gave Computers the Power to Choose for Us, our current predicament is deeply intertwined with the concept and ideology of decision theory, particularly as espoused by economists in the form of rational choice theory. Recht, a distinguished professor in UC Berkeley’s Department of Electrical Engineering and Computer Science, prefers the term "mathematical rationality" to describe the narrow, statistical framework that fueled the development of computers and shaped their capabilities.

This belief system, whose roots reach back to the Enlightenment, gained significant traction in the latter half of World War II. The exigencies of war, with their emphasis on risk assessment and rapid decision-making, spurred the development of mathematical models that proved instrumental in the Allied victory. That success convinced a small group of scientists and statisticians that the same models could serve as the foundation for designing the first computers, giving rise to the idea of the computer as an ideal rational agent – a machine capable of making optimal decisions by quantifying uncertainty and maximizing utility.
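
The "ideal rational agent" is usually formalized as an expected-utility maximizer: the expected utility of an action a is the sum, over possible states of the world s, of p(s) × u(a, s), and the agent picks the action with the highest total. The sketch below applies that rule to an invented umbrella decision; the probabilities, the utilities, and the names `expected_utility` and `best_action` are hypothetical.

```python
# Hypothetical decision problem: probability of each state of the world
# and the utility of every (action, state) pair. Numbers are invented.
P_STATE = {"rain": 0.3, "dry": 0.7}
UTILITY = {
    ("umbrella", "rain"): 5,
    ("umbrella", "dry"): 3,
    ("no umbrella", "rain"): -10,
    ("no umbrella", "dry"): 6,
}


def expected_utility(action: str) -> float:
    """Probability-weighted average utility of an action across all states."""
    return sum(p * UTILITY[(action, state)] for state, p in P_STATE.items())


def best_action(actions: list[str]) -> str:
    """The 'rational' choice: whichever action maximizes expected utility."""
    return max(actions, key=expected_utility)


choice = best_action(["umbrella", "no umbrella"])
print(choice, round(expected_utility(choice), 2))
```

Before this procedure can run at all, every consideration has to be squeezed into a probability or a utility number, which is precisely the reduction Recht objects to.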

Intuition, experience, and judgment, Recht argues, were supplanted by optimization, game theory, and statistical prediction. He writes, "The core algorithms developed in this period drive the automated decisions of our modern world, whether it be in managing supply chains, scheduling flight times, or placing advertisements on your social media feeds." In this optimization-driven reality, "every life decision is posed as if it were a round at an imaginary casino, and every argument can be reduced to costs and benefits, means and ends."

Recht contends that mathematical rationality, in its human manifestation, is epitomized by figures like pollster Nate Silver, psychologist Steven Pinker, and various Silicon Valley magnates. These individuals fundamentally believe that the world would be a better place if more people adopted their analytical mindset, embracing cost-benefit analyses, risk estimations, and optimal planning – in essence, making decisions like computers.

Recht views this proposition as inherently flawed for numerous reasons. Notably, he points out that humans were capable of making evidence-based decisions long before the advent of widespread automation. He highlights that advances in sanitation, antibiotics, and public health dramatically increased life expectancy from under 40 in the 1850s to 70 by 1950. Furthermore, the period between the late 1800s and early 1900s witnessed groundbreaking scientific discoveries in physics, including new theories of thermodynamics, quantum mechanics, and relativity. Humanity also managed to develop automobiles and aircraft without recourse to a formal system of rationality, and societal innovations like modern democracy emerged without the aid of optimal decision theory.

So how can we persuade proponents of mathematical rationality that most life decisions are not merely grist for its relentless mill? More importantly, how can we demonstrate that unquantifiable human intuition, morality, and judgment might offer more effective solutions to some of the world’s most pressing and intricate problems?

One starting point might be to remind champions of rationalism that any prediction, whether computational or otherwise, is essentially a wish – albeit one with a potent capacity to fulfill itself. This idea lies at the heart of Carissa Véliz’s expansive and compelling polemic, Prophecy: Prediction, Power, and the Fight for the Future, from Ancient Oracles to AI.

Véliz, a philosopher at the University of Oxford, describes a prediction as "a magnet that bends reality toward itself." As she puts it, "When the force of the magnet is strong enough, the prediction becomes the cause of its becoming true."

Consider Gordon Moore. Though not directly featured in Prophecy, he plays a significant role in Recht’s history of mathematical rationality. Moore, a co-founder of the tech giant Intel, is renowned for his 1965 prediction that the number of components on an integrated circuit would double every year, a pace he revised in 1975 to a doubling every two years. This trend, known as "Moore’s Law," held for decades, though it is now running up against physical limits as transistors shrink toward the scale of individual silicon atoms.
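
A fixed doubling period implies simple exponential growth: a count that doubles every T years is multiplied by 2^(t/T) after t years. The sketch below assumes the two-year period most often associated with Moore’s Law; the starting count of 1,000 transistors and the function name `projected_count` are illustrative, not historical figures.

```python
def projected_count(initial: float, years: float, doubling_period: float = 2.0) -> float:
    """Exponential growth: the count doubles once every `doubling_period` years."""
    return initial * 2 ** (years / doubling_period)


# Hypothetical chip with 1,000 transistors, projected 20 years ahead.
print(projected_count(1_000, 20))  # 10 doublings: prints 1024000.0
```

Ten doublings in 20 years multiply the starting count 1,024-fold; halving the doubling period to one year would square that factor over the same span.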

One interpretation of Moore’s Law is that Gordon Moore was simply a prescient individual, extrapolating from existing computing trends to forecast the future of the semiconductor industry in his influential 1965 article, "Cramming More Components onto Integrated Circuits."

Véliz, however, might offer a different reading: Moore made a well-informed prediction, and the entire industry then invested in making it come true. As Recht makes clear, companies had, and still have, strong financial incentives to produce faster and smaller computer chips. The industry has spent billions of dollars trying to keep Moore’s Law alive, but the profits it has generated have likely been even greater. In this sense, Moore’s Law functioned as an exceptionally powerful magnet.

Predictions, Véliz argues, not only tend to bring themselves about but can also distract us from the challenges in front of us. When proponents of artificial general intelligence (AGI) proclaim that AGI will be the ultimate solution to humanity’s problems, they not only shape how we see AI’s role but also pull our attention away from present-day problems – problems that, in many instances, AI itself is making worse.

Seen in this light, questions about predictions – who makes them, and who has the authority to do so – are fundamentally questions of power. Véliz argues that it is no coincidence that the societies most reliant on prediction often tend toward oppression and authoritarianism. Predictions, she asserts, are "veiled prescriptive assertions – they tell us how to act." In philosophical terms, they are "speech acts": when we accept a prediction and act on it, we are in effect obeying a command.

Whatever technology companies might hope, technology is not destiny. It is conceived and shaped by humans, who then choose how to use it, or whether to use it at all. Perhaps the most fitting, and most human, response to the uninvited daily predictions that permeate our lives is simply to defy them.

Bryan Gardiner is a writer based in Oakland, California.