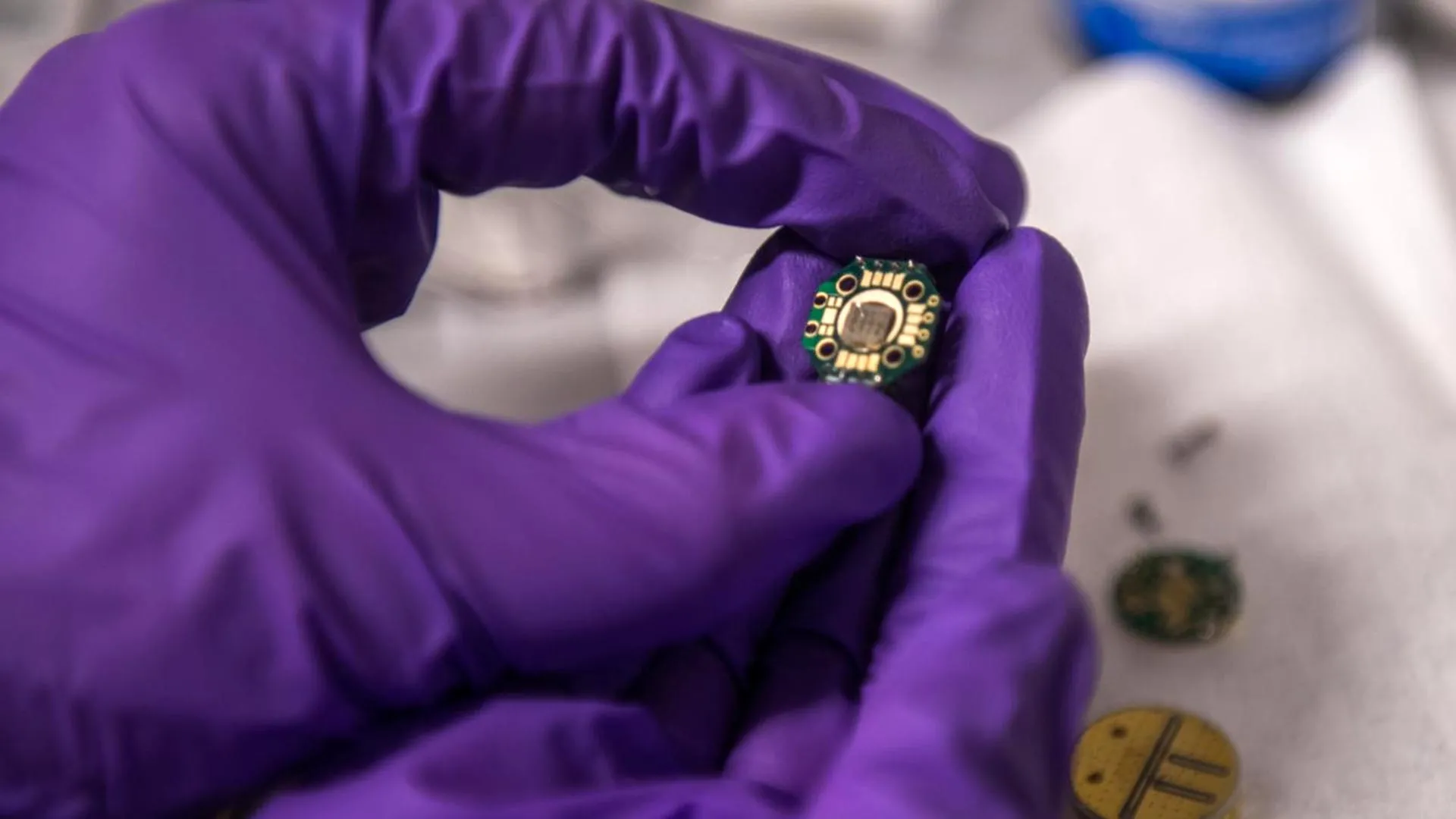

The paper’s lead author, David Awschalom, the Liew Family Professor of Molecular Engineering and Physics at the University of Chicago and director of the Chicago Quantum Exchange and the Chicago Quantum Institute, compared the present moment to an earlier technological inflection point. "This transformative moment in quantum technology is reminiscent of the transistor’s earliest days," he said. "The foundational physics concepts are established, functional systems exist, and now we must nurture the partnerships and coordinated efforts necessary to achieve the technology’s full, utility-scale potential. How will we meet the challenges of scaling and modular quantum architectures?" His question captures the shift the field now faces: from establishing the underlying physics to the engineering required for widespread adoption.

Over the past decade, quantum technologies have evolved from proofs-of-concept in tightly controlled laboratory settings into systems that can support early applications in communication, sensing, and computation. The authors of the Science paper attribute this accelerated progress to the same ingredient that drove the maturation of microelectronics in the 20th century: close collaboration among academic institutions, government agencies, and private industry. By pooling knowledge and resources, these partnerships have pushed the boundaries of what is currently possible in quantum technology.

To compare the progress of competing quantum hardware platforms on a common footing, the researchers reviewed six leading candidates: superconducting qubits, trapped ions, spin defects, semiconductor quantum dots, neutral atoms, and optical photonic qubits. To gauge each platform's maturity across four domains (quantum computing, quantum simulation, quantum networking, and quantum sensing), the team used large language models, including ChatGPT and Gemini, to estimate Technology Readiness Levels (TRLs) for each platform. These estimates provided a common yardstick for comparing the platforms.

The TRL framework is a widely used measure of technological maturity. It runs from 1, where basic principles have been observed in a laboratory, to 9, where the technology has proven itself in an operational environment. A higher TRL does not automatically mean a technology is ready for widespread use. Instead, it indicates a more complete demonstration of system functionality: the technology has moved beyond concept into working, though often still limited, operation.

The paper’s analysis offers a snapshot of the quantum technology landscape as it stands today. Some advanced prototypes already operate as complete systems and are accessible through public cloud platforms, but their performance remains limited. Many high-impact applications, such as large-scale quantum chemistry simulations, are expected to require millions of physical qubits operating at error rates far lower than current hardware can achieve. A substantial gap therefore remains between present-day achievements and the field’s long-term goals.

William D. Oliver, a coauthor of the paper, is the Henry Ellis Warren (1894) Professor of Electrical Engineering and Computer Science and a professor of physics at MIT, where he also directs the Center for Quantum Engineering. He stressed that readiness must be judged in historical context rather than in isolation, since assessing it in a vacuum invites misinterpretation. "While semiconductor chips in the 1970s were TRL-9 for that time, they could do very little compared with today’s advanced integrated circuits," he explained. "Similarly, a high TRL for quantum technologies today does not indicate that the end goal has been achieved, nor does it indicate that the science is done and only engineering remains. Rather, it reflects a significant, yet relatively modest, system-level demonstration has been achieved — one that still must be substantially improved and scaled to realize the full promise." This perspective is essential for setting realistic expectations about the long road ahead.

The study also found that different platforms lead in different domains. Superconducting qubits scored highest for quantum computing, neutral atoms led in quantum simulation, photonic qubits were the most advanced for quantum networking, and spin defects excelled in quantum sensing. This differentiation can help guide research and development, directing investment toward the platforms best suited to specific technological goals.

The researchers identified several major hurdles to scaling quantum systems. Foremost are advances in materials science and fabrication: the ability to manufacture consistent, high-quality quantum devices reliably and at scale is a prerequisite. Wiring and signal delivery pose another substantial engineering challenge. Most current quantum platforms still rely on individual control lines for each qubit, an approach that becomes impractical as systems approach millions of qubits. The problem echoes the "tyranny of numbers" that computer engineers confronted in the 1960s, when the sheer number of interconnections threatened to stall progress. Beyond wiring, power management, precise temperature control, automated calibration, and system-level coordination will all become harder as quantum systems grow in size and complexity.

The paper also draws parallels between the current trajectory of quantum technology and the long development timeline of classical electronics. Many of the breakthroughs behind the microelectronics revolution, including advances in lithography and the discovery of new transistor materials, took years and in some cases decades to move from research laboratories into widespread industrial production. The authors expect quantum technology to follow a similar path. They therefore advocate a top-down approach to system design, open scientific collaboration to keep the field from fragmenting prematurely, and realistic expectations about timelines.

The paper closes with a lesson drawn from earlier technological advances: "Patience has been a key element in many landmark developments," the authors write, "and points to the importance of tempering timeline expectations in quantum technologies." Realizing the full potential of quantum technology will be a long effort, one that demands sustained dedication, collaboration, and a clear-eyed understanding of both the challenges and the opportunities ahead.