A recent study from Swinburne University sets out to resolve this dilemma. The research, led by Postdoctoral Research Fellow Alexander Dellios from Swinburne’s Centre for Quantum Science and Technology Theory, introduces new methods that directly address the validation challenge in quantum computing. Dellios describes the core of the problem: "There exists a range of problems that even the world’s fastest supercomputer cannot solve, unless one is willing to wait millions, or even billions, of years for an answer." Verifying a quantum computer by rerunning its task on a classical machine is therefore not an option. He adds, "Therefore, in order to validate quantum computers, methods are needed to compare theory and result without waiting years for a supercomputer to perform the same task."

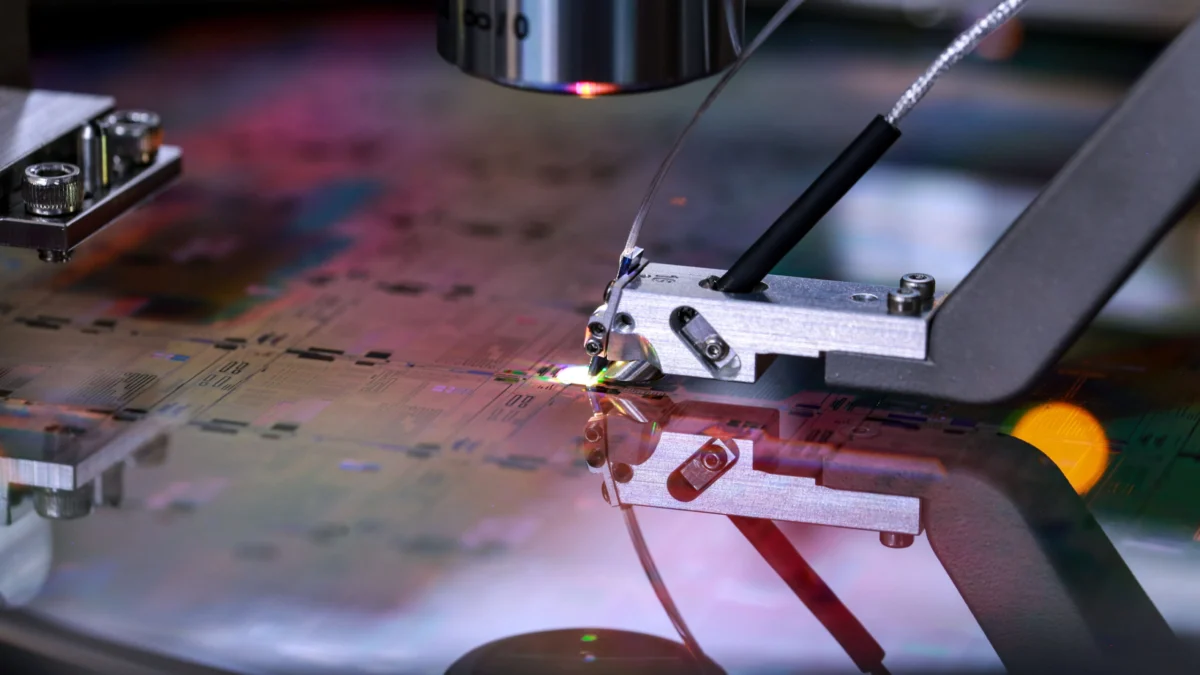

The Swinburne team has developed new techniques designed to confirm the accuracy of a particular class of quantum devices known as Gaussian Boson Samplers (GBS). GBS machines use the quantum properties of photons, the fundamental particles of light, to perform intricate probability calculations. Reproducing the probability distributions that a single GBS generates would take the fastest classical supercomputers thousands of years. This disparity in computational time is why validating GBS outputs has been such a formidable hurdle.

The significance of the new techniques lies in their efficiency. "In just a few minutes on a laptop, the methods developed allow us to determine whether a GBS experiment is outputting the correct answer and what errors, if any, are present," Dellios states. The researchers have already tested their approach on a recently published GBS experiment, one whose output would take current supercomputers an estimated 9,000 years to reproduce. The Swinburne analysis revealed a critical discrepancy: the probability distribution generated by the experiment did not match the intended theoretical target. The methods also uncovered additional sources of "noise" within the experiment (subtle imperfections and unintended quantum interactions) that had not previously been identified or quantified.
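The article does not describe the team's algorithm, so the following is only a generic illustration of what "comparing theory and result" can mean in practice, not the Swinburne method. It is a minimal Python sketch that scores measured outcomes against a target probability distribution with a Pearson chi-square statistic. The function names, the five-outcome `target` distribution, and the `noisy` distribution are all hypothetical and chosen purely for demonstration.

```python
import random
from collections import Counter


def chi_square_per_bin(samples, target_probs, min_expected=5.0):
    """Pearson chi-square between observed counts and a target distribution,
    divided by the number of bins used.

    Values near 1 suggest agreement within sampling error; values far
    above 1 suggest the samples were not drawn from the target.
    """
    n = len(samples)
    observed = Counter(samples)
    chi2 = 0.0
    bins_used = 0
    for outcome, p in target_probs.items():
        expected = n * p
        if expected < min_expected:
            continue  # skip sparsely populated bins, where the statistic is unreliable
        chi2 += (observed.get(outcome, 0) - expected) ** 2 / expected
        bins_used += 1
    if bins_used == 0:
        raise ValueError("no bin has enough expected counts to compare")
    return chi2 / bins_used


def draw(probs, n, rng):
    """Draw n outcomes from a discrete distribution given as {outcome: probability}."""
    outcomes = list(probs)
    weights = [probs[o] for o in outcomes]
    return rng.choices(outcomes, weights=weights, k=n)


rng = random.Random(0)

# Hypothetical target: probability of detecting 0..4 photons in total.
target = {0: 0.10, 1: 0.25, 2: 0.30, 3: 0.20, 4: 0.15}
# Hypothetical noisy device whose output has drifted away from the target.
noisy = {0: 0.16, 1: 0.28, 2: 0.28, 3: 0.17, 4: 0.11}

good_score = chi_square_per_bin(draw(target, 20000, rng), target)
bad_score = chi_square_per_bin(draw(noisy, 20000, rng), target)

print(f"device matching the target: {good_score:.2f}")
print(f"noisy device:               {bad_score:.2f}")
```

In this toy setting the target probabilities are simply written down. For a real GBS experiment, obtaining the theoretical probabilities cheaply is the hard part, which is the gap the Swinburne methods are reported to close.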

These findings have far-reaching implications, because the ability to swiftly identify and characterize errors in quantum experiments is essential for building reliable quantum hardware. The researchers' immediate next step is to investigate the observed deviations more closely. There are two possibilities. The unexpected probability distribution may itself be computationally hard to reproduce classically, in which case the device is still performing a genuinely quantum task, just not the intended one. Alternatively, the identified errors may have pushed the device away from its intended quantum behavior and caused it to lose its "quantumness." Distinguishing between the two is vital for guiding future hardware development and error correction strategies.

The ultimate goal of this research is to pave the way for large-scale, error-free quantum computers suitable for widespread commercial adoption. Dellios describes what is at stake: "Developing large-scale, error-free quantum computers is a herculean task that, if achieved, will revolutionize fields such as drug development, AI, cyber security, and allow us to deepen our understanding of the physical universe." He stresses that scalable validation methods are central to reaching that goal. "A vital component of this task is scalable methods of validating quantum computers," he says, "which increase our understanding of what errors are affecting these systems and how to correct for them, ensuring they retain their ‘quantumness’."

The term "quantumness" itself refers to the unique quantum phenomena, such as superposition and entanglement, that give quantum computers their power. Errors can degrade or destroy these fragile quantum states, rendering the computer less effective or entirely useless for quantum computations. Therefore, precisely identifying and mitigating these errors is not just a matter of improving performance, but of ensuring the very foundation of quantum computation remains intact.

The techniques developed by the Swinburne team offer a tangible pathway towards this essential goal. By providing a rapid and accurate means of assessing the fidelity of quantum experiments, these methods can significantly accelerate the iterative process of quantum hardware design and optimization. Researchers can now more quickly identify problematic components, refine experimental parameters, and develop more robust error-correction protocols. This accelerated feedback loop is crucial for overcoming the numerous engineering and scientific challenges that lie in the path of building fault-tolerant quantum computers.

The potential applications of such machines are vast. In drug development, quantum computers could simulate molecular interactions with unprecedented accuracy, leading to the discovery of new medicines and therapies. In artificial intelligence, they could power more sophisticated machine learning algorithms, enabling advances in areas like pattern recognition, natural language processing, and autonomous systems. In cybersecurity, quantum computers pose a threat to current encryption methods, a threat that is in turn driving the development of new, quantum-resistant cryptographic techniques. Their ability to model complex quantum systems could also unlock new insights into the fundamental laws of the universe, from the behavior of subatomic particles to the mysteries of black holes and cosmology.

By providing a tool for verifying quantum computations, the Swinburne research addresses a bottleneck that has long hindered progress in the field. The ability to distinguish a correct, albeit complex, quantum answer from an erroneous one caused by hardware imperfections is fundamental to building trust in quantum technologies. As Dellios and his colleagues continue to refine and expand their work, they are contributing to the scientific understanding of quantum mechanics and laying the groundwork for a future in which quantum computers are reliable, powerful tools rather than theoretical marvels. The work marks a concrete step toward turning the promise of quantum computing into reality.