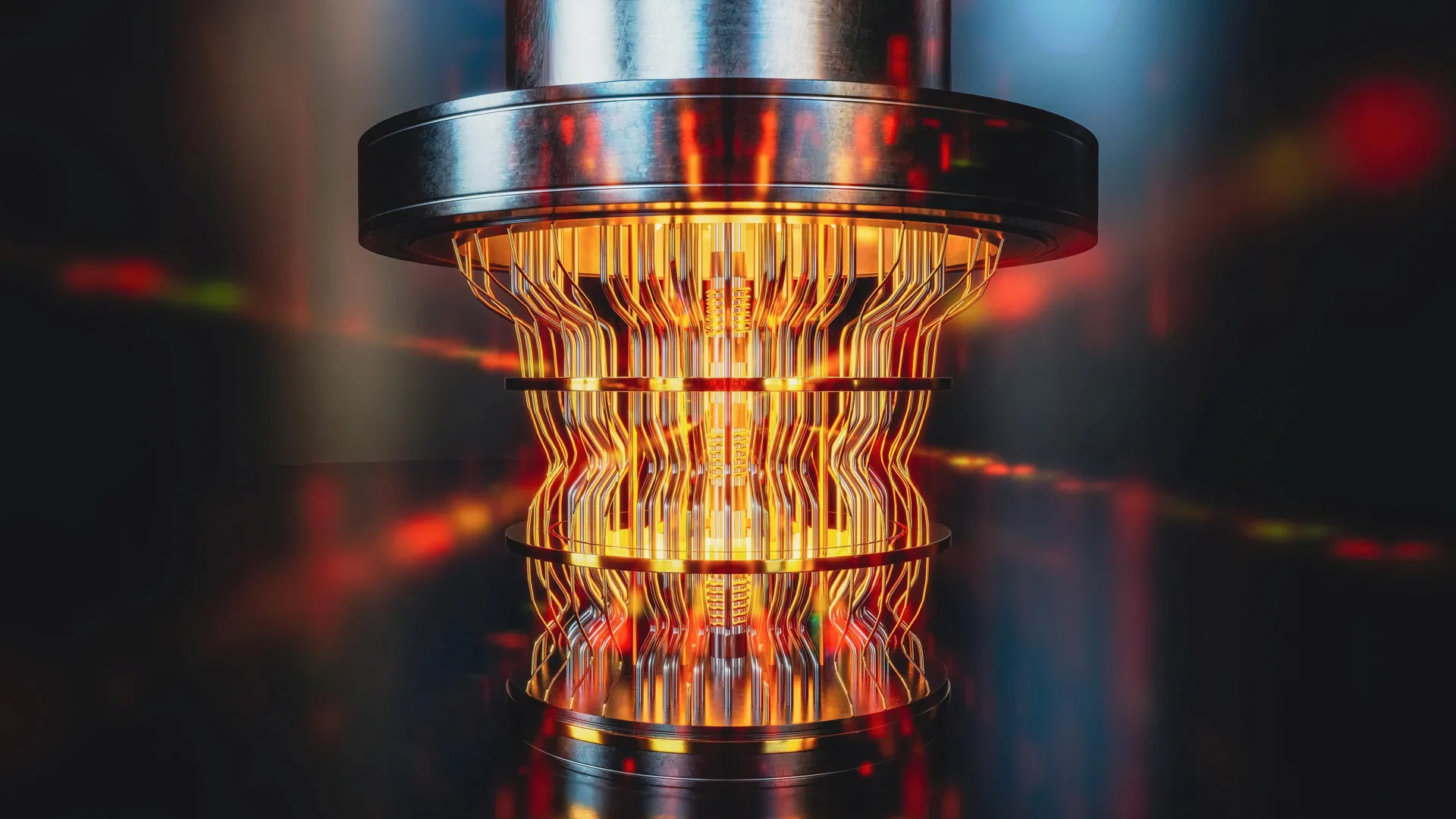

However, as the global race to build the first reliable, commercially viable large-scale quantum computer intensifies, a critical question has emerged: how can we confirm that the answers these devices produce are correct, especially when they tackle problems considered computationally intractable for classical machines? A recent study from Swinburne University sets out to address exactly this dilemma.

The difficulty of verifying quantum computer outputs stems from the nature of the problems they are designed to solve. As lead author Alexander Dellios, a Postdoctoral Research Fellow at Swinburne’s Centre for Quantum Science and Technology Theory, explains, "There exists a range of problems that even the world’s fastest supercomputer cannot solve, unless one is willing to wait millions, or even billions, of years for an answer." If a quantum computer solves such a problem in a feasible timeframe, the result cannot be cross-checked on a classical system without an equally time-prohibitive computation. "Therefore, in order to validate quantum computers, methods are needed to compare theory and result without waiting years for a supercomputer to perform the same task," Dellios says.

The Swinburne University research team has responded by developing new techniques to confirm the accuracy of a particular type of quantum device known as a Gaussian Boson Sampler (GBS). GBS machines use photons, the elementary particles of light, to perform probability calculations so intricate that even the most powerful classical supercomputers would need thousands of years to complete them. The validation methods developed by Dellios and his colleagues avoid that wait.

The tools make it possible to scrutinize the performance of GBS experiments quickly and on modest hardware. "In just a few minutes on a laptop, the methods developed allow us to determine whether a GBS experiment is outputting the correct answer and what errors, if any, are present," Dellios states. This stands in stark contrast to the millennia-long computation that direct classical verification would require.

To test their approach, the researchers applied it to a recently published GBS experiment that current supercomputers were estimated to need at least 9,000 years to reproduce. The analysis showed that the probability distribution generated by the experiment did not match the intended theoretical target. It also uncovered additional noise in the experiment that had not previously been identified or accounted for. The result suggests that subtle, previously undetected imperfections can significantly affect the accuracy of quantum computations, even in sophisticated GBS setups.
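The idea of checking a measured distribution against a theoretical target can be illustrated with a deliberately simplified toy model. The sketch below is not the Swinburne team's method, which this article does not describe in detail, and it does not simulate a real GBS device. It assumes a hypothetical set of 16 independent detectors that each click with a fixed probability, so the target distribution of total clicks is binomial, and it uses total variation distance as one possible measure of mismatch. All names and parameter values are illustrative assumptions.

```python
import math
import random


def binomial_pmf(n, p):
    """Toy target: probability of k total clicks from n independent detectors."""
    return [math.comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(n + 1)]


def sample_click_counts(n, p, shots, rng):
    """Simulate `shots` runs; each of the n detectors clicks with probability p."""
    return [sum(rng.random() < p for _ in range(n)) for _ in range(shots)]


def empirical_pmf(counts, n):
    """Turn a list of observed total-click counts into relative frequencies."""
    hist = [0] * (n + 1)
    for k in counts:
        hist[k] += 1
    return [h / len(counts) for h in hist]


def total_variation(p, q):
    """Total variation distance: 0 for identical distributions, 1 for disjoint ones."""
    return 0.5 * sum(abs(a - b) for a, b in zip(p, q))


# Hypothetical parameters chosen only for illustration.
N_DETECTORS, SHOTS = 16, 5000
P_TARGET = 0.30  # click probability assumed by the theory
P_NOISY = 0.40   # click probability of a device with unmodelled extra noise

rng = random.Random(0)  # fixed seed so the run is repeatable
target = binomial_pmf(N_DETECTORS, P_TARGET)

ideal_counts = sample_click_counts(N_DETECTORS, P_TARGET, SHOTS, rng)
noisy_counts = sample_click_counts(N_DETECTORS, P_NOISY, SHOTS, rng)

tvd_ideal = total_variation(empirical_pmf(ideal_counts, N_DETECTORS), target)
tvd_noisy = total_variation(empirical_pmf(noisy_counts, N_DETECTORS), target)

print(f"distance to target, ideal device: {tvd_ideal:.3f}")
print(f"distance to target, noisy device: {tvd_noisy:.3f}")
```

In this toy setting the device with extra noise sits much further from the target than the ideal one, which is the kind of mismatch the article describes. Real GBS validation is far harder because the target distribution itself cannot be computed exactly at scale.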

The finding raises two questions. The next step in this line of research is to determine whether the unexpected probability distribution is itself computationally difficult to reproduce by classical means. If it is, it could point towards a new class of problems that quantum computers can solve and that are also challenging to verify classically. If instead the observed errors are the cause of the deviation, the question becomes whether those errors are large enough to make the device lose its essential "quantumness", the delicate quantum properties that give it its computational power. Understanding this distinction is essential for building robust and reliable quantum machines.

The outcome of this investigation could shape the development of large-scale, error-free quantum computers suitable for widespread commercial use, an effort Dellios hopes to help lead. "Developing large-scale, error-free quantum computers is a herculean task that, if achieved, will revolutionize fields such as drug development, AI, cyber security, and allow us to deepen our understanding of the physical universe," he says.

Reaching that goal depends on being able to verify reliably how these machines perform. "A vital component of this task is scalable methods of validating quantum computers," Dellios explains. Scalable validation methods not only confirm whether a quantum computation is correct but also reveal the types of errors that affect these systems. That understanding is essential for developing strategies to mitigate and correct those errors, so that quantum computers retain their "quantumness" and operate with the fidelity that practical applications require. The Swinburne University work is a significant step in that direction.