A recent study from Swinburne University addresses this fundamental dilemma with new techniques for verifying the accuracy of a specific type of quantum device, known as a Gaussian Boson Sampler (GBS). The motivation lies in the kind of problems quantum computers are built to tackle. As lead author Alexander Dellios, a Postdoctoral Research Fellow at Swinburne’s Centre for Quantum Science and Technology Theory, explains, "There exists a range of problems that even the world’s fastest supercomputer cannot solve, unless one is willing to wait millions, or even billions, of years for an answer." Timescales like these make a direct, result-by-result comparison with a classical computer impractical as a validation strategy. Therefore, Dellios emphasizes, "in order to validate quantum computers, methods are needed to compare theory and result without waiting years for a supercomputer to perform the same task."

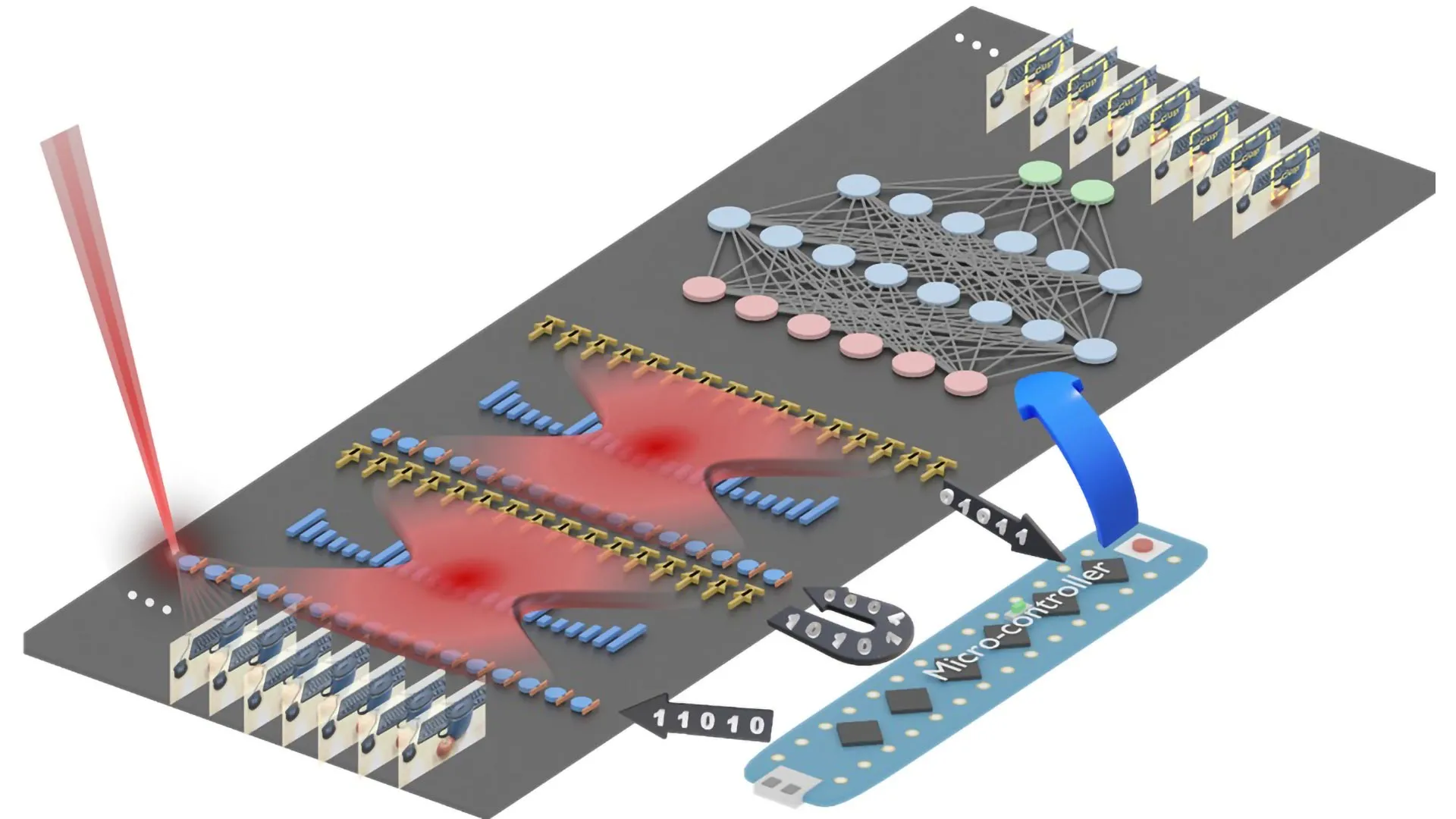

Gaussian Boson Samplers, the focus of this new research, operate on the principles of quantum mechanics, using photons, the fundamental particles of light. These devices produce samples from probability distributions that are exponentially difficult for classical computers to reproduce. Simulating a GBS experiment on a classical machine can easily stretch into thousands of years, putting direct verification out of reach on any human timescale. This is the gap the Swinburne work is designed to close.

The research team has devised new tools that dramatically reduce the time and computational resources needed to assess the accuracy of GBS experiments. With these methods, researchers can determine within minutes on a standard laptop whether a GBS experiment is producing the correct results and, crucially, identify any errors that are present. Being able to detect and diagnose errors this quickly is essential for developing reliable quantum hardware.

To showcase the efficacy of their approach, the researchers applied their new validation techniques to a recently published GBS experiment. This particular experiment involved a computational task so complex that reproducing it on current supercomputers would necessitate at least 9,000 years. The analysis performed by Dellios and his team revealed a significant discrepancy: the probability distribution generated by the quantum device did not align with the intended theoretical target. Furthermore, their analysis unearthed evidence of "extra noise" within the experiment that had not been previously accounted for or evaluated. This finding underscores the importance of their validation methods, as they can reveal subtle imperfections that might otherwise go unnoticed.
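The article does not describe the team's statistical machinery. As a rough illustration of what "not aligning with the intended theoretical target" can mean in practice, the sketch below applies a generic Pearson chi-square goodness-of-fit test to binned output counts (for example, events grouped by total photon number) and converts the statistic to an approximate standard-normal score with the Wilson-Hilferty transform. This is an assumed textbook technique chosen for illustration, not the Swinburne method; the function name `chi_square_z` and the example counts are hypothetical.

```python
import math


def chi_square_z(observed, expected_probs):
    """Generic Pearson chi-square test of binned counts against target probabilities.

    Hypothetical helper for illustration only (not the Swinburne team's method).
    observed: count of events in each bin (e.g. grouped by total photon number)
    expected_probs: target probability of each bin; all must be > 0 and sum to 1
    Returns (chi2, dof, z), where z is the Wilson-Hilferty normal approximation.
    """
    if len(observed) != len(expected_probs) or len(observed) < 2:
        raise ValueError("need matching observed/expected lists with at least 2 bins")
    n = sum(observed)
    chi2 = 0.0
    for o, p in zip(observed, expected_probs):
        e = n * p  # expected count in this bin under the target distribution
        chi2 += (o - e) ** 2 / e
    dof = len(observed) - 1
    # Wilson-Hilferty: (chi2/dof)**(1/3) is roughly normal with
    # mean 1 - 2/(9*dof) and variance 2/(9*dof).
    v = 2 / (9 * dof)
    z = ((chi2 / dof) ** (1 / 3) - (1 - v)) / math.sqrt(v)
    return chi2, dof, z


# Hypothetical example: the observed counts overshoot the target in the first bin.
target = [0.10, 0.20, 0.30, 0.25, 0.15]
observed = [150, 150, 300, 250, 150]
chi2, dof, z = chi_square_z(observed, target)
print(f"chi2={chi2:.2f}, dof={dof}, z={z:.2f}")
```

A `z` of several units or more suggests the data are inconsistent with the target, while values near zero mean the mismatch is within what sampling noise would explain. In a real GBS validation the difficult step is computing the target probabilities themselves, which this sketch simply takes as given.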

The discovery also raises a new question. The researchers' immediate next step is to investigate whether reproducing this unexpected probability distribution is itself computationally hard, or whether the observed errors indicate that the device is losing its crucial "quantumness", the distinctive quantum properties that give it its computational power. Understanding the source and nature of these errors is key to developing strategies to mitigate and correct them.

The outcome of this investigation could help shape the development of large-scale, error-free quantum computers suitable for commercial deployment, a goal Dellios describes as monumental: "Developing large-scale, error-free quantum computers is a herculean task that, if achieved, will revolutionize fields such as drug development, AI, cyber security, and allow us to deepen our understanding of the physical universe."

Dellios stresses that reliable validation is indispensable to this effort: "A vital component of this task is scalable methods of validating quantum computers, which increase our understanding of what errors are affecting these systems and how to correct for them, ensuring they retain their ‘quantumness’." Accurately assessing and continuously monitoring the performance of quantum computers is therefore not merely a scientific curiosity; it is a foundational requirement for building trust in the technology and enabling its widespread adoption.

These validation techniques mark a significant step forward in the quest for reliable quantum computing. By offering a fast, effective way to detect errors and assess the fidelity of quantum computations, they let researchers shorten the development cycle and refine quantum hardware, bringing practical quantum computing closer to reality. Confirming that a quantum computation is correct is about more than checking a single result: it builds the confidence the technology needs to mature responsibly and effectively.