OpenAI, a company once lauded for its commitment to “beneficent AI” and the “betterment of the human race,” has found itself embroiled in a public relations nightmare, inadvertently handing one of its biggest rivals, Anthropic, a massive moral and market victory. The blunder, so significant that even CEO Sam Altman conceded its “optics don’t look good,” stems from OpenAI’s recent agreement with the U.S. Department of Defense, a move widely perceived as a capitulation to military interests and a stark betrayal of its founding principles. This controversial decision has triggered a fierce backlash, prompting a growing “cancel ChatGPT” movement among disillusioned users and sparking a critical debate about the ethical responsibilities of leading AI developers in an increasingly militarized technological landscape.

OpenAI’s Controversial Alliance with the Pentagon

The controversy ignited on a recent Friday when Sam Altman announced a new agreement between OpenAI and the Department of Defense (DoD) regarding the deployment of its advanced AI systems across military operations. While the specifics of the deal remain somewhat shrouded, the very act of formalizing such a partnership sent shockwaves through the tech community and among OpenAI’s user base. For many, this represented OpenAI “crossing the picket line,” a symbolic betrayal given the prevailing ethical concerns surrounding AI’s role in warfare and surveillance. The timing of the announcement also exacerbated public outrage: just hours after Altman’s revelation, the U.S. and Israel launched a series of deadly strikes in Iran, reportedly killing its leader, Ali Khamenei, and hundreds of civilians. This grim real-world context immediately cast a shadow over OpenAI’s assurances, raising urgent questions about whether its technology could be integrated into military decision-making and target selection.

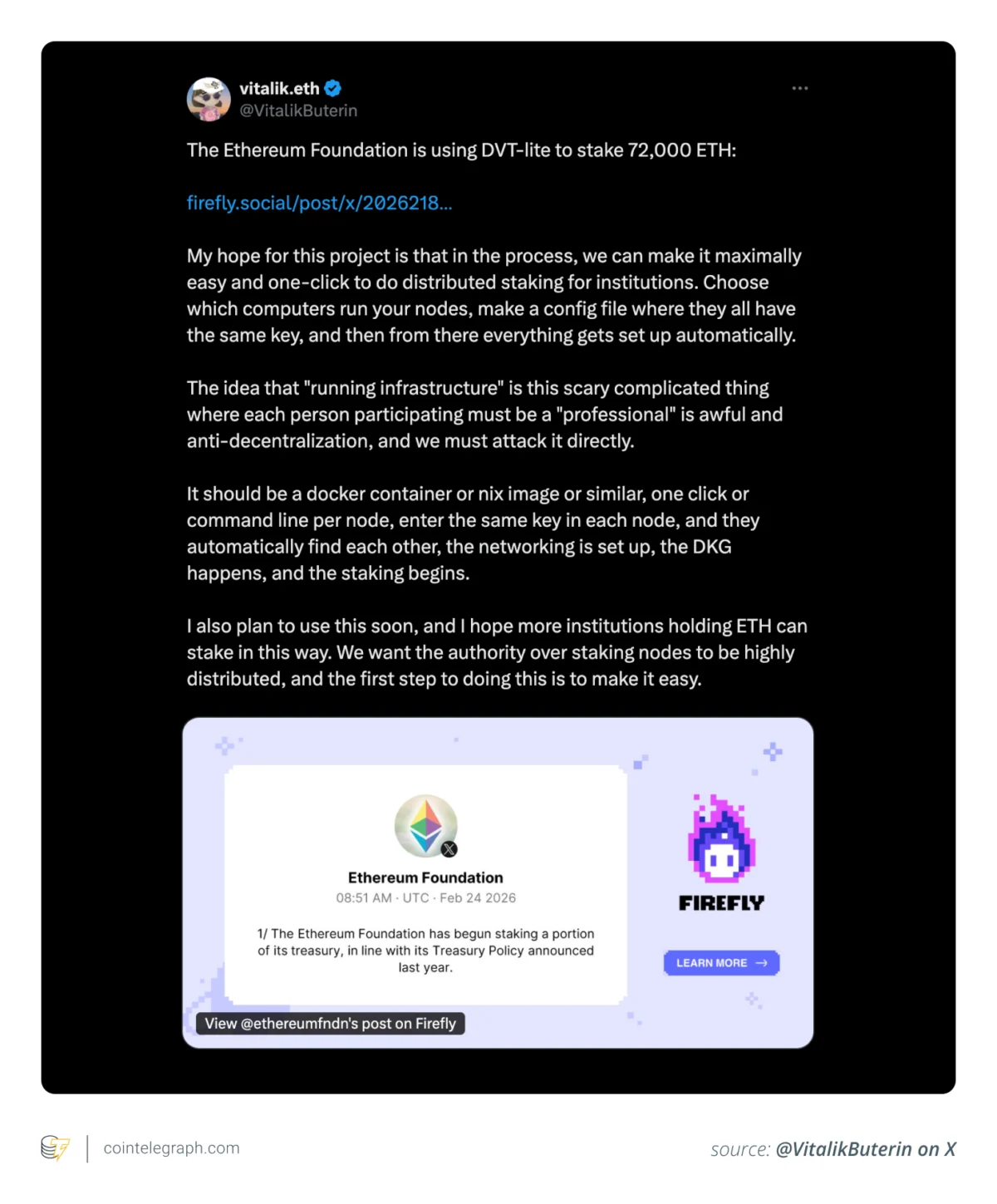

Anthropic’s Principled Stand and its Unforeseen Rewards

The stark contrast to OpenAI’s decision was provided by Anthropic, a company founded by former OpenAI employees who had left due to philosophical differences over AI safety and ethics. Anthropic had previously refused to yield to the Pentagon’s demands for unrestricted use of its Claude AI, with CEO Dario Amodei steadfastly insisting on clear stipulations: Anthropic’s AI must not be used for autonomous weaponry or the mass surveillance of U.S. citizens. This principled stance came at a significant potential cost. The Pentagon had reportedly vowed to freeze Anthropic out of federal government contracts, threatening to declare it a “supply chain risk” and even seize its technology, signaling the immense pressure placed upon AI developers to align with military objectives.

However, what initially appeared to be a costly moral stand has, at least in the short term, turned into a public relations coup. As OpenAI faced a torrent of criticism, Anthropic’s refusal to “bend the knee” resonated deeply with users concerned about the ethical trajectory of AI. This allowed Anthropic to capture the moral high ground and present itself as the torchbearer for responsible AI development. Some reports later suggested that the DoD may have used Claude to select targets in Iran, implying that even Anthropic’s principled stand might be yet more theater from the often opaque AI industry. Nevertheless, public perception was overwhelmingly in Anthropic’s favor.

The “Cancel ChatGPT” Movement Gains Momentum

The fallout for OpenAI was immediate and severe. Online platforms exploded with outrage, as scores of users – ranging from dedicated AI enthusiasts often dubbed “AI bros” to, surprisingly, celebrity figures like Katy Perry – declared their intention to ditch ChatGPT subscriptions in favor of Anthropic’s Claude. The sentiment was clear: OpenAI had compromised its soul, and users were voting with their wallets. This collective outcry quickly translated into tangible market shifts. Over the weekend following the announcement, Claude surged to the top of the App Store rankings, displacing ChatGPT, which found itself relegated to second place by Monday. A Reddit thread in the popular r/ChatGPT subreddit, titled “You’re now training a war machine,” quickly became one of the forum’s most upvoted posts of all time. Its call to action, “Let’s see proof of cancellation,” illustrated the depth of user anger and the desire for collective action. This exodus underscored a fundamental truth: a significant portion of the AI user base expects ethical leadership from the companies developing these powerful tools, and OpenAI’s deal with the Pentagon was seen as a profound violation of that trust.

Sam Altman’s Damage Control and Its Critiques

In the face of this unprecedented backlash, Sam Altman swiftly entered damage control mode. He hosted a rare “Ask Me Anything” (AMA) session on X (formerly Twitter) to address concerns about OpenAI’s work with the “DoW” – a pointed reference to the “Department of War,” the Trump administration’s preferred, more aggressive moniker for the Department of Defense. Respondents on X did not hold back, grilling Altman on the perceived hypocrisy of the company’s shift.

Users openly challenged his leadership, with one asking, “How did you go from ‘a tool for the betterment of the human race’ to ‘let’s work with the department of WAR’?” Another mocked him directly by inquiring if he was pleased that Claude had overtaken ChatGPT on the App Store, to which Altman candidly conceded, “No.”

Altman’s defense largely hinged on the claim that OpenAI’s DoD agreement included the same stringent restrictions that Anthropic had sought, promising that the company would refuse any orders that violated the Constitution or sought to carry out mass domestic surveillance. He even quipped, “Please come visit me in jail if necessary,” attempting to project an image of unwavering commitment to civil liberties. However, this stance was undermined by his subsequent expressions of what many perceived as a “blind faith” in the military’s rectitude. Altman asserted that “the people in our military are far more committed to the constitution than an average person off the streets” and uncritically cited a DoD official’s vow against infringing on civil liberties or engaging in “unlawful” surveillance.

Critics quickly pounced on these statements, pointing to the record of the U.S. military and various administrations, including the Trump administration, in leaning on cutting-edge surveillance tech for mass deportations and other ethically dubious operations. Users found Altman’s dismissal of these concerns, and his apparent “memory-holing” of figures like Edward Snowden, to be insulting to their intelligence. As one user fumed, “You cannot post the statements by an Administration that is known to lie and expect people to have trust or confidence in [you or your company].” Altman insisted that he “would also be terrified of a world where our government decided mass domestic surveillance was ok,” adding, “don’t know how I’d come to work every day if that were the state of the country/Constitution.” For critics, these remarks only highlighted the perceived disconnect between his professed ideals and the company’s actions. Ultimately, even Altman had to concede that the DoD deal “was definitely rushed, and the optics don’t look good,” an understatement for the ethical quagmire OpenAI found itself in.

The Broader Implications for AI Ethics and Industry

This incident is more than just a PR blunder for OpenAI; it represents a defining moment in the ongoing debate about AI ethics and the role of powerful technological entities in global affairs. The “cancel ChatGPT” movement underscores a growing public demand for transparency and accountability from AI developers, particularly when their innovations touch upon sensitive areas like national security and warfare. It highlights the dual-use dilemma inherent in advanced AI – technologies developed for general intelligence can easily be repurposed for military applications, raising profound questions about the moral responsibility of their creators.

OpenAI’s decision has also set a precedent, potentially signaling to other AI companies that military contracts are a viable, if ethically fraught, path to growth. This could accelerate an AI arms race, pushing developers to prioritize strategic partnerships over ethical considerations. Conversely, Anthropic’s unexpected surge in popularity demonstrates the market value of a clear, principled ethical stance, potentially incentivizing other companies to adopt more robust ethical frameworks.

The episode also exposes the challenges of maintaining a “beneficent” mission in a competitive and politically charged environment. OpenAI, initially founded with a non-profit structure to ensure AI benefits all humanity, has increasingly adopted a commercial model, leading to accusations that profit motives are now overriding its founding ideals. This tension between commercial success, national security imperatives, and ethical development will undoubtedly continue to shape the future of the AI industry.

Conclusion: A Pivotal Moment for AI’s Future

The controversy surrounding OpenAI’s deal with the Department of Defense is a stark reminder of the complex ethical landscape in which AI development operates. While OpenAI navigates a public relations crisis and a significant loss of user trust, Anthropic has capitalized on its rival’s misstep, emerging as a champion of ethical AI, at least in the eyes of the public. This saga underscores the critical importance of corporate responsibility, transparent ethical guidelines, and user engagement in shaping the future of artificial intelligence. As AI technologies become increasingly powerful and pervasive, the decisions made by companies like OpenAI and Anthropic will have far-reaching implications, determining whether AI truly serves the betterment of humanity or becomes another tool in the arsenal of conflict and control. The current backlash against OpenAI serves as a powerful testament to the fact that for many, the ethical deployment of AI is not merely an abstract philosophical debate, but a tangible expectation that influences their choices and loyalty.
