When Amazon-owned Ring debuted its latest offering, "Search Party," during a high-profile Super Bowl commercial break, it presented a seemingly heartwarming solution to a common problem: finding lost pets. The advertisement enthusiastically declared that "one post of a dog’s photo in the Ring app starts outdoor cameras looking for a match to help families find lost dogs," painting a picture of a benevolent technological assistant harnessing its vast network for community good. Yet, beneath this veneer of altruism lies a profoundly unsettling revelation: Ring’s ubiquitous doorbell cameras are now explicitly capable of identifying and tracking living beings across entire neighborhoods, signaling a significant escalation in the scope of civilian surveillance and raising urgent questions about privacy, civil liberties, and the future of public space.

Ring, the Amazon subsidiary that has permeated suburban and urban landscapes with its internet-connected doorbell cameras, has long been a lightning rod for criticism regarding its privacy practices. These devices, perched on countless front porches, record virtually everyone who passes by, creating an unprecedented network of localized surveillance. Over the years, this network has drawn "widespread privacy criticisms," with concerns ranging from footage being accessed by law enforcement without warrants to vulnerabilities that allowed hackers to gain control of devices, and even instances of Ring employees accessing customer video feeds without authorization. The company’s partnerships with over 2,000 police departments across the United States have been particularly contentious, effectively creating a privatized, warrantless surveillance apparatus that bypasses traditional legal safeguards. Police can request footage directly from Ring users, often without needing a warrant, blurring the lines between voluntary neighborhood watch and state-sponsored monitoring.

Adding another layer of apprehension, Ring’s recent "data sharing agreement" with Flock Safety, a company specializing in automated license plate recognition (ALPR) technology, has further inflamed activists and privacy advocates. Flock Safety operates a rapidly expanding network of thousands of fixed and mobile cameras that scan and record license plates, feeding this data into a centralized database accessible by law enforcement. Unlike Ring, Flock has shown no qualms about working closely with federal agencies such as Immigration and Customs Enforcement (ICE), the Secret Service, and the Navy, giving them access to a nationwide surveillance network. Combining Ring’s doorstep cameras with Flock’s vehicle-tracking infrastructure points toward a comprehensive, pervasive surveillance grid, one in which not only who walks past a given house but also where people drive can be documented and analyzed. The partnership raises the chilling prospect that a person’s presence at a particular location, captured by a Ring camera, could be cross-referenced with their vehicle's movements as logged by Flock cameras, building an increasingly detailed record of their daily life.

Given this backdrop of escalating privacy concerns and deepening ties with law enforcement, the decision to launch "Search Party" and highlight its dog-finding capabilities is a masterstroke of public relations. Who, after all, could object to the heartwarming endeavor of reuniting lost puppies with their distraught families? The emotional resonance of a lost pet serves as a powerful distraction, deftly sidestepping the far more profound implications of the technology powering this seemingly benign service. It allows Ring to showcase its network’s formidable capabilities in a non-threatening, even endearing, light, while simultaneously normalizing the underlying infrastructure of constant, algorithmic vigilance. This strategic PR move attempts to reframe the company from a controversial surveillance provider into a benevolent community helper, leveraging universal empathy to mask the expansion of its monitoring potential.

However, despite the warm glow of reunited pets, the new "Search Party" function represents a troubling and arguably irreversible development in the evolution of mass surveillance. The original article rightly points out that while Ring’s "Fire Watch system" indexes neighborhood devices to look for inanimate signs of emergencies such as fires, "Search Party" means the company is now "capable of tracking living things as well." This distinction is critical. Fire detection looks for a generic physical hazard, such as smoke or flames, without needing to know who or what is nearby. Tracking a living being means recognizing one specific individual and following it from camera to camera, which opens a "Pandora’s box" of questions about identity, movement, and autonomy.

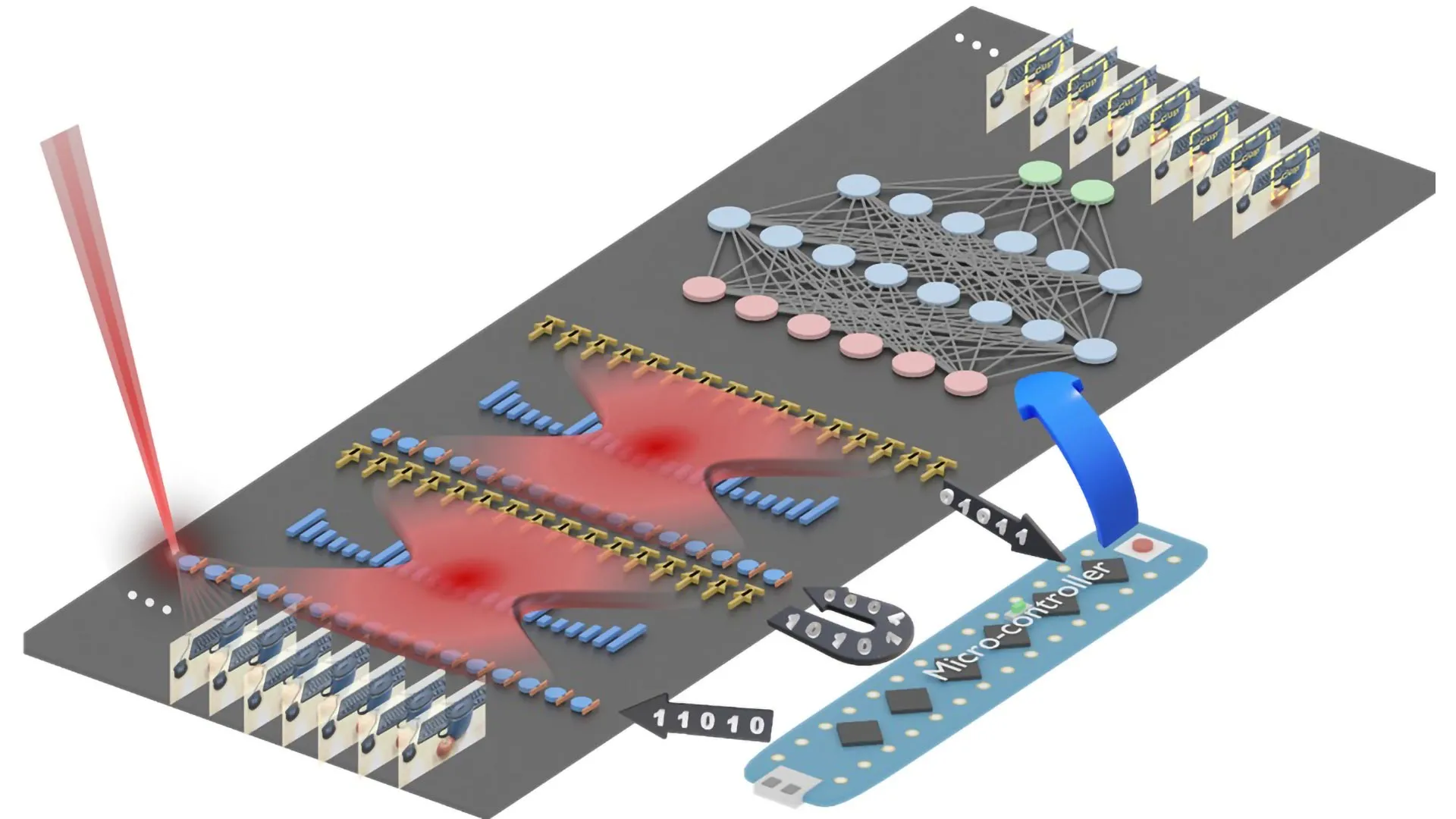

Once Ring’s network is calibrated to identify and track dogs, the technological leap to identifying and tracking humans (children, adults, "persons of interest," or even political protesters) becomes not just plausible but likely, if it is not already within reach of the existing system. Who decides when and how to deploy this immense power? What are the criteria for initiating a "search" for a living being, and who has the authority to request it? The company emphasizes that "camera owners choose on a case-by-case basis whether they want to share videos with a pet owner," implying user control. Yet choosing whether to share a clip is not the same as controlling the analysis behind it. The game-changer is the underlying capability itself: the ability of Ring’s AI to sift through countless hours of footage across an entire network to find a specific entity. A capability developed and refined for pets can be readily repurposed.

As MS Now columnist Hayes Brown observed, "there’s no world in which finding lost dogs is the final end-use for this technology." This statement cuts to the core of the issue: the "mission creep" that characterizes many surveillance technologies. What begins as a tool for public safety or convenience often expands to encompass broader, more intrusive applications. The same system that matches a photo of one particular dog against neighborhood footage could, in principle, be pointed at human faces, specific clothing, or even gait patterns. Ring’s cameras could then be used to track individuals well beyond the context of a lost pet: to locate a missing child or a vulnerable adult, or, more controversially, a criminal suspect or someone merely deemed "suspicious" by an algorithm or an authority. The infrastructure for pervasive, real-time tracking of individuals within a neighborhood is now in place; turning it into a tool for extensive human surveillance would require only a shift in purpose or an upgrade in analytical capabilities.

Ring’s spokesperson, in their defense, stated that "Ring’s Search Party feature does what neighbors have done for generations — help reunite lost dogs with their families — just with better technology," and that they "built the feature with strong privacy protections from the start." They also claimed that "since launch, Search Party has helped bring home more than a dog a day." While the sentiment of neighborly assistance is appealing, equating a decentralized, voluntary human effort with an AI-powered, centralized surveillance network owned by a tech giant is disingenuous. Traditional neighborly help relies on trust, limited scope, and human discretion. Ring’s "better technology," conversely, centralizes immense data, processes it with opaque algorithms, and holds the potential for large-scale, automated tracking. The "strong privacy protections" are also questionable in light of Ring’s documented history of privacy failures and data-sharing agreements with entities like Flock Safety and numerous police departments, which have consistently eroded individual privacy. The very notion of "case-by-case" consent, while seemingly empowering, often relies on users understanding the full implications of the technology, which is frequently not the case.

The broader societal implications of such ubiquitous surveillance cannot be overstated. With devices like Ring proliferating, public and semi-public spaces – our streets, sidewalks, and even parts of our private property – are increasingly becoming zones of constant, automated monitoring. This creates a "surveillance society" where every movement, every interaction, and every presence is potentially logged, analyzed, and stored. The erosion of anonymity in public spaces has profound consequences for civil liberties, potentially chilling free assembly, protest, and even simple freedom of movement. When individuals know they are constantly being watched, whether by "Search Party" or future iterations of surveillance tech, it can subtly alter behavior, fostering a sense of self-censorship and conformity.

Furthermore, the integration of AI with these surveillance systems raises concerns about algorithmic bias and the potential for false arrests, a risk the original article flags in its conclusion. AI-driven identification has already contributed to wrongful arrests in other surveillance contexts; the stakes only rise when an AI-powered network designed to identify specific entities is deployed at scale across residential areas. Errors in identification, misreadings of ordinary behavior, or biases embedded in the algorithms could lead to significant injustices that fall disproportionately on particular communities or individuals.

The introduction of "Search Party" is more than just a new feature; it is a clear signal that the technological capability for widespread, granular surveillance of living beings in our communities is not only possible but is actively being developed and deployed by private corporations. It underscores the urgent need for robust regulatory frameworks, transparent corporate practices, and an informed public debate about the acceptable limits of technology in our pursuit of safety and convenience. Without these, the "Pandora’s box" opened by Ring could unleash a future where privacy is an antiquated concept, and every neighborhood becomes a seamlessly monitored digital panopticon.