Researchers at the Niels Bohr Institute, working with international partners, have developed a system that detects and tracks rapid changes in the quantum states of qubits in real time. The approach combines commercially available hardware with new adaptive measurement techniques, letting scientists observe shifts in qubit behavior that earlier methods were too slow to resolve. The result offers a clearer view of how quantum processors behave from moment to moment and could help accelerate the development of larger, more stable, and more powerful quantum computers.

Qubits, the fundamental building blocks of quantum computers, are inherently fragile. Their ability to exist in multiple states simultaneously, a phenomenon known as superposition, is what gives quantum computers the potential to outperform classical machines on certain complex calculations. However, the materials used to build qubits often contain microscopic defects. These defects are not static: they can shift hundreds of times per second. Each shift alters the qubit’s environment and changes how quickly the qubit loses its quantum information through a process called relaxation. Until now, the tools used to measure this relaxation rate were too slow. They needed up to a minute to produce a single averaged value, which masked the fast and often erratic behavior of the qubit. It is like driving through an obstacle course where the obstacles appear and disappear faster than the driver can react, which makes precise control impossible.
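To make the averaging problem concrete, here is a minimal toy sketch in Python. It is not the team's model: the two rate values, the 5 ms switching period, and the function name `toy_rate_trace` are all illustrative assumptions. It shows a relaxation rate that toggles between two values and the single number a slow, averaged measurement would report.

```python
def toy_rate_trace(slow_rate_hz, fast_rate_hz, num_samples, samples_per_switch):
    """Hypothetical toy trace: the relaxation rate toggles between two values.

    One sample stands for one millisecond, so the rate switches every
    `samples_per_switch` milliseconds. Real defect dynamics are not periodic;
    this is only an illustration.
    """
    trace = []
    for k in range(num_samples):
        block = k // samples_per_switch
        trace.append(fast_rate_hz if block % 2 else slow_rate_hz)
    return trace


# Illustrative numbers only: relaxation times of 100 us and 20 us.
slow_rate_hz, fast_rate_hz = 1e4, 5e4
rates = toy_rate_trace(slow_rate_hz, fast_rate_hz, num_samples=1000, samples_per_switch=5)

# A slow measurement reports roughly the average over its whole window.
mean_rate_hz = sum(rates) / len(rates)

print("rates actually visited:", sorted(set(rates)))
print("rate reported by a slow average:", mean_rate_hz)
```

In this toy trace the averaged rate is a value the qubit never actually takes, which is the sense in which a slow measurement hides what the qubit is doing.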

The research team, hailing from the Niels Bohr Institute’s Center for Quantum Devices and the Novo Nordisk Foundation Quantum Computing Programme, was led by postdoctoral researcher Dr. Fabrizio Berritta. Their innovative solution is a real-time adaptive measurement system that continuously monitors and updates its estimate of a qubit’s relaxation rate. This system operates at a speed that matches the natural cadence of the fluctuations themselves, a significant leap from previous methods that lagged far behind. The project also benefited from the expertise of scientists at the Norwegian University of Science and Technology, Leiden University, and Chalmers University, highlighting the collaborative nature of cutting-edge scientific endeavors.

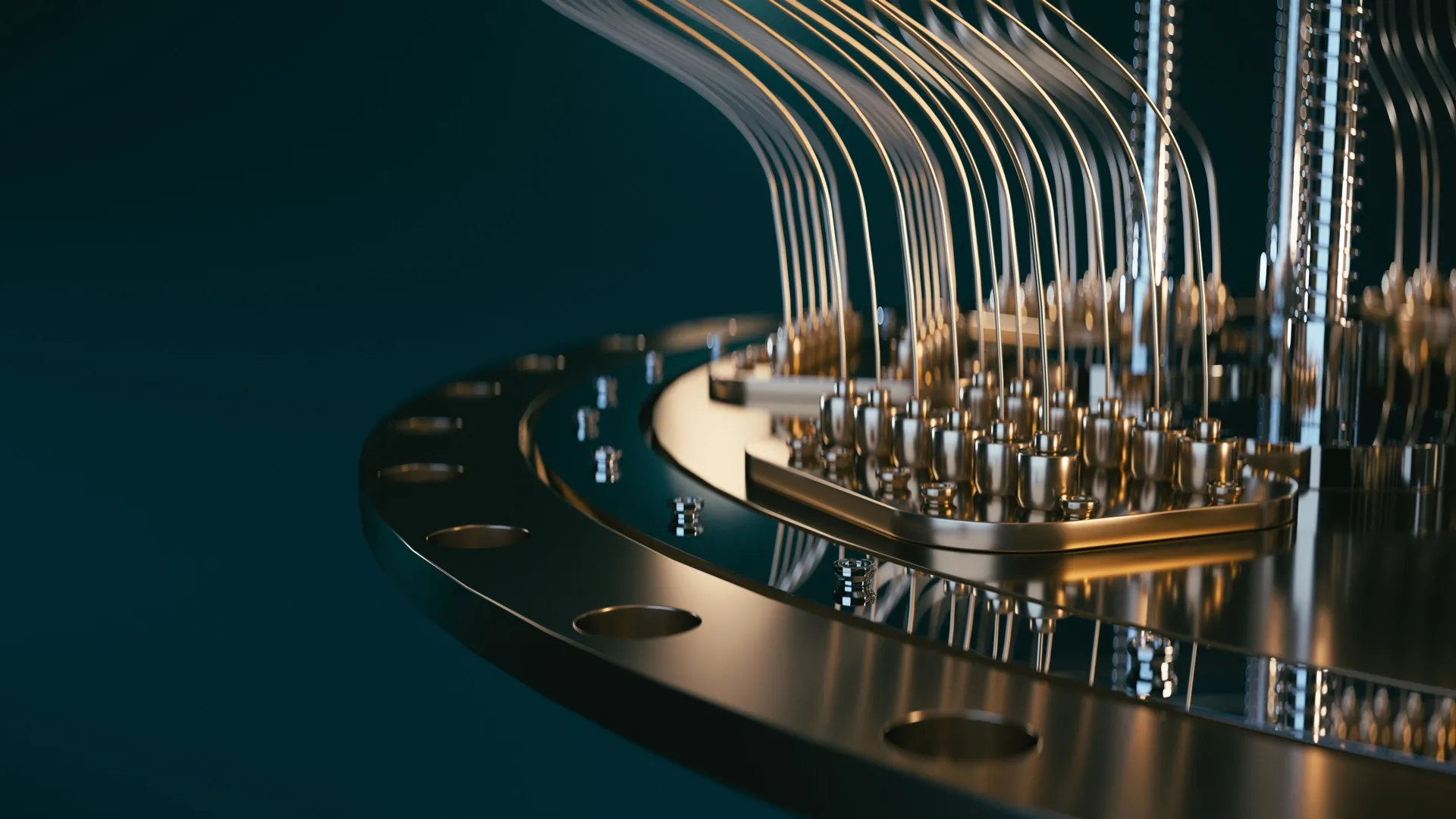

At the heart of this new approach lies a Field Programmable Gate Array (FPGA). Unlike a general-purpose CPU, an FPGA is a reconfigurable classical chip whose logic can be tailored to a specific task, which gives it very low and predictable latency and makes it well suited to real-time control and data processing. By running both the experimental control and the measurement analysis directly on the FPGA, the researchers drastically reduced latency. The system can estimate the qubit’s energy loss rate from just a handful of measurements and can update that estimate within milliseconds. This removes the bottleneck of transferring data to a separate, slower conventional computer for analysis.

Programming an FPGA for such a specialized and demanding application is far from trivial. Nevertheless, the team implemented a Bayesian model within the FPGA-based controller. The model is updated after every single qubit measurement, so the system continuously refines its estimate of the qubit’s relaxation rate. This real-time adaptation lets the controller keep pace with the qubit’s constantly changing environment. Measurements and the adjustments that follow happen on a timescale comparable to that of the fluctuations themselves, which makes the system approximately one hundred times faster than previous demonstrations. The added speed has also produced a scientific result: how fast these fluctuations occur in superconducting qubits was previously unknown, and the experiments have now measured it.
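The idea of updating a Bayesian estimate after every measurement can be sketched in a few lines of Python. This is a simplified illustration, not the team's FPGA implementation. It assumes a fixed true rate, ideal readout, a grid of candidate rates, and a simple rule that sets the next wait time to the inverse of the current estimate. All function names and numbers here are hypothetical.

```python
import math
import random


def make_rate_grid(min_rate_hz, max_rate_hz, num_points):
    """Log-spaced grid of candidate relaxation rates (an assumed discretization)."""
    ratio = max_rate_hz / min_rate_hz
    return [min_rate_hz * ratio ** (i / (num_points - 1)) for i in range(num_points)]


def bayes_update(rates, weights, wait_time_s, still_excited):
    """One Bayes step after a single-shot measurement.

    Assumed model: a qubit prepared in its excited state survives a wait of
    `wait_time_s` with probability exp(-rate * wait_time_s).
    """
    updated = []
    for rate, weight in zip(rates, weights):
        p_survive = math.exp(-rate * wait_time_s)
        likelihood = p_survive if still_excited else 1.0 - p_survive
        updated.append(weight * likelihood)
    total = sum(updated)
    return [w / total for w in updated]


def posterior_mean(rates, weights):
    """Current point estimate of the relaxation rate."""
    return sum(rate * weight for rate, weight in zip(rates, weights))


def track_rate(true_rate_hz, num_shots, seed=0):
    """Simulate shots on a toy qubit and refine the estimate after each one."""
    rng = random.Random(seed)
    rates = make_rate_grid(1e3, 1e5, 200)
    weights = [1.0 / len(rates)] * len(rates)  # flat prior over the grid points
    estimate = posterior_mean(rates, weights)
    for _ in range(num_shots):
        wait_time_s = 1.0 / estimate  # adaptive choice: probe near the estimated decay time
        still_excited = rng.random() < math.exp(-true_rate_hz * wait_time_s)
        weights = bayes_update(rates, weights, wait_time_s, still_excited)
        estimate = posterior_mean(rates, weights)
    return estimate


if __name__ == "__main__":
    print(track_rate(true_rate_hz=4e4, num_shots=1000))
```

Each shot only multiplies and renormalizes a small table of weights, which is the kind of lightweight arithmetic that is plausible to run between measurements on control hardware. A tracker for a rate that actually fluctuates would also need some way to forget old data, which this sketch omits.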

A key factor in the success of this project was the integration of a commercial classical control system with custom-built quantum hardware. The researchers used a commercially available FPGA-based controller, the OPX1000 from Quantum Machines. The device is programmed in a language similar to Python, which is widely used among physicists, and this makes the approach accessible to research groups worldwide. Making advanced control technology this accessible can help accelerate progress in the field.

The close integration of this controller with advanced quantum hardware was facilitated by a strong partnership between the Niels Bohr Institute research group, led by Associate Professor Morten Kjærgaard, and Chalmers University, where the quantum processing unit itself was meticulously designed and fabricated. Associate Professor Kjærgaard emphasizes the critical role of the controller: "The controller enables very tight integration between logic, measurements and feedforward: these components made our experiment possible." This synergy between hardware design and advanced control is a testament to the power of interdisciplinary collaboration.

Real-time calibration is likely to matter a great deal for the future of quantum computers. Practical, large-scale quantum computers are still a work in progress, and advances like this one are important steps toward them. By uncovering previously hidden qubit dynamics, the findings change how scientists approach the testing and calibration of superconducting quantum processors. While materials and manufacturing processes are still being refined, real-time monitoring and adjustment are becoming essential for improving the reliability of these sensitive devices. The project also underscores the value of strong partnerships between academic research institutions and industry, and of making creative use of technology that is already available.

Dr. Berritta elaborates on the practical implications: "Nowadays, in quantum processing units in general, the overall performance is not determined by the best qubits, but by the worst ones: those are the ones we need to focus on. The surprise from our work is that a ‘good’ qubit can turn into a ‘bad’ one in fractions of a second, rather than minutes or hours." This realization highlights the dynamic nature of qubit performance and the limitations of slow, average-based measurements.

"With our algorithm, the fast control hardware can pinpoint which qubit is ‘good’ or ‘bad’ basically in real time," Dr. Berritta continues. "We can also gather useful statistics on the ‘bad’ qubits in seconds instead of hours or days. This ability to rapidly identify and characterize problematic qubits is crucial for efficient debugging and optimization."

However, the journey towards fully understanding and controlling quantum systems is ongoing. "We still cannot explain a large fraction of the fluctuations we observe," Dr. Berritta admits. "Understanding and controlling the physics behind such fluctuations in qubit properties will be necessary for scaling quantum processors to a useful size." This acknowledgment points to the next frontier in quantum research: delving deeper into the fundamental physics that governs qubit behavior and developing strategies to mitigate or even harness these fluctuations. This breakthrough, therefore, is not an end in itself, but a powerful new tool that opens up new avenues of investigation and brings the promise of fault-tolerant quantum computing closer to reality.