Researchers at the Niels Bohr Institute have sharply accelerated the detection of changes in the fragile quantum states of qubits. By combining commercially available hardware with new adaptive measurement techniques, the team can now observe rapid shifts in qubit behavior that were previously too fast for conventional methods to resolve.

Qubits, the fundamental building blocks of quantum computers, could eventually enable computations beyond the reach of even today’s most powerful supercomputers. However, qubits are extremely sensitive to their environment. The materials used to build them often contain microscopic defects whose precise behavior is still under investigation. These defects can shift position rapidly, sometimes hundreds of times per second. As they move, they change the rate at which the qubit loses energy, and with it the quantum information it holds.

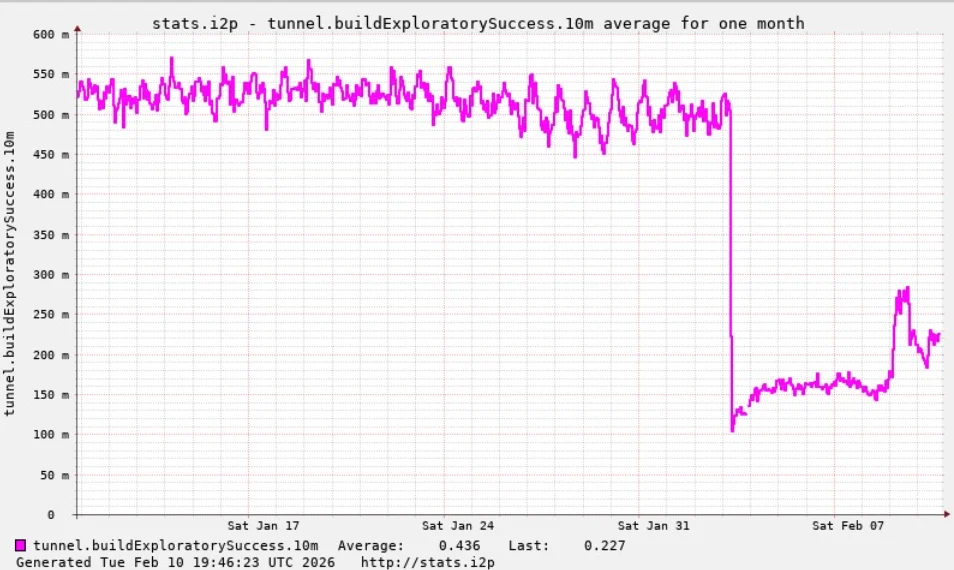

Until now, the standard methods for assessing qubit performance were slow, often requiring up to a minute to complete a single measurement. That is far too slow to capture the rapid fluctuations that characterize qubit behavior. Scientists could therefore determine only an averaged energy loss rate, which masks the true, often volatile, dynamics of the qubit. The situation resembles asking a strong workhorse to pull a plow while obstacles keep appearing in its path faster than anyone can react. The animal may be perfectly capable, but the unpredictable disruptions make the task far harder.
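The masking effect of slow averaging can be illustrated with a toy calculation. The sketch below is purely illustrative: the trace values, the sampling interval, and the function name `windowed_means` are assumptions made for this example, not data or code from the experiment.

```python
def windowed_means(samples, window):
    """Average consecutive, non-overlapping windows of a sampled trace."""
    return [
        sum(samples[i:i + window]) / window
        for i in range(0, len(samples) - window + 1, window)
    ]

# Hypothetical energy loss rate (arbitrary units), sampled once per millisecond.
# It jumps between a low and a high value every 5 ms.
trace = ([10.0] * 5 + [50.0] * 5) * 6  # 60 ms in total

fast = windowed_means(trace, 5)    # 5 ms windows resolve both levels
slow = windowed_means(trace, 60)   # one long window sees only the average

print(fast[:4])  # [10.0, 50.0, 10.0, 50.0]
print(slow)      # [30.0]
```

The long window reports a single value of 30.0 that the qubit in this toy model never actually takes, which is the sense in which an averaged rate hides the underlying switching.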

FPGA-Powered Real-Time Qubit Control: A Paradigm Shift in Measurement

The research was carried out by a team from the Niels Bohr Institute’s Center for Quantum Devices and the Novo Nordisk Foundation Quantum Computing Programme, led by postdoctoral researcher Dr. Fabrizio Berritta. Working with scientists from the Norwegian University of Science and Technology, Leiden University, and Chalmers University, the team developed a real-time adaptive measurement system. The system tracks changes in a qubit’s energy loss rate, also called its relaxation rate, as they occur.

At the core of the approach is a fast classical controller that updates its estimate of a qubit’s relaxation rate within milliseconds. That speed matches the timescale of the fluctuations themselves, whereas older methods lagged behind by seconds or even minutes and could offer only a retrospective view.

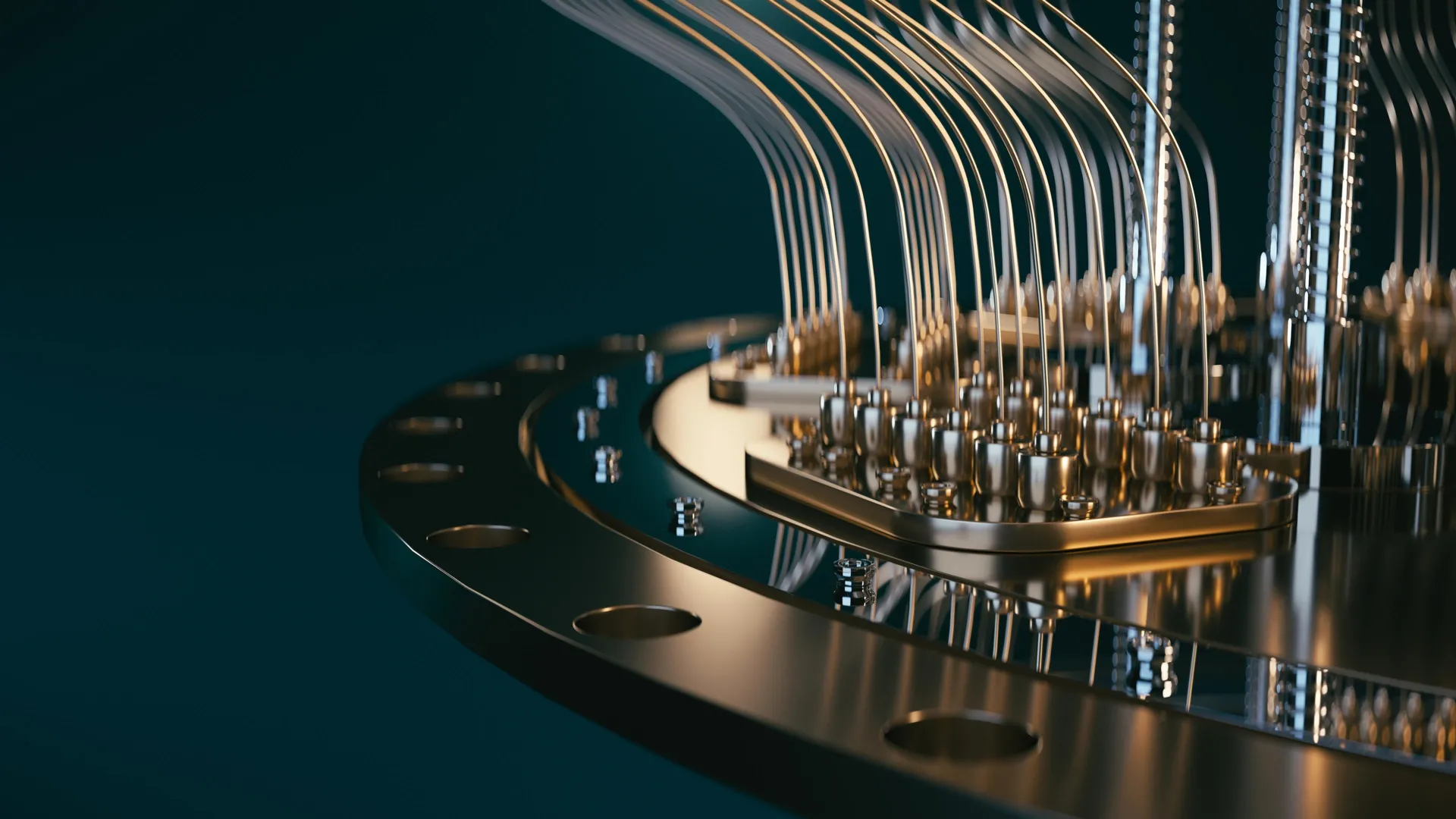

To achieve this responsiveness, the research team used a Field Programmable Gate Array (FPGA). FPGAs are reconfigurable classical chips whose logic can be tailored to a specific task, which makes them well suited to work that demands millisecond-level responsiveness. By running the experiment directly on the FPGA, the team could quickly generate a "best guess" of the qubit’s energy loss rate from a small number of measurements. This avoided the time-consuming transfer of data to a conventional computer for analysis, removing a significant bottleneck.

Programming FPGAs for such specialized tasks can be challenging, but the researchers succeeded in implementing an adaptive Bayesian model within the controller. The model is updated after every single qubit measurement, so the system continuously refines its estimate of the qubit’s current relaxation rate in real time.
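The idea of updating a Bayesian estimate after every measurement can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the team’s implementation and not code for the OPX1000: it assumes a single-shot experiment in which the qubit is excited, left to wait, and then read out, with survival probability exp(-rate × wait). The grid bounds, the rule for choosing the waiting time, and all function names are hypothetical.

```python
import math
import random

def make_grid(rate_min, rate_max, n):
    """Log-spaced grid of candidate relaxation rates (in 1/s)."""
    step = (math.log(rate_max) - math.log(rate_min)) / (n - 1)
    return [rate_min * math.exp(i * step) for i in range(n)]

def bayes_update(grid, posterior, wait, still_excited):
    """Fold one single-shot outcome into the posterior and renormalize."""
    weights = []
    for rate, p in zip(grid, posterior):
        p_excited = math.exp(-rate * wait)  # assumed exponential decay model
        likelihood = p_excited if still_excited else 1.0 - p_excited
        weights.append(p * likelihood)
    total = sum(weights)
    return [w / total for w in weights]

def posterior_mean(grid, posterior):
    """Current 'best guess' of the relaxation rate."""
    return sum(rate * p for rate, p in zip(grid, posterior))

def track(true_rate, n_shots=400, seed=0):
    """Simulate adaptive estimation of a fixed, hypothetical relaxation rate."""
    rng = random.Random(seed)
    grid = make_grid(1e3, 1e6, 200)                 # assumed search range
    posterior = [1.0 / len(grid)] * len(grid)       # flat prior over the grid
    for _ in range(n_shots):
        estimate = posterior_mean(grid, posterior)
        wait = 1.0 / estimate                       # adaptive step: wait ~ one decay time
        still_excited = rng.random() < math.exp(-true_rate * wait)  # simulated readout
        posterior = bayes_update(grid, posterior, wait, still_excited)
    return posterior_mean(grid, posterior)

if __name__ == "__main__":
    print(track(true_rate=2e4))  # estimate for a simulated 50-microsecond decay time
```

The adaptive part is the choice of waiting time from the current estimate: a wait close to one decay time makes each yes/no outcome about as informative as it can be, so fewer shots are needed. Following a rate that drifts over time would additionally require letting the posterior broaden between shots, which this sketch leaves out.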

As a result, the controller keeps pace with the qubit’s changing environment: measurements are taken and adjustments made on roughly the same timescale as the fluctuations themselves. The system is approximately one hundred times faster than any previously demonstrated method for tracking this kind of qubit behavior. The work also produced a scientific result. Before these experiments, it was largely unknown how fast fluctuations occur in superconducting qubits, and the new measurements show that they can happen within fractions of a second.

Commercial Quantum Hardware Meets Advanced Control: Accessibility and Integration

FPGAs are a well-established technology across science and engineering. For this application, the researchers used a commercially available FPGA-based controller, the OPX1000, manufactured by Quantum Machines. The controller is programmed in a language very similar to Python, which is widely used among physicists. This familiar interface makes the technology accessible to research groups worldwide.

Integrating the controller with the quantum hardware relied on close collaboration. The research group at the Niels Bohr Institute, led by Associate Professor Morten Kjaergaard, worked with Chalmers University, where the quantum processing unit itself was designed and fabricated. "The controller enables a very tight integration between logic, measurements, and feedforward," states Morten Kjaergaard. "These components were absolutely essential in making our experiment possible." The collaboration shows how closely hardware development and control systems are linked in modern quantum research.

Why Real-Time Calibration Matters for Quantum Computers: Paving the Way for Scalability

The promise of quantum technologies is vast, offering entirely new paradigms for computation and problem-solving. However, the realization of practical, large-scale quantum computers remains an active area of development, with progress often characterized by incremental advancements interspersed with occasional, transformative leaps forward.

By uncovering these previously hidden, rapid dynamics within qubits, the research changes how scientists may approach the testing and calibration of superconducting quantum processors. While the limitations of materials and manufacturing processes are still being addressed, real-time monitoring and adjustment appears to be essential for improving the reliability and stability of quantum systems. The results also underline the value of partnerships between academic research institutions and industry, and of the creative use of existing technology.

"Nowadays, in quantum processing units in general, the overall performance is not determined by the best qubits, but by the worst ones; those are the ones we need to focus on," explains Fabrizio Berritta. "The surprise from our work is that a ‘good’ qubit can turn into a ‘bad’ one in fractions of a second, rather than minutes or hours." This observation highlights the dynamic and often unpredictable nature of qubit quality.

"With our algorithm, the fast control hardware can pinpoint which qubit is ‘good’ or ‘bad’ basically in real time," Berritta continues. "We can also gather useful statistics on the ‘bad’ qubits in seconds instead of hours or days. This dramatically accelerates the diagnostic process, allowing for more rapid iteration and improvement."

Much remains unexplained, however. "We still cannot explain a large fraction of the fluctuations we observe," acknowledges Berritta. "Understanding and controlling the physics behind such fluctuations in qubit properties will be necessary for scaling quantum processors to a useful size. This is the next frontier." A deeper understanding of the physics governing qubit behavior, and better control over it, will be essential for eventually building fault-tolerant, large-scale quantum computers.