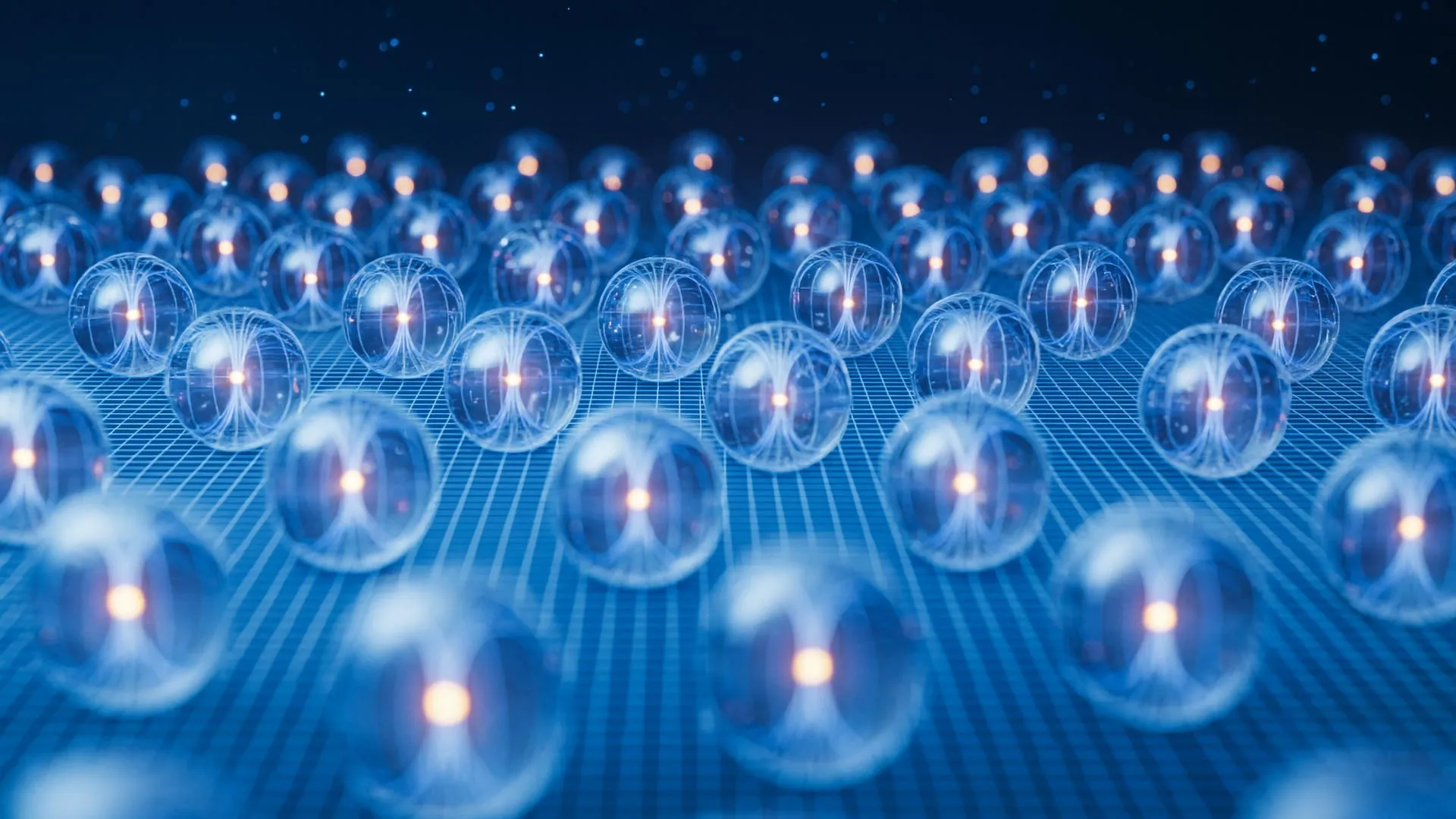

The main obstacle to useful quantum computers today is that qubits, the fundamental units of quantum information, lose their information quickly. As Andrew Houck, Princeton’s dean of engineering and co-principal investigator on the study, put it: "The real challenge, the thing that stops us from having useful quantum computers today, is that you build a qubit and the information just doesn’t last very long." The new work, published Nov. 5 in the journal Nature, is a major step toward overcoming that limitation.

The Princeton team’s qubit has a coherence time exceeding 1 millisecond. That is three times the longest coherence time previously reported in a laboratory setting and nearly fifteen times the standard in industrial quantum processors. The researchers also built a functional quantum chip based on the new qubit design, showing that the approach improves qubit stability, can support error correction, and can scale to larger systems.

Princeton’s qubit is compatible with the architectures used by industry leaders such as Google and IBM. According to the team’s analysis, swapping the new design into Google’s Willow processor could improve its performance a thousandfold. Houck added that the advantage grows rapidly as the number of qubits in a system increases.

The Paramount Importance of Enhanced Qubit Durability for Quantum Computing

Quantum computers promise to solve problems that are intractable for even the most powerful classical computers. Today, however, they are limited by the instability of qubits, which lose their quantum information before complex calculations can finish. Extending coherence time is therefore a prerequisite for practical quantum hardware. Princeton’s result is the largest single gain in coherence time in more than a decade.

While research groups are exploring many qubit technologies, Princeton’s design builds on the widely used and well-understood transmon qubit. Transmons are superconducting circuits operated at extremely low temperatures. They are valued for their resilience against environmental noise and their compatibility with existing manufacturing processes.

Despite these strengths, improving the coherence times of transmon qubits has proved difficult. Recent findings from Google, for instance, identified material defects as the main obstacle to improving its latest generation of quantum processors.

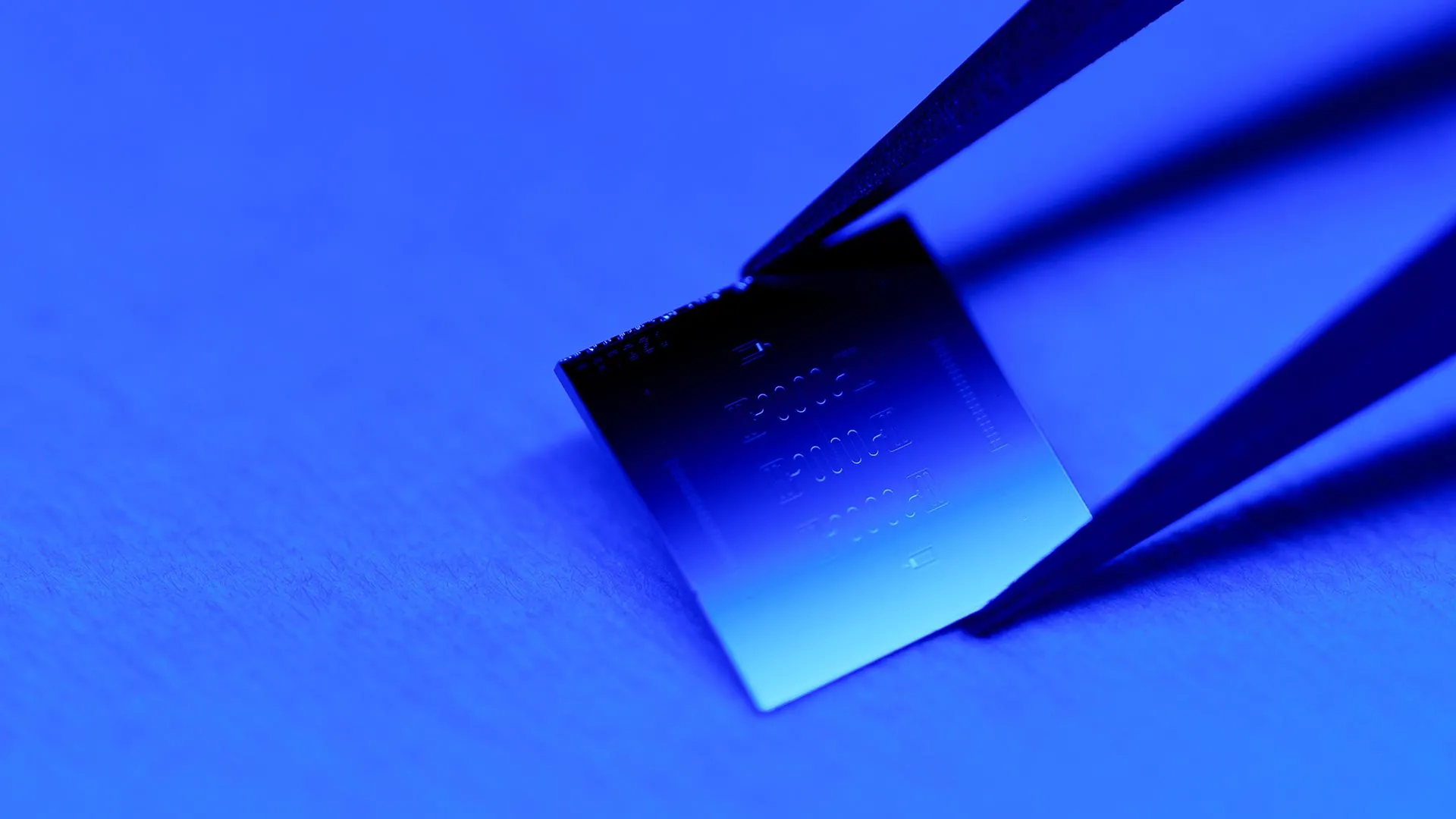

A Novel Materials Strategy: The Synergistic Power of Tantalum and Silicon

The Princeton team addressed these material limitations in two steps. First, they used tantalum, a metal that helps fragile circuits retain energy. Second, they replaced the conventional sapphire substrate with high-purity silicon, the standard material of the semiconductor industry. Depositing tantalum directly onto silicon posed technical challenges arising from how the two materials interact, but the researchers overcame them and found substantial benefits in the process.

Nathalie de Leon, co-director of Princeton’s Quantum Initiative and co-principal investigator on the project, said the tantalum-silicon design not only outperforms previous approaches but is also simpler to manufacture at scale. "Our results are really pushing the state of the art," she said.

Michel Devoret, chief scientist for hardware at Google Quantum AI, which partially funded the research, described how difficult it has been to extend the lifetime of quantum circuits, calling the effort a "graveyard" of abandoned attempts. "Nathalie really had the guts to pursue this strategy and make it work," said Devoret, a winner of the 2025 Nobel Prize in physics.

The project was funded primarily by the U.S. Department of Energy National Quantum Information Science Research Centers and the Co-design Center for Quantum Advantage (C2QA), a center that Houck directed from 2021 to 2025 and where he now serves as chief scientist. Postdoctoral researcher Faranak Bahrami and graduate student Matthew P. Bland are co-lead authors of the paper.

Unveiling the Mechanism of Tantalum’s Qubit Stabilization

Houck, who is also the Anthony H.P. Lee ’79 P11 P14 Professor of Electrical and Computer Engineering, explained that two factors determine a quantum computer’s power: the total number of qubits that can be connected together, and the number of operations each qubit can perform before errors become unacceptable. Making individual qubits more durable improves both. Longer coherence times are essential for scaling quantum systems and for implementing robust error correction.
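To make the second factor concrete, the sketch below estimates how many back-to-back operations fit within a qubit's coherence time. It is a simplified illustration, not the team's analysis: it assumes the qubit's state decays exponentially with the coherence time, and the gate duration (50 nanoseconds) and the acceptable survival level (99 percent) are hypothetical values chosen only for the example. The 1-millisecond figure and the factor of roughly fifteen come from the article; the function names are invented here.

```python
import math


def survival_probability(elapsed_s: float, coherence_time_s: float) -> float:
    """Fraction of the qubit state retained after elapsed_s seconds.

    Assumes simple exponential decay, a common first-order model.
    """
    return math.exp(-elapsed_s / coherence_time_s)


def max_operations(coherence_time_s: float, gate_time_s: float,
                   min_survival: float) -> int:
    """Largest number of sequential gates that keeps survival >= min_survival."""
    # Solve exp(-t / T) >= min_survival for the time budget t.
    time_budget_s = -coherence_time_s * math.log(min_survival)
    return math.floor(time_budget_s / gate_time_s)


if __name__ == "__main__":
    GATE_TIME_S = 50e-9      # hypothetical gate duration (assumption)
    MIN_SURVIVAL = 0.99      # hypothetical tolerance (assumption)
    new_qubit_s = 1e-3       # "exceeding 1 millisecond" per the article
    baseline_s = new_qubit_s / 15  # rough baseline implied by "nearly fifteen times"

    for label, t in (("baseline", baseline_s), ("new qubit", new_qubit_s)):
        ops = max_operations(t, GATE_TIME_S, MIN_SURVIVAL)
        print(f"{label}: about {ops} operations within tolerance")
```

Under this model the operation budget scales linearly with coherence time, so a fifteenfold longer coherence time allows roughly fifteen times as many operations at the same tolerance.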

Energy loss is the most common cause of failure in these systems. Microscopic defects on the surface of the metal can trap energy and disrupt the qubit’s state during a computation, and the disruptions multiply as more qubits are added. Tantalum helps because it typically has far fewer such defects than commonly used metals like aluminum. Fewer defects mean fewer errors, and the errors that remain are easier to correct.

Houck and de Leon first introduced tantalum for superconducting chips in 2021, with help from Princeton chemist Robert Cava, the Russell Wellman Moore Professor of Chemistry. Cava, a specialist in superconducting materials, took an interest in the problem after attending one of de Leon’s talks, and in their later discussions he suggested tantalum as a promising candidate. "Then she went and did it," Cava said. "That’s the amazing part."

Following that suggestion, researchers from the three labs built a tantalum-based superconducting circuit on a sapphire substrate. The initial results showed a marked improvement in coherence time, close to the world record at the time.

Bahrami noted that tantalum is also extremely durable and can withstand the harsh cleaning needed to remove contaminants during fabrication. "You can put tantalum in acid, and still the properties don’t change," she said.

With the contaminants removed, the team measured the remaining sources of energy loss and found that the sapphire substrate accounted for most of it. Switching to a high-purity silicon substrate eliminated that source of loss. The combination of tantalum and silicon, together with refined fabrication techniques, produced one of the largest improvements ever recorded for a transmon qubit. Houck described the outcome as "a major breakthrough on the path to enabling useful quantum computing."

Houck added that the benefits of the design grow exponentially with system size. Replacing today’s industry-leading qubits with Princeton’s version could make a hypothetical 1,000-qubit computer work roughly one billion times better.
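One way to see how a modest per-qubit improvement could compound with system size is a toy error-correction model. The sketch below is not the team's calculation. It assumes a commonly used surface-code heuristic in which the logical error rate scales as the physical error rate raised to the power (d + 1) / 2, where d is the code distance, and it assumes a rotated surface-code patch of 2d² − 1 physical qubits. The fivefold physical improvement is a hypothetical input, and the function names are invented for this example.

```python
def rotated_surface_code_qubits(distance: int) -> int:
    """Physical qubits in one rotated surface-code patch.

    d*d data qubits plus d*d - 1 measurement qubits (assumed layout).
    """
    return 2 * distance * distance - 1


def logical_improvement(physical_improvement: float, distance: int) -> float:
    """Factor by which the logical error rate drops.

    Uses the heuristic eps_L ~ (p / p_th) ** ((d + 1) / 2), which applies
    only when the physical error rate p is below the threshold p_th.
    """
    return physical_improvement ** ((distance + 1) / 2)


if __name__ == "__main__":
    PHYSICAL_IMPROVEMENT = 5.0  # hypothetical per-qubit error reduction (assumption)
    for distance in (3, 7, 15, 21):
        qubits = rotated_surface_code_qubits(distance)
        gain = logical_improvement(PHYSICAL_IMPROVEMENT, distance)
        print(f"distance {distance:2d}: {qubits:4d} qubits, "
              f"logical error reduced about {gain:.3g}x")
```

In this model the same per-qubit improvement is raised to a higher power as the code grows, which is why the overall gain rises so steeply with qubit count.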

A Silicon-Based Design Architected for Industry-Scale Proliferation

The project drew on expertise from three areas. Houck’s group designed and optimized the superconducting circuits. De Leon’s lab specializes in quantum metrology and in the materials and fabrication methods that determine qubit performance. Cava’s group contributed decades of research on superconducting materials. Together, the team achieved results that no single group could have reached alone, and the work has already drawn attention from the quantum industry.

Devoret stressed the importance of partnerships between universities and industry in advancing new technologies. "There is a rather harmonious relationship between industry and academic research," he said. University researchers can explore the fundamental limits of quantum performance, while industry partners translate those findings into practical, large-scale systems.

"We’ve shown that it’s possible in silicon," de Leon said. "The fact that we’ve shown what the critical steps are, and the important underlying characteristics that will enable these kinds of coherence times, now makes it pretty easy for anyone who’s working on scaled processors to adopt."

The paper, "Millisecond lifetimes and coherence times in 2D transmon qubits," was published in Nature on Nov. 5. In addition to de Leon, Houck, Cava, Bahrami, and Bland, the authors include Jeronimo G.C. Martinez, Paal H. Prestegaard, Basil M. Smitham, Atharv Joshi, Elizabeth Hedrick, Alex Pakpour-Tabrizi, Shashwat Kumar, Apoorv Jindal, Ray D. Chang, Ambrose Yang, Guangming Cheng, and Nan Yao. The research was funded primarily by the U.S. Department of Energy, Office of Science, National Quantum Information Science Research Centers, and the Co-design Center for Quantum Advantage (C2QA), with partial support from Google Quantum AI.