In a dramatic turn of events that has sent shockwaves through the burgeoning artificial intelligence sector, OpenAI has secured a landmark agreement with the United States Department of Defense to deploy its cutting-edge AI models within highly sensitive, classified military networks. The deal was announced late on a Friday, mere hours after the White House issued an unprecedented directive ordering all federal agencies to stop using technology developed by OpenAI’s primary rival, Anthropic, citing national security concerns. The rapid succession of these announcements underscores the escalating geopolitical stakes in the global AI race and the ethical dilemmas confronting tech companies as their innovations become central to national defense strategies.

Sam Altman, the charismatic CEO of OpenAI, took to X (formerly Twitter) to share the news of the company’s significant foray into military applications. In his post, Altman confirmed that OpenAI would be integrating its advanced models directly into the Pentagon’s “classified network.” He highlighted that the Department of Defense had demonstrated “deep respect for safety” and a commendable willingness to operate within OpenAI’s established ethical and operational limits. This statement immediately drew scrutiny, given the circumstances surrounding Anthropic’s recent ostracization from government contracts, raising questions about the true extent and interpretation of these “limits.”

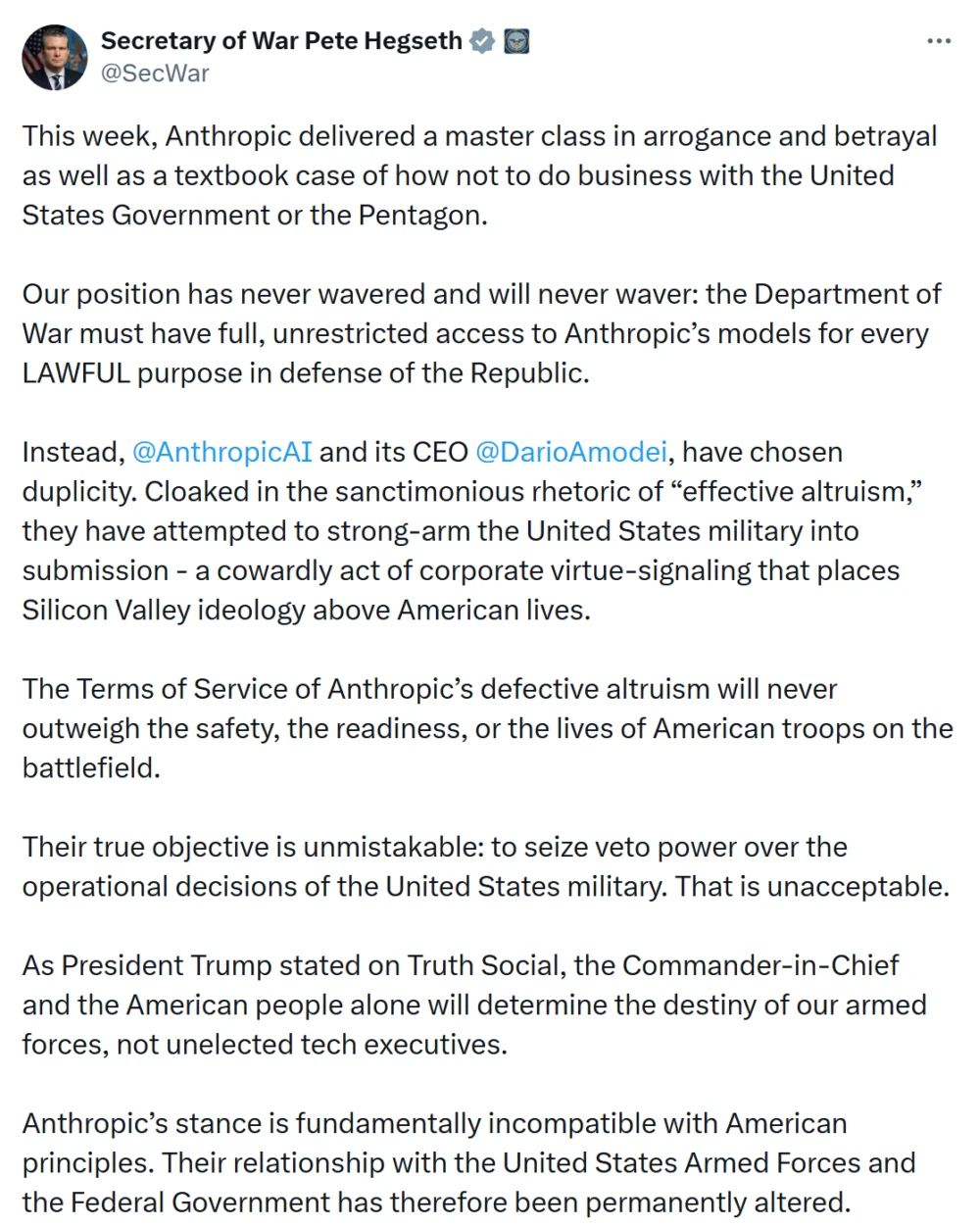

The week leading up to these announcements was nothing short of turbulent for the AI industry, marked by a series of events that redefined the boundaries of tech-government partnerships. Earlier on the same fateful Friday, Defense Secretary Pete Hegseth had publicly labeled Anthropic a “Supply-Chain Risk to National Security.” This designation, typically reserved for foreign entities or those with demonstrable ties to adversarial nations, is a severe blow, effectively blacklisting Anthropic from future defense contracts and requiring all existing defense contractors to certify that they are not utilizing the company’s models. Secretary Hegseth, a figure known for his hawkish stance on national security and technology, emphasized the critical importance of vetting AI partners to protect sensitive government operations from potential vulnerabilities or foreign exploitation.

Concurrently, and perhaps more consequentially, President Donald Trump issued a sweeping directive via Truth Social ordering every U.S. federal agency to immediately stop using Anthropic technology. Although agencies already heavily reliant on Anthropic’s systems were granted a six-month transition period to limit disruption, the message was unequivocal: Anthropic was out. A presidential mandate of this kind, rarely directed at a domestic technology firm, signaled an extraordinary level of concern at the highest levels of government about Anthropic’s offerings. Together, the actions of the Defense Department and the White House marked Anthropic’s sudden and dramatic fall from grace in federal contracting.

Before this dramatic reversal, Anthropic had been at the forefront of AI integration within the Pentagon. The company was the first AI lab to successfully deploy its models across the Pentagon’s highly secure, classified environment, a significant achievement secured through an initial $200 million contract signed in July. This earlier success had positioned Anthropic as a key partner in modernizing military intelligence and operational capabilities. However, negotiations for further expansion ultimately collapsed due to insurmountable differences over the ethical deployment of AI.

Anthropic, founded by former OpenAI researchers who left over concerns about OpenAI’s direction and safety protocols, has long championed an “AI safety-first” approach, developing what it calls “Constitutional AI” to give its models ethical guardrails. During its discussions with the Department of Defense, Anthropic reportedly sought ironclad guarantees that its software would not be repurposed for autonomous weapons systems capable of making life-or-death decisions without human oversight, or for domestic mass surveillance that could infringe on civil liberties. These demands reflected the core ethical principles on which Anthropic was built.

However, the Defense Department, operating under a mandate to leverage every available technological advantage for national security, reportedly insisted that the technology be available for all lawful military purposes. This fundamental divergence (Anthropic’s insistence on restricted use versus the DoD’s demand for broad operational flexibility) proved unbridgeable, ending negotiations abruptly and leading to Anthropic’s blacklisting. In a public statement, Anthropic said it was “deeply saddened” by the designation and vowed to challenge the decision in court. The company also warned that the move could set a dangerous precedent, chilling future collaboration between American technology firms and government agencies as political and ethical scrutiny of AI partnerships intensifies worldwide.

Intriguingly, Sam Altman claimed that OpenAI maintains “similar restrictions” to those Anthropic sought, and that these stipulations were written explicitly into the new agreement with the Department of Defense. According to Altman, OpenAI’s policy prohibits domestic mass surveillance and requires human responsibility for all decisions involving the use of force, including the operation of automated weapons systems. The claim immediately raised eyebrows across the industry and among the public. Given the DoD’s firm rejection of Anthropic’s apparently similar demands, many questioned how OpenAI had secured these concessions, or whether subtle but significant differences in the wording or interpretation of the “restrictions” had allowed the deal to proceed. Critics asked whether OpenAI’s ethical lines were truly as unyielding as Anthropic’s, or whether a more pragmatic, less stringent interpretation had been adopted to close the agreement.

The news of OpenAI’s Pentagon contract, set against Anthropic’s dramatic fall, drew a fierce backlash from some users on social media, particularly X. Christopher Hale, a prominent American Democratic politician, declared, “I just canceled ChatGPT and bought Claude Pro Max.” In a scathing indictment of what he saw as OpenAI’s capitulation, he added, “One stands up for the God-given rights of the American people. The other folds to tyrants.” The sentiment resonated with many who viewed OpenAI’s move as a betrayal of its founding ethos.

Another crypto user put the disillusionment more sharply: “2019 OpenAI: we will never help build weapons or surveillance tools. 2026 OpenAI: department of War, hold my classified cloud instance. Integrity arc go brrrrrrr.” The comment captured a perceived ideological shift at OpenAI, which began as a non-profit dedicated to open-source AI for humanity’s benefit but has increasingly embraced commercial and government partnerships, drawing accusations that it has compromised its original principles. The closing line, “integrity arc go brrrrrrr,” mocked what the user saw as the rapid erosion of the company’s ethical stance in pursuit of strategic advantage and profit. Such reactions reflect a broader public anxiety about the militarization of AI and the ethical responsibilities of the companies building these technologies.

The implications of these developments are far-reaching, extending beyond the immediate fortunes of OpenAI and Anthropic. This episode is a stark illustration of the intense “AI arms race” currently underway, with global powers vying for technological supremacy in a domain increasingly critical to national security. The U.S. government’s decisive action against Anthropic, and its immediate embrace of OpenAI, sends a clear signal about the preferred characteristics of its AI partners: a willingness to integrate deeply with military objectives, albeit with carefully negotiated safeguards.

This situation also sets an important precedent for how American technology firms will negotiate with government agencies. Anthropic’s warning about a chilling effect on tech-government partnerships rings true: companies will now have to weigh their ethical commitments against the substantial financial and strategic benefits of defense contracts. The “dual-use” dilemma of AI, its potential for both immense societal benefit and devastating military application, has never been more apparent. This shift will likely intensify calls for clearer, more robust AI regulation, especially for military applications and the oversight of autonomous weapons systems.

Moreover, the competitive landscape of the AI industry has been fundamentally altered. OpenAI has gained a significant advantage in government contracting, potentially positioning it as the primary AI provider for federal agencies. Anthropic, despite its strong technology and ethical stance, faces a daunting challenge in recovering its standing with the U.S. government. This dynamic will likely shape the strategies of other major AI players such as Google, Microsoft (a major investor in OpenAI), and Amazon as they navigate the interplay of innovation, ethics, and national security in an increasingly scrutinized sector. The Department of Defense clearly intends to integrate advanced AI deeply into its operations, and OpenAI, for now, appears to be its chosen vanguard, walking a precarious ethical tightrope between powerful capabilities and professed safety.