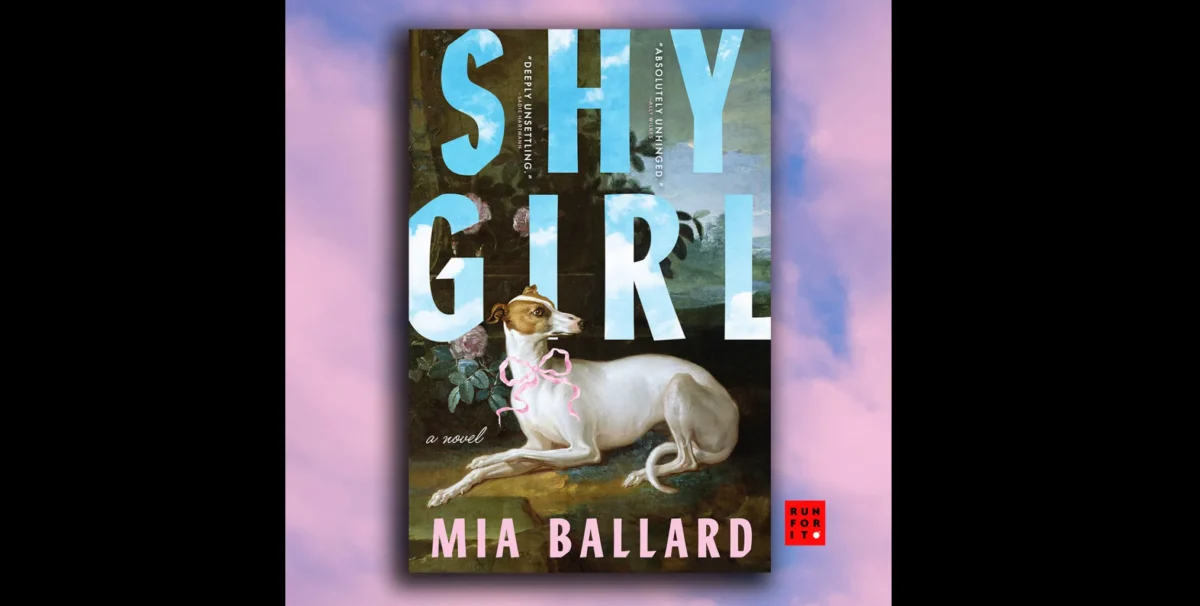

A prominent publisher is pulling a horror novel from sale and canceling its US release after the author faced widespread accusations of using artificial intelligence to generate significant portions of the book. Hachette Book Group, one of the largest publishing houses in the United States, announced that it will stop selling "Shy Girl" by Mia Ballard in the UK, where the book was released the previous fall and sold approximately 1,800 print copies, according to reported sales figures. The planned US edition, slated for release this spring through Hachette’s Orbit imprint, has been canceled.

A Hachette spokeswoman said, "Hachette remains committed to protecting original creative expression and storytelling," and added that the publisher requires all submissions to be the authors’ original work and requires authors to disclose any use of AI tools in the writing process. That requirement suggests Hachette treats the alleged AI use not only as an affront to creative principles but also as a violation of contractual terms. A handful of other publishers, by contrast, have released books whose marketing materials or author statements openly acknowledged or even celebrated experimentation with AI, which shows how varied the industry’s response still is.
The spokeswoman also told the Wall Street Journal that the company’s US and UK imprints had conducted a "lengthy investigation in recent weeks" before deciding to halt publication.

Ballard denies using AI herself to write "Shy Girl." She says an editor she hired independently to review the manuscript, while the book was still self-published, was responsible for introducing AI-generated content. In a statement to The New York Times, she described the personal toll of the controversy: "This controversy has changed my life in many ways and my mental health is at an all time low and my name is ruined for something I didn’t even personally do." She said she could not give more specific details about how the book was edited with AI because of legal action she is pursuing. Her account shifts responsibility to a third party and raises questions about accountability and about how self-published authors vet the professional services they hire.

The cancellation of "Shy Girl" illustrates the growing anti-AI sentiment across creative fields and is one of the earliest and most high-profile cases of a major publisher withdrawing a book deal over accusations of AI use. The novel gained considerable traction as a self-published hit last year, finding an enthusiastic readership on platforms like TikTok, where books often go viral through community recommendations. That early praise gave way to suspicion as readers and literary professionals began noticing unusual stylistic patterns in the prose. The turning point came in January, when a viral Reddit post from a user identifying as a book editor flagged the novel’s narrative and linguistic characteristics as hallmarks of a large language model. The post ignited discussion in the horror literature community, and some participants went further, accusing Ballard of using AI to write her responses in subsequent written interviews.

The accusations gained further support from a YouTube video essay that has since drawn over 1.2 million views. In the nearly three-hour video, titled "i’m pretty sure this book is ai slop," a reviewer dissected the novel and presented textual examples of repetitive phrasing, generic descriptions, awkward sentence structures, and an overall lack of distinct authorial voice, all characteristics commonly attributed to generative AI. Separately, Max Spero, the founder and CEO of the AI detection tool Pangram, ran an independent analysis and reported that 78 percent of the book’s content was AI-generated. The reliability of AI detection software remains a subject of debate, but a figure that high from a specialized tool adds weight to the claims and moves the discussion beyond stylistic critique.

This incident, in which both a major publisher and a significant share of readers appear to agree that the book contains AI-generated content, raises difficult questions about how the publishing industry will handle AI’s rapid and often undisclosed spread into the literary world. The self-publishing sphere, which major publishers increasingly scour for social-media-ready "diamonds in the rough," is already known to be rife with low-effort, AI-generated "dreck," from formulaic romance novels to bland non-fiction. Given how popular and accessible AI tools are, a growing number of authors will almost certainly incorporate AI into their writing, whether intentionally or inadvertently. The dilemma for publishers is whether responding this dramatically each time evidence of AI use surfaces is sustainable, or even desirable, as a long-term strategy. Will AI become entirely off-limits for authors seeking major book deals, or will the industry instead encourage authors to be upfront about their use of tools like ChatGPT? Years into the generative AI boom, the literary world, like many other creative sectors, is only beginning to grapple with these ethical, creative, and commercial problems, and it is working out the future of authorship and publishing one controversial case at a time.