OpenAI, once lauded as a pioneer in artificial intelligence and a champion of democratizing advanced AI, is facing a public relations crisis and a significant user exodus after CEO Sam Altman announced a new partnership with the United States Department of Defense. The deal, unveiled just last week, has ignited a firestorm of criticism across the AI community, prompting droves of previously loyal users to vow to leave the ChatGPT platform. The repercussions are not merely anecdotal: new data reported by TechCrunch, drawing on figures from market intelligence provider Sensor Tower, shows how widespread the discontent is. On Saturday alone, uninstalls of the ChatGPT mobile application jumped 295 percent compared to the preceding day. By comparison, the app’s typical day-over-day change in uninstalls has averaged just nine percent over the past month, which underscores the severity of the backlash.

The core of the controversy lies in the nature of the partnership: integrating OpenAI’s cutting-edge AI technologies with the military apparatus of the United States. While the specifics of the deal remain somewhat shrouded, the perception among a significant portion of the user base is that OpenAI has crossed an ethical line, moving from a mission of "AI for all" to becoming a component of the "war machine." For many early adopters and enthusiasts, the promise of AI was one of innovation for societal good, education, and advancement, not for military applications that could potentially lead to autonomous weapons systems or enhanced surveillance capabilities. The ethical considerations surrounding AI’s dual-use nature – its potential for both immense benefit and profound harm – have long been debated, and this deal has brought those abstract discussions into sharp, immediate focus, challenging the very trust users had placed in OpenAI’s stated commitment to responsible AI development.

In the wake of this shift in sentiment, a significant number of disaffected users appear to be migrating to Claude, the rival AI chatbot from Anthropic. In contrast to OpenAI, Anthropic has publicly maintained a principled stance on military engagement. The company had previously refused to enter into a direct deal with what the original report characterizes as a "deeply unpopular administration" that would have granted the military unrestricted access to its AI technology. More importantly, Anthropic explicitly demanded that its AI not be deployed in autonomous weapons systems or for the mass surveillance of US citizens, setting ethical boundaries that resonated strongly with the privacy-conscious and ethically minded segments of the AI community. This public commitment positioned Anthropic as a standard-bearer for responsible AI and a counter-narrative to OpenAI’s perceived capitulation.

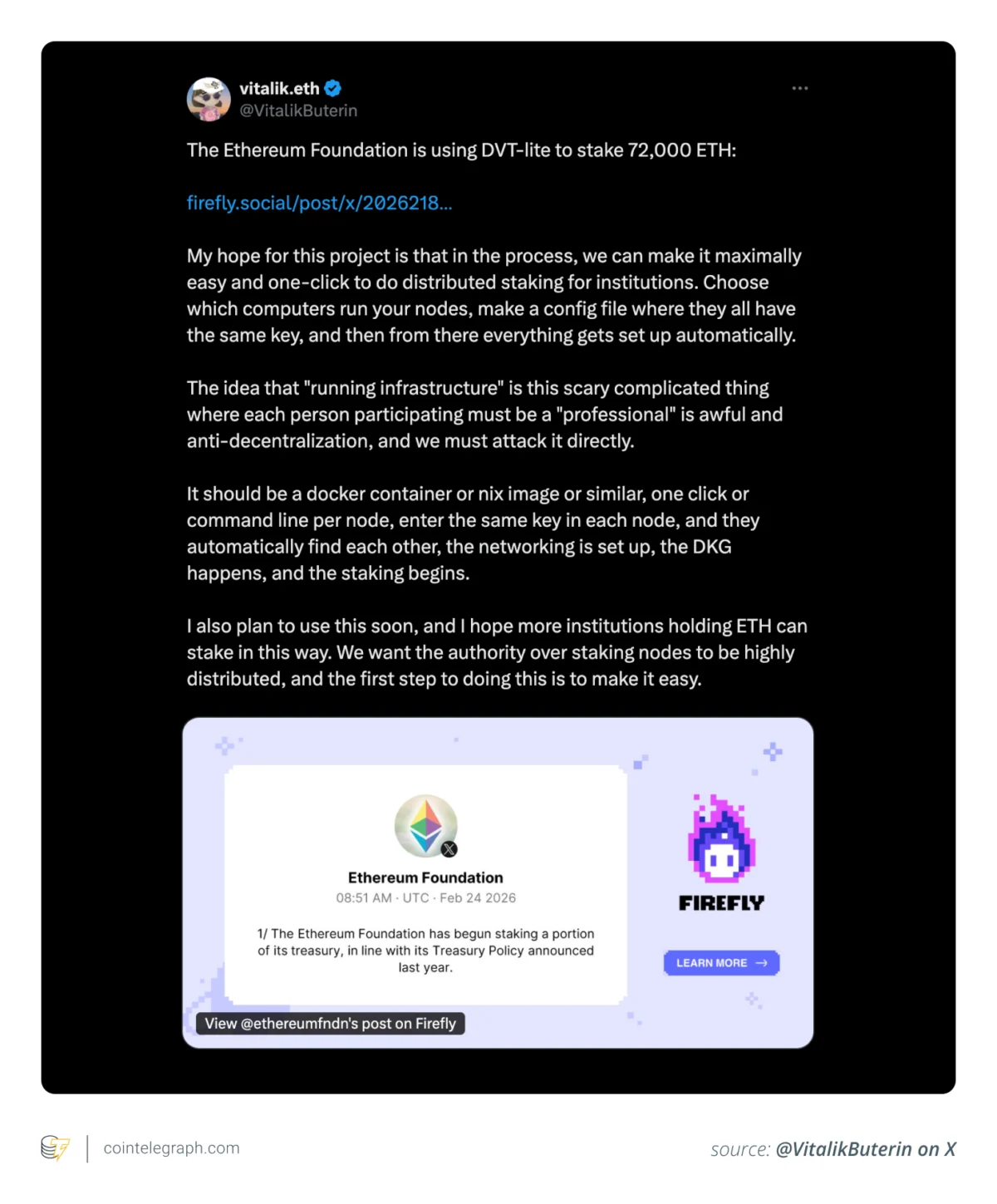

However, the narrative surrounding Anthropic is not without its own complexities and contradictions. Reports, including one from the Wall Street Journal, suggest a more nuanced reality: the US military reportedly used Claude to assist in target selection for deadly missile strikes in Iran. According to those reports, the strikes killed Iran’s leader, Ali Khamenei, along with hundreds of civilians, and came just hours after a "Trump ban" referenced in the original report. This introduces a critical ethical dilemma, potentially exposing a gap between Anthropic’s public posture and the actual deployment of its technology. Whether it constitutes a direct breach of the company’s stated principles, a deployment through indirect channels, or a post-facto application, it complicates the perception of Anthropic as an unblemished alternative. Nevertheless, the immediate public reaction largely favored Anthropic’s perceived ethical stand and drove a surge in its user base. On Friday, the day after Anthropic’s public vow regarding its non-engagement with the Pentagon, installs of Claude’s app rose 37 percent day over day, followed by a further 51 percent increase on Saturday, suggesting that many users were seeking an AI provider they saw as ethically aligned.

The impact on OpenAI’s growth metrics extends beyond increased uninstalls. The Pentagon deal also appears to have deterred potential new users. ChatGPT’s download growth fell 14 percent day over day on Saturday, with a further five percent decline recorded the following day. This contrasts sharply with the period immediately preceding the controversy, when ChatGPT’s downloads had been growing robustly, up by 13 percent. This dual blow, with existing users abandoning the platform and new users shying away, presents a significant challenge to OpenAI’s market dominance and growth trajectory. A few days of data cannot settle the question, but if the trend holds, the numbers would point to more than a temporary dip: a shift in user perception and loyalty that could have long-term implications for the company’s standing in the fiercely competitive AI landscape.

The outrage reverberating through AI circles is palpable and inescapable. On Reddit, the r/ChatGPT subreddit, a prominent community hub for users, saw one of its most upvoted posts of all time appear just days ago. The post directly challenged users to "show proof" of cancelling their ChatGPT subscriptions, declaring unequivocally, "You are training a war machine." This sentiment reflects a deep moral objection to the militarization of AI, tapping into fears about autonomous weaponry and the ethical responsibilities of tech giants. Furthermore, the community quickly mobilized to provide practical solutions for those abandoning OpenAI. Explainers and guides on "how to transfer 3 years of ChatGPT memory/context over to Claude" proliferated across various forums, demonstrating a collective effort to facilitate migration and maintain personal data continuity for users switching platforms.

In an attempt to mitigate the escalating damage, OpenAI CEO Sam Altman held an "Ask Me Anything" (AMA) session on X (formerly Twitter). However, far from assuaging concerns, the AMA reportedly intensified the backlash. Altman was barraged by a torrent of questions from outraged users, many of whom expressed deep disappointment and distrust. His responses, often perceived as evasive or insufficiently convincing, failed to address the core ethical concerns surrounding the military partnership. Users questioned the company’s commitment to its founding principles, the transparency of the deal, and the potential for misuse of their AI. The episode highlighted the difficulty of reconciling corporate decisions with the values of a passionate, ethically conscious user base, especially when those decisions touch upon profound societal implications like warfare and surveillance.

The collective action of users and the strategic positioning of Anthropic culminated in a significant market shift over the weekend. For the first time in the app’s history, Claude rose to the top of the US App Store, dethroning ChatGPT, which dropped to second place. Data cited by TechCrunch indicates that, for this period, US downloads of Claude surpassed those of ChatGPT, marking a victory for the challenger that is both symbolic and tangible. The episode underscores the power of public opinion and ethical considerations in shaping the competitive dynamics of the rapidly evolving AI industry.

The long-term ramifications for OpenAI and the broader AI race remain uncertain, yet the immediate impact is undeniable. Public opinion has demonstrably swung against OpenAI, challenging its reputation as a leader in ethical AI development. This incident could force OpenAI to re-evaluate its public engagement strategies, its ethical guidelines, and its approach to military partnerships. For the wider AI industry, this backlash serves as a potent reminder of the importance of transparency, ethical considerations, and user trust. It highlights a growing demand from consumers for AI companies to align their corporate decisions with broader societal values, especially concerning sensitive applications like defense. Whether this marks a temporary blip or a fundamental reordering of the AI hierarchy, it is clear that the ethical dimension of AI development has become a critical battleground, capable of reshaping market leadership and public perception in profound ways.