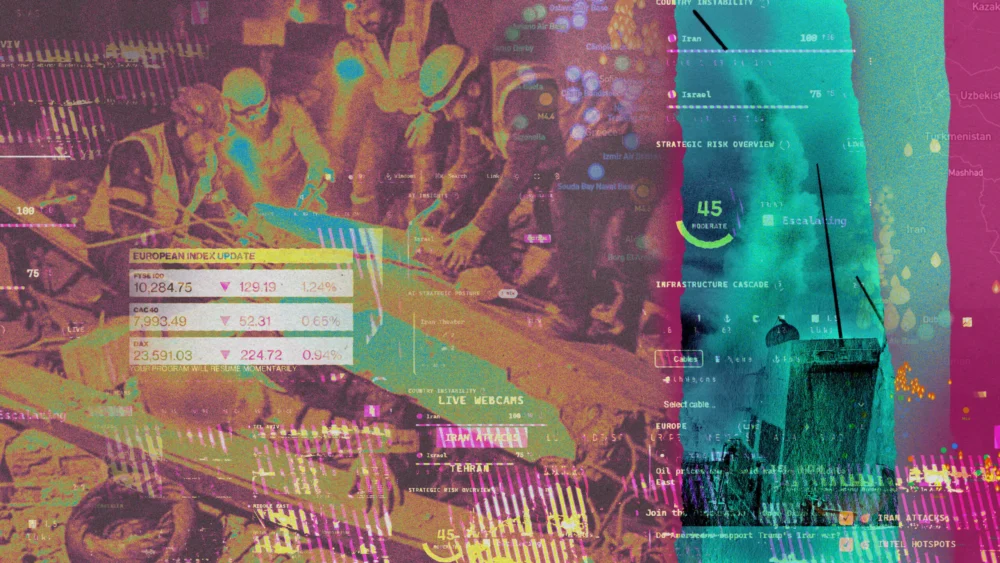

At the heart of this shift is the proliferation of online intelligence dashboards. One example, developed by individuals associated with the venture capital firm Andreessen Horowitz, illustrates the trend. This dashboard, launched in the wake of US-Israel strikes against Iran, aggregates open-source intelligence, including satellite imagery and ship tracking data. It’s not merely a passive display; it integrates a chat function for commentary, real-time news feeds, and, crucially, links to prediction markets. These markets allow individuals to place wagers on a wide array of future events, from the successor to Iran’s Supreme Leader to the potential for nuclear detonations. The recent selection of Mojtaba Khamenei as Iran’s new Supreme Leader, for instance, left some bettors on platforms like Kalshi with a payout, illustrating the tangible, albeit often speculative, financial dimension now intertwined with geopolitical events.

The speed and ease with which these dashboards are being constructed are remarkable. I have reviewed over a dozen similar platforms in the past week alone, many of which were reportedly "vibe-coded" (assembled quickly using AI tools) within a couple of days. One such dashboard even garnered the attention of a founder of Palantir, a company integral to the US military’s deployment of AI models like Claude during active conflicts. While some of these dashboards predated the current Iran crisis, nearly all are being marketed as a superior alternative to traditional media, promising unfiltered truth and immediate insights. The sentiment is echoed by a LinkedIn commenter who stated they "learned more in 30 seconds watching this map than reading or watching any major news network," referring to a visualization of Iran’s airspace shutdown preceding the strikes.

While significant attention has rightly focused on the potential military applications of AI, such as the role of models like Claude in informing US strike decisions, these intelligence dashboards highlight a more pervasive and, at times, detrimental impact of AI in wartime: the mediation of information. This mediation often occurs at the expense of accuracy and depth, contributing to a distorted understanding of events.

Several converging factors are fueling this trend. The democratization of coding through AI tools has lowered the barrier to entry for assembling open-source intelligence. Concurrently, AI-powered chatbots offer swift, though not always reliable, analyses of this data. The pervasive rise of synthetic and manipulated content has amplified a public demand for raw, verifiable information, a void that these dashboards aim to fill, often mimicking the perceived exclusivity of intelligence agency data. Furthermore, the allure of prediction markets, with their promise of financial rewards for informed speculation, drives engagement. The US military’s use of Anthropic’s Claude, despite its "supply chain risk" designation, has also inadvertently signaled to observers that AI is the cutting-edge intelligence tool of choice for professionals. Together, these elements are coalescing into an AI-enabled wartime spectacle that can obscure as much as it illuminates.

As a journalist, I acknowledge the inherent promise within these intelligence tools. The ability to visualize real-time data on shipping routes or power outages in a consolidated format is undeniably powerful. However, the act of consuming this information while simultaneously placing bets transforms a grave conflict into a form of perverse entertainment. The underlying assumption that raw data feeds, easily assembled by AI, are inherently more informative than traditional analyses warrants critical examination.

Craig Silverman, a digital investigations expert, meticulously tracks these dashboards, having logged over twenty. He articulates a significant concern: "The concern is there’s an illusion of being on top of things and being in control, where all you’re really doing is just pulling in a ton of signals and not necessarily understanding what you’re seeing, or being able to pull out true insights from it." This illusion of comprehensive understanding, divorced from genuine analytical rigor, is a key byproduct of AI’s data aggregation capabilities.

A primary challenge lies in the quality and curation of the information presented. Many dashboards feature "intel feeds" that rely on AI-generated summaries of complex, dynamic events. These summaries can inadvertently introduce inaccuracies. The data itself is often presented without significant curation, leading to a juxtaposition of disparate information, such as a map of Iranian strike locations displayed alongside the fluctuating prices of obscure cryptocurrencies.

In stark contrast, intelligence agencies augment their data feeds with human expertise and historical context. They also possess access to proprietary information that remains beyond the reach of the open web. The implicit promise emanating from those who create and disseminate these information pipelines is that AI serves as a democratizing force, leveling the playing field by making previously exclusive information accessible to all. The logic suggests that the "secret feed" of intelligence, once the sole purview of elites, can now be leveraged by anyone, whether for personal enlightenment or for speculative gains on events like nuclear strikes. However, the sheer volume of information that AI excels at assembling does not automatically equate to accuracy or the contextual depth required for genuine comprehension. While intelligence agencies possess the internal capacity for such analysis, and credible journalism endeavors to provide it for the broader public, AI-driven aggregation alone falls short.

The intricate connection between these dashboards and betting markets cannot be overstated. The Andreessen Horowitz dashboard, for instance, prominently features a scrolling list of bets placed on Kalshi, a prediction platform in which the firm itself has invested. Other dashboards link to platforms like Polymarket, offering bets on a range of speculative outcomes, such as the likelihood of US strikes in Iraq or the timeline for Iran’s internet restoration.

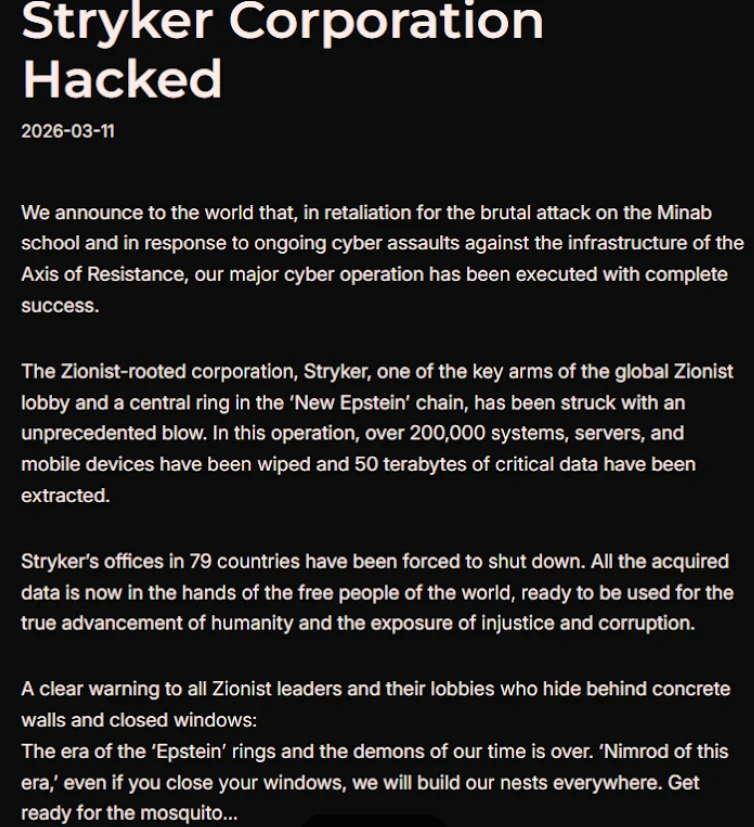

Compounding these issues is AI’s long-standing role in facilitating the creation and dissemination of fake content. The Iran conflict is a stark illustration of this problem, with the Financial Times recently uncovering a proliferation of AI-generated satellite imagery circulating online. "The emergence of manipulated or outright fake satellite imagery is really concerning," Silverman says. The inherent trust placed in photographic evidence, particularly satellite imagery, makes the spread of such fakes a potent tool for eroding public confidence in verifiable information about the war.

The ultimate consequence is an overwhelming deluge of AI-enabled content: a chaotic amalgam of dashboards, speculative betting markets, and imagery that is both real and fabricated. Rather than clarifying the complexities of the Iran conflict, this AI-driven information environment makes it harder to comprehend. The conflict, once a matter of geopolitical analysis and journalistic reporting, has morphed into a gamified experience, where the line between information and entertainment has become dangerously blurred, and the pursuit of truth is increasingly obscured by the theater of data.