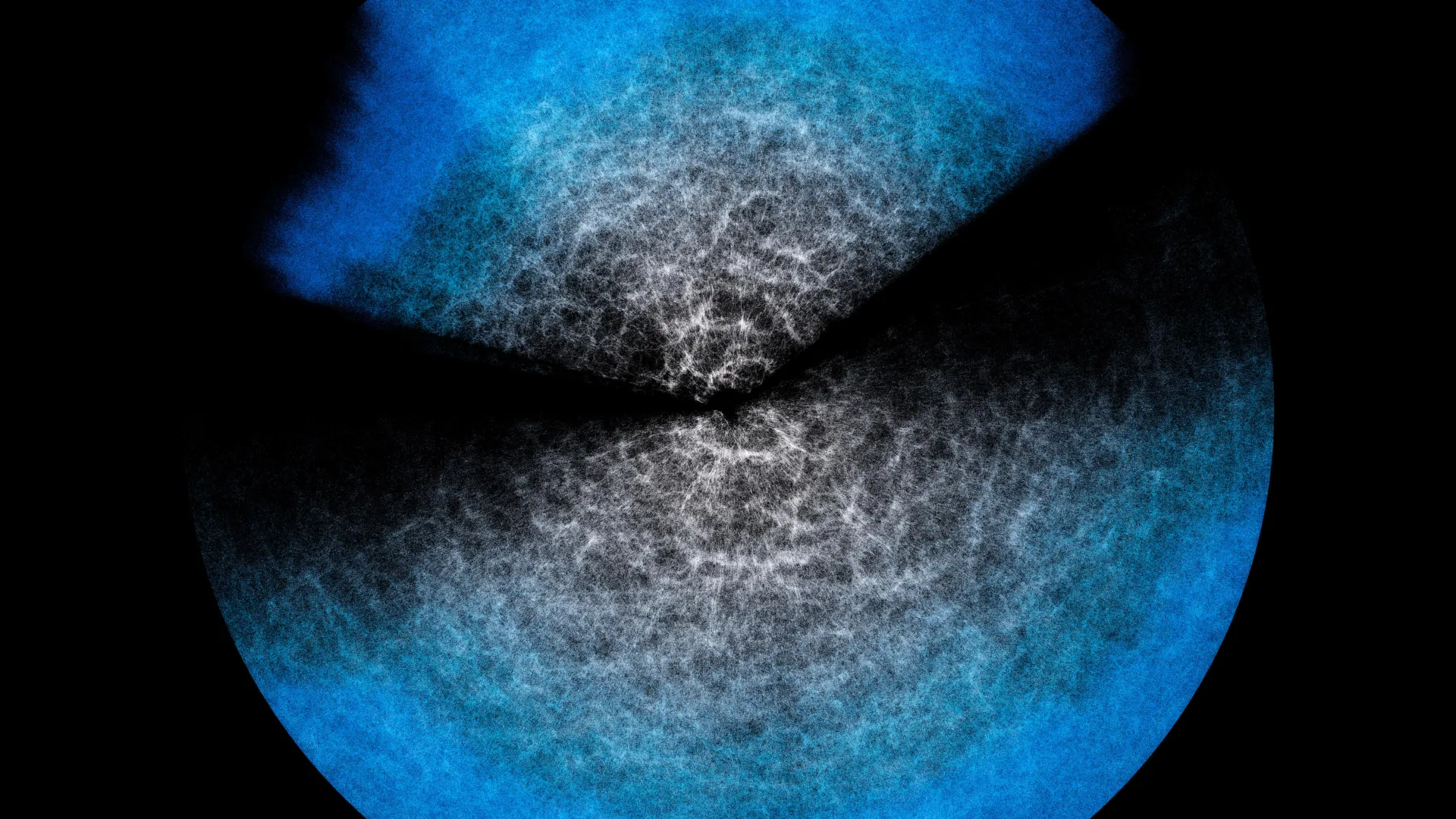

Understanding this cosmic tapestry is far from straightforward. Astronomers and cosmologists combine the fundamental laws of physics with a deluge of data collected by advanced astronomical instruments. This fusion of theory and observation underpins theoretical models such as the Effective Field Theory of Large-Scale Structure (EFTofLSS). Fed with observational data, these models offer a statistical description of the "cosmic web," enabling researchers to estimate its key parameters and gain insight into its formation and growth. However, models like EFTofLSS are computationally expensive, requiring significant processing power and long analysis times.

The rapid growth in astronomical datasets creates a pressing need for more efficient analytical methods. Unless the computational load can be lightened without sacrificing precision, analyzing ever-larger amounts of data becomes a bottleneck that hinders scientific progress. This is where emulators come into play. These tools are designed to "imitate" the behavior of complex physical models and return results far faster. They act as shortcuts: they learn the relationship between input parameters and model outputs, so the full computation does not have to be rerun for every new scenario.

The crucial question about emulators is whether accuracy is lost. Can these "shortcuts" reproduce the fidelity of the full model, or do they introduce approximations that compromise the scientific findings? An international team from INAF (Italy), the University of Parma (Italy), and the University of Waterloo (Canada) has recently published a study in the Journal of Cosmology and Astroparticle Physics (JCAP) that tests Effort.jl, an emulator the team designed. The findings are encouraging: Effort.jl delivers essentially the same accuracy as the model it imitates. In some instances, it even recovers finer details that have to be trimmed from an analysis with the original model because of computational constraints. Just as striking is the drop in computational resources required: Effort.jl can run these analyses in a few minutes on a standard laptop, a task that previously called for a supercomputer.

Marco Bonici, a researcher at the University of Waterloo and the lead author of the study, illustrates the idea behind effective field theories with an analogy. "Imagine wanting to study the contents of a glass of water at the level of its microscopic components, the individual atoms, or even smaller," Bonici explains. "In theory, you can. But if we wanted to describe in detail what happens when the water moves, the explosive growth of the required calculations makes it practically impossible. However, you can encode certain properties at the microscopic level and see their effect at the macroscopic level, namely the movement of the fluid in the glass. This is what an effective field theory does, that is, a model like EFTofLSS, where the water in my example is the Universe on very large scales and the microscopic components are small-scale physical processes." The analogy shows how the large-scale structure of the universe can be described through emergent properties that arise from underlying physical processes, without simulating every constituent particle.

Theoretical models such as EFTofLSS statistically explain the observed structure of the universe. Astronomical observations are fed into the code, which then computes a prediction for the cosmic web. As noted above, this process is time-consuming and computationally demanding. Given the volume of data already available from ongoing astronomical surveys, and the even larger volumes expected from projects such as DESI, which has already begun releasing its initial data, and the Euclid mission, it is not practical to run these exhaustive calculations for every new dataset or every slight variation in cosmological parameters. This is where emulators become indispensable.

"This is why we now turn to emulators like ours, which can drastically cut time and resources," Bonici continues. An emulator mimics the behavior of the underlying physical model. At its core is a neural network, a type of machine-learning algorithm, trained to reproduce the relationship between input parameters (such as cosmological densities or expansion rates) and the model’s pre-computed predictions. The network learns from a large set of outputs generated by the full model. Once trained, it can generalize to predict the model’s response for new combinations of parameters, including ones it did not see during training. Crucially, the emulator has no intrinsic understanding of the physics; it simply becomes very good at anticipating the theoretical model’s output from the patterns it has learned.
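
The train-then-predict idea described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not Effort.jl's code or API: `full_model` is a hypothetical stand-in for an expensive theory code, and the network is a tiny one-hidden-layer model trained with plain gradient descent on pre-computed outputs.

```python
import math
import random


def full_model(x):
    """Hypothetical stand-in for an expensive theory code (not EFTofLSS)."""
    return x * x + 0.5 * math.sin(2.0 * x)


def init_network(hidden, seed=0):
    """Create a one-hidden-layer network with small random weights."""
    rng = random.Random(seed)
    return {
        "w1": [rng.uniform(-1, 1) for _ in range(hidden)],
        "b1": [rng.uniform(-1, 1) for _ in range(hidden)],
        "w2": [rng.uniform(-1, 1) for _ in range(hidden)],
        "b2": 0.0,
    }


def predict(net, x):
    """Emulator prediction: tanh hidden layer followed by a linear output."""
    hidden = [math.tanh(w * x + b) for w, b in zip(net["w1"], net["b1"])]
    return sum(w * h for w, h in zip(net["w2"], hidden)) + net["b2"]


def mean_squared_error(net, xs, ys):
    return sum((predict(net, x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def train(net, xs, ys, epochs=2000, lr=0.02):
    """Full-batch gradient descent on the mean squared error."""
    n = len(xs)
    size = len(net["w1"])
    for _ in range(epochs):
        g_w1 = [0.0] * size
        g_b1 = [0.0] * size
        g_w2 = [0.0] * size
        g_b2 = 0.0
        for x, y in zip(xs, ys):
            hidden = [math.tanh(w * x + b) for w, b in zip(net["w1"], net["b1"])]
            out = sum(w * h for w, h in zip(net["w2"], hidden)) + net["b2"]
            d_out = 2.0 * (out - y) / n  # derivative of the loss w.r.t. the output
            g_b2 += d_out
            for j in range(size):
                g_w2[j] += d_out * hidden[j]
                d_pre = d_out * net["w2"][j] * (1.0 - hidden[j] ** 2)
                g_w1[j] += d_pre * x
                g_b1[j] += d_pre
        for j in range(size):
            net["w1"][j] -= lr * g_w1[j]
            net["b1"][j] -= lr * g_b1[j]
            net["w2"][j] -= lr * g_w2[j]
        net["b2"] -= lr * g_b2
    return net


if __name__ == "__main__":
    # Pre-compute the "expensive" outputs once, then train the emulator on them.
    train_x = [i / 20 for i in range(21)]
    train_y = [full_model(x) for x in train_x]
    net = init_network(hidden=8)
    print("loss before training:", mean_squared_error(net, train_x, train_y))
    train(net, train_x, train_y)
    print("loss after training: ", mean_squared_error(net, train_x, train_y))
    # Query a parameter value that was not in the training set.
    print("emulator:", predict(net, 0.33), "full model:", full_model(0.33))
```

Once training is done, each call to `predict` costs a handful of arithmetic operations, however slow the original model was; that is the source of the speed-up, and also why the emulator's accuracy has to be checked separately.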

Effort.jl’s innovation is a further optimization of the training process. It builds in existing knowledge of how the model’s predictions change when the input parameters are slightly altered. Instead of forcing the neural network to "re-learn" these relationships from scratch, Effort.jl uses these known sensitivities from the outset, which significantly reduces the amount of training data required and therefore the computational cost. Effort.jl also uses gradients, which quantify "how much and in which direction" the model’s predictions shift when a parameter is slightly tweaked. This gradient information further helps the emulator learn from far fewer examples. These efficiency gains are what allow Effort.jl to run on small, accessible machines such as standard laptops rather than on supercomputers.
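
To make "how much and in which direction" concrete, here is a minimal Python sketch of a gradient. It is an illustration only: `toy_prediction` and its two parameters are hypothetical and have nothing to do with Effort.jl's actual outputs. The exact gradient is written out by hand and then checked against a finite-difference estimate, which literally tweaks each parameter slightly and measures the shift.

```python
import math


def toy_prediction(a, b):
    """Hypothetical smooth prediction depending on two parameters."""
    return a ** 0.55 * math.exp(-b)


def analytic_gradient(a, b):
    """Exact partial derivatives of toy_prediction with respect to a and b."""
    value = toy_prediction(a, b)
    return (0.55 * value / a, -value)


def finite_difference_gradient(f, params, step=1e-6):
    """Estimate each partial derivative by nudging one parameter at a time."""
    grads = []
    for i in range(len(params)):
        up = list(params)
        down = list(params)
        up[i] += step
        down[i] -= step
        grads.append((f(*up) - f(*down)) / (2.0 * step))
    return tuple(grads)


if __name__ == "__main__":
    point = (0.3, 0.7)
    print("analytic:         ", analytic_gradient(*point))
    print("finite difference:", finite_difference_gradient(toy_prediction, point))
```

The sign of each component gives the direction of the shift (here the prediction rises with `a` and falls with `b`), and its magnitude gives the size. A differentiable emulator can supply such derivatives directly instead of estimating them from repeated evaluations.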

Any tool that offers such a large computational advantage needs rigorous validation. The fundamental question is: if an emulator doesn’t "understand" the underlying physics, how can we be sure that its shortcut gives the right answers, that is, results indistinguishable from those of the full, computationally intensive model? The study addresses this concern directly. It shows that Effort.jl’s results, tested on both simulated cosmological data and real astronomical observations, are in close agreement with the predictions of the EFTofLSS model.
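
The kind of check involved can be sketched as follows. This is a simplified illustration, not the validation procedure used in the study: `full_model` is a hypothetical stand-in for the expensive code, and a plain interpolation table takes the place of the trained neural network. The point is only the comparison step, which evaluates both on inputs the emulator was not built from and reports the worst relative disagreement.

```python
import math


def full_model(x):
    """Hypothetical stand-in for the expensive reference model."""
    return x * x + 0.5 * math.sin(2.0 * x)


def build_table_emulator(model, lo, hi, nodes):
    """Build a linear-interpolation emulator (a stand-in for a trained network)."""
    step = (hi - lo) / (nodes - 1)
    grid = [lo + i * step for i in range(nodes)]
    values = [model(x) for x in grid]  # the only calls to the expensive model

    def emulator(x):
        i = min(int((x - lo) / step), nodes - 2)
        t = (x - grid[i]) / step
        return (1.0 - t) * values[i] + t * values[i + 1]

    return emulator


def max_relative_error(model, emulator, points):
    """Worst-case relative disagreement between emulator and reference model."""
    return max(abs(emulator(x) - model(x)) / abs(model(x)) for x in points)


if __name__ == "__main__":
    # Held-out points that fall between the nodes the emulator was built from.
    held_out = [0.1 + 0.9 * (i + 0.5) / 200 for i in range(200)]
    for nodes in (10, 91):
        emulator = build_table_emulator(full_model, 0.1, 1.0, nodes)
        print(nodes, "nodes:", max_relative_error(full_model, emulator, held_out))
```

Evaluating on held-out inputs matters: agreement on the points used to build the emulator says little about how well it generalizes to new ones.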

"And in some cases," Bonici concludes, "where with the model you have to trim part of the analysis to speed things up, with Effort.jl we were able to include those missing pieces as well." In other words, in certain scenarios the emulator not only matches the accuracy of the original model but also allows a more complete analysis, including elements that would otherwise be sacrificed for speed. Effort.jl therefore emerges not just as a computational accelerator but as a valuable ally for cosmologists. It is particularly well suited to the massive upcoming data releases from experiments like DESI and Euclid, which are expected to greatly expand our knowledge of the universe’s large-scale structure.

The study, "Effort.jl: a fast and differentiable emulator for the Effective Field Theory of the Large Scale Structure of the Universe," by Marco Bonici, Guido D’Amico, Julien Bel, and Carmelita Carbone, is published in the Journal of Cosmology and Astroparticle Physics (JCAP).