Researchers led by Keiya Hirashima at the RIKEN Center for Interdisciplinary Theoretical and Mathematical Sciences (iTHEMS) in Japan, working with colleagues from The University of Tokyo and the Universitat de Barcelona in Spain, have created the first Milky Way simulation that tracks more than 100 billion individual stars over 10,000 years of evolution. They achieved this by combining artificial intelligence (AI) with numerical simulation. The resulting model contains 100 times more stars than the most advanced previous simulations and was produced more than 100 times faster.

The work, presented at the international supercomputing conference SC ’25, marks a significant advance for astrophysics, high-performance computing, and AI-assisted modeling. The team's strategy could also be applied to large-scale Earth system studies, including climate and weather research.

The Enduring Challenge of Simulating Every Star

For decades, astrophysicists have wanted Milky Way simulations detailed enough to follow each individual star. Such models would let researchers test theories of galactic evolution, structure, and star formation directly against observational data. Simulating a galaxy accurately, however, is extremely difficult. It requires calculating gravity, fluid dynamics, the production and spread of chemical elements, and supernova explosions across enormous ranges of time and space, and the interplay of these processes makes the task very computationally demanding.

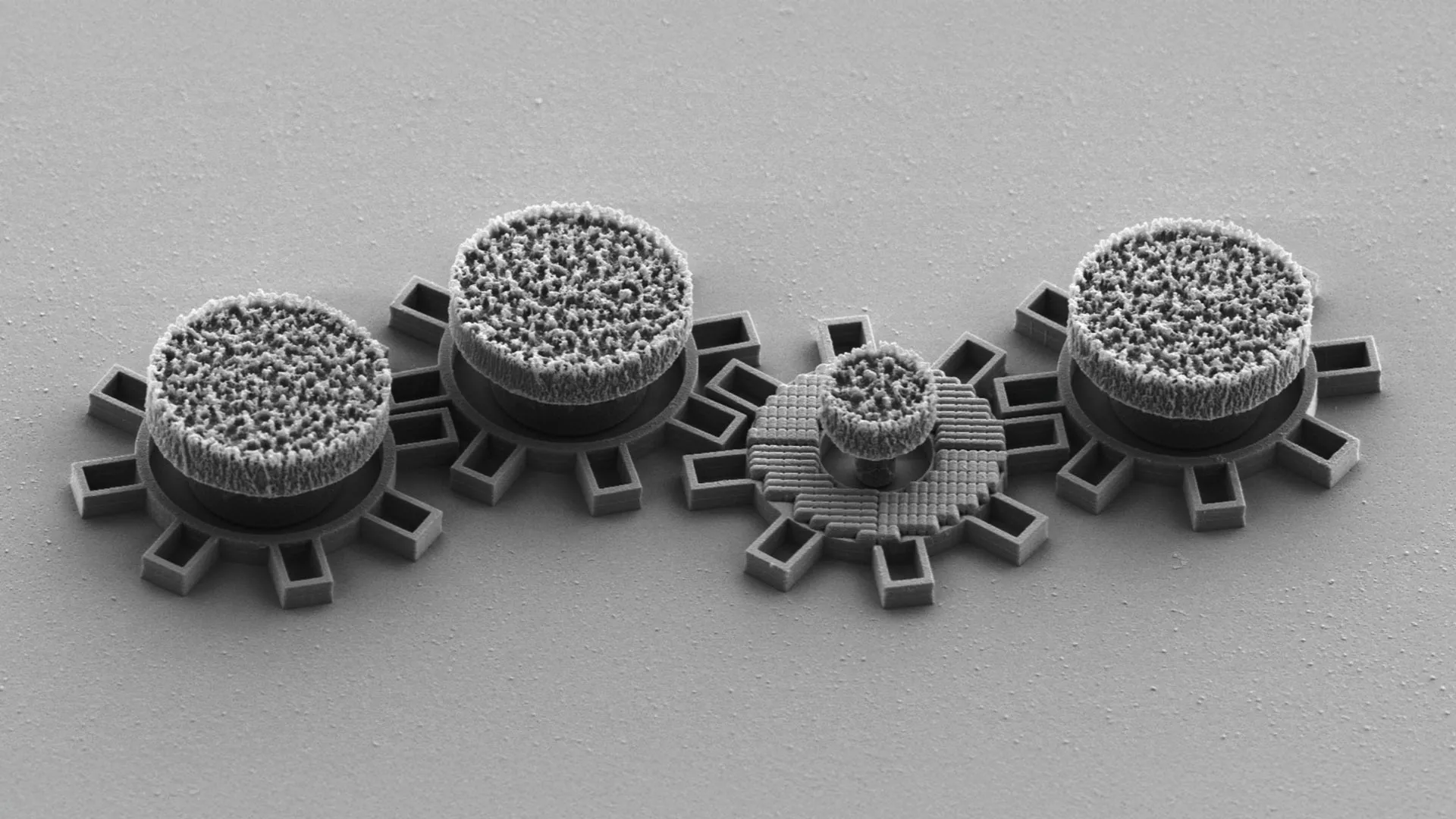

Until now, scientists have not been able to model a galaxy as large as the Milky Way while keeping enough detail to resolve individual stars. Current state-of-the-art simulations can represent systems with a total mass of about one billion suns, far below the more than 100 billion stars that make up our galaxy. Consequently, the smallest "particle" in these models typically represents a group of roughly 100 stars. This averages away the behavior of individual stars and limits accuracy at small scales. Much of the difficulty comes from the time step. To capture rapid events such as the evolution of a supernova, a simulation must advance in very small increments of time.
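For explicit hydrodynamics solvers, the textbook form of this constraint is the Courant-Friedrichs-Lewy (CFL) condition, shown here only for illustration; the article does not state the exact time-step criterion the team uses. Here $h$ is the local resolution length, $c_s$ the sound speed, $|v|$ the gas speed, $C < 1$ a safety factor, and $T$ the total simulated time:

$$
\Delta t \le C \, \frac{h}{c_s + |v|}, \qquad N_{\text{steps}} = \frac{T}{\Delta t}
$$

Gas heated and driven outward by a supernova has a far larger $c_s + |v|$ than quiescent gas, so $\Delta t$ near the explosion shrinks by the same factor, and finer resolution (smaller $h$) shrinks it further. Every factor lost in $\Delta t$ is a factor gained in $N_{\text{steps}}$, and therefore in computing time.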

Shrinking the time step improves accuracy but sharply increases the computational cost. Even with today's most sophisticated physics-based models, simulating the Milky Way star by star would take about 315 hours of computing time for every million years of galactic evolution. At that rate, simulating one billion years would require roughly 36 years of continuous computation. Simply adding more supercomputer cores does not solve the problem: energy consumption rises with the core count while computational efficiency declines, so this approach cannot be sustained for simulations this large.
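The conversion from the quoted rate to years of computing is straightforward, using $24 \times 365.25 = 8766$ hours per year:

$$
315\ \frac{\text{h}}{\text{Myr}} \times 1000\ \text{Myr} = 3.15 \times 10^{5}\ \text{h}
\approx \frac{3.15 \times 10^{5}\ \text{h}}{8766\ \text{h/yr}} \approx 35.9\ \text{yr}
$$

that is, about 36 years of continuous computation.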

A New Deep Learning Approach

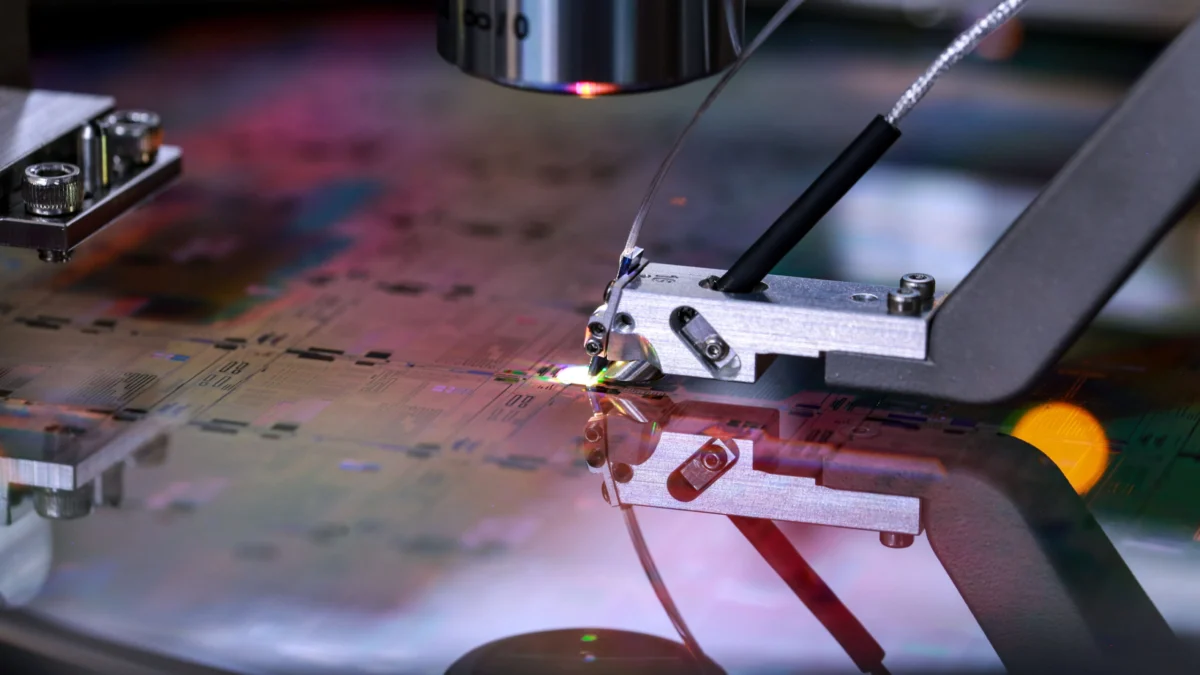

To overcome these barriers, Hirashima's team developed a method that combines a deep learning surrogate model with conventional physical simulation. The surrogate model was trained on high-resolution supernova simulations and learned to predict how gas spreads during the 100,000 years after a supernova explosion, without drawing on resources from the main simulation. This lets the researchers capture the galaxy's overall behavior while still modeling small-scale events, including the details of individual supernovae. To validate the approach, the team compared its results with large-scale simulations run on RIKEN's Fugaku supercomputer and The University of Tokyo's Miyabi Supercomputer System.
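The control flow of such a hybrid can be sketched as a toy loop. This is not the team's code: the names `run_hybrid`, `physics_step`, `new_supernovae`, and `surrogate`, the fixed global step, and the idea of holding each prediction aside until the main simulation catches up are assumptions made for illustration. Only the 100,000-year prediction horizon comes from the text.

```python
from typing import Callable, Dict, List, Tuple

# Horizon of the surrogate's prediction, taken from the text (years).
SURROGATE_HORIZON_YR = 100_000.0

# Toy stand-in for the gas around one supernova; a real code would hold particles.
Region = Dict[str, float]


def run_hybrid(
    t_end: float,
    dt: float,
    physics_step: Callable[[float], None],
    new_supernovae: Callable[[float], List[Region]],
    surrogate: Callable[[Region], Region],
) -> List[Tuple[float, Region]]:
    """Advance a toy simulation to t_end (years) with a fixed global step dt.

    Instead of resolving each explosion with tiny time steps, the gas region
    around a new supernova is passed to `surrogate` (hypothetical), which
    returns its predicted state SURROGATE_HORIZON_YR later. The prediction is
    held aside and released once the main simulation reaches that time. A real
    code would write it back into the galaxy's gas; here the released
    (release_time, predicted_region) pairs are collected and returned in order.
    """
    t = 0.0
    in_flight: List[Tuple[float, Region]] = []  # predictions not yet released
    released: List[Tuple[float, Region]] = []
    while t < t_end:
        physics_step(dt)  # conventional solver (gravity, hydro, ...) for one coarse step
        t += dt
        for region in new_supernovae(t):
            in_flight.append((t + SURROGATE_HORIZON_YR, surrogate(region)))
        # Release every prediction whose horizon has been reached.
        released.extend(item for item in in_flight if item[0] <= t)
        in_flight = [item for item in in_flight if item[0] > t]
    return released


# Toy usage: one supernova at t = 2,000 yr, global step of 2,000 yr.
steps_taken: List[float] = []
result = run_hybrid(
    t_end=200_000.0,
    dt=2_000.0,
    physics_step=steps_taken.append,
    new_supernovae=lambda t: [{"energy": 1.0}] if t == 2_000.0 else [],
    surrogate=lambda region: {**region, "shell_radius": 10.0},
)
print(len(steps_taken), result)
```

The point of the sketch is that the global step `dt` never has to shrink to follow the blast wave, which is the intuition behind the speed-up described in the text; how the real system schedules and places surrogate calls is not described in the article.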

The method delivers individual-star resolution for galaxies with more than 100 billion stars, and it does so far faster than before. Simulating one million years of galactic evolution took just 2.78 hours, compared with about 315 hours for a purely physics-based star-by-star model. At this rate, one billion years of galactic evolution can be simulated in about 115 days instead of roughly 36 years, which substantially expands what researchers can explore of the galaxy's history.
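These figures can be checked with a few lines of arithmetic. The sketch uses only the two rates quoted in the article; the constant names and the helper `wallclock_days` are illustrative.

```python
# Rates quoted in the text: hours of computing per million years simulated.
HOURS_PER_MYR_PHYSICS_ONLY = 315.0
HOURS_PER_MYR_HYBRID = 2.78


def wallclock_days(hours_per_myr: float, simulated_myr: float) -> float:
    """Days of continuous computing needed to simulate `simulated_myr` million years."""
    return hours_per_myr * simulated_myr / 24.0


hybrid_days = wallclock_days(HOURS_PER_MYR_HYBRID, 1_000.0)  # one billion years
speedup = HOURS_PER_MYR_PHYSICS_ONLY / HOURS_PER_MYR_HYBRID
print(f"hybrid: {hybrid_days:.1f} days per billion years, speed-up: {speedup:.0f}x")
# prints: hybrid: 115.8 days per billion years, speed-up: 113x
```

The ratio of about 113 is consistent with the "more than 100 times faster" claim in the introduction.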

Transformative Potential for Climate, Weather, and Oceanographic Modeling

The hybrid AI approach could matter well beyond astrophysics. Many areas of computational science face the same challenge of linking small-scale physical phenomena to large-scale behavior. Meteorology, oceanography, and climate modeling are all multi-scale, multi-physics fields that would benefit from tools able to speed up complex simulations substantially.

"I firmly believe that the integration of artificial intelligence with high-performance computing marks a fundamental and irreversible shift in how we approach and conquer multi-scale, multi-physics problems across the entirety of the computational sciences," says Hirashima. "This achievement not only demonstrates the power of AI-accelerated simulations but also underscores their capacity to transcend mere pattern recognition and evolve into genuine engines of scientific discovery. They are now actively helping us to unravel the intricate processes by which the very elements essential for the emergence of life itself were forged within the crucible of our galaxy."