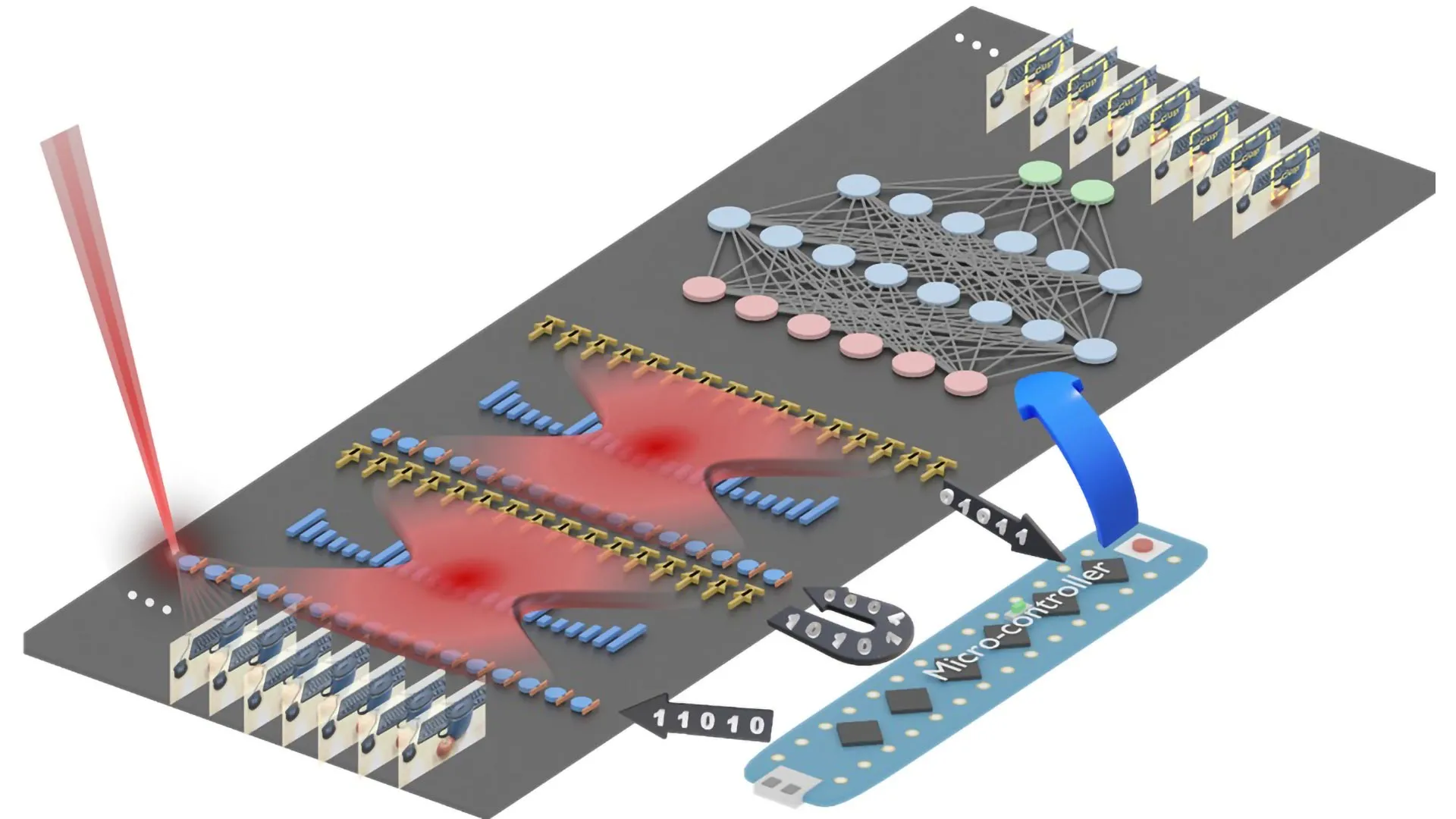

The ability to engage in internal dialogue, a hallmark of human consciousness often associated with introspection and self-awareness, may also help artificial intelligence learn. Humans use this "inner speech" to organize thoughts, evaluate decisions, and process emotions. New research from the Okinawa Institute of Science and Technology (OIST) shows that a similar self-directed mechanism can improve how AI systems learn and adapt. The study was published in the journal Neural Computation. It shows that AI models trained to use "inner speech" together with a short-term (working) memory system perform better across a range of tasks. The result is a step toward more adaptable machines.

The implications go beyond algorithmic optimization. The work suggests that how well an AI system learns depends not only on its architecture but also on how it interacts with itself during training. Dr. Jeffrey Queiñer, Staff Scientist at OIST’s Cognitive Neurorobotics Research Unit and lead author of the study, explains: "This study highlights the importance of self-interactions in how we learn. By structuring training data in a way that teaches our system to talk to itself, we show that learning is shaped not only by the architecture of our AI systems, but by the interaction dynamics embedded within our training procedures." This view treats training as an active process shaped by the system's own internal interactions, rather than as passive data ingestion. In that respect it loosely mirrors how humans learn during development.

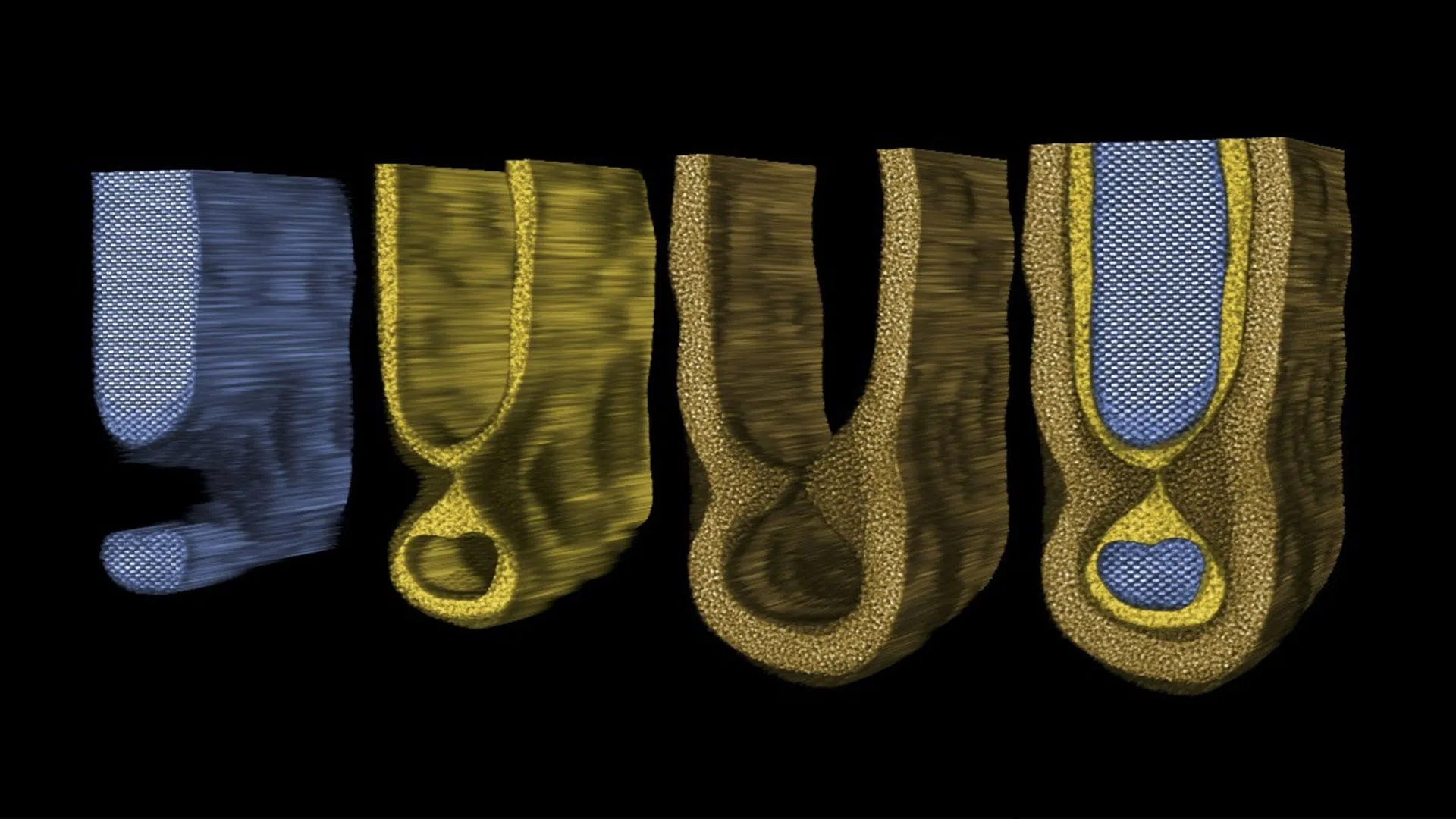

The core of the OIST team’s approach is to combine self-directed inner speech, conceptualized as quiet, simulated "mumbling," with a specialized working memory system. Models trained this way learned faster than conventional models that rely on memory alone. They also adapted better to novel and unforeseen situations, a persistent weakness of current AI, and handled multiple tasks at once more flexibly.

A central goal of the OIST team’s work is "content-agnostic information processing." This is the ability of an AI to go beyond its training data and apply learned skills and general rules to situations it never encountered during training. It is the core of generalization: the capacity to infer and adapt rather than simply memorize and repeat.

Dr. Queiñer explains the motivation behind this pursuit: "Rapid task switching and solving unfamiliar problems is something we humans do easily every day. But for AI, it’s much more challenging. That’s why we take an interdisciplinary approach, blending developmental neuroscience and psychology with machine learning and robotics amongst other fields, to find new ways to think about learning and inform the future of AI." The approach reflects the view that some of the hardest problems in AI can be informed by the mechanisms of human cognition and biology.

Working memory was a foundation of the research. The team examined how memory is designed within AI models and focused on working memory’s role in generalization. In both humans and AI, working memory is the capacity to hold and actively manipulate information over short periods. It is essential for tasks ranging from following spoken instructions to quick mental arithmetic. By testing tasks of varying complexity, the researchers compared how well different memory structures performed.

They found that models with multiple working memory slots performed better on harder problems. These slots are temporary storage units, each holding one discrete piece of information. The harder problems included reversing a sequence and reproducing a complex pattern. Such tasks require holding several items at once and manipulating them in a specific order, which a multi-slot working memory makes possible.
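
To make the slot idea concrete, here is a toy sketch. It assumes a memory made of a fixed number of discrete slots. It is not the OIST model, which is a neural network, and the names `SlotMemory` and `reverse_with_memory` are hypothetical. It shows why reversing a sequence needs as many slots as there are items: every item must be held until the last one arrives.

```python
from typing import Any, List, Optional, Sequence


class SlotMemory:
    """Hypothetical toy working memory: a fixed number of discrete slots."""

    def __init__(self, num_slots: int) -> None:
        if num_slots < 0:
            raise ValueError("num_slots must be non-negative")
        self.num_slots = num_slots
        self.slots: List[Any] = []

    def write(self, item: Any) -> bool:
        """Store an item in the next free slot; return False if every slot is taken."""
        if len(self.slots) >= self.num_slots:
            return False
        self.slots.append(item)
        return True

    def read_last_first(self) -> List[Any]:
        """Read the stored items back, most recent first."""
        return self.slots[::-1]


def reverse_with_memory(sequence: Sequence[Any], num_slots: int) -> Optional[List[Any]]:
    """Reverse `sequence` by buffering it in slot memory.

    Returns None when the sequence has more items than there are slots,
    because reversal needs every item held until the last one arrives.
    """
    memory = SlotMemory(num_slots)
    for item in sequence:
        if not memory.write(item):
            return None  # memory overflow: too few slots for this task
    return memory.read_last_first()


print(reverse_with_memory("ABC", num_slots=3))         # ['C', 'B', 'A']
print(reverse_with_memory([1, 2, 3, 4], num_slots=3))  # None
```

The procedure never inspects what the items are. It reverses letters, numbers, or any other tokens the same way, which is a small-scale version of the content-agnostic processing the team is aiming for.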

The largest gains came when the researchers added training targets that encouraged the system to engage in "self-talk" a predetermined number of times. This produced further performance improvements, especially in multitasking and in tasks involving many steps. One interpretation is that internally "rehearsing" or "thinking through" the steps, much like human inner speech, gives the model a meta-cognitive layer that helps with complex problem-solving.
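
The exact format of the study's self-talk targets is not described here. The following is a hypothetical sketch that assumes a token-sequence training setup. Under that assumption, a target that asks the model to talk to itself a fixed number of times could place that many rehearsal steps in front of the answer. The markers `<inner>` and `<answer>` and the function `build_self_talk_target` are invented for illustration and do not come from the OIST paper.

```python
from typing import List

INNER = "<inner>"    # hypothetical marker for one self-talk step
ANSWER = "<answer>"  # hypothetical marker for the start of the overt answer


def build_self_talk_target(instruction: List[str], answer: List[str], repetitions: int) -> List[str]:
    """Build a training target that 'mumbles' the instruction a fixed number of times before answering.

    Hypothetical format: each self-talk step is an INNER marker followed by a copy
    of the instruction; the answer follows a single ANSWER marker.
    """
    if repetitions < 0:
        raise ValueError("repetitions must be non-negative")
    target: List[str] = []
    for _ in range(repetitions):
        target.append(INNER)
        target.extend(instruction)  # rehearse the instruction "to itself"
    target.append(ANSWER)
    target.extend(answer)
    return target


target = build_self_talk_target(["reverse", "A", "B"], ["B", "A"], repetitions=2)
print(target)
# ['<inner>', 'reverse', 'A', 'B', '<inner>', 'reverse', 'A', 'B', '<answer>', 'B', 'A']
```

Changing `repetitions` changes how many self-talk steps appear before the answer. Under this assumption, a model trained to predict the whole target learns to produce the self-talk steps as well as the answer.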

Dr. Queiñer points to a practical advantage of the combined system: "Our combined system is particularly exciting because it can work with sparse data instead of the extensive data sets usually required to train such models for generalization. It provides a complementary, lightweight alternative." This matters because the cost and time of collecting and processing massive datasets have been a major bottleneck in developing AI that generalizes well. Their approach offers a more efficient and accessible path.

Looking ahead, the OIST team plans to move beyond controlled laboratory tests and apply their findings in more realistic, dynamic settings. "In the real world, we’re making decisions and solving problems in complex, noisy, dynamic environments. To better mirror human developmental learning, we need to account for these external factors," says Dr. Queiñer. This focus on real-world use reflects their aim to build AI that can work alongside people and assist them in everyday life.

This direction also serves the team’s broader goal: deepening our understanding of the neural basis of human learning. The researchers study phenomena such as inner speech and the mechanisms that govern them. In doing so, they aim to advance AI and also contribute insights into human biology and behavior. Dr. Queiñer concludes: "We can also apply this knowledge, for example in developing household or agricultural robots which can function in our complex, dynamic worlds." The work points toward AI systems that combine inner speech with robust working memory and can therefore operate with greater autonomy and adaptability. It suggests that progress in AI may come from building smarter algorithms and also from understanding and replicating the mechanisms of intelligent thought.