Talking to yourself, a habit often associated with introspection and the human ability to process complex thoughts, is no longer confined to biology. New research published in the journal Neural Computation by scientists at the Okinawa Institute of Science and Technology (OIST) shows that a form of internal dialogue can improve how artificial intelligence learns. Just as humans use self-talk to organize ideas, weigh choices, and make sense of emotions, AI systems can use a similar "inner speech" mechanism, paired with short-term memory, to learn more efficiently and adapt to new tasks. The study suggests that a machine’s architecture is only part of the learning equation; how the system interacts with itself during training also matters.

Dr. Jeffrey Queißer, a Staff Scientist at OIST’s Cognitive Neurorobotics Research Unit and the study’s first author, explains the implications of the findings. "This study highlights the importance of self-interactions in how we learn," he states. "By structuring training data in a way that teaches our system to talk to itself, we show that learning is shaped not only by the architecture of our AI systems, but by the interaction dynamics embedded within our training procedures." The result points toward more adaptable AI built by borrowing cognitive processes long considered exclusively human.

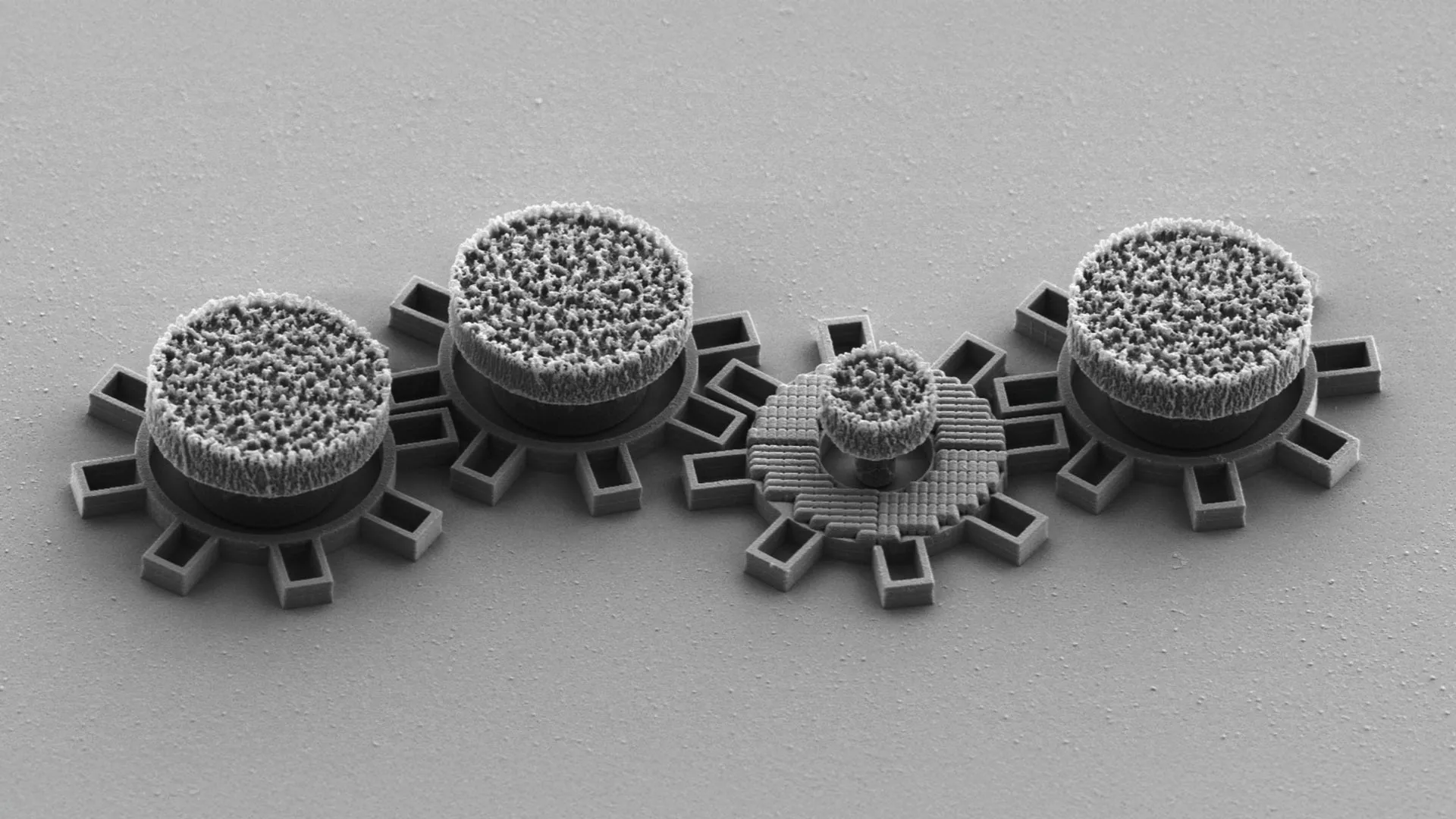

To test the idea, the research team built AI models that combined self-directed internal speech, described as a quiet, internal "mumbling," with a specialized working memory system. Models with this combination learned more efficiently, adapted better to novel situations, which matters for AI operating in dynamic, unpredictable environments, and handled multiple tasks at once more effectively. Compared with systems that relied solely on traditional memory functions, they showed clear, measurable improvements in flexibility and overall performance.

A core goal of the OIST team’s research is AI capable of "content-agnostic information processing": the ability to go beyond memorized examples and apply learned skills to new, unforeseen situations by identifying and using general underlying rules. This kind of generalization, as opposed to rote learning, is a long-standing aim of AI development.

Dr. Queißer emphasizes the ongoing challenge in this domain: "Rapid task switching and solving unfamiliar problems is something we humans do easily every day. But for AI, it’s much more challenging. That’s why we take an interdisciplinary approach, blending developmental neuroscience and psychology with machine learning and robotics amongst other fields, to find new ways to think about learning and inform the future of AI." This interdisciplinary approach lets the team draw on how the human mind works and translate those principles into advances in artificial intelligence.

Working memory was a central focus of the investigation. Working memory, the cognitive ability to temporarily hold and manipulate information, underlies tasks ranging from following simple instructions to performing complex mental calculations. The researchers examined several memory designs in their AI models, paying particular attention to how working memory influences generalization, the ability to apply knowledge to new contexts. Using tasks of varying difficulty, they systematically compared the performance of the different memory structures.

Their findings revealed a significant correlation between the number of working memory "slots" – essentially temporary storage units for pieces of information – and performance on challenging problems. Tasks such as reversing sequences or accurately recreating complex patterns, which necessitate holding and manipulating multiple pieces of information in precise order, were handled with greater proficiency by models equipped with more extensive working memory.
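To make the idea of memory "slots" concrete, here is a minimal Python sketch. It is a toy illustration, not the OIST model: the function names and the overwrite-the-oldest rule are assumptions chosen for simplicity. It shows why a sequence-reversal task becomes harder when the sequence is longer than the number of available slots.

```python
from collections import deque


def reverse_with_slots(sequence, num_slots):
    """Reverse a sequence using a buffer of at most `num_slots` items.

    Assumption for illustration: once every slot is full, the oldest
    item is overwritten and can no longer be recalled.
    """
    slots = deque(maxlen=num_slots)
    for item in sequence:
        slots.append(item)  # silently drops the oldest item when full
    return list(reversed(slots))


def reversal_accuracy(sequence, num_slots):
    """Fraction of the reversed sequence that is recalled correctly."""
    recalled = reverse_with_slots(sequence, num_slots)
    target = list(reversed(sequence))
    correct = sum(1 for r, t in zip(recalled, target) if r == t)
    return correct / len(target)


if __name__ == "__main__":
    seq = [1, 2, 3, 4, 5]
    for n in (1, 3, 5):
        print(n, "slots ->", reversal_accuracy(seq, n))
```

With five items to reverse, a five-slot buffer recalls everything, while smaller buffers lose the earliest items. The real models are trained neural networks, so their behavior is far less clear-cut than this toy suggests.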

The largest gains, however, came when the researchers added a specific training target: prompting the AI system to talk to itself a predetermined number of times. This seemingly simple addition improved performance further, particularly in multitasking and in tasks that required a complex, multi-step approach. The result suggests that "talking to itself" may act as a kind of meta-cognitive tool for the AI, helping it organize intermediate steps and strategies.
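One way to picture "structuring training data" so that a system talks to itself a set number of times is sketched below in Python. The article does not say how the self-talk target is encoded, so everything here is an assumption for illustration: the `<mumble>` placeholder token, the function names, and the choice to place the self-talk steps before the answer.

```python
MUMBLE = "<mumble>"  # hypothetical placeholder for one self-talk step


def build_target(answer_tokens, num_mumbles):
    """Prefix the answer with a fixed number of self-talk steps.

    Assumption: the model is trained to emit `num_mumbles` internal
    steps before it produces the task answer.
    """
    if num_mumbles < 0:
        raise ValueError("num_mumbles must be non-negative")
    return [MUMBLE] * num_mumbles + list(answer_tokens)


def extract_answer(output_tokens):
    """Drop self-talk steps so only the task answer is evaluated."""
    return [tok for tok in output_tokens if tok != MUMBLE]


if __name__ == "__main__":
    target = build_target(["c", "b", "a"], num_mumbles=2)
    print(target)
    print(extract_answer(target))
```

In this sketch the self-talk steps carry no content of their own; they simply give the model extra internal steps before it must answer, which is one plausible reading of the "mumbling" described in the study.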

Dr. Queißer highlights another advantage of the approach: "Our combined system is particularly exciting because it can work with sparse data instead of the extensive data sets usually required to train such models for generalization. It provides a complementary, lightweight alternative." This matters because assembling massive, high-quality datasets is often a bottleneck in AI development. Generalizing well from less data could make such capabilities more widely accessible and open up applications where data is scarce.

Looking ahead, the OIST research team wants to move from controlled laboratory tests to the messier, more realistic conditions of the real world. "In the real world, we’re making decisions and solving problems in complex, noisy, dynamic environments," Dr. Queißer observes. "To better mirror human developmental learning, we need to account for these external factors." This focus on real-world conditions is essential for building AI that can assist people in practical settings.

This research also supports the team’s broader goal of understanding how human learning works at the neural level. By studying phenomena such as inner speech and the mechanisms behind them, the researchers aim to advance AI while gaining fundamental insights into human biology and behavior. "We can also apply this knowledge, for example in developing household or agricultural robots which can function in our complex, dynamic worlds," Dr. Queißer concludes. The work points toward AI systems that, inspired by human cognition, "talk to themselves" and operate with greater autonomy and effectiveness in everyday environments.