Dr. Jeffrey Queißer, a Staff Scientist at OIST’s Cognitive Neurorobotics Research Unit and the lead author of the study, summarized the core finding: "This study highlights the importance of self-interactions in how we learn. By structuring training data in a way that teaches our system to talk to itself, we show that learning is shaped not only by the architecture of our AI systems, but by the interaction dynamics embedded within our training procedures." The approach shifts attention away from conventional AI training, which often focuses on optimizing the model architecture, and toward the properties that emerge from the AI’s internal learning processes.

The research team designed their experiments to test the effect of this internal dialogue. They combined a form of self-directed internal speech, described as a quiet internal "mumbling," with a working memory system. With this combination, their AI models learned more efficiently, adapted better to novel and previously unseen situations, and handled multiple tasks at once more effectively. Compared with control systems that relied solely on conventional memory mechanisms, the models showed substantial improvements in flexibility and overall performance. This suggests that internal reflection, even in a simulated form, helps the AI process and consolidate information more effectively.

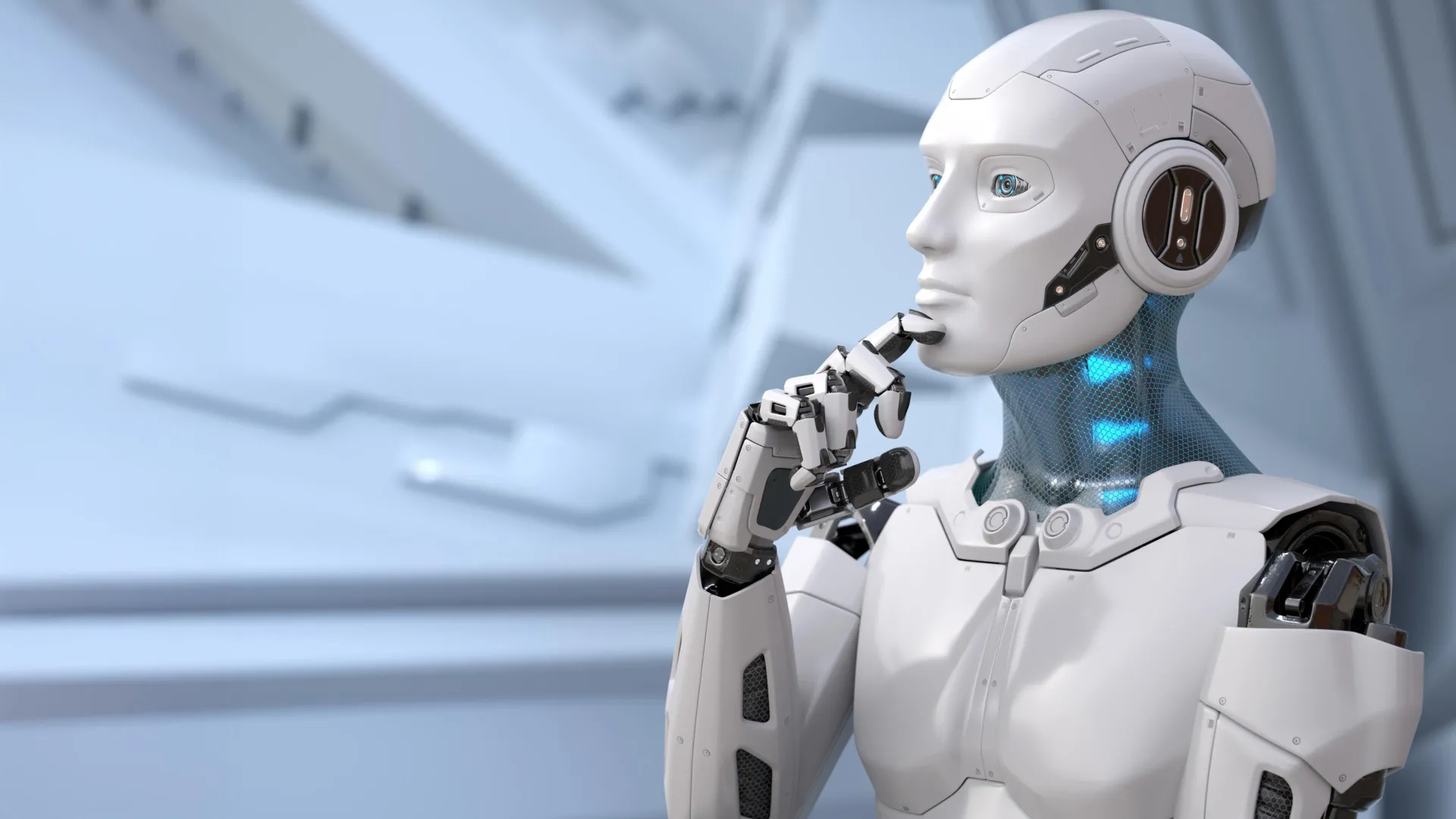

A central goal of the OIST team’s research is "content-agnostic information processing": the ability of an AI to go beyond memorized examples and apply learned skills and knowledge to entirely new contexts and problems. In essence, the aim is a form of general intelligence that adapts to new situations rather than merely recalling pre-programmed responses. Achieving this is a key hurdle on the path to artificial general intelligence (AGI), because current AI systems often struggle with tasks that deviate even slightly from their training data.

Dr. Queißer elaborated on this challenge: "Rapid task switching and solving unfamiliar problems is something we humans do easily every day. But for AI, it’s much more challenging. That’s why we take an interdisciplinary approach, blending developmental neuroscience and psychology with machine learning and robotics amongst other fields, to find new ways to think about learning and inform the future of AI." This multidisciplinary approach reflects the belief that understanding the biological and psychological basis of human learning can guide the design of more sophisticated and adaptable AI.

The investigation began with the design of memory systems within AI models, focusing on the role of working memory in achieving generalization. Working memory, a concept borrowed from cognitive psychology, is the brain’s temporary workspace for holding and manipulating information. It supports immediate cognitive tasks such as following multi-step instructions or performing rapid mental calculations. The researchers tested AI tasks of increasing difficulty, comparing the performance of models equipped with different working memory configurations.

Their findings showed that the benefit of a working memory structure depended on the complexity of the task. Specifically, AI models with multiple working memory "slots" (temporary buffers designed to hold discrete pieces of information) performed better on more demanding problems. Such tasks, for example reversing sequences of data or accurately recreating complex patterns, require holding several data points at once and manipulating them precisely. This suggests that the ability to hold and juggle information concurrently is a crucial precursor to more advanced cognitive functions.
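To make the idea of memory "slots" concrete, here is a minimal toy sketch in Python. It is not the OIST team’s model: the `SlotMemory` class, its eviction rule, and the `reverse_with_memory` helper are assumptions made purely for illustration. It shows only why a sequence-reversal task needs enough slots to hold every item at once.

```python
from collections import deque
from typing import List, Sequence


class SlotMemory:
    """Toy working memory with a fixed number of slots (hypothetical)."""

    def __init__(self, num_slots: int) -> None:
        # Assumption: when all slots are full, the oldest item is evicted.
        self.slots: deque = deque(maxlen=num_slots)

    def write(self, item: str) -> None:
        self.slots.append(item)

    def read_reversed(self) -> List[str]:
        # Read the stored items back from newest to oldest.
        return list(reversed(self.slots))


def reverse_with_memory(sequence: Sequence[str], num_slots: int) -> List[str]:
    """Attempt to reverse a sequence using only the slot memory."""
    memory = SlotMemory(num_slots)
    for item in sequence:
        memory.write(item)
    return memory.read_reversed()


sequence = ["A", "B", "C", "D"]
full = reverse_with_memory(sequence, num_slots=4)
limited = reverse_with_memory(sequence, num_slots=2)
print(full)     # ['D', 'C', 'B', 'A']
print(limited)  # ['D', 'C']
```

With four slots the full sequence is reversed; with two, the earliest items are lost before they can be read back, so the task cannot be completed.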

The breakthrough came when the researchers added a specific training target: encouraging the AI system to "talk to itself" a designated number of times. This addition led to even larger performance gains. The largest improvements appeared in multitasking scenarios and in tasks that required a long sequence of operations. This indicates that the internal dialogue mechanism, when combined with a capable working memory, strongly supports learning, particularly in complex, multi-step problem solving.
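One way to picture a training target that asks for a fixed number of self-talk steps is sketched below. The article does not describe the actual data format, so everything here is an assumption for illustration: the `<mumble>` placeholder token, the `build_target` and `make_reversal_example` helpers, and the choice to place the self-talk steps before the answer.

```python
from typing import Dict, List, Sequence

MUMBLE = "<mumble>"  # hypothetical placeholder for one self-talk step


def build_target(answer: Sequence[str], num_self_talk_steps: int) -> List[str]:
    """Prepend a designated number of self-talk steps to the answer."""
    if num_self_talk_steps < 0:
        raise ValueError("num_self_talk_steps must be non-negative")
    return [MUMBLE] * num_self_talk_steps + list(answer)


def make_reversal_example(
    sequence: Sequence[str], num_self_talk_steps: int
) -> Dict[str, List[str]]:
    """Build one input/target pair for a toy sequence-reversal task."""
    answer = list(reversed(sequence))
    return {
        "input": list(sequence),
        "target": build_target(answer, num_self_talk_steps),
    }


example = make_reversal_example(["A", "B", "C"], num_self_talk_steps=2)
print(example["target"])  # ['<mumble>', '<mumble>', 'C', 'B', 'A']
```

In this sketch the number of self-talk steps is a property of the training data rather than of the model architecture, which matches the article’s point that the effect comes from how the training procedure is structured.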

Dr. Queißer expressed particular enthusiasm for the system’s efficiency with data: "Our combined system is particularly exciting because it can work with sparse data instead of the extensive data sets usually required to train such models for generalization. It provides a complementary, lightweight alternative." This is a critical advantage in AI development, as the collection and annotation of massive datasets are often a significant bottleneck. The ability to achieve robust generalization with less data makes this approach more practical and scalable.

Looking ahead, the OIST team is eager to transition from the controlled environment of laboratory tests to more realistic, dynamic settings. "In the real world, we’re making decisions and solving problems in complex, noisy, dynamic environments. To better mirror human developmental learning, we need to account for these external factors," Dr. Queißer stated. This forward-looking perspective acknowledges the limitations of current AI and emphasizes the need for systems that can operate effectively in the unpredictable and often messy conditions of the real world.

This ongoing research is closely tied to the team’s overarching mission: to unravel the neural mechanisms underlying human learning. By studying phenomena like inner speech and the processes that support it, the researchers aim to gain fundamental new insights into human biology and behavior. This knowledge also has practical applications. As Dr. Queißer concluded, "We can also apply this knowledge, for example in developing household or agricultural robots which can function in our complex, dynamic worlds." The ultimate goal is AI that is not only intelligent but also intuitive, adaptable, and able to integrate smoothly into human society. Teaching AI to "talk to itself" may bring machines closer to learning and adapting in ways that resemble our own.