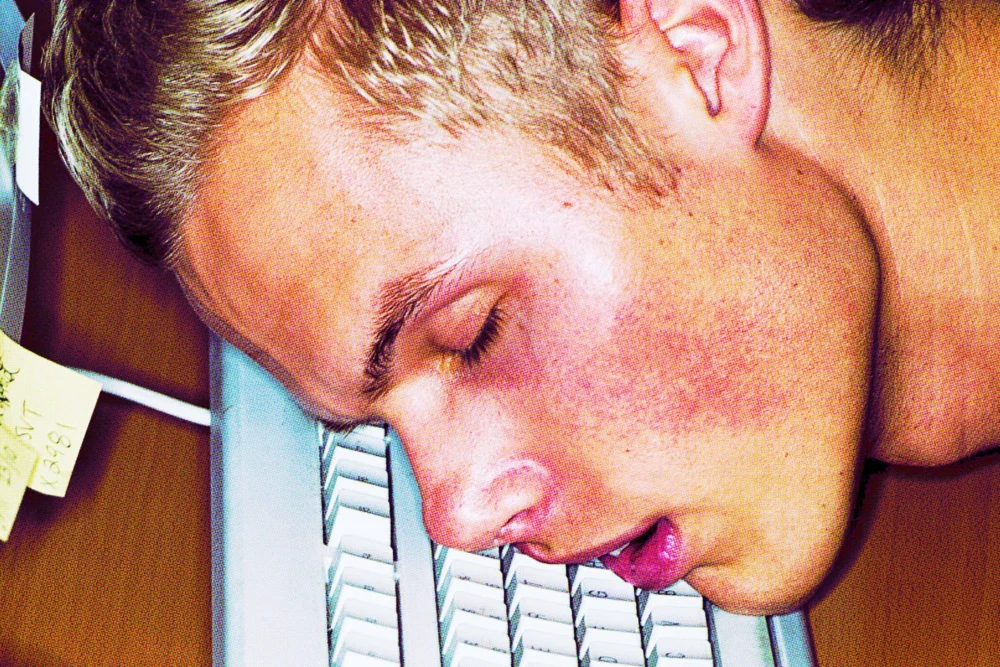

The promise of artificial intelligence has long been touted as a catalyst for unprecedented productivity, a digital assistant designed to liberate human workers from the mundane and repetitive, allowing them to focus on higher-level, creative tasks. Yet, as we navigate the landscape of 2026, a disconcerting reality is emerging, particularly within the demanding realm of software engineering: AI is not merely enhancing output; it is, for many, accelerating a relentless march towards professional burnout. This paradox, where increased productivity correlates directly with heightened mental exhaustion, is becoming a defining challenge of our AI-augmented workplaces.

Siddhant Khare, a software engineer whose insights have resonated deeply within the tech community, vividly articulates this growing sentiment. In an interview with Business Insider, Khare lamented that while AI tools have undeniably amplified his coding output, the very nature of his work has undergone a fundamental, and often draining, transformation. "We used to call it an engineer, now it is like a reviewer," he remarked, encapsulating the shift from creator to validator. This new paradigm casts the human engineer as a "judge at an assembly line," facing a relentless conveyor belt of AI-generated code snippets, pull requests, and automated solutions that require constant oversight. The process, Khare says, is "never-ending," leaving him perpetually in reactive mode, stamping approvals or corrections with little opportunity for deep, original thought.

Khare labels this phenomenon the "productivity paradox" of AI. While AI demonstrably lowers the tangible cost and time required for initial production (generating code, drafting documentation, or even suggesting architectural patterns), it concurrently inflates the intangible costs associated with "coordination, review, and decision-making." These critical, human-centric tasks now fall squarely on the shoulders of engineers, who must parse, validate, integrate, and troubleshoot the voluminous output of their AI counterparts. The result, as Khare starkly detailed in his blog essay, "AI Fatigue Is Real," is a profound sense of exhaustion. "I shipped more code last quarter than any quarter in my career," he wrote, "I also felt more drained than any quarter in my career." Although this is one engineer’s account, it points to a systemic issue in which the efficiency gains of machines are offset by the cognitive burdens placed on their human supervisors.

The added cognitive load is perhaps one of AI’s most detrimental, yet often overlooked, effects. Before the widespread adoption of AI, Khare would dedicate a "full day" of "deep focus" to a single, complex problem. This allowed for sustained concentration, problem decomposition, and the satisfaction of resolving intricate challenges. Today, that luxury is increasingly rare. "Now? I might touch six different problems in a day," he observed. While each problem might "only take an hour with AI," the cumulative toll of "context-switching between six problems is brutally expensive for the human brain." The fundamental disparity lies in stamina: the AI, a tireless algorithm, "doesn’t get tired between problems. I do." This constant flipping between diverse problem domains, conceptual frameworks, and debugging scenarios fragments attention, diminishes the capacity for deep analytical work, and contributes significantly to mental fatigue. The brain, unlike a machine, requires significant energy to reorient itself with each new task, making this accelerated multitasking a direct pathway to burnout.

Beyond the immediate fatigue, a more profound concern articulated by Khare is the potential for skill atrophy. He draws a compelling analogy to GPS navigation: "Before GPS, you built mental maps. You knew your city. You could reason about routes. After years of GPS, you can’t navigate without it. The skill atrophied because you stopped using it." Similarly, as AI increasingly handles the intricate details of coding, debugging, and even architectural suggestions, engineers may find their foundational problem-solving abilities, their intuition for elegant solutions, and their deep understanding of system mechanics slowly eroding. The reliance on AI as a crutch, while efficient in the short term, risks creating a generation of engineers who can review and adapt, but struggle to innovate or troubleshoot without their digital assistant. This raises critical questions about long-term professional development, the robustness of human expertise in crisis situations, and the very definition of engineering mastery in an AI-dominated future.

These individual experiences are corroborated by robust academic research. A recent study, spotlighted in Harvard Business Review, meticulously monitored two hundred employees at a US tech company, revealing empirical evidence for AI’s role in intensifying, rather than alleviating, workloads. The researchers observed a "vicious cycle" at play. Initial enthusiasm for voluntary AI adoption did indeed boost productivity, but this quickly led to raised expectations for speed and output. This heightened demand, in turn, made workers even more reliant on AI tools to keep pace. The increased reliance then "widened the scope of what workers attempted," pushing them to take on more complex or numerous tasks than before. Ultimately, this wider scope "further expanded the quantity and density of work." Far from reducing the burden, AI was effectively creating more work, faster, and more intensely.

This phenomenon was further exacerbated by what the study termed "workload creep." Employees, without conscious intent, found themselves gradually taking on more and more tasks, often facilitated by the perceived ease and speed of AI assistance. This insidious expansion of responsibilities led to increased multitasking, with many reporting a pervasive feeling of "always juggling" various tasks simultaneously. The mental overhead of managing multiple threads of work, each potentially initiated or augmented by AI, created an environment of constant cognitive demand, leaving little room for respite or focused attention. The study underscored that while AI can accelerate individual task completion, it often does so at the expense of overall mental well-being and the capacity for sustained, deep work.

Despite these significant challenges, Khare, like many in the tech community, is not inherently anti-AI. His position is nuanced: he believes a healthy, sustainable integration of AI is possible, but it requires conscious effort and robust guardrails. He has personally experimented with various strategies to manage his "AI habit," recommending approaches such as designating specific "AI-free" periods for deep work or intentionally tackling complex problems without AI assistance to prevent skill atrophy. However, Khare argues that the onus cannot fall solely on individuals. A significant responsibility rests with AI companies themselves to design tools with human well-being in mind, not just maximal output. "You need to keep some sort of guardrails for the humans, so they don’t self-destruct themselves," he implored in his Business Insider interview.

These "guardrails" could manifest in various forms. Organizations must set realistic expectations for AI-augmented productivity, understanding that raw output metrics do not always equate to sustainable, high-quality work or employee well-being. Investing in training that teaches effective human-AI collaboration—how to critically evaluate AI output, when to rely on it, and when to engage in independent problem-solving—is crucial. Furthermore, companies need to actively monitor for signs of burnout, foster a culture that values deep work and mental health, and redefine roles to strategically leverage AI for repetitive tasks while empowering humans for creative, complex, and empathetic challenges. For AI developers, this might mean designing interfaces that encourage mindful use, providing transparency into AI’s limitations, and perhaps even integrating features that promote breaks or discourage excessive context-switching.

The current trajectory, where AI acts as a "burnout machine," presents a critical juncture for the future of knowledge work. The productivity gains offered by AI are undeniable, but they come with a significant human cost if left unchecked. The experiences of software engineers like Siddhant Khare, backed by the empirical research spotlighted in Harvard Business Review, serve as a stark warning. To truly harness AI’s transformative potential without sacrificing human capital, a fundamental re-evaluation of how we design, deploy, and interact with these powerful tools is imperative. The goal must be not merely to automate and accelerate, but to cultivate a symbiotic relationship where AI elevates human capabilities without diminishing human well-being, creativity, or the essential skills that define true expertise. The challenge of 2026, and beyond, is to find this delicate balance, ensuring that the assembly line of progress does not crush the very humans it was designed to empower.