Tensor operations underpin many of today’s AI systems, including image processing and language understanding. As data volumes keep growing, conventional digital hardware such as graphics processing units (GPUs) is running into limits in speed, energy consumption, and scalability.

Researchers Demonstrate Single-Shot Tensor Computing With Light

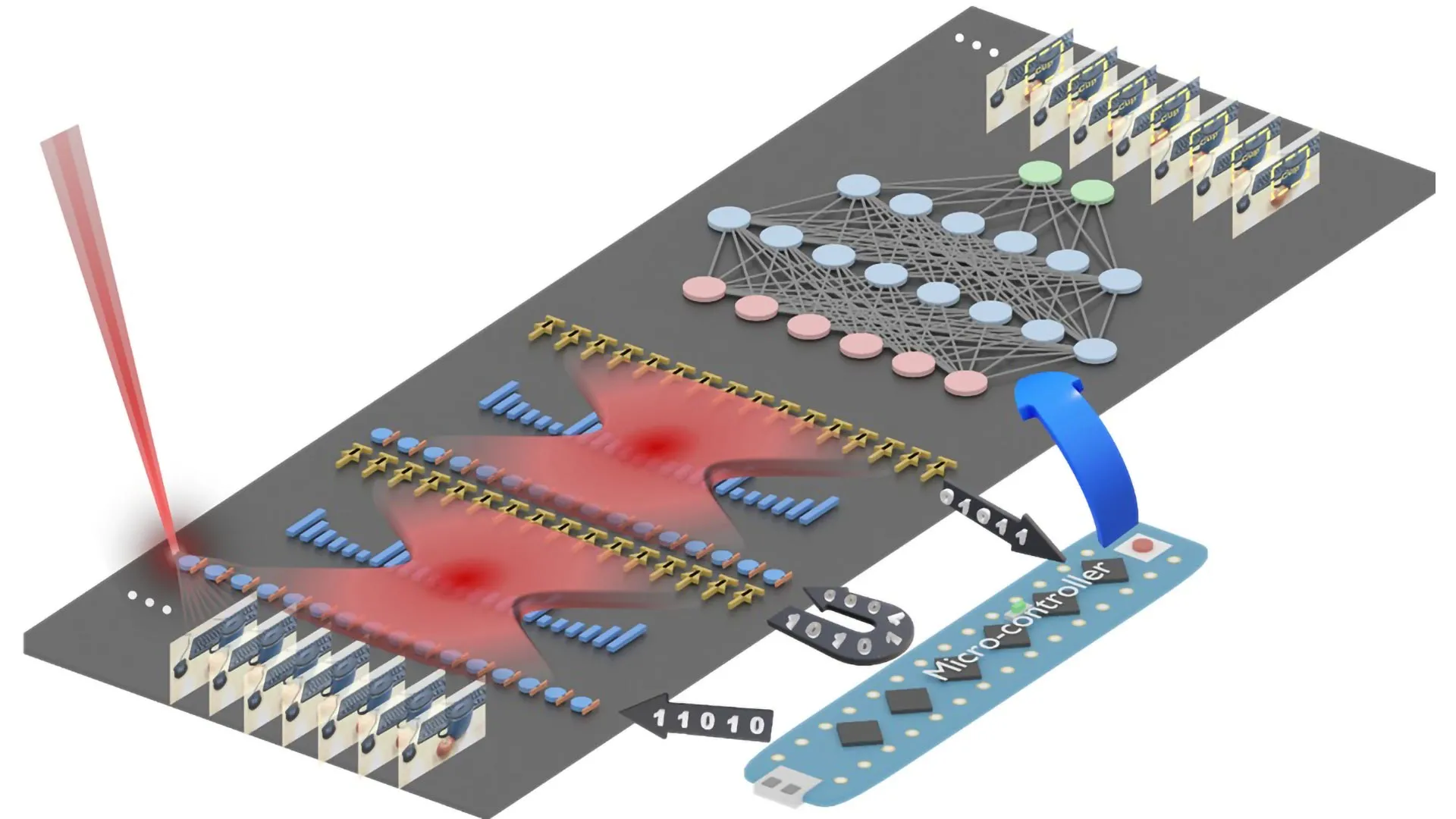

To address these limits, an international team led by Dr. Yufeng Zhang of the Photonics Group at Aalto University’s Department of Electronics and Nanoengineering has developed a new approach to computation. The method completes complex tensor calculations in a single pass of light through an optical system. The team calls the process "single-shot tensor computing" and says it operates at the speed of light.

"Our method effectively performs the same sophisticated kinds of operations that are currently handled by today’s most advanced GPUs, including intricate convolutions and complex attention layers, but it accomplishes these tasks at the speed of light," explains Dr. Zhang. "Rather than relying on the limitations of conventional electronic circuits, we are ingeniously leveraging the inherent physical properties of light to orchestrate the simultaneous execution of a multitude of computations." The approach replaces sequential electronic switching with the parallelism inherent in wave propagation.

Encoding Information Into Light for High-Speed Computation

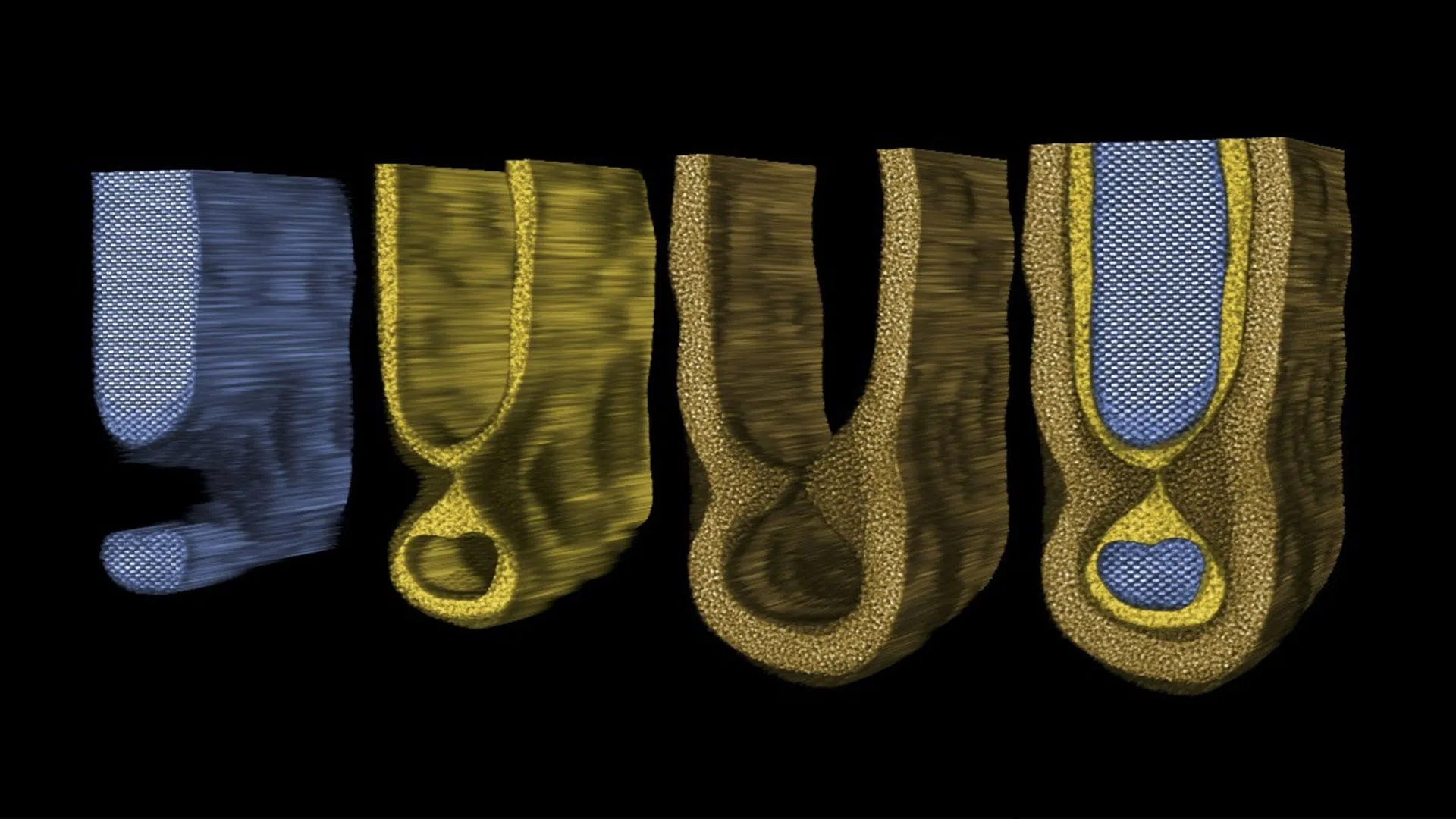

The team embeds digital information in the amplitude and phase of light waves, turning numerical data into physical variations of the optical field. As the encoded waves propagate and interact, they carry out mathematical operations such as matrix and tensor multiplication, which are central to deep learning algorithms. By using multiple wavelengths of light, the researchers extended the technique to support higher-order tensor operations.
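The idea of carrying numbers on amplitude and phase can be sketched numerically. The snippet below is a toy model, not the researchers' optical setup: it assumes each number is represented as a complex field (magnitude for amplitude, angle for phase) and that each output is a coherent sum of products of those fields. The function names `encode`, `field`, and `toy_optical_matmul` are hypothetical and chosen only for illustration.

```python
import cmath

def encode(value):
    """Map a number to an (amplitude, phase) pair; a negative real gets phase pi."""
    return cmath.polar(complex(value))

def field(amplitude, phase):
    """Complex field with the given amplitude and phase."""
    return cmath.rect(amplitude, phase)

def toy_optical_matmul(a, b):
    """Toy model (assumption): each output element is a coherent sum of field products."""
    rows, inner, cols = len(a), len(b), len(b[0])
    out = [[0j] * cols for _ in range(rows)]
    for i in range(rows):
        for j in range(cols):
            # Superposition: contributions add as complex amplitudes.
            out[i][j] = sum(
                field(*encode(a[i][k])) * field(*encode(b[k][j]))
                for k in range(inner)
            )
    return out

a = [[1, -2], [3, 4]]
b = [[0.5, 1], [-1, 2]]

# Read out the real part, rounding away floating-point noise from the phase encoding.
result = [[round(z.real, 9) for z in row] for row in toy_optical_matmul(a, b)]
print(result)  # [[2.5, -3.0], [-2.5, 11.0]]
```

The loops here run one product at a time in software. In the optical scheme described above, the corresponding interactions would take place together as the light propagates.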

Dr. Zhang offers an analogy: "Imagine you are a customs officer tasked with inspecting every single parcel that passes through your station. In a conventional scenario, you would have to process each parcel individually, sending it through multiple machines, each with a different function, and then meticulously sorting them into the correct bins. This process is inherently sequential and time-consuming." He continues, "Our optical computing method, in stark contrast, effectively merges all parcels and all machines into a single, unified operation. We engineer multiple ‘optical hooks’ that precisely connect each input parcel to its designated output bin. With just one operation, a single pass of light, all inspections and sorting happen instantaneously and in perfect parallel." The analogy illustrates how the method replaces a chain of serial steps with one parallel operation.
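The customs analogy has a simple linear-algebra counterpart, sketched below under stated assumptions. The "machines" are modeled as small matrices and the "parcels" as row vectors. The names `scan` and `weigh` and all values are invented for illustration and do not come from the study. Sending each parcel through each machine in turn gives the same result as merging the machines once and applying the merged operator to all parcels in one product.

```python
def matmul(a, b):
    """Plain matrix product of two lists of lists."""
    return [
        [sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]

# Hypothetical "machines": each is a linear stage acting on a 2-feature parcel.
scan = [[1, 0], [1, 1]]
weigh = [[2, 0], [0, 3]]

parcels = [[1, 2], [3, 4], [5, 6]]  # one row per parcel

# Sequential: every parcel passes through every machine, one step at a time.
sequential = []
for parcel in parcels:
    row = [parcel]
    for machine in (scan, weigh):
        row = matmul(row, machine)
    sequential.append(row[0])

# Merged: combine the machines once, then handle all parcels in a single product.
merged_machine = matmul(scan, weigh)
single_shot = matmul(parcels, merged_machine)

print(sequential)   # [[6, 6], [14, 12], [22, 18]]
print(single_shot)  # [[6, 6], [14, 12], [22, 18]]
```

In software the merged product is still computed step by step. The point of the sketch is only that the two routes agree, so a system that applies the merged operator in one pass can stand in for the whole chain.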

Passive Optical Processing With Wide Compatibility

One of the most striking and advantageous aspects of this novel method is its remarkable simplicity and minimal reliance on active intervention. The complex mathematical operations are intrinsically performed as the light naturally propagates through the optical system, eliminating the need for continuous active control or the energy-intensive electronic switching that characterizes traditional computing architectures during computation. This inherent passivity contributes significantly to the potential for reduced power consumption and increased operational efficiency.

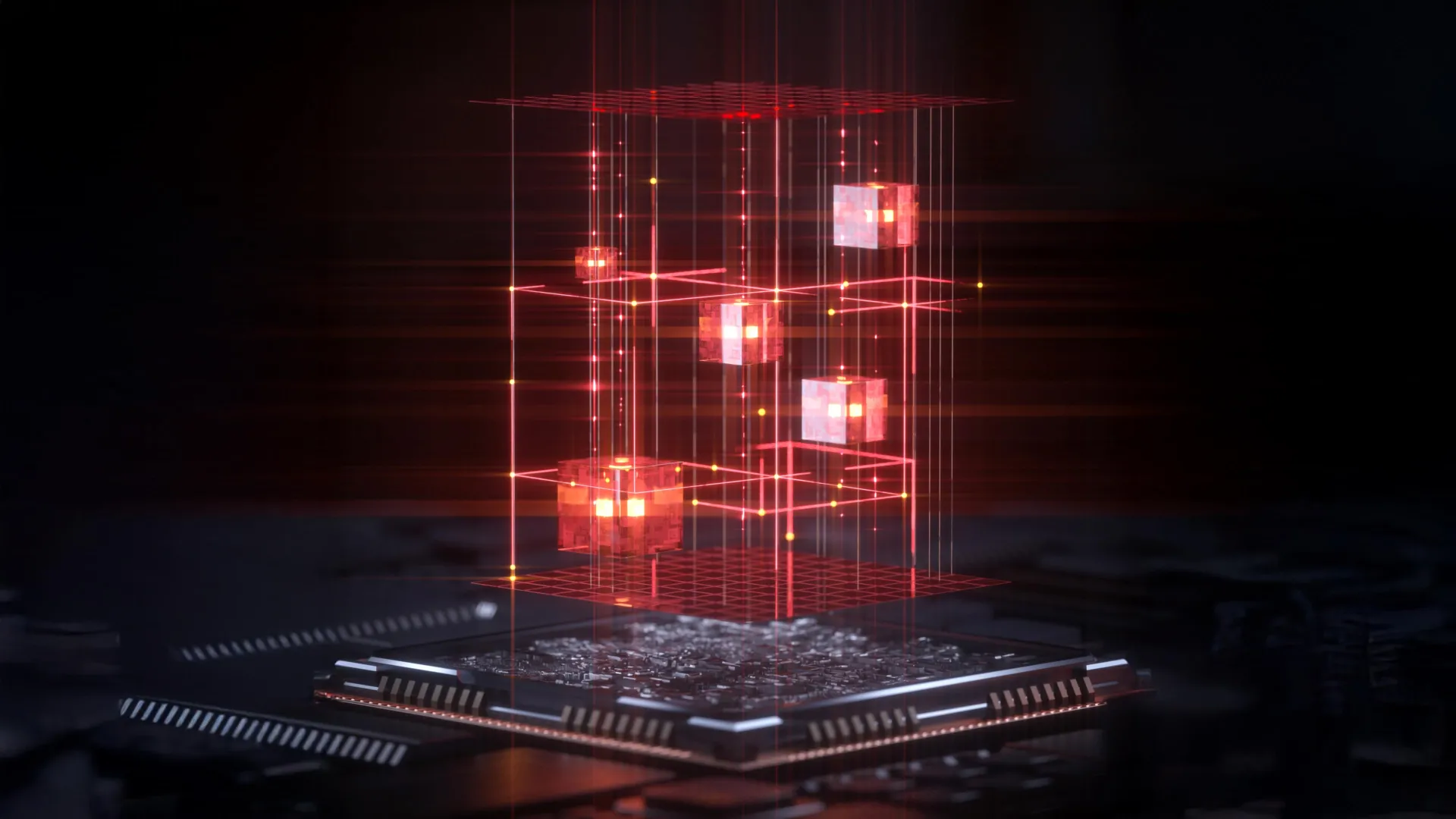

"This innovative approach possesses remarkable flexibility and can be implemented on virtually any existing optical platform," states Professor Zhipei Sun, the distinguished leader of Aalto University’s Photonics Group and a key figure in this research. "Looking ahead, our ambitious plan is to integrate this powerful computational framework directly onto photonic chips. This integration will pave the way for the development of light-based processors capable of executing extremely complex AI tasks with exceptionally low power consumption, a critical requirement for future sustainable computing." The modularity and compatibility of the approach suggest a smooth integration pathway into existing optical infrastructure.

Path Toward Future Light-Based AI Hardware

Dr. Zhang says the ultimate objective is to deploy the technique on the existing hardware and platforms used by major technology companies. He estimates that the method could be integrated into such systems within three to five years.

"This will undoubtedly usher in the creation of an entirely new generation of optical computing systems, significantly accelerating the performance of complex AI tasks across a myriad of diverse fields," he concludes. Fields that could benefit range from autonomous vehicles and medical diagnostics to scientific research and financial modeling.

The study was published in Nature Photonics on November 14, 2025.